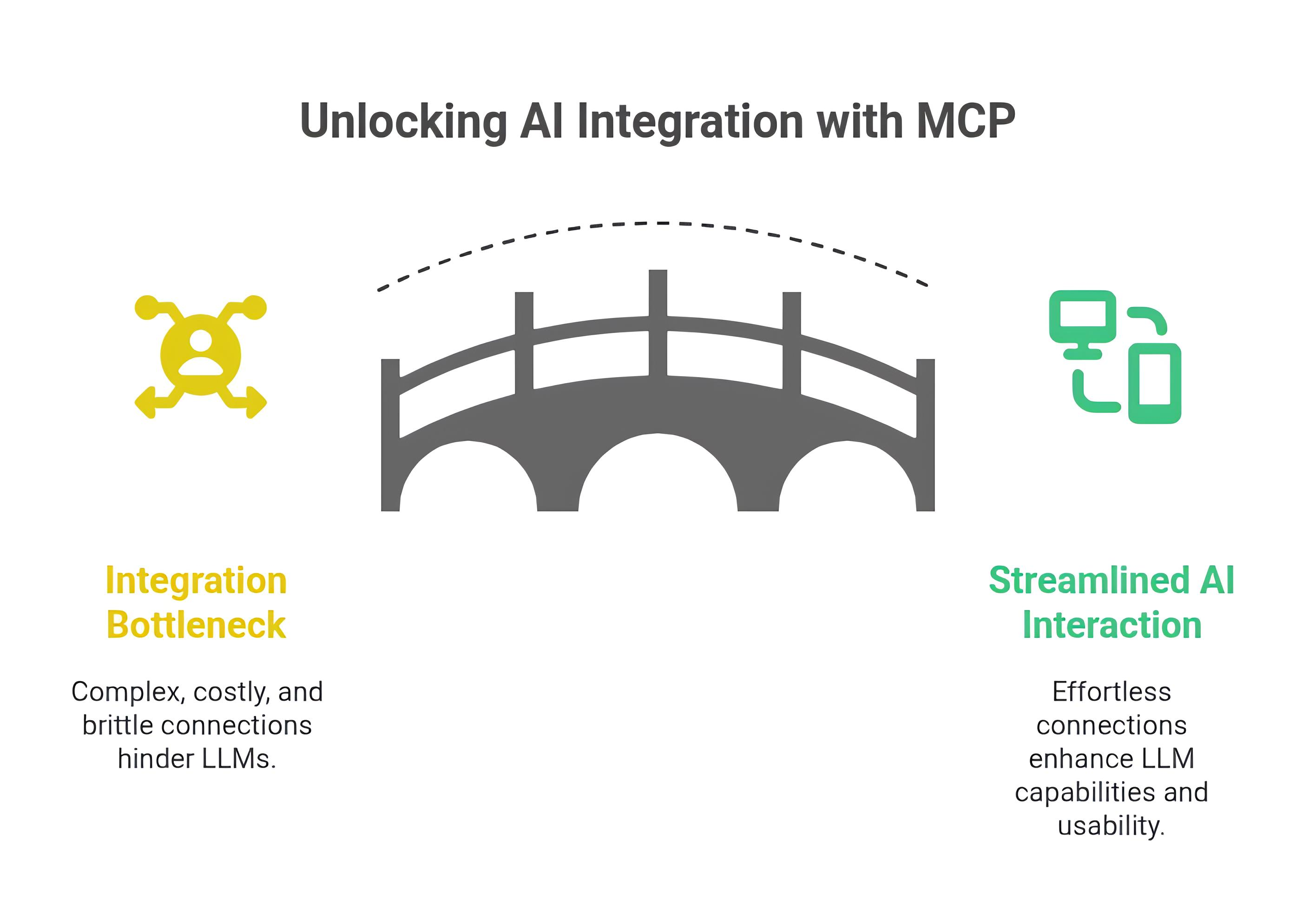

Large Language Models (LLMs) like Anthropic’s Claude have shown incredible potential. But unlocking their full power often hinges on connecting them to the outside world – databases, files, APIs, and custom tools. Historically, this integration has been a major bottleneck, requiring complex, expensive, and brittle custom solutions for every new connection.

Imagine needing a unique, custom-built adapter every time you wanted to plug a new device into your computer. That’s been the reality for integrating LLMs. Until now.

Enter the Model Context Protocol (MCP), an open standard released by Anthropic in late 2024, poised to fundamentally change how AI models interact with external systems.

The Pre-MCP Integration Nightmare

Before MCP, connecting an AI model like Claude to a specific database (say, Postgres) required dedicated engineering effort. Connecting it to your Google Drive? Another custom integration. Connecting it to Slack APIs? Yet another.

Now, multiply this effort across multiple AI models (Anthropic, OpenAI, Deepseek, etc.) and multiple tools and data sources. This “N x M” problem meant a rapidly exploding number of custom integrations were needed (N models * M tools = N x M integrations), stifling innovation and making sophisticated AI assistants costly and difficult to build.

MCP: The Universal Translator for AI

At its core, MCP is an open standard protocol for communication between AI models and external data sources or tools. Think of it as a universal adapter or translator. Instead of building custom bridges everywhere, developers can now build tools and models that speak the common language of MCP.

Key Benefits:

- Standardization: Provides a single, unified way for AI systems to access diverse external resources.

- Reduced Complexity: Solves the N x M integration problem. Tool builders implement the MCP server protocol once. LLM providers implement the MCP client protocol once. N models and M tools then require only N + M implementations, not N x M.

- Open Standard: Fosters a collaborative ecosystem where anyone can build compatible tools and integrations.

- Enhanced Capabilities: Enables AI models to securely and effectively leverage external knowledge and perform actions in the real world.
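To make the arithmetic behind the Reduced Complexity point concrete, here is a small sketch. The function names and the counts of 4 models and 25 tools are made up for illustration.

```python
def point_to_point_integrations(n_models: int, m_tools: int) -> int:
    """Every model needs its own custom adapter for every tool."""
    return n_models * m_tools


def shared_protocol_implementations(n_models: int, m_tools: int) -> int:
    """Each model implements the client side once; each tool the server side once."""
    return n_models + m_tools


if __name__ == "__main__":
    n, m = 4, 25  # hypothetical counts, not taken from any survey
    print(point_to_point_integrations(n, m))      # 100
    print(shared_protocol_implementations(n, m))  # 29
```

The gap widens as either number grows: adding one more tool costs one new server implementation instead of one new adapter per model.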

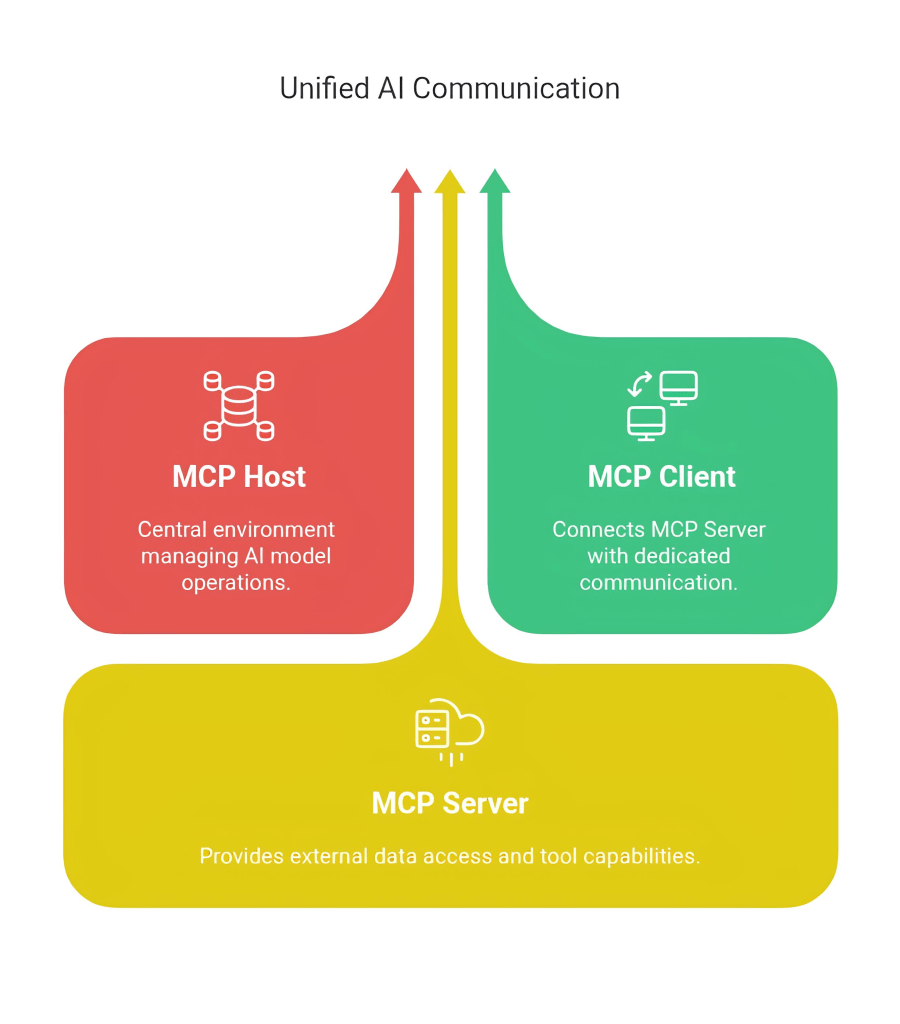

How MCP Works: The Architecture

MCP utilizes a familiar client-server architecture with three key players:

- MCP Host: The AI application the user works in and through which the model is accessed (e.g., the Claude desktop application). It creates and manages the Clients.

- MCP Client: Components residing within the Host. Each client establishes and maintains a one-to-one connection with an MCP Server using the MCP protocol.

- MCP Server: Separate processes that represent external data sources or tools (like a database connection, a file system interface, or a web API wrapper). They expose capabilities to the MCP Client via the protocol.

Essentially, the Host (Claude) uses Clients to talk the MCP language to various Servers, which in turn interact with the actual external resources (databases, files, APIs).
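The relationship between the three roles can be sketched with plain Python classes. This is only a model of the structure described above, not the MCP SDK: the class names, fields, and the `connect` method are assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    """Stands in for an MCP Server wrapping one external resource."""
    name: str
    capabilities: list[str]


@dataclass
class Client:
    """Holds exactly one connection to exactly one Server."""
    server: Server

    def list_capabilities(self) -> list[str]:
        return list(self.server.capabilities)


@dataclass
class Host:
    """The AI application; it creates one Client per Server it connects to."""
    clients: dict[str, Client] = field(default_factory=dict)

    def connect(self, server: Server) -> Client:
        if server.name in self.clients:
            raise ValueError(f"already connected to {server.name}")
        client = Client(server)
        self.clients[server.name] = client
        return client


host = Host()
host.connect(Server("postgres", ["query"]))
host.connect(Server("filesystem", ["read_file", "write_file"]))
```

Keeping one Client per Server means each connection can be opened, closed, or restricted on its own without affecting the others.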

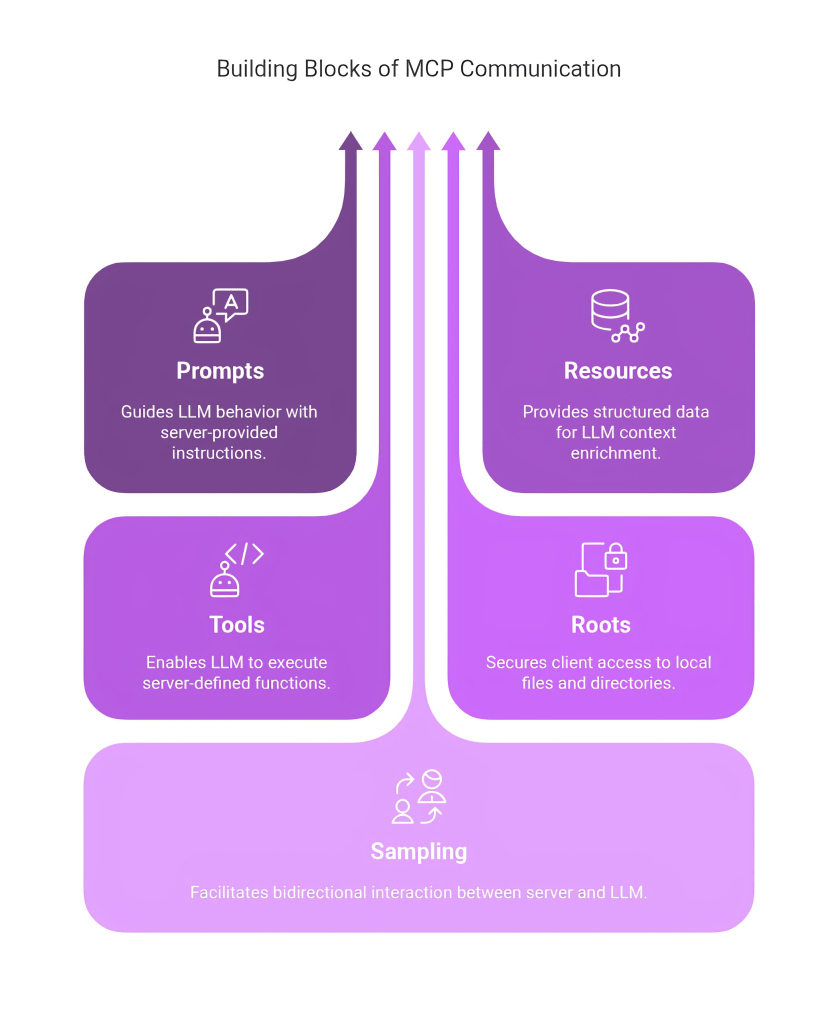

Under the Hood: The Five Core Primitives

MCP’s power comes from five core “primitives” – the building blocks that define the standardized communication:

Server-Side Primitives (What Servers Offer):

- Prompts: Instructions or templates the server provides to guide the LLM’s behavior when interacting with its specific resource or task.

- Resources: Structured data objects (like file contents, database rows, API responses) that the server can send to be included directly in the LLM’s context window, giving it external information to reason about.

- Tools: Executable functions defined by the server that the LLM can choose to call. This allows the AI to actively retrieve information (e.g., query a database) or perform actions (e.g., modify a file) beyond its immediate context.
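As a rough illustration of how the three server-side primitives differ, the sketch below keeps each in its own registry. `ToyServer`, its method names, the `notes://schema` URI, and the canned tool answer are all hypothetical; a real server would be built with one of the MCP SDKs.

```python
from typing import Callable


class ToyServer:
    """Illustrative only: a registry for the three server-side primitives."""

    def __init__(self) -> None:
        self.prompts: dict[str, str] = {}
        self.resources: dict[str, str] = {}
        self.tools: dict[str, Callable[..., str]] = {}

    def get_prompt(self, name: str, **arguments: str) -> str:
        # Prompts: templates filled in with caller-supplied arguments.
        return self.prompts[name].format(**arguments)

    def read_resource(self, uri: str) -> str:
        # Resources: data looked up by URI and handed over as context.
        return self.resources[uri]

    def call_tool(self, name: str, **arguments: str) -> str:
        # Tools: functions the model chooses to invoke.
        return self.tools[name](**arguments)


server = ToyServer()
server.prompts["summarize_table"] = "Summarize the table {table} for a business reader."
server.resources["notes://schema"] = "orders(id, customer, total)"
server.tools["count_rows"] = lambda table: f"{table} has 3 rows"  # canned answer

print(server.get_prompt("summarize_table", table="orders"))
print(server.read_resource("notes://schema"))
print(server.call_tool("count_rows", table="orders"))
```

The practical difference is who initiates: a prompt is picked as a starting template, a resource is read as context, and a tool is called by the model mid-conversation.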

Client-Side Primitives (How Clients Interact):

- Roots: Locations, typically local directories or files, that the client declares to a server as the boundary it should work within. This enables tasks like reading code, opening documents, or analyzing data files without granting unrestricted access to the entire file system.

- Sampling: Enables a two-way interaction. While Tools let the LLM call the server, Sampling allows the server to request help from the LLM. For example, a database server analyzing a schema might ask the LLM (via Sampling) to help formulate the most relevant SQL query for a user’s request.
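Both client-side ideas can be sketched in a few lines: a boundary check in the spirit of Roots, and a server-side helper that calls back into the model in the spirit of Sampling. The function names, the example paths, and `fake_model` are assumptions for illustration, and the path check is a simplified model rather than how any particular client enforces roots.

```python
import posixpath
from pathlib import PurePosixPath
from typing import Callable


def is_within_roots(path: str, roots: list[str]) -> bool:
    """Return True if `path` sits at or under one of the declared roots."""
    # Normalize first so "../" segments cannot escape a root.
    target = PurePosixPath(posixpath.normpath(path))
    for root in roots:
        root_path = PurePosixPath(posixpath.normpath(root))
        if target == root_path or root_path in target.parents:
            return True
    return False


def suggest_query(schema: str, question: str, sample: Callable[[str], str]) -> str:
    """Server-side helper that asks the host's model for help via `sample`."""
    prompt = f"Schema: {schema}\nQuestion: {question}\nWrite one SQL query."
    return sample(prompt)


def fake_model(prompt: str) -> str:
    # Stand-in for the LLM; a real client would forward the request to the model.
    return "SELECT COUNT(*) FROM orders;"


roots = ["/home/me/project"]
print(is_within_roots("/home/me/project/src/app.py", roots))      # True
print(is_within_roots("/home/me/project/../.ssh/id_rsa", roots))  # False
print(suggest_query("orders(id, total)", "How many orders?", fake_model))
```

Passing the model in as a callable mirrors the direction of the call: the server never holds model credentials, it only asks the client to run a completion on its behalf.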

These primitives work together to enable rich, secure, and standardized interactions between the AI and the outside world.

MCP in Action: Claude + Postgres Example

Let’s say you want Claude to analyze data in your PostgreSQL database.

- No Custom Code Needed: Instead of writing a bespoke integration, you use an existing MCP Server designed for PostgreSQL.

- Connection: The Claude application (MCP Host) uses an MCP Client to connect to the PostgreSQL MCP Server.

- Interaction:

  - The MCP Server might provide Tools for querying the database.

  - Claude, understanding the user’s request, decides to use the query Tool.

  - The MCP Client sends the query request via the MCP protocol to the Server.

  - The MCP Server executes the query against the actual PostgreSQL database.

  - The rows are returned to the Client as the Tool’s result via the protocol.

  - Claude (Host) incorporates these results into its context window and generates a response for the user.

  - Alternatively, the server might use Sampling to ask Claude for help formulating the best query based on the database schema and user intent.

All this happens using the standardized MCP, maintaining security and context throughout.
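The round trip can be sketched end to end. MCP messages are JSON-RPC 2.0, and `tools/call` is the method a client uses to invoke a tool. Everything else here is an assumption for illustration: an in-memory SQLite database stands in for PostgreSQL so the sketch runs without a server, the `orders` table is invented, and the request is passed between two local functions instead of over a real transport.

```python
import json
import sqlite3


def make_database() -> sqlite3.Connection:
    # SQLite in memory stands in for PostgreSQL so the sketch runs anywhere.
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)")
    db.executemany("INSERT INTO orders (total) VALUES (?)", [(10.0,), (25.5,), (4.5,)])
    return db


def handle_request(db: sqlite3.Connection, raw: str) -> str:
    """Server side: run the query tool and wrap the rows in a tool result."""
    request = json.loads(raw)
    if request["method"] != "tools/call":
        error = {"code": -32601, "message": "Method not found"}
        return json.dumps({"jsonrpc": "2.0", "id": request["id"], "error": error})
    # This toy server exposes a single tool, so the tool name is not inspected.
    rows = db.execute(request["params"]["arguments"]["sql"]).fetchall()
    result = {"content": [{"type": "text", "text": json.dumps(rows)}], "isError": False}
    return json.dumps({"jsonrpc": "2.0", "id": request["id"], "result": result})


def call_query_tool(db: sqlite3.Connection, sql: str) -> list:
    """Client side: build the request, 'send' it, and unpack the rows."""
    request = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "tools/call",
        "params": {"name": "query", "arguments": {"sql": sql}},
    }
    response = json.loads(handle_request(db, json.dumps(request)))
    return json.loads(response["result"]["content"][0]["text"])


rows = call_query_tool(make_database(), "SELECT COUNT(*), SUM(total) FROM orders")
print(rows)  # [[3, 40.0]]
```

A production server would not run arbitrary SQL as this sketch does; restricting the tool to read-only queries is one common safeguard.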

The Growing MCP Ecosystem

MCP is more than just a specification; it’s a growing ecosystem. Developers have already built MCP server integrations for:

- Google Drive

- Slack

- GitHub

- Git

- PostgreSQL

- …and more are emerging.

Software Development Kits (SDKs) are available in popular languages like TypeScript and Python, making it easier for developers to build their own MCP-compatible clients and servers.

The Future is Connected

The Model Context Protocol represents a significant step toward making AI more practical and powerful. By providing a universal, open standard for integration, it breaks down the walls between LLMs and the vast world of external data and tools. It replaces N x M custom integrations with N + M protocol implementations, which simplifies development and paves the way for more sophisticated, context-aware, and capable AI applications. MCP is positioned to become a foundational technology in the evolving AI landscape.