It’s the million-dollar problem that keeps engineering leaders awake at night: a silent productivity killer is wreaking havoc on software delivery timelines. It is hard to spot from above, yet its impact is real. That killer is test data management.

QA engineers spend almost half their time finding and analysing test data. Test data bottlenecks have become the status quo, and enterprises unknowingly incur costly delays and risk non-compliance with data privacy regulations.

The numbers are staggering. The UK software testing industry reached a market size of £1.2 billion in 2024. Yet despite this massive investment, around 40% of testing professionals cite a lack of time as the biggest challenge to achieving their software quality objectives. It’s a paradox that is costing businesses millions: the more complex our applications become, the more time we waste on the very foundation that should accelerate our testing. Our data!

But what if I told you that, thanks to intelligent test data management, progressive companies are cutting manual labour by 40 to 70% and the application delivery cycle time by 25%?

Let’s address the elephant in the room.

Why Traditional Approaches Are Failing

Challenge 1: The Data Silos Nightmare

Enterprise data is frequently fragmented and siloed across dozens of data sources that use different technologies and data formats.

Consider this: a typical application retrieves information from JSON files, RESTful APIs, modern cloud databases, and legacy mainframes. Each system speaks a different language, follows a different schema, and requires its own integration effort.

The Solution: Universal Data Source Abstraction

Our framework’s core design principle is a unified interface that abstracts away the complexity of individual data sources. Rather than building point-to-point integrations, we’ve architected a pluggable data source registry:

// One interface, endless possibilities

DataSource jsonSource = new JsonDataSource("/customer-data/");

DataSource apiSource = new ApiDataSource("https://api.internal.com/");

DataSource dbSource = new DatabaseSource("postgres://prod-replica");

// All accessed through the same unified API

TestDataManager manager = TestDataManager.getInstance();

UserProfile customer = manager.getStaticData("premium-customer", UserProfile.class);

This architectural decision eliminates the cognitive overhead of remembering different APIs for different data sources, whilst providing seamless scalability as new systems are introduced.
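To make the idea of a pluggable registry more concrete, here is a minimal sketch of one way it could look. It is not the framework’s actual code: the shape of the `DataSource` interface, the `InMemoryDataSource` stand-in, and the `DataSourceRegistry` class with its `register` and `lookup` methods are all assumptions made for illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Optional;

// Hypothetical contract: every backend answers a lookup by key in the same way.
interface DataSource {
    Optional<Map<String, Object>> fetch(String key);
}

// Stand-in backend for illustration; a real connector would wrap JSON files, an API, or a database.
final class InMemoryDataSource implements DataSource {
    private final Map<String, Map<String, Object>> records;

    InMemoryDataSource(Map<String, Map<String, Object>> records) {
        this.records = Map.copyOf(records);
    }

    @Override
    public Optional<Map<String, Object>> fetch(String key) {
        return Optional.ofNullable(records.get(key));
    }
}

// Hypothetical registry: callers ask for a key and never see which backend answered.
final class DataSourceRegistry {
    // LinkedHashMap keeps registration order, so earlier sources take precedence.
    private final Map<String, DataSource> sources = new LinkedHashMap<>();

    void register(String name, DataSource source) {
        sources.put(name, source);
    }

    // Returns the first hit across all registered sources, or empty if none has the key.
    Optional<Map<String, Object>> lookup(String key) {
        for (DataSource source : sources.values()) {
            Optional<Map<String, Object>> hit = source.fetch(key);
            if (hit.isPresent()) {
                return hit;
            }
        }
        return Optional.empty();
    }
}
```

Under these assumptions, supporting a new system means writing one more `DataSource` implementation and one `register` call; test code that calls `lookup` does not change.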

Challenge 2: The Compliance Minefield

With UK GDPR fines reaching up to £17.5 million or 4% of global annual turnover, whichever is higher, data privacy isn’t just a technical concern; it’s an existential business risk. Enterprises should replace personal data with realistic, non-sensitive substitutes to eliminate exposure to privacy risks.

The Solution: Security-First Architecture

We’ve designed security as a fundamental layer, not an afterthought:

// Automatic PII detection across any data structure

SecurityManager security = new SecurityManagerImpl();

Map<String, Set<String>> detectedPII = security.detectPII(customerData);

// Intelligent masking with business logic preservation

Map<String, Object> compliantData = security.maskSensitiveData(

customerData,

customMaskingRules

);

This approach ensures that every piece of test data is automatically screened and sanitised while maintaining referential integrity and business logic.
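As a rough illustration of what detection and masking involve, the sketch below flags string fields with regular expressions and masks all but the last two characters. It is a simplified stand-in, not the `SecurityManager` shown above: the `SimplePiiMasker` class, its two deliberately loose patterns, and the masking rule are assumptions, and real PII detection needs far broader coverage.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;
import java.util.regex.Pattern;

// Hypothetical helper for illustration only; the patterns are intentionally simplistic.
final class SimplePiiMasker {
    private static final Map<String, Pattern> DETECTORS = new LinkedHashMap<>();
    static {
        DETECTORS.put("EMAIL", Pattern.compile("[^@\\s]+@[^@\\s]+\\.[^@\\s]+"));
        DETECTORS.put("UK_PHONE", Pattern.compile("(\\+44|0)\\d{9,10}"));
    }

    // Returns field name -> PII types whose pattern matches the whole field value.
    static Map<String, Set<String>> detect(Map<String, Object> record) {
        Map<String, Set<String>> found = new LinkedHashMap<>();
        for (Map.Entry<String, Object> entry : record.entrySet()) {
            if (!(entry.getValue() instanceof String)) {
                continue; // only string values are scanned in this sketch
            }
            String value = (String) entry.getValue();
            for (Map.Entry<String, Pattern> detector : DETECTORS.entrySet()) {
                if (detector.getValue().matcher(value).matches()) {
                    found.computeIfAbsent(entry.getKey(), k -> new TreeSet<>())
                         .add(detector.getKey());
                }
            }
        }
        return found;
    }

    // Returns a copy in which each flagged field keeps only its last two characters.
    static Map<String, Object> mask(Map<String, Object> record) {
        Map<String, Object> masked = new LinkedHashMap<>(record);
        for (String field : detect(record).keySet()) {
            String value = (String) record.get(field);
            int keep = Math.min(2, value.length());
            masked.put(field,
                    "*".repeat(value.length() - keep) + value.substring(value.length() - keep));
        }
        return masked;
    }
}
```

Masking into a copy leaves the input record untouched, and keeping the original length and trailing characters is one simple way to preserve format for downstream validation. Preserving referential integrity across records would need a deterministic mapping, which this sketch does not attempt.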

Challenge 3: The Problem of Brittle Data

Conventional methods of creating test data are labour-intensive, brittle, and manual. Dozens of test scenarios fail when one upstream system is changed.

The Solution: AI-Powered Dynamic Generation

Instead of relying on static data dumps, we’ve architected an intelligent generation system:

// AI generates contextually appropriate data

AIDataGenerator generator = new GeminiDataGenerator(apiKey);

UserProfile realisticUser = generator.generateData(

"Create a premium banking customer profile for UK market testing",

UserProfile.class

);

// Traditional generation for consistent scenarios

BasicDataGenerator basicGen = new UserProfileGenerator();

UserProfile standardUser = basicGen.generateData(testParameters);

This dual approach provides both consistency for regression testing and variety for exploratory scenarios.
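For the consistency half of this dual approach, one common technique is to seed a pseudo-random generator so that the same seed always yields the same record. The sketch below shows that idea only; `SampleProfile` and `SeededProfileGenerator` are hypothetical names and are not the `UserProfile` or `BasicDataGenerator` types used above.

```java
import java.util.List;
import java.util.Random;

// Hypothetical value type standing in for the article's UserProfile.
final class SampleProfile {
    final String name;
    final String tier;
    final int balancePounds;

    SampleProfile(String name, String tier, int balancePounds) {
        this.name = name;
        this.tier = tier;
        this.balancePounds = balancePounds;
    }
}

// Hypothetical generator: identical seeds produce identical profiles.
final class SeededProfileGenerator {
    private static final List<String> NAMES = List.of("Amelia", "Oliver", "Isla", "George");
    private static final List<String> TIERS = List.of("STANDARD", "PREMIUM", "VIP");

    static SampleProfile generate(long seed) {
        // java.util.Random is deterministic for a given seed.
        Random random = new Random(seed);
        String name = NAMES.get(random.nextInt(NAMES.size()));
        String tier = TIERS.get(random.nextInt(TIERS.size()));
        int balancePounds = random.nextInt(100_000);
        return new SampleProfile(name, tier, balancePounds);
    }
}
```

A regression suite can pin a seed per scenario and log it on failure, so a failing data set can be regenerated exactly; exploratory runs can simply vary the seed.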

The Architecture That Changes Everything

Design Decision 1: Thread-Safe Singleton Pattern

Instead of permitting numerous instances that might cause data corruption, we have put in place a thread-safe singleton pattern:

public class TestDataManager {

private static volatile TestDataManager instance;

// The lock must be static so the static accessor can synchronise on it

private static final Object LOCK = new Object();

public static TestDataManager getInstance() {

// Double-checked locking: the lock is only taken while the instance is unset

if (instance == null) {

synchronized (LOCK) {

if (instance == null) {

instance = new TestDataManagerImpl();

}

}

}

return instance;

}

}

What makes this significant? QA teams frequently find testers unintentionally overwriting one another’s test data, and time and effort are lost to the resulting corruption. In concurrent testing environments, routing all access through a single shared manager helps prevent the dreaded situation where several threads overwrite one another’s test data.
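For comparison, the same lazy, thread-safe initialisation can be had without explicit locking by using the initialization-on-demand holder idiom, which relies on the JVM’s guarantee that a class is initialised exactly once. This is an alternative technique rather than the framework’s implementation, and `LazyTestDataManager` is a hypothetical name.

```java
// Hypothetical class illustrating the initialization-on-demand holder idiom.
final class LazyTestDataManager {
    private LazyTestDataManager() {
        // Private constructor: instances can only be created via the holder below.
    }

    // The JVM initialises Holder, and therefore INSTANCE, once, on first access.
    private static final class Holder {
        static final LazyTestDataManager INSTANCE = new LazyTestDataManager();
    }

    static LazyTestDataManager getInstance() {
        return Holder.INSTANCE;
    }
}
```

The trade-off is flexibility: double-checked locking makes it easier to decide at runtime which implementation to construct, whereas the holder idiom is shorter and leaves less room for subtle mistakes such as a missing `volatile`.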

Design Decision 2: Component Architecture Based on Templates

Instead of monolithic classes, we’ve designed the framework around reusable templates:

- Data Source Templates – Pluggable connectors for any data format

- Generation Templates – Reusable patterns for common business entities

- Security Templates – Configurable privacy controls

- Integration Templates – Framework-specific adaptors

The business impact: teams can now share components across projects, reducing development time by an estimated 40% and ensuring consistent quality standards.

Design Decision 3: JSONPath Query Engine

Traditional data access requires developers to navigate complex nested structures manually. Our JSONPath implementation makes SQL-like queries on JSON data possible:

// Clean, intuitive queries

UserProfile vipCustomer = manager.getJsonDataListItem("customers",

"$[?(@.tier == 'VIP')]", UserProfile.class);

This design decision reduces code complexity whilst making test data access self-documenting.

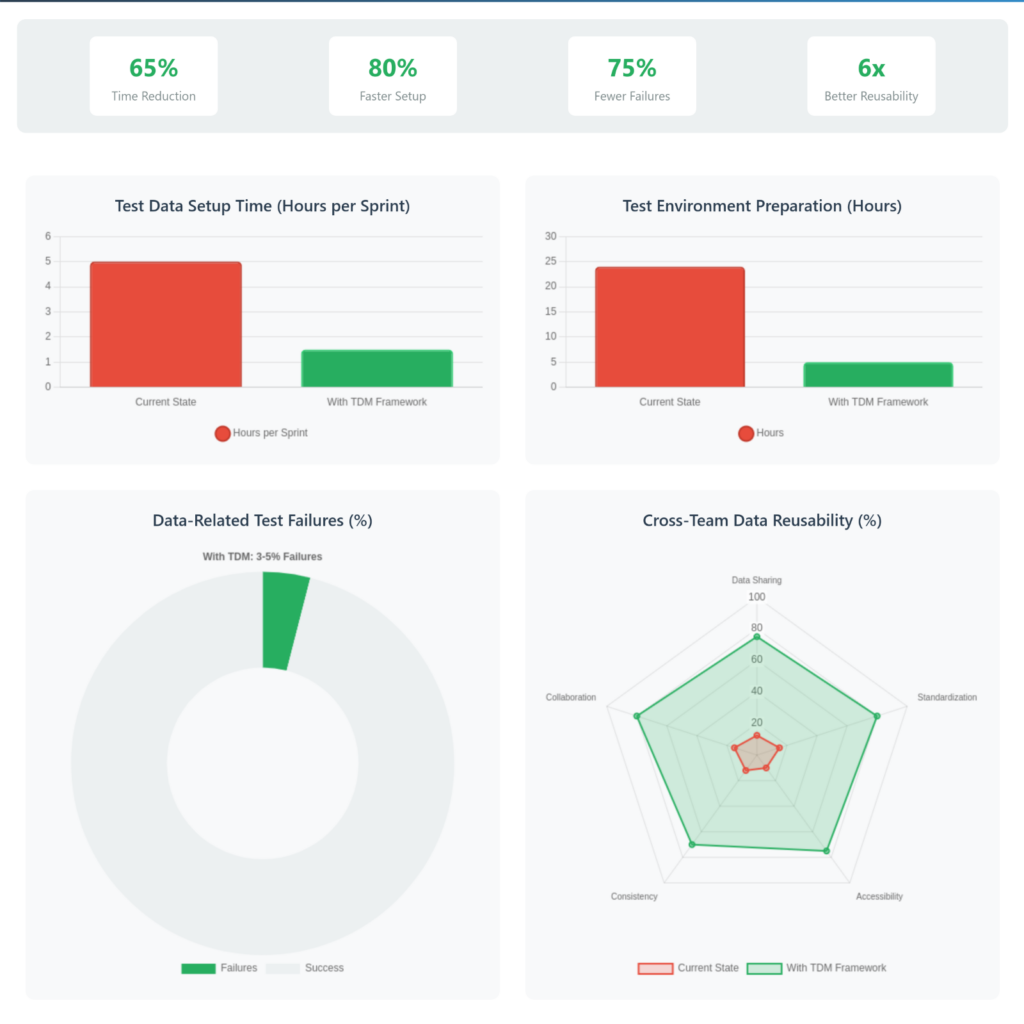
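To show what the filter expression `$[?(@.tier == 'VIP')]` replaces, here is the equivalent selection written by hand with Java streams: keep the array elements whose `tier` field equals `VIP`. The `TierFilter` class and the representation of customers as a list of maps are assumptions for illustration, not part of the framework.

```java
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical helper: the manual counterpart of the JSONPath filter $[?(@.tier == 'VIP')].
final class TierFilter {
    static List<Map<String, Object>> byTier(List<Map<String, Object>> customers, String tier) {
        return customers.stream()
                // Customers without a "tier" field are skipped rather than causing an error.
                .filter(customer -> tier.equals(customer.get("tier")))
                .collect(Collectors.toList());
    }
}
```

Every new condition or level of nesting adds more of this plumbing to the test code, whereas a path expression keeps the selection criteria in a single readable string.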

Quantifiable Benefits

Based on our implementation and industry benchmarks, organisations adopting modern test data management frameworks typically realise:

- 40-70% reduction in test data creation and provisioning costs through automation

- 25% improvement in application delivery cycle times

- 40-70% decrease in time and resources needed for test data provisioning

Cost Reductions

- Forrester’s Total Economic Impact study shows test management platforms can generate 312% ROI over 3 years (see the Forrester TEI report in the references)

- Lower infrastructure costs through data subsetting and synthetic data generation

- Lower compliance risk with automated PII detection and masking

Quality Improvements

- Comprehensive test coverage through advanced subsetting capabilities

- Reduced false positives through intelligent data relationships

- Fewer bugs escaping into production environments

The Architecture Decisions That Deliver

Embrace Modularity over Monoliths

Instead of creating one big, complicated system, design your framework as separate, modular parts. This enables:

- Independent testing and deployment of components

- Team specialisation around specific modules

- Easier maintenance and updates

- Reduced cognitive load for developers

Prioritise Engineer Experience

Your framework’s success depends on adoption. Design decisions should optimise for:

- Intuitive APIs that feel natural to use

- Comprehensive error messages that guide resolution

- Extensive documentation with practical examples

- Zero-configuration defaults that work out of the box

Build for Scale from Day One

Even if you’re starting small, architect for enterprise scale:

- Implement proper caching strategies

- Design for horizontal scaling

- Plan for disaster recovery scenarios
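As one possible reading of the caching point above, the sketch below memoises expensive test data loads so that each key is loaded at most once, even under concurrent access. The `CachingLoader` class and its methods are hypothetical; eviction, expiry, and size limits are left out to keep the example short.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

// Hypothetical read-through cache: loads a value on first request, then reuses it.
final class CachingLoader<K, V> {
    private final Map<K, V> cache = new ConcurrentHashMap<>();
    private final Function<K, V> loader;
    private final AtomicInteger loads = new AtomicInteger();

    // The loader must return non-null values; ConcurrentHashMap does not store nulls.
    CachingLoader(Function<K, V> loader) {
        this.loader = loader;
    }

    V get(K key) {
        // computeIfAbsent runs the loader atomically, at most once per absent key.
        return cache.computeIfAbsent(key, k -> {
            loads.incrementAndGet();
            return loader.apply(k);
        });
    }

    // Drops a cached entry so the next get() reloads it, e.g. after the source data changes.
    void invalidate(K key) {
        cache.remove(key);
    }

    // Number of times the underlying loader has actually been invoked.
    int loadCount() {
        return loads.get();
    }
}
```

Explicit invalidation matters for test data in particular: a stale cached record is a common source of flaky tests, so any cache should offer a cheap way to reset between suites.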

Taking Action: Your Next Steps

The test data management landscape is evolving rapidly. The global Enterprise Data Management market is projected to grow from $97.5 billion in 2023 to $281.9 billion by 2033, which suggests that organisations investing now stand to gain a significant competitive advantage.

The Future Will Be Scalable, Secure, and Intelligent

As the pressure to deliver software more quickly without sacrificing quality or compliance increases, businesses that invest in intelligent test data management architectures will outperform those that don’t. Whether our testing capabilities become competitive advantages or expensive bottlenecks will depend on the design choices we make today: embracing modularity, giving security top priority, and building for scale.

The question isn’t whether you need a modern test data management framework. The question is whether you’ll lead the transformation or be left trying to catch up.

References:

- https://www.ibisworld.com/united-kingdom/market-research-reports/software-testing-services-industry/

- https://katalon.com/reports/state-quality-2024

- https://www.k2view.com/blog/test-data-management-roi/

- https://gdpr-info.eu/issues/fines-penalties/

- https://www.practitest.com/2023-forrester-tei-report/

- https://market.us/purchase-report/?report_id=63894