Imagine this: You’re ready to buy a product online. The reviews look flawless – hundreds of glowing five-star ratings. Confident, you click “Buy Now.” But when the package arrives, it’s disappointing. You’ve just fallen victim to a fake review.

Fake reviews are everywhere. They distort reality, mislead shoppers, and damage businesses. For retailers, the cost is especially high: lost sales, reputational harm, regulatory risk, and declining customer loyalty.

Traditional detection struggles to keep up. That’s where Artificial Intelligence (AI) steps in – analyzing vast data, spotting subtle anomalies, and adapting to new fraud tactics faster than any human team could.

What is a fake review?

A fake review is an online review that fails to reflect genuine customer experiences and instead seeks to mislead consumers or distort brand reputation.

Fraudsters design these reviews to influence purchasing decisions – positively or negatively – and they often spread across e-commerce platforms, review sites, and social media.

Why are fake reviews a growing threat?

Fake reviews typically fall into two types:

- Human-generated:

  - Content creators are paid to write fake reviews that promote or criticize products.

  - Common patterns include:

    - Self-promotion: Product owners hire writers to post positive reviews to boost ratings and attract customers.

    - Competitor sabotage: Rivals pay spammers to post negative reviews, discouraging buyers and pushing them toward their own products.

    - Paid campaigns: Freelancers or agencies produce large volumes of fake reviews (positive or negative) for profit.

- AI-generated:

  - Fraudsters use automated algorithms (NLP and Machine Learning) to generate fake reviews at scale.

  - In addition, with generative AI tools like ChatGPT, convincing fake reviews can be produced quickly using simple prompts.

  - As a result, these AI-generated reviews closely resemble real customer feedback, making them much harder to detect.

The result? Inflated positive reviews might temporarily boost sales, but they put long-term credibility at risk. Deceptive negative reviews, conversely, can cause unwarranted reputational damage that hurts revenue and customer loyalty. Customers make poor purchasing decisions, while businesses see lower trust scores, increased product returns, and even abandoned shopping carts. Fake reviews don’t just harm sales – they weaken the credibility of the entire digital marketplace.

Why does human detection fall short?

Shoppers often rely on gut feeling – judging reviews based on tone, length, or pictures. But research shows people correctly identify fake reviews only about 52–65% of the time. In contrast, machine learning models have reached up to 86% accuracy.

This gap highlights a clear truth: humans alone can’t win the fight against fake reviews. Businesses need AI-powered systems that detect fraud at scale, faster and more reliably than manual checks, by spotting patterns invisible to the human eye.

How does AI help businesses spot fake reviews?

Think of AI as a detective that never sleeps. Rather than relying on gut feeling or surface checks, it digs deep into every piece of data – review text, timing, user behavior, and even the tone of language – to uncover what’s real and what’s fake.

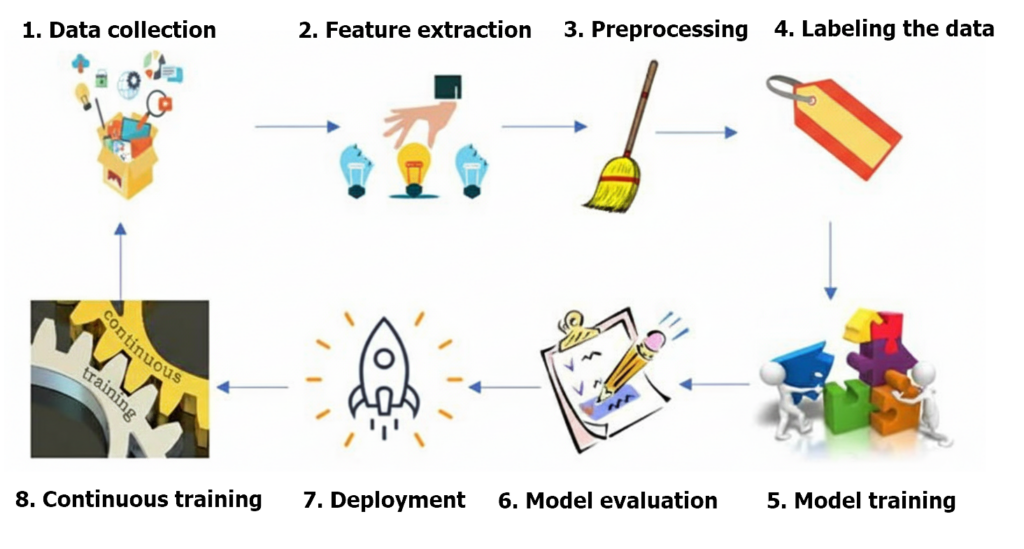

In practice, detecting fake reviews with AI involves training a model to analyze various features and patterns within reviews to distinguish between genuine and fake ones. The accompanying image depicts and enumerates the steps of the fake review detection process.

Here’s how the process works step by step:

Step 1: Data collection

The process begins with gathering a large and diverse dataset of reviews. These datasets typically contain both genuine customer feedback and known fake reviews. Sources include:

- E-commerce platforms (Amazon, Shopee, Lazada) where fake reviews are most common.

- Social media discussions where influencers or bots promote products.

- Review aggregators like Trustpilot or Yelp.

Compiling reviews from across platforms gives the model a broad spectrum of examples to learn from.
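To make this step concrete, here is a minimal sketch of how reviews from several sources might be stored in one common shape and merged. The `Review` fields and the `merge_sources` helper are hypothetical names chosen for illustration; a real platform would define its own schema.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Review:
    """One review in a common shape (all field names are assumptions)."""
    source: str                  # e.g. "marketplace", "social", "aggregator"
    product_id: str
    account_id: str
    rating: int                  # star rating, 1 to 5
    text: str
    posted_at: str               # timestamp as delivered by the source
    label: Optional[str] = None  # "genuine", "fake", or None if unknown

def merge_sources(*batches):
    """Combine batches from several sources, dropping exact duplicates."""
    seen = set()
    merged = []
    for batch in batches:
        for review in batch:
            key = (review.source, review.account_id, review.product_id, review.text)
            if key not in seen:
                seen.add(key)
                merged.append(review)
    return merged

marketplace = [
    Review("marketplace", "p1", "u1", 5, "Great!", "2024-03-01T09:30:00"),
    Review("marketplace", "p1", "u1", 5, "Great!", "2024-03-01T09:30:00"),  # duplicate
]
aggregator = [Review("aggregator", "p1", "u7", 1, "Broke in a week.", "2024-03-02T11:00:00")]

dataset = merge_sources(marketplace, aggregator)
```

Keeping the `source` field on every record makes it possible to check later whether the dataset really covers more than one platform.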

Step 2: Feature extraction

After collecting data, AI extracts key features that may indicate suspicious behavior. These features fall into three main categories:

- Textual and linguistic cues: repetitive wording, unnatural sentence structure, or excessive positivity/negativity. For example, reviews like “Amazing, perfect, wonderful product!!!” without specifics often trigger red flags.

- Behavioral signals: unusual patterns in posting activity, such as a sudden surge of five-star reviews within a short period, or the same account reviewing dozens of unrelated products in a day.

- Network relationships: detecting groups of accounts working together, such as multiple reviewers tied to the same seller or posting from the same IP range.

These features are crucial because fraudsters may disguise one aspect, but rarely all three at once.
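As a rough illustration of the three categories, the sketch below computes one or two simple signals for each. The word list, the function names, and the three-octet IP prefix rule are assumptions made for this example, not a production feature set.

```python
import re
from collections import Counter
from datetime import datetime

# Hypothetical word list; a real system would learn such cues from data.
SUPERLATIVES = {"amazing", "perfect", "wonderful", "best", "awesome", "excellent"}

def text_features(text):
    """Textual cues: length, exclamation marks, superlatives, repetition."""
    words = re.findall(r"[a-z']+", text.lower())
    total = len(words) or 1  # avoid division by zero on empty text
    return {
        "word_count": len(words),
        "exclamation_count": text.count("!"),
        "superlative_ratio": sum(w in SUPERLATIVES for w in words) / total,
        "unique_word_ratio": len(set(words)) / total,
    }

def max_reviews_per_day(timestamps):
    """Behavioral cue: most reviews one account posted on a single calendar day."""
    per_day = Counter(ts.date() for ts in timestamps)
    return max(per_day.values(), default=0)

def shared_ip_groups(reviews, min_accounts=3):
    """Network cue: IP prefixes used by at least `min_accounts` distinct accounts."""
    accounts_by_prefix = {}
    for r in reviews:
        prefix = ".".join(r["ip"].split(".")[:3])  # first three octets of an IPv4 address
        accounts_by_prefix.setdefault(prefix, set()).add(r["account"])
    return {p: a for p, a in accounts_by_prefix.items() if len(a) >= min_accounts}

features = text_features("Amazing, perfect, wonderful product!!!")
busiest_day = max_reviews_per_day(
    [datetime(2024, 1, 1, 9), datetime(2024, 1, 1, 17), datetime(2024, 1, 2, 8)]
)
groups = shared_ip_groups([
    {"account": "a1", "ip": "10.0.0.5"},
    {"account": "a2", "ip": "10.0.0.9"},
    {"account": "a3", "ip": "10.0.0.200"},
    {"account": "a4", "ip": "192.168.1.7"},
])
```

For the example review from the list above, three of the four words are superlatives and there are three exclamation marks, which is exactly the kind of specifics-free enthusiasm that a model can learn to weigh.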

Step 3: Data preprocessing

Before analysis, raw data must be cleaned and standardized. This includes removing special characters, converting text to lowercase, handling missing values, and normalizing timestamps. Preprocessing transforms messy real-world data into a structured form that AI can analyze consistently.
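A minimal sketch of these cleaning steps might look like the following. It assumes English-only text, ISO 8601 timestamps, and that timestamps without a time zone are already in UTC; the function names are illustrative.

```python
import re
from datetime import datetime, timezone

def clean_text(text):
    """Lowercase, strip special characters, and collapse whitespace."""
    if text is None:  # treat a missing review body as empty text
        return ""
    text = text.lower()
    text = re.sub(r"[^a-z0-9\s]", " ", text)  # assumption: ASCII-only English text
    return re.sub(r"\s+", " ", text).strip()

def normalize_timestamp(value):
    """Parse an ISO 8601 string and return it as a UTC ISO 8601 string."""
    dt = datetime.fromisoformat(value)
    if dt.tzinfo is None:  # assumption: naive timestamps are already UTC
        dt = dt.replace(tzinfo=timezone.utc)
    return dt.astimezone(timezone.utc).isoformat()

cleaned = clean_text("  GREAT product!!! Works   100% :) ")
normalized = normalize_timestamp("2024-03-01T09:30:00+08:00")
```

Note that stripping punctuation also discards signals such as exclamation counts, so features like those are usually extracted from the raw text before cleaning.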

Step 4: Labeling the data

For AI to learn effectively, reviews must be labeled as genuine or fake. This can be achieved through:

- Manual annotation by experts.

- Rule-based identification, such as flagging reviews from accounts with suspicious histories.

- Crowdsourcing, where multiple human evaluators validate review authenticity.

Balanced datasets, in which genuine and fake reviews are both fairly represented, are vital to avoid bias in the model.
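Two of these ideas are easy to sketch: combining crowdsourced votes into one label, and checking how balanced the resulting labels are. The majority-vote rule and the function names below are assumptions for illustration; real annotation pipelines often use more careful agreement measures.

```python
from collections import Counter

def majority_label(votes):
    """Return the label most annotators chose, or None on a tie or no votes."""
    top = Counter(votes).most_common(2)
    if not top:
        return None
    if len(top) == 2 and top[0][1] == top[1][1]:
        return None  # tie: send the review back for another opinion
    return top[0][0]

def class_balance(labels):
    """Share of each label in the dataset, as fractions that sum to 1."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {label: n / total for label, n in counts.items()}

label = majority_label(["fake", "fake", "genuine"])
balance = class_balance(["fake", "genuine", "genuine", "genuine"])
```

If `class_balance` shows one class dominating, the training set can be rebalanced before training, for example by collecting more examples of the rarer class.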

Step 5: Model training

The labeled dataset is then fed into machine learning algorithms. Different approaches may be used depending on the complexity of the platform:

- Logistic Regression & Decision Trees: effective for detecting basic fraud patterns.

- Random Forests & Gradient Boosting: handle large datasets and capture subtle variations.

- Deep Learning (RNNs, CNNs, Transformers): analyze language context, tone, and even semantic meaning, making them especially useful for spotting AI-generated reviews that mimic human writing.

During training, the model learns the distinguishing signals that separate fake reviews from authentic ones.
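To show what "learning the distinguishing signals" means in the simplest case, here is a small logistic regression trained with plain gradient descent, written without any external libraries. The two features and the six training rows are made-up toy data, and the learning rate and epoch count are arbitrary choices; production systems would normally rely on an established machine learning library and far more data.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, x):
    """Estimated probability that a review with features `x` is fake."""
    return sigmoid(sum(w * xj for w, xj in zip(weights, x)) + bias)

def train_logistic_regression(X, y, lr=0.5, epochs=2000):
    """Fit weights and bias with batch gradient descent on the log loss."""
    n, n_features = len(X), len(X[0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_features, 0.0
        for xi, yi in zip(X, y):
            error = predict_proba(weights, bias, xi) - yi
            for j, xj in enumerate(xi):
                grad_w[j] += error * xj
            grad_b += error
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

# Toy, made-up data: [superlative_ratio, reviews_posted_that_day / 10]
X = [[0.75, 0.9], [0.60, 0.8], [0.80, 0.7], [0.05, 0.1], [0.10, 0.2], [0.00, 0.1]]
y = [1, 1, 1, 0, 0, 0]  # 1 = fake, 0 = genuine

weights, bias = train_logistic_regression(X, y)
suspicious = predict_proba(weights, bias, [0.70, 0.9])
ordinary = predict_proba(weights, bias, [0.05, 0.1])
```

After training, both weights are positive, so a higher superlative ratio or a busier posting day pushes the predicted probability of "fake" upward. Tree ensembles and deep models follow the same fit-then-predict pattern with more expressive functions.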

Step 6: Model evaluation

No AI model should be deployed without thorough testing. Performance is measured using key metrics:

- Accuracy: the percentage of all reviews that are classified correctly.

- Precision: the share of flagged reviews that are actually fake.

- Recall: the share of all fake reviews that the system catches.

- F1-score: the harmonic mean of precision and recall.
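The four metrics can be computed directly from the counts of correct and incorrect predictions. The sketch below uses ten made-up labels, with 1 meaning fake and 0 meaning genuine; the function name is illustrative.

```python
def evaluate(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for the 'fake' class (label 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)  # fake, flagged
    fp = sum(t == 0 and p == 1 for t, p in pairs)  # genuine, flagged
    fn = sum(t == 1 and p == 0 for t, p in pairs)  # fake, missed
    tn = sum(t == 0 and p == 0 for t, p in pairs)  # genuine, not flagged

    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Made-up example: 4 fake and 6 genuine reviews
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

metrics = evaluate(y_true, y_pred)
```

Here the model flags four reviews, three of them correctly, and misses one fake review, giving an accuracy of 0.8 and precision, recall, and F1 of 0.75 each. When fake reviews are rare, accuracy alone can look high even if most fakes are missed, which is why precision and recall are reported alongside it.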

Step 7: Model deployment

Once the model has been validated, developers integrate it into the e-commerce platform. From that point onward, the system screens reviews in real time and flags suspicious ones for further review. Depending on company policy, these flagged reviews may be:

- Hidden from public view until verified.

- Escalated for human moderation.

- Permanently removed if confirmed fraudulent.

This creates a scalable, automated first line of defense.
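One simple way to turn a model score into these actions is a pair of thresholds. The threshold values and action names below are hypothetical and would be set by each company's policy.

```python
def route_review(fake_probability, hide_threshold=0.9, review_threshold=0.6):
    """Map a model score to a moderation action (thresholds are assumptions)."""
    if fake_probability >= hide_threshold:
        return "hide_pending_verification"
    if fake_probability >= review_threshold:
        return "escalate_to_moderator"
    return "publish"

def resolve(confirmed_fake):
    """Final outcome once a human moderator has checked a flagged review."""
    return "remove_permanently" if confirmed_fake else "publish"

actions = [route_review(p) for p in (0.95, 0.70, 0.20)]
```

Lowering `review_threshold` catches more fake reviews at the cost of more work for moderators, so the thresholds are a direct lever on the precision and recall trade-off.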

Step 8: Continuous learning

Fraudsters constantly evolve their methods, especially with the rise of generative AI tools like ChatGPT. To stay ahead, AI models must be updated continuously with new data. Feedback loops, in which flagged reviews are re-checked and the verified results are added back into the training dataset, help the model adapt and improve. This helps platforms be not only reactive but also proactive against emerging fraud patterns.
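A feedback loop of this kind can be sketched as a small buffer that collects verified examples and signals when enough have accumulated to retrain. The class name, the batch size, and the toy feature vectors are assumptions for illustration.

```python
class FeedbackBuffer:
    """Collects verified reviews until a retraining batch is ready."""

    def __init__(self, retrain_after=100):
        self.retrain_after = retrain_after
        self.pending = []

    def add(self, features, verified_label):
        """Store one verified example; return True when retraining is due."""
        self.pending.append((features, verified_label))
        return len(self.pending) >= self.retrain_after

    def drain(self):
        """Hand over the pending examples and reset the buffer."""
        batch, self.pending = self.pending, []
        return batch

# Toy data: (feature vector, label) pairs with 1 = fake, 0 = genuine
training_set = [([0.75, 0.9], 1), ([0.05, 0.1], 0)]
buffer = FeedbackBuffer(retrain_after=2)

for features, label in [([0.70, 0.8], 1), ([0.10, 0.2], 0)]:
    if buffer.add(features, label):
        training_set.extend(buffer.drain())
        # At this point the model would be retrained on `training_set`.
```

Only examples whose labels have been verified should enter the buffer; feeding the model's own unverified guesses back into training would reinforce its mistakes.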

Final thoughts

Fake reviews are more than a nuisance – they threaten the credibility of digital commerce. By harnessing AI, businesses can shift from reactive defense to proactive fraud prevention, protecting both customers and brand reputation.

In today’s marketplace, where trust is currency, AI is not just a safeguard – it’s a growth enabler. Companies that invest now will stand out tomorrow, not only for their products but for their integrity and resilience.