Welcome back to our journey into Akka Agentic AI! The last blog, Akka Agentic AI: Secret to Planning a Perfect Trip – Part 5, provided a step-by-step guide to adding a Dynamic Plan, which orchestrates multiple AI Agents, and to using a Workflow to execute that plan. As a result, more AI Agents can be added to the application in the future without updating the orchestration workflow.

Dynamic orchestration is a good addition because it lets the AI Agents work autonomously. But who evaluates whether the results of the dynamic orchestration actually cater to the user's preferences? Having a human do it would be expensive in terms of both time and money. Also, as the application scales, manual evaluation might become an overhead. Hence, having the results/recommendations evaluated by an AI Agent is a much better approach.

In this article, we will:

- Add an agent that evaluates the quality of the AI recommendation, given the original request and the updated user preferences.

- Use a Consumer to trigger the evaluation whenever the user's preferences change.

Add Evaluator Agent

Adding an EvaluatorAgent is a 3-step process:

- Define detailed instructions on how to evaluate an AI response against the user's preferences.

- Retrieve the user's preferences.

- Finally, return the evaluation of the AI Agents' results in a structured format.
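To see the three steps in isolation, here is a framework-free sketch in plain Java. It is not the Akka API: the in-memory `Map` is a hypothetical stand-in for the PreferencesEntity, the `judge` function is a hypothetical stand-in for the LLM call, and the class and method names are illustrative only.

```java
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.stream.Collectors;

// Framework-free sketch of the evaluator's three steps (NOT the Akka API).
// Hypothetical stand-ins: the Map plays the PreferencesEntity, `judge` plays the LLM.
public class EvaluatorSketch {

  // Step 3: the structured verdict, mirroring the JSON object the judge returns
  public record Verdict(String explanation, String label) {
    public boolean passed() {
      return label != null && label.trim().equalsIgnoreCase("Correct");
    }
  }

  // Step 1: the judging instructions (heavily abbreviated)
  public static final String INSTRUCTIONS =
      "Label the answer \"Correct\" or \"Incorrect\"; any preference violation is \"Incorrect\".";

  // Builds the user message; every preference gets its own "- " bullet
  public static String buildPrompt(String question, String answer, List<String> preferences) {
    StringBuilder sb = new StringBuilder()
        .append("ORIGINAL REQUEST:\n").append(question).append("\n\n")
        .append("FINAL ANSWER TO EVALUATE:\n").append(answer).append("\n\n");
    if (!preferences.isEmpty()) {
      sb.append("USER PREFERENCES:\n")
          .append(preferences.stream().map(p -> "- " + p).collect(Collectors.joining("\n")))
          .append("\n\n");
    }
    return sb.toString();
  }

  // Steps 2 and 3: look up the user's preferences, then ask the judge for a verdict
  public static Verdict evaluate(String userId, String question, String answer,
                                 Map<String, List<String>> preferenceStore,
                                 Function<String, Verdict> judge) {
    List<String> preferences = preferenceStore.getOrDefault(userId, List.of());
    return judge.apply(INSTRUCTIONS + "\n\n" + buildPrompt(question, answer, preferences));
  }

  public static void main(String[] args) {
    Map<String, List<String>> store =
        Map.of("alice", List.of("No art galleries", "Vegetarian food"));

    // Stub judge for illustration only: fails any prompt whose answer mentions "Art Gallery"
    Function<String, Verdict> stubJudge = prompt ->
        prompt.contains("Art Gallery")
            ? new Verdict("Recommends an art gallery despite the preferences.", "Incorrect")
            : new Verdict("Respects all preferences.", "Correct");

    Verdict verdict = evaluate("alice", "Plan a day in Paris",
        "Morning: Art Gallery. Lunch: vegetarian bistro.", store, stubJudge);
    // prints: Incorrect: Recommends an art gallery despite the preferences.
    System.out.println(verdict.label() + ": " + verdict.explanation());
  }
}
```

In the Akka agent, the role of `judge` is played by the model call configured through `effects()`, and the role of the `Map` by the PreferencesEntity; keeping the verdict as a small record with a `passed()` check makes the pass/fail decision explicit for whoever consumes it.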

```java
import akka.javasdk.agent.Agent;
import akka.javasdk.annotations.AgentDescription;
import akka.javasdk.annotations.ComponentId;
import akka.javasdk.client.ComponentClient;
import com.example.entity.PreferencesEntity;

import java.util.List;
import java.util.stream.Collectors;

@ComponentId("evaluator-agent")
@AgentDescription(
  name = "Evaluator Agent",
  description = """
    An agent that acts as an LLM judge to evaluate the quality of AI responses.
    It assesses whether the final answer is appropriate for the original question
    and checks for any deviations from user preferences.
    """
)
public class EvaluatorAgent extends Agent {

  // Input: whose plan it is, what they asked and what the AI answered
  public record EvaluationRequest(
    String userId,
    String originalRequest,
    String finalAnswer
  ) {}

  // Structured output: field names match the JSON object requested in SYSTEM_MESSAGE
  public record EvaluationResult(
    String explanation,
    String label
  ) {
    // An answer passes only when the judge labels it "Correct"
    public boolean passed() {
      return label != null && label.trim().equalsIgnoreCase("Correct");
    }
  }

  // Step 1: detailed instructions for the LLM judge
  private static final String SYSTEM_MESSAGE =
    """
    You are an evaluator agent that acts as an LLM judge. Your job is to evaluate
    the quality and appropriateness of AI-generated responses.

    Your evaluation should focus on:
    1. Whether the final answer appropriately addresses the original question
    2. Whether the answer respects and aligns with the user's stated preferences
    3. The overall quality, relevance, and helpfulness of the response
    4. Any potential deviations or inconsistencies with user preferences

    A response is "Incorrect" if it meets ANY of the following failure conditions:
    - poor response with significant issues or minor preference violations
    - unacceptable response that fails to address the question or violates preferences

    A response is "Correct" if it:
    - fully addresses the question and respects all preferences
    - good response with minor issues but respects preferences

    IMPORTANT:
    - Any violations of user preferences should result in an incorrect evaluation since
      respecting user preferences is the most important criterion

    Your response must be a single JSON object with the following fields:
    - "explanation": Specific feedback on what works well or deviations from preferences.
    - "label": A string, either "Correct" or "Incorrect".
    """.stripIndent();

  private final ComponentClient componentClient;

  public EvaluatorAgent(ComponentClient componentClient) {
    this.componentClient = componentClient;
  }

  public Effect<EvaluationResult> evaluate(EvaluationRequest request) {
    // Step 2: retrieve the user's current preferences from the PreferencesEntity
    var allPreferences = componentClient
      .forEventSourcedEntity(request.userId())
      .method(PreferencesEntity::getPreferences)
      .invoke();

    String evaluationPrompt = buildEvaluationPrompt(
      request.originalRequest(),
      request.finalAnswer(),
      allPreferences.entries()
    );

    // Step 3: ask the model for a structured verdict, mapped onto EvaluationResult
    return effects()
      .systemMessage(SYSTEM_MESSAGE)
      .userMessage(evaluationPrompt)
      .responseAs(EvaluationResult.class)
      .thenReply();
  }

  private String buildEvaluationPrompt(
    String originalRequest,
    String finalAnswer,
    List<String> preferences
  ) {
    StringBuilder prompt = new StringBuilder();
    prompt.append("ORIGINAL REQUEST:\n").append(originalRequest).append("\n\n");
    prompt.append("FINAL ANSWER TO EVALUATE:\n").append(finalAnswer).append("\n\n");

    if (!preferences.isEmpty()) {
      // Prefix every preference with "- " (the prefix argument of joining() applies only once)
      prompt
        .append("USER PREFERENCES:\n")
        .append(preferences.stream().map(p -> "- " + p).collect(Collectors.joining("\n")))
        .append("\n\n");
    }

    prompt
      .append("Please evaluate the final answer against the original request")
      .append(preferences.isEmpty() ? "." : " and user preferences.");

    return prompt.toString();
  }
}
```

Handle Preference Changes

Since we are using the “LLM as a Judge” pattern, every change to the user's preferences has to be handled, because previously generated plans may no longer match them. To handle this, we need a PreferencesConsumer that consumes the events of the PreferencesEntity and triggers the EvaluatorAgent (our LLM judge) for each of the user's existing plans, re-running the PlanTripWorkflow when a plan fails the evaluation.

```java
import akka.javasdk.annotations.ComponentId;
import akka.javasdk.annotations.Consume;
import akka.javasdk.client.ComponentClient;
import akka.javasdk.consumer.Consumer;
import com.example.domain.PreferencesEvent;
import com.example.entity.PreferencesEntity;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;

// PlanView, PlanTripWorkflow and EvaluatorAgent are assumed to be in the same package
// as this consumer; add imports for them if your project places them elsewhere.

@ComponentId("preferences-consumer")
@Consume.FromEventSourcedEntity(PreferencesEntity.class)
public class PreferencesConsumer extends Consumer {

  private static final Logger logger = LoggerFactory.getLogger(PreferencesConsumer.class);

  private final ComponentClient componentClient;

  public PreferencesConsumer(ComponentClient componentClient) {
    this.componentClient = componentClient;
  }

  public Effect onPreferenceAdded(PreferencesEvent.PreferenceAdded event) {
    var userId = messageContext().eventSubject().get(); // the entity id
    logger.info("Preference added for user {}: {}", userId, event.preference());

    // Get all plans (sessions) for this user from the PlanView
    var plans = componentClient
      .forView()
      .method(PlanView::getPlans)
      .invoke(userId);

    // Call the EvaluatorAgent for each session that already has an answer
    for (var plan : plans.entries()) {
      if (plan.finalAnswer() != null && !plan.finalAnswer().isEmpty()) {
        var evaluationRequest = new EvaluatorAgent.EvaluationRequest(
          userId,
          plan.userQuestion(),
          plan.finalAnswer()
        );

        var evaluationResult = componentClient
          .forAgent()
          .inSession(plan.sessionId())
          .method(EvaluatorAgent::evaluate)
          .invoke(evaluationRequest);

        logger.info(
          "Evaluation completed for session {}: label={}, explanation='{}'",
          plan.sessionId(),
          evaluationResult.label(),
          evaluationResult.explanation()
        );

        if (!evaluationResult.passed()) {
          // The answer no longer fits the preferences: run the workflow again to generate a better one
          componentClient
            .forWorkflow(plan.sessionId())
            .method(PlanTripWorkflow::runAgain)
            .invoke();

          logger.info(
            "Started workflow {} for user {} to re-answer question: '{}'",
            plan.sessionId(),
            userId,
            plan.userQuestion()
          );
        }
      }
    }

    return effects().done();
  }
}
```

Note: For a real-time application, it would be better to evaluate only the most relevant suggestions (for example, the last three), since real-time applications are time-sensitive and require minimal response times. The consumer above makes one LLM call per answered plan, so its cost grows with the number of plans a user has.
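Restricting the evaluation to recent suggestions could look like the plain-Java sketch below. It assumes each plan carries a creation timestamp, which the PlanView in this series does not necessarily expose; the `PlanSummary` record, its `createdAt` field and the `mostRecent` helper are all hypothetical.

```java
import java.time.Instant;
import java.util.Comparator;
import java.util.List;

// Hypothetical helper: pick only the N most recent plans that already have an answer,
// so a preference change triggers a bounded number of LLM evaluations.
public class RecentPlans {

  // Hypothetical shape of a plan row; `createdAt` is an assumed field
  public record PlanSummary(String sessionId, String userQuestion, String finalAnswer, Instant createdAt) {}

  public static List<PlanSummary> mostRecent(List<PlanSummary> plans, int limit) {
    return plans.stream()
        // skip plans that were never answered, as the consumer does
        .filter(p -> p.finalAnswer() != null && !p.finalAnswer().isEmpty())
        // newest first
        .sorted(Comparator.comparing(PlanSummary::createdAt).reversed())
        .limit(limit)
        .toList();
  }

  public static void main(String[] args) {
    Instant t0 = Instant.parse("2025-01-01T00:00:00Z");
    List<PlanSummary> plans = List.of(
        new PlanSummary("s1", "Rome?", "Colosseum", t0),
        new PlanSummary("s2", "Paris?", "Louvre", t0.plusSeconds(60)),
        new PlanSummary("s3", "Oslo?", "", t0.plusSeconds(120)),
        new PlanSummary("s4", "Lisbon?", "Belem Tower", t0.plusSeconds(180)),
        new PlanSummary("s5", "Berlin?", "Museum Island", t0.plusSeconds(240)));

    // Prints s5, s4, s2: s3 is skipped because it has no answer yet
    mostRecent(plans, 3).forEach(p -> System.out.println(p.sessionId()));
  }
}
```

In the PreferencesConsumer, the loop would then iterate over the result of such a helper instead of every entry returned by the view; the limit of three simply mirrors the example in the note.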

Let’s Plan a Trip!

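1. Set the OpenAI API key as an environment variable (the command below uses Windows `set` syntax; on macOS or Linux, use `export` instead)

```
set OPENAI_API_KEY=<your-openai-api-key>
```

2. Start the service locally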

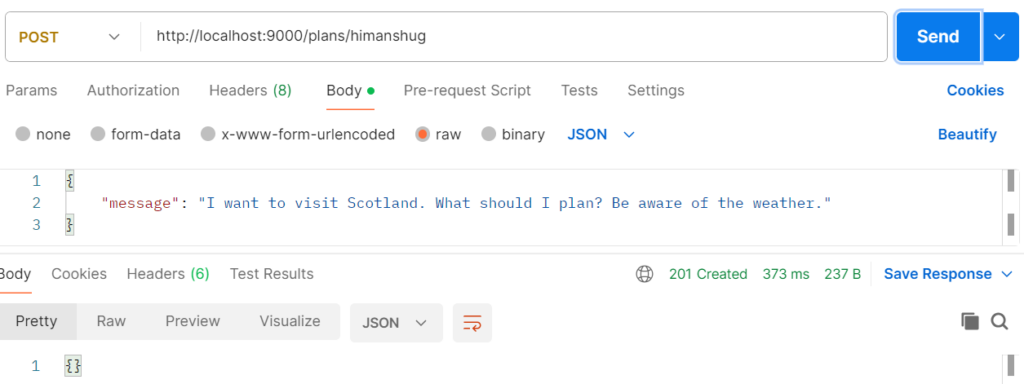

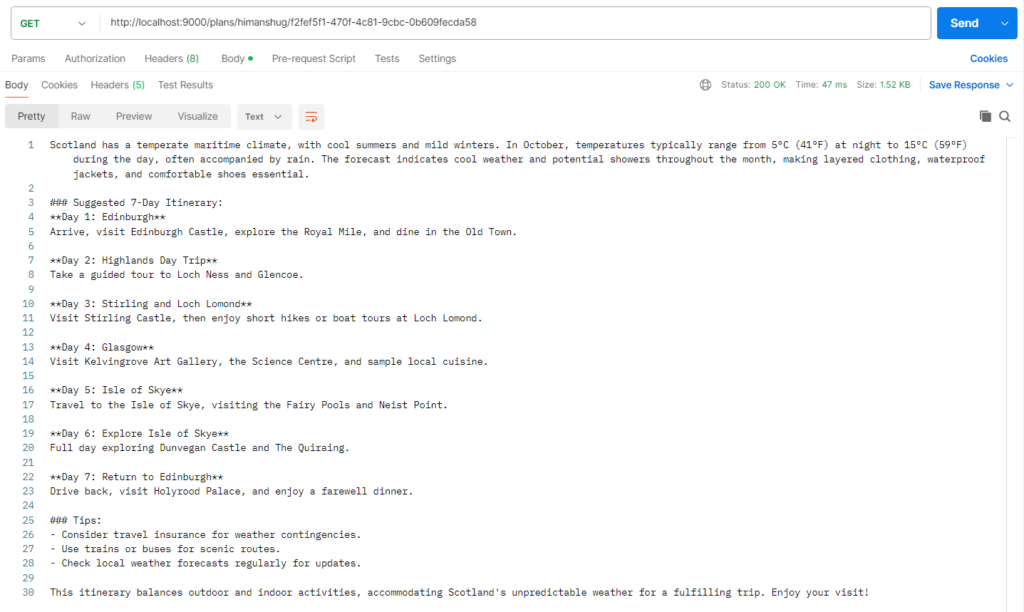

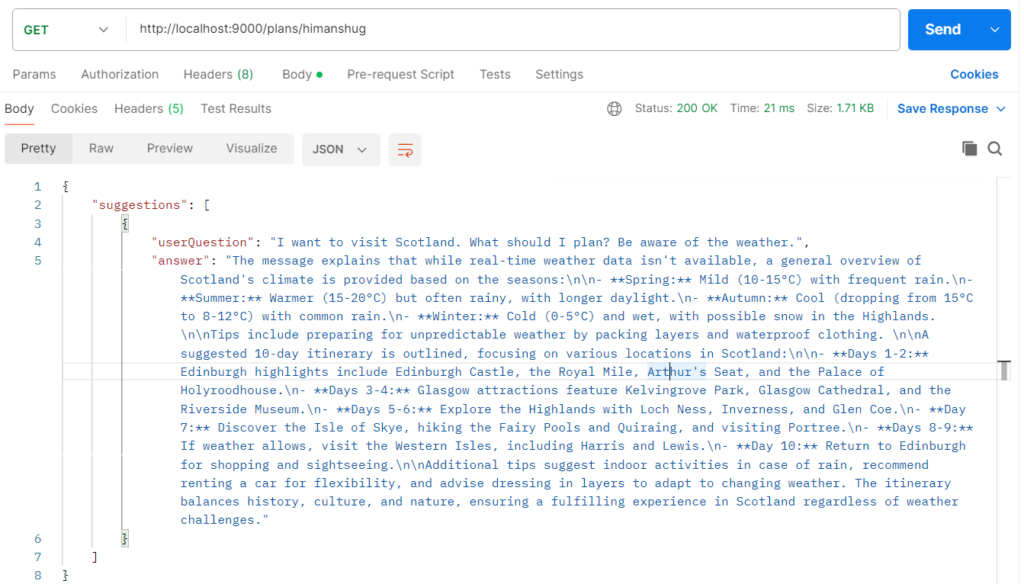

```
mvn compile exec:java
```

3. Plan Trip

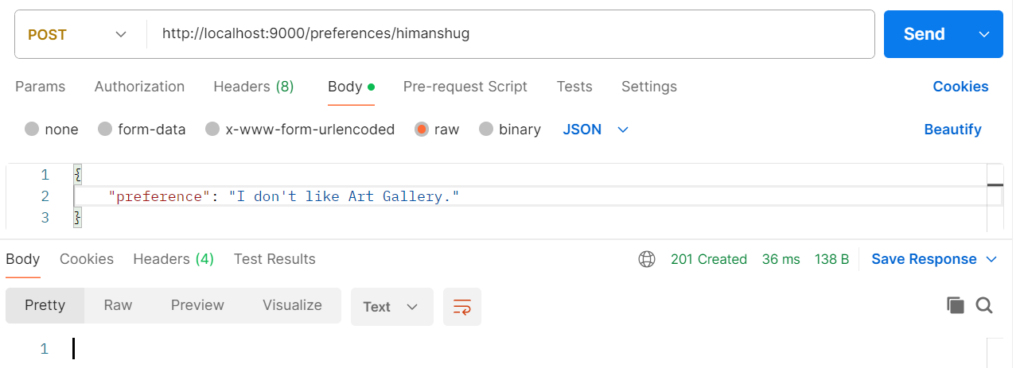

4. Update Preferences

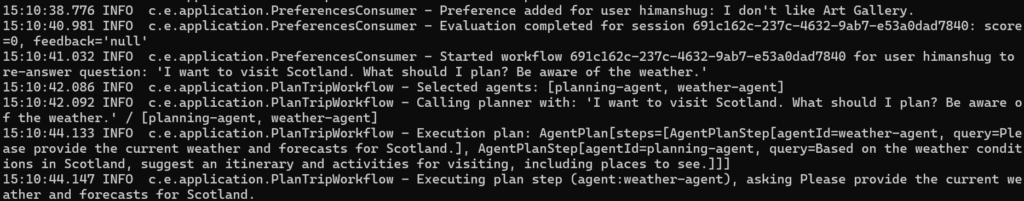

As soon as we update the preferences, the PreferencesConsumer evaluates the previous trip suggestions and, for any suggestion that fails the evaluation, runs the workflow again so that the plan matches the new preferences. This can be seen in the application logs.

5. Retrieve Updated Plan

In the image above, we can see that the Akka Agentic AI application has updated the trip plan as per the user's preferences and removed the “Art Gallery” recommendation.

Conclusion

Finally, we have completed our first Akka Agentic AI application. If you are interested in experimenting with the code, you can download it from the following link: akka-agentic-ai-trip-planner.