Spec-driven development (SDD) is becoming popular, and most AI coding assistants have adopted .md-based configuration systems. Those systems solve slightly different problems, though, so understanding them helps you pick the right tool and write better specs for each. In this article, we’ll focus on GitHub Copilot and Claude Code.

1. Spec-Driven Development

Spec-driven development (SDD) is the practice of writing a structured specification document before coding, then using that spec as the authoritative source of truth throughout the entire development lifecycle.

It’s not new. Teams have written PRDs, ADRs, and technical specs for decades. What is new is the audience: your spec is no longer just for humans — it’s for AI agents too.

When you give an AI coding agent a clear .md spec file, you’re not just describing what to build. You’re giving it:

- Constraints it must respect

- Conventions it should follow automatically

- Context it would otherwise hallucinate

- Test criteria it can verify against

The result? Less back-and-forth, fewer hallucinated implementations, and code that actually fits your system.

The Core Problem SDD Solves

Without a spec, every conversation with an AI agent starts from zero. The agent doesn’t know:

- What coding style your team uses

- What testing framework is in place

- What edge cases matter most

- What “done” actually means

With a well-written .md spec, the agent has all of that — and it applies it automatically.

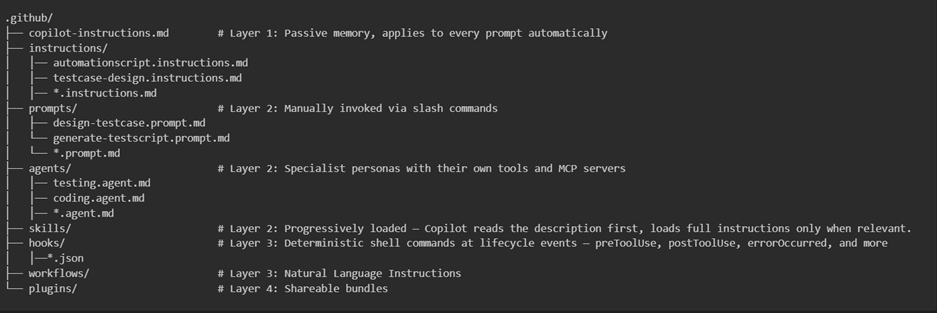

2. GitHub Copilot spec files

In GitHub Copilot, the .md files live in the .github folder and are organized into four layers.

Layer 1: Always-On Context (copilot-instructions.md). This file acts as “passive memory”: it is loaded automatically with every prompt. Use it to enforce non-negotiable rules such as coding standards and naming conventions.

Layer 2: On-Demand Capabilities. Prompt files, custom agents, and skills load progressively based on relevance, so the agent pulls in only what it needs. Use this layer for repeatable operational workflows.

Layer 3: Enforcement & Automation. Hooks fire at lifecycle events (pre-commit, pre-push, on-review) and act as deterministic compliance gates. Used in workflows, they can also drive autonomous repo maintenance.

Layer 4: Distribution. Plugins bundle everything for sharing across repos or organizations.
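To make Layer 3 more concrete, here is a minimal TypeScript sketch of the kind of deterministic check a hook could call. It is an assumption for illustration, not Copilot’s hook API: `findExplicitAny` and `gate` are hypothetical names, and the regex only approximates a real lint rule (it can flag `any` inside strings or comments, for example). The property that matters is that the same files always get the same pass/fail verdict, which a natural-language instruction alone cannot guarantee.

```typescript
// Hypothetical gate: a deterministic check a pre-commit hook could run.
// Regex-based, so it only approximates a lint rule and can produce false
// positives (e.g. the word "any" after a colon inside a string or comment).
const EXPLICIT_ANY = /:\s*any\b|\bas\s+any\b|<any>/;

interface Violation {
  file: string;
  line: number; // 1-based line number
  text: string;
}

// Returns one violation per line that contains an explicit `any`.
function findExplicitAny(file: string, source: string): Violation[] {
  const violations: Violation[] = [];
  source.split("\n").forEach((text, index) => {
    if (EXPLICIT_ANY.test(text)) {
      violations.push({ file, line: index + 1, text: text.trim() });
    }
  });
  return violations;
}

// Same input, same verdict: pass only when no file has a violation.
function gate(files: Record<string, string>): { pass: boolean; violations: Violation[] } {
  const violations = Object.entries(files).flatMap(([file, source]) =>
    findExplicitAny(file, source),
  );
  return { pass: violations.length === 0, violations };
}

// Usage: one clean file, one file with two explicit `any`s.
const result = gate({
  "src/a.ts": "export const n: number = 1;",
  "src/b.ts": "export function f(x: any) {\n  return x as any;\n}",
});
console.log(result.pass); // false
console.log(result.violations.map((v) => `${v.file}:${v.line}`).join(", ")); // src/b.ts:1, src/b.ts:2
```

In practice you would more likely delegate to an existing linter; the sketch only shows why a gate is deterministic where a prompt is not.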

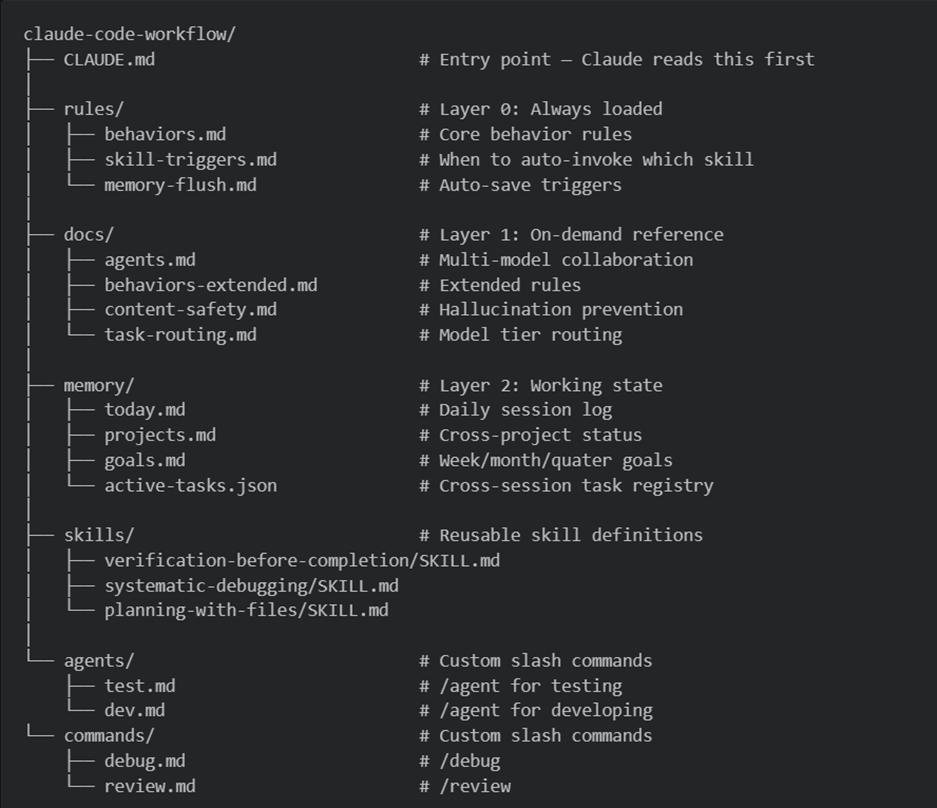

3. Claude Code spec files

Claude Code takes a complementary approach. Rather than organizing by tool layer, it organizes by **cognitive layer**: what Claude always knows, what it loads on demand, and what it writes as it works.

4. Practical Principles

Across both systems, a few patterns consistently produce better results:

4.1. Be prescriptive, not aspirational

Bad:

Write good tests with reasonable coverage.

Good:

Every exported function requires tests for the happy path, one edge case, and one error path. Run `vitest run --coverage` and confirm branch coverage stays above 80%.

4.2. Define “done” explicitly

The single highest-ROI thing you can add to any spec file:

## Definition of Done

A task is complete when:

- [ ] All new functions have tests

- [ ] `npm test` exits with code 0

- [ ] No new TypeScript errors (`tsc --noEmit`)

- [ ] The PR description explains *why*, not just *what*

4.3. Enumerate what NOT to do

AI agents benefit enormously from explicit anti-patterns:

## Do Not

- Do not use `any` type in TypeScript

- Do not write tests that only assert `toBeTruthy()`

- Do not mock the database — use the test Docker container in `docker-compose.test.yml`

- Do not skip tests because the function "seems simple"

4.4. Give the agent runnable commands

Don’t describe tests abstractly — give exact commands:

## Test Commands

- Unit tests: `pnpm test:unit`

- Integration tests: `pnpm test:integration` (requires Docker)

- Full suite with coverage: `pnpm test:ci`

- Single file: `pnpm vitest run src/path/to/file.test.ts`
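Exact commands also make the spec checkable by a script, not just readable by an agent. The sketch below assumes Node.js and uses each command’s exit code as the verdict, the same criterion as “`npm test` exits with code 0” in the Definition of Done. `runChecks` and `Runner` are hypothetical names; the default runner shells out with `spawnSync` from `node:child_process`, while the usage passes a fake runner so nothing is actually executed.

```typescript
import { spawnSync } from "node:child_process";

// A runner takes one exact shell command and returns its exit code.
type Runner = (command: string) => number;

// Default runner: runs the command through the shell and streams its output.
const shellRunner: Runner = (command) => {
  const result = spawnSync(command, { shell: true, stdio: "inherit" });
  // status is null when the process is killed by a signal; count that as failure.
  return result.status ?? 1;
};

interface CheckResult {
  command: string;
  exitCode: number;
}

// Runs commands in order and stops at the first non-zero exit code.
function runChecks(
  commands: string[],
  run: Runner = shellRunner,
): { ok: boolean; results: CheckResult[] } {
  const results: CheckResult[] = [];
  for (const command of commands) {
    const exitCode = run(command);
    results.push({ command, exitCode });
    if (exitCode !== 0) {
      return { ok: false, results };
    }
  }
  return { ok: true, results };
}

// Usage with a fake runner (nothing is executed): the integration suite "fails".
const fakeRunner: Runner = (command) => (command === "pnpm test:integration" ? 1 : 0);
const outcome = runChecks(["pnpm test:unit", "pnpm test:integration", "pnpm test:ci"], fakeRunner);
console.log(outcome.ok); // false
console.log(outcome.results.map((r) => r.command).join(", ")); // pnpm test:unit, pnpm test:integration
```

Stopping at the first failure keeps the report short; collecting every result instead is an equally reasonable choice if you want the agent to see all failing checks at once.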

4.5. Version your spec alongside your code

Your .md spec is code. It belongs in version control, it gets reviewed in PRs, and when your tech stack changes, your spec changes with it.
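Keeping the spec in the same repository also lets CI catch drift between the spec and the code. A minimal sketch, assuming a pnpm project: extract every inline `pnpm` command from the spec and flag those whose first argument is neither a `package.json` script nor a binary you know is installed. `extractPnpmCommands`, `findStaleCommands`, and the `knownBinaries` allowlist are hypothetical; in CI you would read the spec file and `package.json` from disk and fail the build when the result is non-empty.

```typescript
// Pulls every inline-code command that starts with `pnpm` out of a spec file.
function extractPnpmCommands(spec: string): string[] {
  return [...spec.matchAll(/`(pnpm\s+[^`]+)`/g)].map((match) => match[1].trim());
}

// Flags spec commands whose first argument is neither a package.json script
// nor a binary the caller knows is installed (hypothetical allowlist).
function findStaleCommands(
  spec: string,
  scripts: Record<string, string>,
  knownBinaries: string[] = [],
): string[] {
  return extractPnpmCommands(spec).filter((command) => {
    const name = command.split(/\s+/)[1] ?? "";
    // Own-property check, so names like "toString" are not mistaken for scripts.
    const isScript = Object.prototype.hasOwnProperty.call(scripts, name);
    return !isScript && !knownBinaries.includes(name);
  });
}

// Usage: the spec still says `pnpm test:ci`, but package.json renamed that script.
const spec = [
  "## Test Commands",
  "- Unit tests: `pnpm test:unit`",
  "- Full suite with coverage: `pnpm test:ci`",
  "- Single file: `pnpm vitest run src/path/to/file.test.ts`",
].join("\n");
const scripts = { "test:unit": "vitest run", "test:coverage": "vitest run --coverage" };
console.log(findStaleCommands(spec, scripts, ["vitest"]).join(", ")); // pnpm test:ci
```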

Conclusion

Spec-driven development with .md files is not about adding bureaucracy — it’s about giving AI agents the context they need to be genuinely useful rather than generically helpful.

Both GitHub Copilot’s .github/ architecture and Claude Code’s CLAUDE.md system are converging on the same insight: the quality of AI output is bounded by the quality of the spec it works from.

Write better specs. Get better code. Ship tests that actually catch bugs.

Reference:

- https://www.linkedin.com/feed/update/urn:li:activity:7442194903536656384/?originTrackingId=3HwntTrRePdY86RfmCQYEQ%3D%3D