What is Data Quality?

Data quality is a measure of how accurate, complete, valid, consistent, and unique a dataset or data asset is. By tracking data quality, a business can pinpoint issues that degrade its data. Data quality matters because it directly affects the reliability of the data used for decision-making.

To ensure data quality, we are going to use Great Expectations, a tool designed for data quality checks.
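To make two of these dimensions concrete, here is a minimal sketch in plain Python that scores completeness and uniqueness for one column. The function name `quality_metrics` and the sample `customers` records are hypothetical and used only for illustration; this is not how any particular tool computes these metrics.

```python
from __future__ import annotations

from typing import Any


def quality_metrics(rows: list[dict[str, Any]], column: str) -> dict[str, float]:
    """Return completeness and uniqueness ratios for one column (illustrative only)."""
    total = len(rows)
    if total == 0:
        return {"completeness": 0.0, "uniqueness": 0.0}

    values = [row.get(column) for row in rows]
    non_null = [value for value in values if value is not None]

    # Completeness: share of rows where the column has a value.
    completeness = len(non_null) / total
    # Uniqueness: share of non-null values that are distinct.
    uniqueness = len(set(non_null)) / len(non_null) if non_null else 0.0

    return {"completeness": completeness, "uniqueness": uniqueness}


# Hypothetical sample data: one missing email and one duplicate email.
customers = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 3, "email": "a@example.com"},
    {"id": 4, "email": "b@example.com"},
]

metrics = quality_metrics(customers, "email")
print(metrics)
```

Tracking ratios like these over time is one simple way to notice when incoming data starts to degrade.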

What is Great Expectations?

Great Expectations is an open-source tool for managing data quality. It is basically used to validate the quality of the data to make it accurate, complete, unique, etc. and further, it can be used as per the business purpose.

- Test data they ingest from different sources to ensure the validity of data.

- Prevent data quality issues

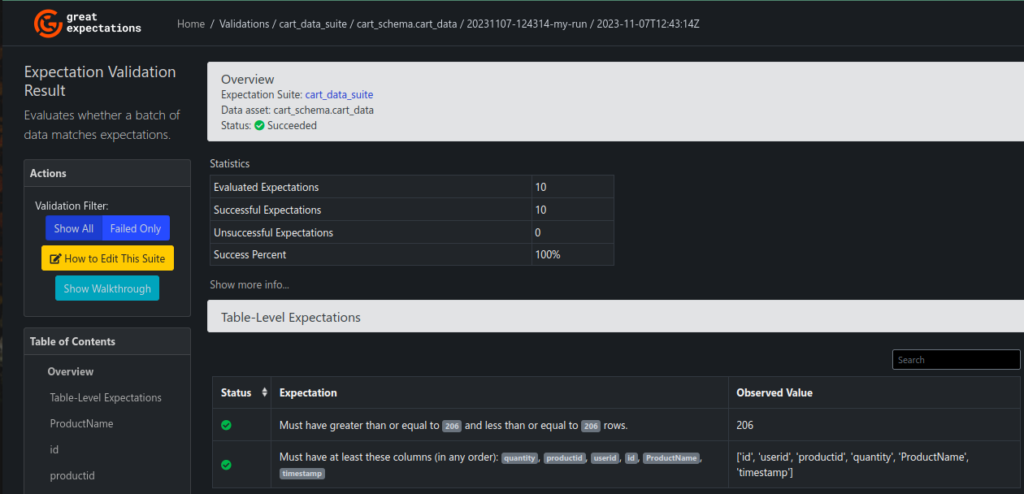

- It also provides the UI to view the quality of data.

In the below diagram, various data sources are identified. The Great Expectations tool is adept at accommodating multiple data sources for the purpose of conducting data quality checks. Following the validation process, the refined data becomes useful for analytical pursuits.

Some key Great Expectations terms are explained below.

What is a Data Source?

A data source is a location or system from which data is collected, stored, and retrieved for purposes such as analysis, reporting, or application functionality. In Great Expectations, you configure a data source so that data quality checks can be applied to it.

What is a Data Context?

A Data Context is the primary entry point for Great Expectations. It holds the configuration for Expectations, Metadata Stores, Data Docs, Checkpoints, and everything else related to working with Great Expectations.

What are Expectations?

Expectations are the conditions or rules that data should meet to pass a quality check. They help ensure data integrity, facilitate error detection, and improve the overall quality of data for analysis and decision-making. Examples include checking whether a column contains null values, or whether the values in a column or table are distinct.
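The idea of a rule that data must meet can be sketched in plain Python. The example below does not use the Great Expectations library; the helper names `expect_not_null` and `expect_unique`, the result dictionary layout, and the `orders` data are all assumptions made for illustration. The library ships its own built-in expectations for these cases, such as `expect_column_values_to_not_be_null` and `expect_column_values_to_be_unique`.

```python
from __future__ import annotations

from typing import Any


def expect_not_null(rows: list[dict[str, Any]], column: str) -> dict[str, Any]:
    """Rule: every row must have a non-null value in `column`."""
    failed = [i for i, row in enumerate(rows) if row.get(column) is None]
    return {"rule": "not_null", "column": column,
            "success": not failed, "failed_rows": failed}


def expect_unique(rows: list[dict[str, Any]], column: str) -> dict[str, Any]:
    """Rule: no value in `column` may appear more than once."""
    seen: set[Any] = set()
    failed = []
    for i, row in enumerate(rows):
        value = row.get(column)
        if value in seen:
            failed.append(i)  # record the index of the repeated value
        seen.add(value)
    return {"rule": "unique", "column": column,
            "success": not failed, "failed_rows": failed}


# Hypothetical sample data: a missing amount and a duplicated order_id.
orders = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": 2, "amount": None},
    {"order_id": 2, "amount": 5.0},
]

null_result = expect_not_null(orders, "amount")
unique_result = expect_unique(orders, "order_id")
print(null_result)
print(unique_result)
```

Returning a structured result instead of raising an error lets a caller collect every failed rule in one pass and report them together.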

What is a Checkpoint?

A Checkpoint is the primary means of validating data in a production deployment of Great Expectations. It bundles the full configuration for a validation run, including the data source, the Expectation Suite, and so on.
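The bundling role of a Checkpoint can be illustrated with a small conceptual sketch. This is plain Python, not the Great Expectations API: the `Checkpoint` dataclass, its `load_data` and `suite` fields, and the `load_users` function are hypothetical stand-ins for a configured data source and an Expectation Suite.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

Rows = List[Dict[str, Any]]
Check = Callable[[Rows], bool]


@dataclass
class Checkpoint:
    """Conceptual stand-in: pairs a data source with a suite of rules."""
    name: str
    load_data: Callable[[], Rows]                          # plays the role of the data source
    suite: Dict[str, Check] = field(default_factory=dict)  # plays the role of the suite

    def run(self) -> Dict[str, Any]:
        rows = self.load_data()
        results = {label: check(rows) for label, check in self.suite.items()}
        # The run succeeds only if every rule in the suite passes.
        return {"checkpoint": self.name,
                "success": all(results.values()),
                "results": results}


def load_users() -> Rows:
    # Hypothetical data source; real code might read a file or a database table.
    return [{"id": 1, "name": "Ana"}, {"id": 2, "name": None}]


checkpoint = Checkpoint(
    name="users_daily",
    load_data=load_users,
    suite={
        "id is never null": lambda rows: all(r.get("id") is not None for r in rows),
        "name is never null": lambda rows: all(r.get("name") is not None for r in rows),
    },
)

report = checkpoint.run()
print(report)
```

Keeping the data source and the rules in one named object means the same validation can be rerun on a schedule without restating its configuration.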

Great Expectations Dashboard of Data Quality

Conclusion

Good data quality is the foundation of accurate decision-making. Great Expectations, an open-source tool, plays a vital role by validating data quality through defined Expectations and Checkpoints, ensuring reliability and integrity across diverse data sources.