What is Parallel Programming?

Parallel programming is a paradigm in which multiple tasks or processes execute simultaneously to improve a program's performance and efficiency. The goal is to decompose a problem into smaller, independent tasks that can run concurrently, taking advantage of the parallel processing capabilities of modern hardware such as multi-core processors.

Creating and Starting a New Task

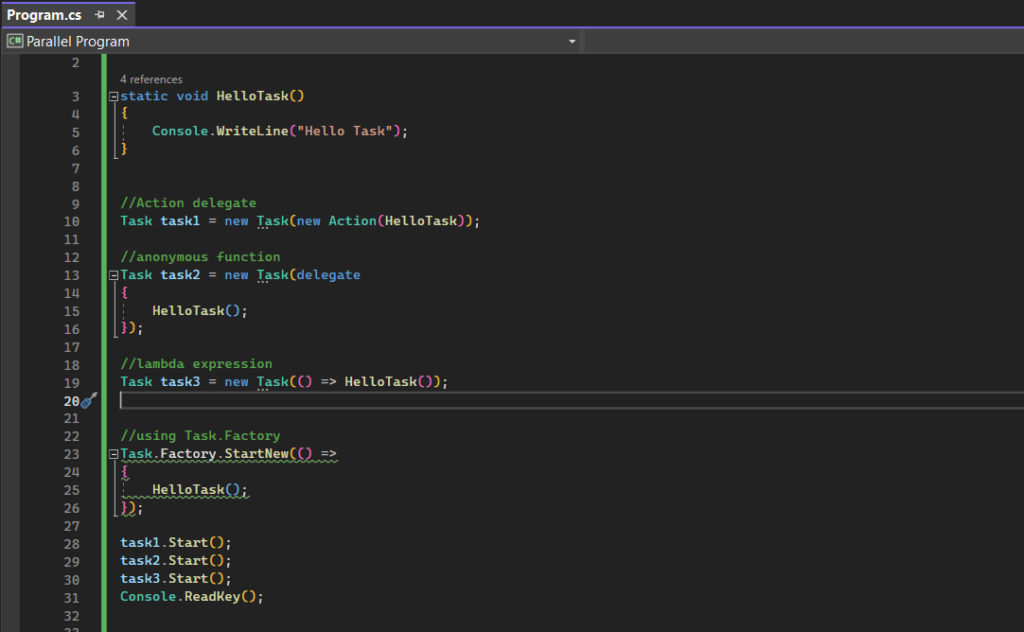

The Task Parallel Library (TPL) provides several ways to create and start tasks. Here are some common ones:

- Action delegate

- Anonymous function

- Lambda function

- Task.Factory

When you create a Task instance yourself, you pass the work to run as a constructor argument and then call Start() on the instance to begin execution. Task.Factory.StartNew() combines both steps: it creates the task and starts it immediately.
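The four approaches listed above can be sketched roughly as follows. The class name `TaskCreationDemo`, the `PrintMessage` method, and the printed messages are hypothetical and exist only for illustration.

```csharp
using System;
using System.Threading.Tasks;

public static class TaskCreationDemo
{
    // Hypothetical workload used only for illustration.
    private static void PrintMessage() =>
        Console.WriteLine("Hello from a named method");

    public static Task[] StartAll()
    {
        // 1. Action delegate that points at a named method.
        var fromDelegate = new Task(new Action(PrintMessage));

        // 2. Anonymous function (delegate syntax).
        var fromAnonymous = new Task(delegate
        {
            Console.WriteLine("Hello from an anonymous function");
        });

        // 3. Lambda expression.
        var fromLambda = new Task(() => Console.WriteLine("Hello from a lambda"));

        // Tasks built with the constructor do not run until Start() is called.
        fromDelegate.Start();
        fromAnonymous.Start();
        fromLambda.Start();

        // 4. Task.Factory.StartNew creates and starts the task in one step.
        var fromFactory = Task.Factory.StartNew(
            () => Console.WriteLine("Hello from Task.Factory"));

        return new[] { fromDelegate, fromAnonymous, fromLambda, fromFactory };
    }

    public static void Main()
    {
        // Block until every task has finished; the output order is not guaranteed.
        Task.WaitAll(StartAll());
    }
}
```

Because the tasks run on thread-pool threads, the four messages may appear in any order.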

Cancelling A Task

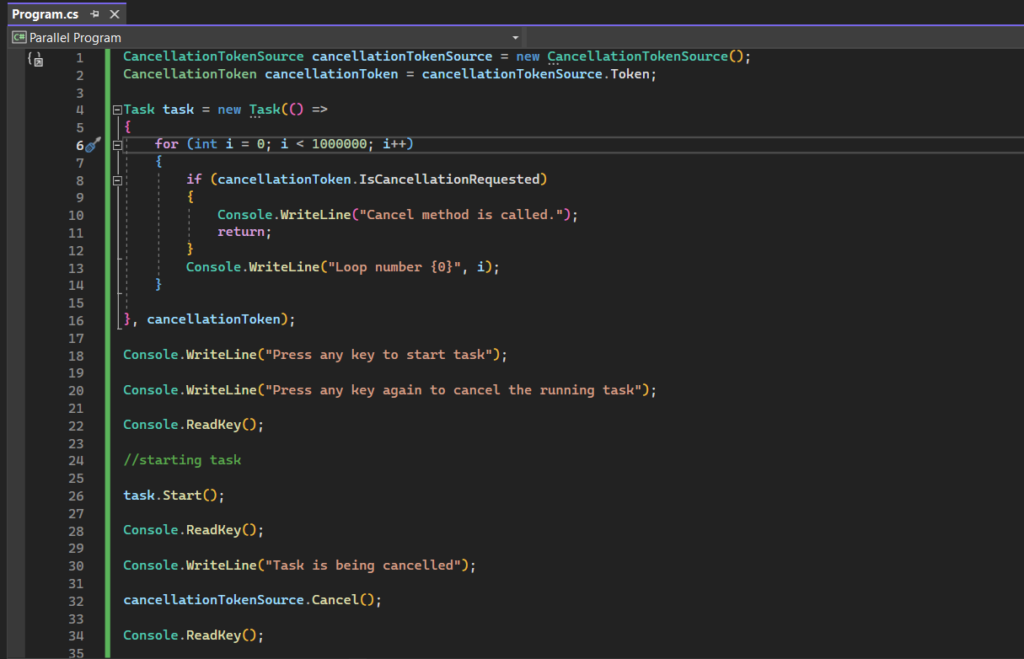

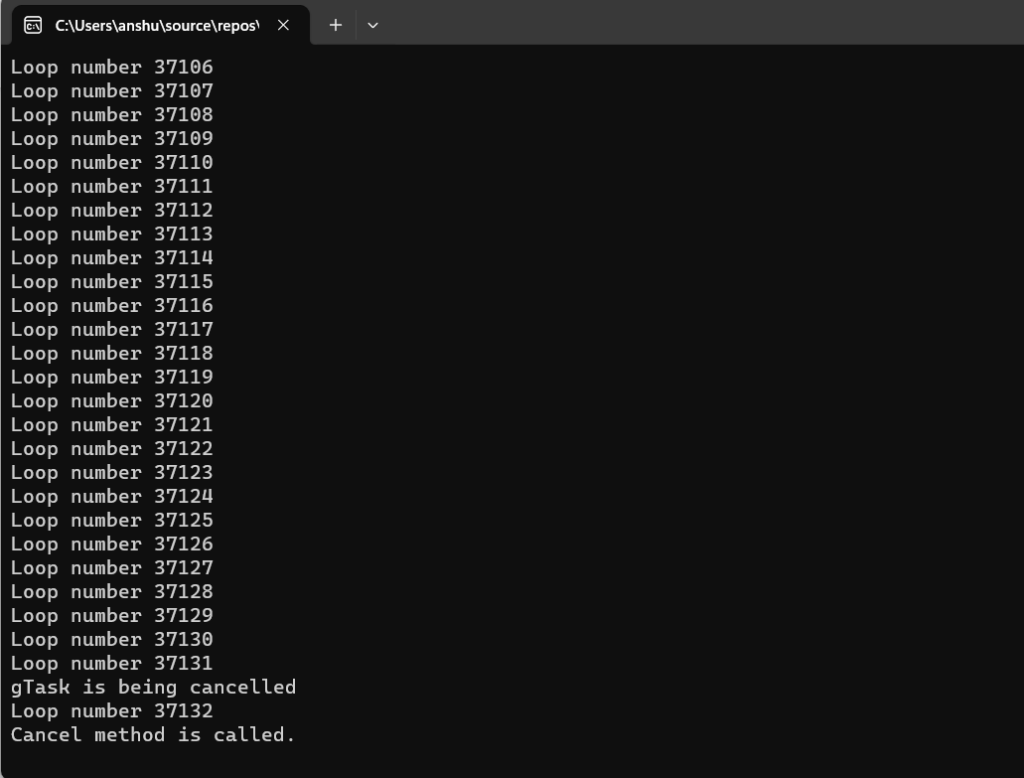

Long-running tasks need a mechanism that lets you cancel them when necessary. The Task Parallel Library (TPL) provides cancellation tokens for this purpose. To make a task cancelable, pass a CancellationToken to the task's constructor. Obtaining the token takes two steps: first create a CancellationTokenSource instance, then read its Token property to get the CancellationToken.

Once the token has been passed to the task's constructor, you request cancellation by calling Cancel() on the CancellationTokenSource. Calling Cancel() does not stop the task by itself, because cancellation is cooperative: the task body must check the token regularly through its IsCancellationRequested property. When that property is true, the task can stop either by returning or by throwing an OperationCanceledException. The two are not equivalent. A task that simply returns ends in the RanToCompletion state, whereas a task that throws an OperationCanceledException carrying the same token ends in the Canceled state.

The following example shows a basic use of cancellation tokens to cancel a running task.
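A minimal sketch of that pattern, assuming a task that does nothing but poll the token. The class name `CancellationDemo` and the sleep intervals are arbitrary choices for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class CancellationDemo
{
    public static TaskStatus RunAndCancel()
    {
        using (var cts = new CancellationTokenSource())
        {
            CancellationToken token = cts.Token;

            // The token is passed both to the task body (via the closure)
            // and to the Task constructor.
            var task = new Task(() =>
            {
                while (true)
                {
                    if (token.IsCancellationRequested)
                    {
                        // Throwing with the same token marks the task as Canceled.
                        throw new OperationCanceledException(token);
                    }
                    Thread.Sleep(10); // stand-in for a unit of real work
                }
            }, token);

            task.Start();
            Thread.Sleep(50);   // let the task run briefly
            cts.Cancel();       // request cancellation; the task stops at its next check

            try
            {
                task.Wait();
            }
            catch (AggregateException)
            {
                // Wait() reports the cancellation as an AggregateException.
            }

            return task.Status;
        }
    }

    public static void Main()
    {
        Console.WriteLine(RunAndCancel()); // prints "Canceled"
    }
}
```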

Waiting For Tasks

Calling Wait() on a task instance blocks until that single task completes. Note that a task counts as complete not only when it has run its entire workload but also when it has been canceled or has thrown an exception.

The static Task.WaitAll() method is useful for waiting on several tasks. It does not return until every specified task has completed, thrown an exception, or been canceled. Like Wait(), it has overloads that accept a timeout, a cancellation token, or both.

The static Task.WaitAny() method is similar to WaitAll(), but instead of waiting for all tasks, it waits only for the first task that completes, is canceled, or throws an exception. Its return value is the array index of that task.

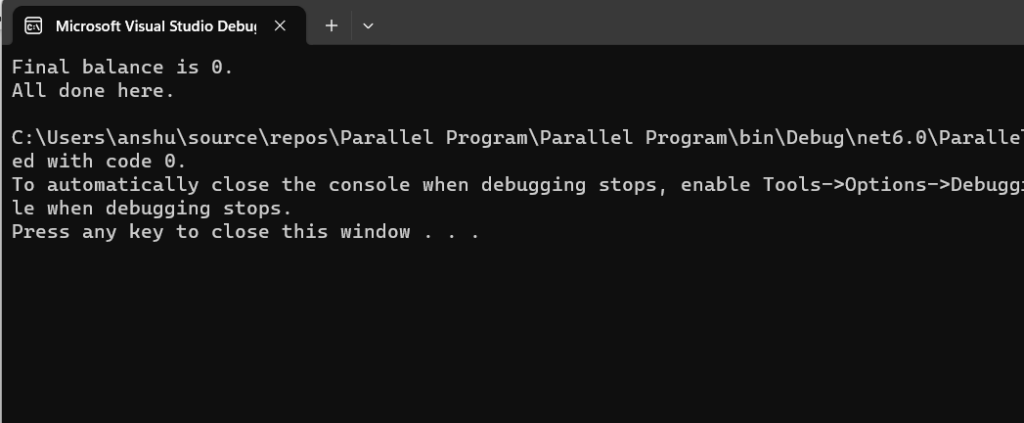

Example
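As a rough sketch of the three waiting methods, the code below uses a hypothetical `SleepTask` helper that simulates work by sleeping. The class name `WaitingDemo` and the durations are assumptions made for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class WaitingDemo
{
    // Hypothetical helper: a task that simulates work by sleeping.
    private static Task SleepTask(int milliseconds) =>
        Task.Factory.StartNew(() => Thread.Sleep(milliseconds));

    public static int Run()
    {
        // Wait(): block until one specific task has finished.
        Task single = SleepTask(50);
        single.Wait();

        Task[] tasks = { SleepTask(300), SleepTask(50), SleepTask(300) };

        // WaitAny(): returns the array index of the first task to finish.
        int firstFinished = Task.WaitAny(tasks);

        // WaitAll(): block until every task in the array has finished.
        Task.WaitAll(tasks);

        return firstFinished;
    }

    public static void Main()
    {
        // The shortest task usually wins, but scheduling decides the actual index.
        Console.WriteLine("First finished task index: " + Run());
    }
}
```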

Data Sharing and Synchronization

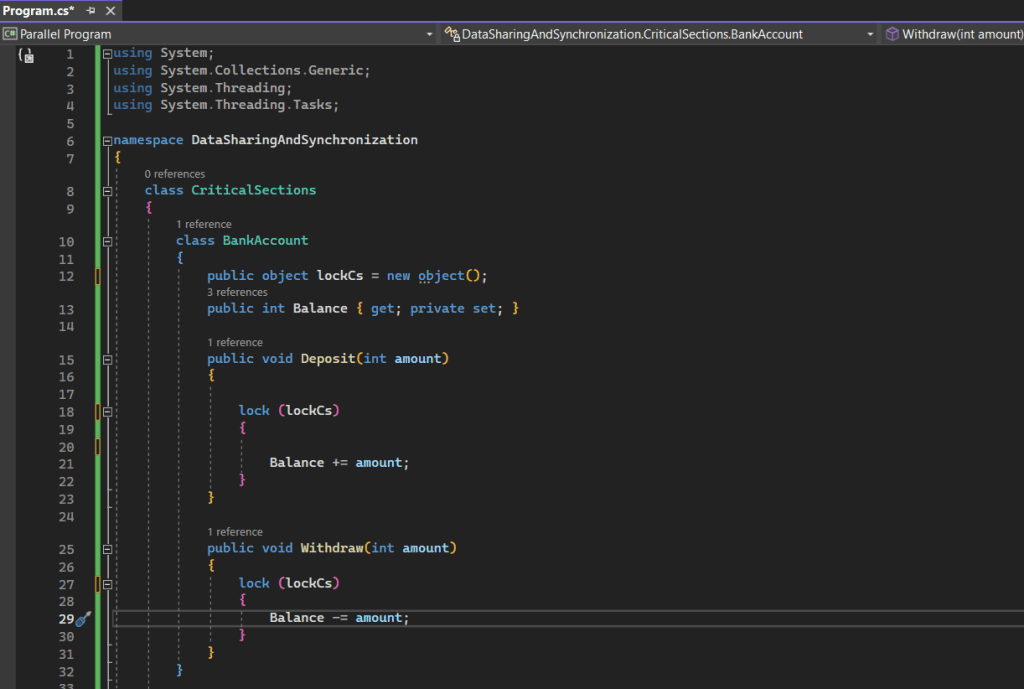

Critical Section

A critical section is a portion of code that must be executed by only one thread at a time. Allowing several threads to run it concurrently can lead to data corruption or unexpected behavior. When tasks share data, critical sections are protected with synchronization mechanisms that control access to the shared resources. The most common one in C# is the lock statement, which ensures that only one thread at a time can enter a given block of code. Here’s a basic example:

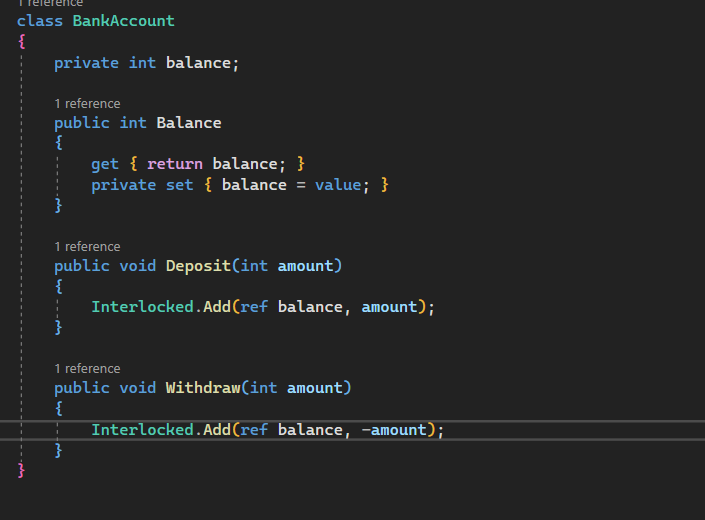

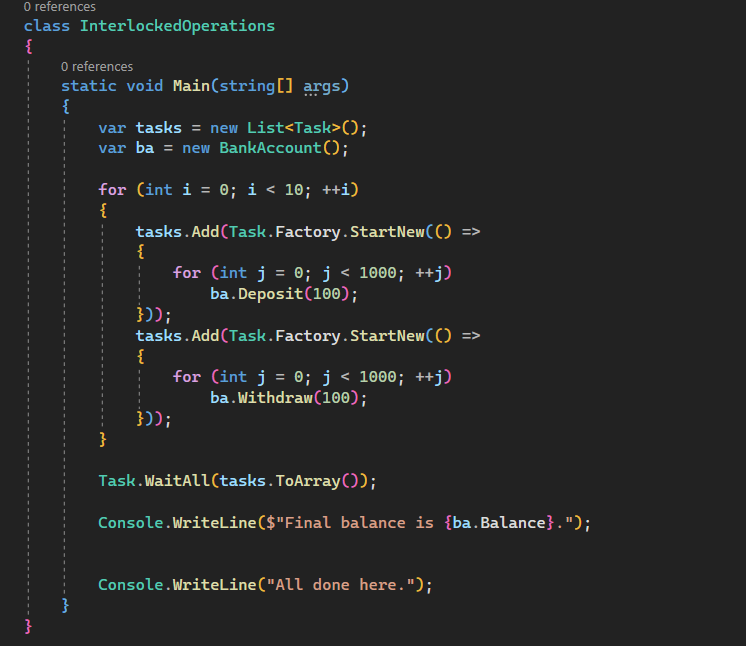
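In text form, a lock-protected critical section might look like the sketch below. The names `LockDemo`, `Gate`, and `_counter` are hypothetical, as are the task and iteration counts.

```csharp
using System;
using System.Threading.Tasks;

public static class LockDemo
{
    // Dedicated private object used only for locking.
    private static readonly object Gate = new object();
    private static int _counter;

    public static int Run()
    {
        _counter = 0;
        var tasks = new Task[4];

        for (int i = 0; i < tasks.Length; i++)
        {
            tasks[i] = Task.Factory.StartNew(() =>
            {
                for (int j = 0; j < 10000; j++)
                {
                    // Critical section: only one thread at a time may increment.
                    lock (Gate)
                    {
                        _counter++;
                    }
                }
            });
        }

        Task.WaitAll(tasks);
        return _counter;
    }

    public static void Main()
    {
        Console.WriteLine(Run()); // prints 40000
    }
}
```

Without the lock, the increments from different threads could interleave and the final count would often fall short of 40000.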

Interlocked Operations

Interlocked operations perform atomic, thread-safe updates on shared variables. The Interlocked class offers methods such as Increment, Add, and CompareExchange that do this without explicit locks or other synchronization mechanisms, so each operation completes as a single step that other threads cannot interrupt.
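A small sketch of the idea, assuming a shared counter that several tasks increment. The names `InterlockedDemo` and `_counter` and the loop sizes are illustrative only.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class InterlockedDemo
{
    private static int _counter;

    public static int Run()
    {
        _counter = 0;
        var tasks = new Task[4];

        for (int i = 0; i < tasks.Length; i++)
        {
            tasks[i] = Task.Factory.StartNew(() =>
            {
                for (int j = 0; j < 10000; j++)
                {
                    // Atomic increment: no lock is needed.
                    Interlocked.Increment(ref _counter);
                }
            });
        }

        Task.WaitAll(tasks);
        return _counter;
    }

    public static void Main()
    {
        Console.WriteLine(Run()); // prints 40000
    }
}
```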

Mutex

A Mutex (mutual exclusion) is a synchronization primitive that controls access to a shared resource used by multiple threads. Only one thread can hold the mutex at a time, which gives that thread exclusive access to the protected resource. The TPL itself doesn’t provide a Mutex class, but you can use the Mutex class from the System.Threading namespace.

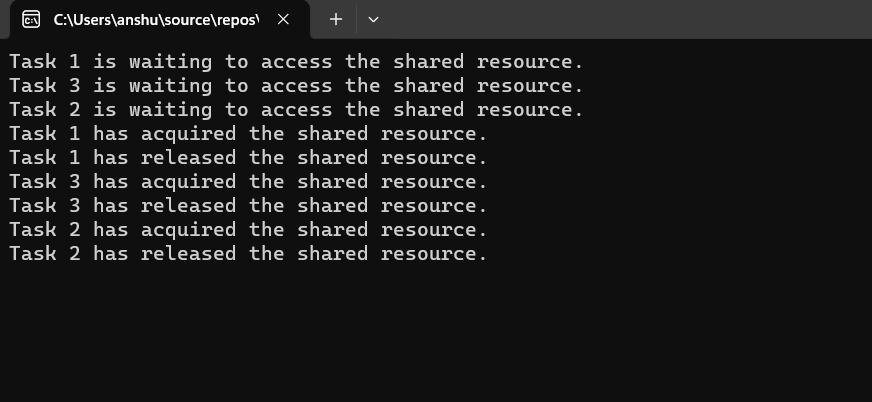

Example
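One way this could look, assuming a shared balance that several tasks update. The names `MutexDemo`, `BalanceMutex`, and `_balance` are hypothetical.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

public static class MutexDemo
{
    private static readonly Mutex BalanceMutex = new Mutex();
    private static int _balance;

    public static int Run()
    {
        _balance = 0;
        var tasks = new Task[4];

        for (int i = 0; i < tasks.Length; i++)
        {
            tasks[i] = Task.Factory.StartNew(() =>
            {
                for (int j = 0; j < 1000; j++)
                {
                    BalanceMutex.WaitOne();          // acquire exclusive access
                    try
                    {
                        _balance += 10;
                    }
                    finally
                    {
                        BalanceMutex.ReleaseMutex(); // always release, even on error
                    }
                }
            });
        }

        Task.WaitAll(tasks);
        return _balance;
    }

    public static void Main()
    {
        Console.WriteLine(Run()); // prints 40000
    }
}
```

The try/finally block matters here: a mutex must be released by the thread that acquired it, and skipping the release would block every other thread waiting on it.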

Parallel Loops

In the Task Parallel Library (TPL), parallel loops are written with Parallel.For and Parallel.ForEach, which run the iterations of a loop concurrently and distribute the workload among multiple threads. The related Parallel.Invoke method is not a loop; it runs a fixed set of independent actions in parallel.

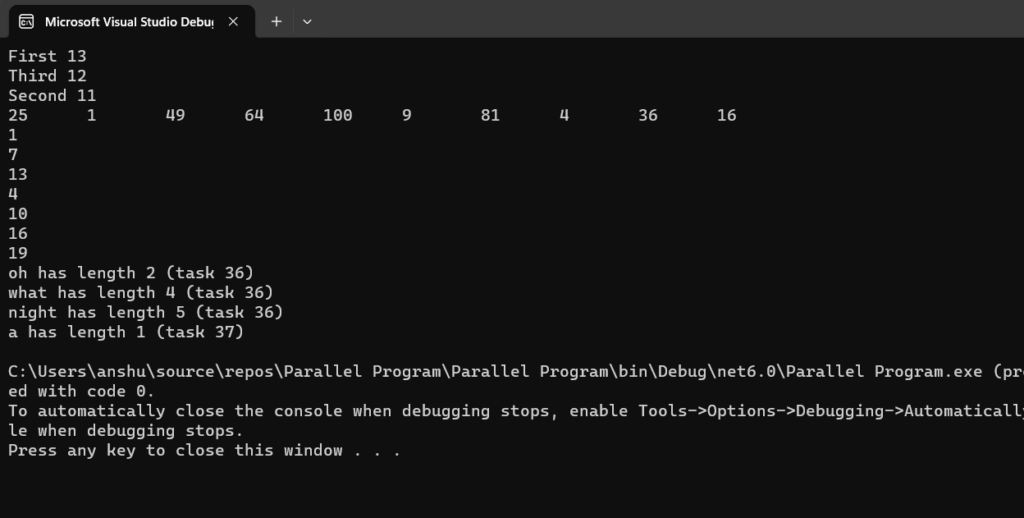

Example
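The three constructs might be used as in the sketch below. The class name `ParallelLoopDemo`, the method names, and the sample data are assumptions made for illustration; the shared totals are updated with Interlocked.Add so that concurrent iterations do not overwrite each other.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;
using System.Threading.Tasks;

public static class ParallelLoopDemo
{
    // Parallel.For: iterations over the range [0, n) may run concurrently.
    public static long SumOfSquares(int n)
    {
        long total = 0;
        Parallel.For(0, n, i =>
        {
            Interlocked.Add(ref total, (long)i * i);
        });
        return total;
    }

    // Parallel.ForEach: the same idea for any enumerable collection.
    public static int TotalLength(IEnumerable<string> words)
    {
        int total = 0;
        Parallel.ForEach(words, word =>
        {
            Interlocked.Add(ref total, word.Length);
        });
        return total;
    }

    public static void Main()
    {
        Console.WriteLine(SumOfSquares(10));                        // prints 285
        Console.WriteLine(TotalLength(new[] { "a", "bb", "ccc" })); // prints 6

        // Parallel.Invoke: run independent actions in parallel; order is not guaranteed.
        Parallel.Invoke(
            () => Console.WriteLine("First action"),
            () => Console.WriteLine("Second action"));
    }
}
```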

Conclusion

In conclusion, parallel programming in C# through the Task Parallel Library (TPL) offers a robust way to improve computational efficiency. With tasks and parallel constructs such as Parallel.For and Parallel.ForEach, developers can take advantage of modern multi-core processors. Cancellation tokens keep long-running work under control, and synchronization mechanisms such as the lock statement, Interlocked operations, and Mutex help preserve thread safety and data integrity when tasks share data. Parallel programming can deliver substantial performance gains, but only when the nature of each task and the dependencies between tasks are considered carefully. Applied with that care, parallelism in C# can make applications more responsive and more scalable.