Performance Testing

Performance testing systematically evaluates how well an application performs under load. The term ‘Performance’ here refers to the application’s responsiveness and stability. Responsiveness is how quickly the application responds to user interactions or requests. Stability is the application’s ability to maintain consistent performance without crashing or behaving unexpectedly under varying conditions. This blog explains what performance testing is, how the process works, and the main types of performance testing.

The other crucial term in this context is ‘Load,’ which refers to the burden or demand placed on an application by real-world user interactions. Load testing involves simulating different levels of user activity to assess how the application handles the resulting workload. By applying realistic user loads during performance testing, testers can gauge the application’s performance metrics such as response times, throughput, and resource utilization under typical usage scenarios.

Therefore, performance testing is essential for determining how effectively an application can maintain its responsiveness and stability when subjected to the actual user load it is expected to handle in real-world environments. This comprehensive evaluation helps identify performance bottlenecks, optimize system resources, and ensure a satisfactory user experience even during peak usage periods.

The process of performance testing encompasses several essential steps that are crucial for evaluating and improving the performance of an application or system. These steps ensure a systematic and comprehensive approach to identifying performance issues and optimizing the system’s responsiveness, scalability, and reliability.

Here’s a detailed outline of the key steps involved in the performance testing process:

Requirement Analysis:

The first step involves understanding the performance requirements and objectives of the application or system under test. This includes defining performance metrics such as response times, throughput, concurrency levels, and resource utilization targets. Clear performance goals help guide the testing process and ensure that the testing efforts align with business and user expectations.

Test Planning:

In this phase, the testing team creates a detailed test plan outlining the scope, objectives, test scenarios, testing tools, resources, and timelines for performance testing. The test plan specifies the types of performance tests to be conducted, such as load testing, stress testing, scalability testing, and endurance testing, based on the identified requirements and performance goals.

Test Environment Setup:

Setting up the test environment involves configuring the hardware, software, networks, and test data required for conducting performance tests. The test environment should closely resemble the production environment to simulate realistic user interactions and workload conditions accurately. This includes provisioning servers, databases, network infrastructure, test scripts, and performance monitoring tools.

Test Script Development:

Performance test scripts simulate real-world user interactions and transactions. They are written with performance testing tools or scripting languages to mimic user behaviour as closely as possible. The aim is to generate the desired user load, simulate concurrent user sessions, and measure critical performance metrics such as response times, transactions per second, and error rates. Well-designed scripts let testers gauge the application’s responsiveness and stability under varying conditions.
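As a rough illustration of what such a script does, here is a minimal sketch that uses only the Python standard library. The function `fake_request` is a hypothetical stand-in for a real user transaction, and its 10 ms delay and its failure on every tenth user are invented for the demo. A dedicated tool such as JMeter or Gatling would normally handle this work.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_request(user_id: int) -> bool:
    """Hypothetical stand-in for one user transaction; replace with a real call."""
    time.sleep(0.01)            # pretend the server needs about 10 ms
    return user_id % 10 != 0    # pretend every tenth user hits an error


def timed_call(user_id: int) -> tuple[float, bool]:
    """Run one transaction and return (elapsed seconds, success flag)."""
    start = time.perf_counter()
    ok = fake_request(user_id)
    return time.perf_counter() - start, ok


def run_load(users: int, concurrency: int) -> dict:
    """Run `users` transactions with at most `concurrency` in flight at once."""
    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(timed_call, range(users)))
    wall = time.perf_counter() - wall_start

    times = [elapsed for elapsed, _ in results]
    errors = sum(1 for _, ok in results if not ok)
    return {
        "requests": users,
        "avg_response_s": sum(times) / len(times),
        "throughput_rps": users / wall,
        "error_rate": errors / users,
    }


summary = run_load(users=50, concurrency=10)
print(summary)
```

The three numbers in the summary map directly onto the metrics named above: response time, transactions per second, and error rate.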

Test Execution:

Once the test scripts are developed, the next step is to execute the test scenarios according to the test plan. The performance testing tools simulate user activities, generate load on the system, and collect performance data during test execution. Various types of performance tests, such as load tests, stress tests, endurance tests, and scalability tests, are executed to assess different aspects of the system’s performance under varying conditions.

Performance Monitoring:

During test execution, performance metrics and key performance indicators (KPIs) are monitored in real-time using performance monitoring tools. This includes tracking metrics such as CPU utilization, memory usage, network latency, response times, throughput, and error rates to analyze system behaviour under load and identify performance bottlenecks or anomalies.

Results Analysis:

After completing test execution, the performance test results are analysed to evaluate the system’s performance against the defined performance criteria and objectives. Performance reports and dashboards are generated to summarize test findings, identify performance issues, and prioritize areas for optimization and improvement.
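One common way to compare results with the defined criteria is to reduce the raw response times to percentiles and check each one against a target. The sketch below assumes response times in milliseconds. The sample data and the target values are hypothetical.

```python
import statistics


def summarize(response_times_ms: list[float]) -> dict[str, float]:
    """Reduce raw response times to the percentiles usually reported."""
    # quantiles(n=100) returns the 99 cut points between the percentile bands
    cuts = statistics.quantiles(response_times_ms, n=100, method="inclusive")
    return {
        "p50": cuts[49],
        "p95": cuts[94],
        "p99": cuts[98],
        "max": max(response_times_ms),
    }


def violations(summary: dict[str, float], targets_ms: dict[str, float]) -> list[str]:
    """List every metric whose measured value exceeds its target."""
    return [
        f"{name}: {summary[name]:.2f} ms exceeds target of {limit} ms"
        for name, limit in targets_ms.items()
        if summary[name] > limit
    ]


# Hypothetical sample: 100 requests taking 1 ms, 2 ms, ..., 100 ms.
samples = [float(ms) for ms in range(1, 101)]
report = summarize(samples)
failed = violations(report, {"p95": 90, "p99": 120})
print(report)
print(failed)
```

Percentiles are used here rather than the average because a small number of very slow requests can hide behind a healthy-looking mean.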

Optimization and Retesting:

Based on the analysis of test results, performance bottlenecks are identified, and optimization strategies are implemented to improve system performance. This may involve code optimizations, database tuning, caching mechanisms, server configurations, or infrastructure upgrades. After implementing optimizations, retesting is conducted to validate performance improvements and ensure that the system meets the desired performance standards.

Reporting and Documentation:

Finally, a detailed performance test report is prepared, documenting the test objectives, methodologies, test results, findings, recommendations, and actions taken. The performance test report provides stakeholders with valuable insights into the system’s performance, highlights areas for improvement, and serves as a basis for decision-making and future performance testing initiatives.

Overall, the performance testing process is a structured and iterative approach aimed at evaluating, optimizing, and validating the performance of an application or system to ensure optimal responsiveness, scalability, and reliability in real-world usage scenarios.

Scope of Performance Testing

The scope of performance testing encompasses various aspects and scenarios that need to be considered to ensure thorough evaluation of a system’s performance. Here are the key areas that fall within the scope of performance testing:

Response Time:

Measure the time taken by the system to respond to user requests under different loads and scenarios. This includes assessing response times for various operations such as page loading, data retrieval, and transaction processing.

Throughput:

Evaluate the system’s ability to handle a certain volume of transactions or requests within a specific timeframe. Throughput testing helps determine the system’s processing capacity and performance under load.
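Throughput can be derived from the completion timestamps that most load tools record. The sketch below assumes timestamps in seconds since the start of the test. The sample values and the 3-second test duration are hypothetical.

```python
from collections import Counter


def throughput_by_second(completion_times_s: list[float]) -> dict[int, int]:
    """Count completed requests in each one-second window of the test."""
    counts = Counter(int(t) for t in completion_times_s)
    return dict(sorted(counts.items()))


def average_throughput(completed: int, duration_s: float) -> float:
    """Completed requests divided by the length of the test."""
    return completed / duration_s


# Hypothetical completion timestamps, in seconds since the test started.
completions = [0.2, 0.5, 0.9, 1.1, 1.4, 1.6, 1.8, 2.3, 2.7]
per_second = throughput_by_second(completions)
print(per_second)                                  # {0: 3, 1: 4, 2: 2}
print(average_throughput(len(completions), 3.0))   # 3.0
```

The per-second view is useful alongside the average because it shows dips and spikes that a single number would smooth over.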

Concurrency:

Test the system’s performance under concurrent user sessions to identify issues related to resource contention, locking mechanisms, and concurrency control. This includes assessing how well the system handles multiple users performing operations simultaneously.
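Resource contention can be made visible by measuring how long each concurrent worker waits for a shared resource. In the sketch below, a single lock stands in for something like a database row lock. The thread count and the 20 ms hold time are arbitrary values chosen for the demo.

```python
import threading
import time

lock = threading.Lock()          # stands in for a contended shared resource
wait_times: list[float] = []


def worker(hold_s: float) -> None:
    """Request the lock, record how long the wait was, then hold it briefly."""
    requested = time.perf_counter()
    with lock:
        # Appending while the lock is held keeps the list update safe.
        wait_times.append(time.perf_counter() - requested)
        time.sleep(hold_s)


def measure_contention(threads: int, hold_s: float) -> list[float]:
    """Start `threads` workers at once and return their wait times, sorted."""
    wait_times.clear()
    pool = [threading.Thread(target=worker, args=(hold_s,)) for _ in range(threads)]
    for t in pool:
        t.start()
    for t in pool:
        t.join()
    return sorted(wait_times)


waits = measure_contention(threads=4, hold_s=0.02)
print([round(w, 3) for w in waits])
```

The first worker acquires the lock almost immediately, while each later worker waits for everyone ahead of it. Wait times that grow with the number of users are a typical sign of a contention problem.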

Scalability:

Assess the system’s scalability by increasing the load gradually and measuring its performance as the load grows. Scalability testing helps determine if the system can handle increasing user demands by adding resources (e.g., servers, processors) without significant performance degradation.
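A simple way to express the outcome of a scalability test is scaling efficiency: measured throughput divided by what perfectly linear scaling would have delivered. The throughput figures below are hypothetical and serve only to show the calculation.

```python
def scaling_efficiency(throughput_by_nodes: dict[int, float]) -> dict[int, float]:
    """Compare measured throughput with ideal linear scaling from the smallest setup."""
    base_nodes = min(throughput_by_nodes)
    per_node = throughput_by_nodes[base_nodes] / base_nodes
    return {
        nodes: round(measured / (per_node * nodes), 2)
        for nodes, measured in sorted(throughput_by_nodes.items())
    }


# Hypothetical measurements: requests per second at 1, 2, 4 and 8 servers.
measured = {1: 100.0, 2: 190.0, 4: 340.0, 8: 480.0}
efficiency = scaling_efficiency(measured)
print(efficiency)   # {1: 1.0, 2: 0.95, 4: 0.85, 8: 0.6}
```

In this made-up data set, the drop to 0.6 at eight servers would suggest that something other than server count, such as a shared database, has become the limit.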

Stress Testing:

Subject the system to extreme conditions beyond its normal operational limits to assess its stability and resilience. Stress testing helps identify failure points, bottlenecks, and performance issues under high-stress scenarios.

Load Balancing:

Evaluate how well load balancing mechanisms distribute incoming traffic across multiple servers or resources to optimize performance and prevent overload on any single component.

Resource Utilization:

Monitor and analyse the system’s resource utilization, including CPU usage, memory consumption, disk I/O, and network bandwidth, to identify performance bottlenecks and optimize resource allocation.

Database Performance:

Assess the performance of database operations, including data retrieval, storage, indexing, and query execution. Database performance testing helps optimize database configurations, indexing strategies, and query optimization to improve overall system performance.

Caching Mechanisms:

Evaluate the effectiveness of caching mechanisms (e.g., content caching, database caching, session caching) in improving response times, reducing server load, and enhancing overall system performance.
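Cache effectiveness is usually summarized as a hit ratio. The sketch below uses `functools.lru_cache` from the Python standard library as a stand-in for any cache. The `lookup` function and the request pattern are hypothetical.

```python
from functools import lru_cache

backend_calls = 0   # how many requests actually reached the slow backend


@lru_cache(maxsize=128)
def lookup(key: int) -> int:
    """Hypothetical expensive operation, cached by its argument."""
    global backend_calls
    backend_calls += 1
    return key * key


# Hypothetical request pattern with repeated keys.
for key in [1, 2, 3, 1, 2, 3, 1, 2, 3, 4]:
    lookup(key)

info = lookup.cache_info()
hit_ratio = info.hits / (info.hits + info.misses)
print(info.hits, info.misses, hit_ratio)   # 6 4 0.6
print(backend_calls)                       # 4
```

Comparing the hit ratio and the number of backend calls with and without the cache gives a direct measure of how much load the cache removes.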

Third-Party Integrations:

Test the impact of third-party integrations (e.g., APIs, external services, plugins) on system performance to ensure smooth interaction and minimal performance overhead.

Client-Side Performance:

Evaluate client-side performance factors such as browser rendering, JavaScript execution, CSS optimization, and client-server communication to enhance user experience and responsiveness.

Mobile Performance:

Assess the performance of mobile applications or responsive web designs on different mobile devices, screen sizes, and network conditions to ensure optimal performance across mobile platforms.

Geographical Performance:

Test the system’s performance from different geographical locations to evaluate latency, network speed, and content delivery across global user bases.

Baseline Performance:

Establish performance baselines under normal operating conditions to compare against during testing, track performance trends, and identify deviations or improvements over time.
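A baseline comparison can be as simple as flagging any metric that is worse than the baseline by more than an agreed tolerance. The sketch below assumes metrics where higher is worse, such as latency. The metric names, values, and the 10% tolerance are hypothetical.

```python
def find_regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.10,
) -> dict[str, float]:
    """Return metrics that worsened by more than `tolerance`, with the relative change."""
    regressions = {}
    for metric, base in baseline.items():
        change = (current[metric] - base) / base
        if change > tolerance:
            regressions[metric] = round(change, 3)
    return regressions


# Hypothetical 95th-percentile response times in milliseconds.
baseline = {"login_p95_ms": 200, "search_p95_ms": 400, "checkout_p95_ms": 800}
current = {"login_p95_ms": 210, "search_p95_ms": 480, "checkout_p95_ms": 790}
regressions = find_regressions(baseline, current)
print(regressions)   # {'search_p95_ms': 0.2}
```

Here login is 5% slower, which is within tolerance, checkout is slightly faster, and only search is flagged with a 20% slowdown.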

Compliance and Standards:

Ensure that the system meets performance requirements, industry standards, and regulatory compliance related to performance metrics, response times, availability, and reliability.

By considering these aspects, organizations can comprehensively evaluate and optimize system performance to deliver seamless user experiences and meet business objectives.

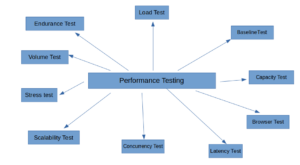

Types of Performance Testing

Performance testing encompasses several types, each focusing on specific aspects of an application or system’s performance. Here are the key types of performance testing commonly used in software development:

Load Testing:

Purpose: Assess the system’s ability to handle a specific workload or user concurrency level.

Key Metrics: Response times, throughput, resource utilization under load.

Tools: Apache JMeter, LoadRunner, Gatling.

Stress Testing:

Purpose: Evaluate system behaviour under extreme conditions or beyond normal operational limits.

Key Metrics: Stability, reliability, error handling, recovery mechanisms.

Tools: Apache JMeter, LoadRunner, StresStimulus.

Endurance/Soak Testing:

Purpose: Evaluate system performance over an extended period under sustained load conditions.

Key Metrics: Memory leaks, resource exhaustion, degradation over time.

Tools: Apache JMeter, LoadRunner, or any load testing tool that can sustain long-duration runs.
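Degradation over time can be spotted by fitting a trend line to samples collected during the soak run. The sketch below computes a least-squares slope for memory usage. The sample readings and the 5 MB per hour threshold are hypothetical, and a real analysis would use many more data points.

```python
def growth_per_hour(hours: list[float], memory_mb: list[float]) -> float:
    """Least-squares slope of memory against time, in MB per hour."""
    n = len(hours)
    mean_x = sum(hours) / n
    mean_y = sum(memory_mb) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, memory_mb))
    sxx = sum((x - mean_x) ** 2 for x in hours)
    return sxy / sxx


def looks_like_leak(
    hours: list[float],
    memory_mb: list[float],
    threshold_mb_per_hour: float = 5.0,
) -> bool:
    """Flag the run if memory grows faster than the chosen threshold."""
    return growth_per_hour(hours, memory_mb) > threshold_mb_per_hour


# Hypothetical hourly memory readings from two soak runs.
hours = [0, 1, 2, 3, 4, 5]
leaking = [500, 512, 524, 536, 548, 560]
steady = [500, 503, 499, 501, 502, 500]

print(growth_per_hour(hours, leaking))   # 12.0
print(looks_like_leak(hours, leaking))   # True
print(looks_like_leak(hours, steady))    # False
```

A steady upward slope under constant load is the classic signature of a memory leak, while a flat line with small fluctuations is what a healthy system tends to show.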

Scalability Testing:

Purpose: Assess the system’s ability to scale up or down based on changing workload demands.

Key Metrics: Scalability factors (vertical/horizontal), resource allocation, performance under varied loads.

Tools: Apache JMeter, LoadRunner, Gatling, Tsung.

Volume Testing:

Purpose: Evaluate system performance with a large volume of data to ensure efficient data processing and storage.

Key Metrics: Data handling capabilities, database performance, data retrieval times.

Tools: Apache JMeter, LoadRunner, Gatling, Tsung.

Concurrency Testing:

Purpose: Evaluate system performance under multiple concurrent user sessions or processes.

Key Metrics: Resource contention, locking mechanisms, concurrency control.

Tools: Apache JMeter, LoadRunner, Gatling, Tsung.

Baseline Testing:

Purpose: Establish performance baselines under normal operating conditions for comparison in later test runs.

Key Metrics: Response times, throughput, resource utilization under typical loads.

Tools: Performance monitoring tools, load testing tools with baseline recording capabilities.

Capacity Testing:

Purpose: Determine the maximum capacity of the system in terms of users, transactions, or data volume.

Key Metrics: Maximum capacity thresholds, resource utilization at peak loads.

Tools: Apache JMeter, LoadRunner, Gatling, Capacity Planner tools.
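Capacity is often found by raising the load in steps until a performance limit is crossed. The sketch below shows that search loop. The function `simulated_p95_ms` is a hypothetical response-time model used in place of a real load test, and the 300 ms limit and 100-user step are arbitrary.

```python
from collections.abc import Callable


def simulated_p95_ms(users: int) -> float:
    """Hypothetical response-time model; a real test would run a load test here."""
    return 100 + 0.002 * users ** 2


def find_capacity(
    measure: Callable[[int], float],
    limit_ms: float,
    step: int = 100,
    max_users: int = 2000,
) -> int:
    """Raise the load step by step and return the last level that met the limit."""
    capacity = 0
    for users in range(step, max_users + 1, step):
        if measure(users) > limit_ms:
            break
        capacity = users
    return capacity


capacity = find_capacity(simulated_p95_ms, limit_ms=300)
print(capacity)   # 300
```

With this model, 300 users give a response time of about 280 ms and 400 users give about 420 ms, so 300 is the highest tested level that stays within the limit. A smaller step size would give a finer estimate at the cost of more test runs.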

Latency Testing:

Purpose: Measure network latency and response times between client-server interactions.

Key Metrics: Network latency, round-trip times, data transfer speeds.

Tools: Ping, Traceroute, Network monitoring tools.

Browser Performance Testing:

Purpose: Assess client-side performance factors including browser rendering, JavaScript execution, and CSS optimization.

Key Metrics: Page load times, rendering speeds, client-server communication efficiency.

Tools: Google Lighthouse, WebPageTest, YSlow.

Customization in Performance Testing:

Performance testing offers customizable options to address specific performance challenges and ensure optimal system performance.

Identifying Performance Bottlenecks:

A key goal of performance testing is to identify bottlenecks that affect application responsiveness or stability. Through careful analysis of performance metrics, testers can uncover areas of inefficiency and implement targeted optimizations to enhance overall system performance.

Optimizing Resource Utilization:

Performance testing empowers organizations to efficiently utilize hardware, software, and network resources to meet performance requirements. By fine-tuning resource allocation, organizations can maximize system efficiency and minimize performance bottlenecks.

Meeting Performance Requirements:

Ultimately, the goal of performance testing is to ensure that the system meets performance requirements and user expectations. Thorough performance testing helps ensure reliable system performance and a seamless user experience under diverse loads and scenarios.

Conclusion:

In conclusion, customization plays a pivotal role in performance testing, enabling organizations to tailor testing methodologies to their specific needs.