Introduction

In recent years, natural language processing (NLP) has advanced sharply with the arrival of BERT (Bidirectional Encoder Representations from Transformers). BERT, a pre-trained transformer-based language model, has significantly improved how well machines understand and process human language.

Understanding BERT

BERT, developed by researchers at Google, is a model that captures the bidirectional context of words in a sentence using a transformer encoder architecture. Unlike traditional language models that read text in a single direction, BERT uses self-attention to consider the words on both sides of each word at once. This lets BERT produce contextualized word representations: the same word receives a different vector depending on the sentence it appears in, which helps the model capture language semantics.
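To make the self-attention idea concrete, here is a minimal sketch of scaled dot-product attention for a single head, written in plain Python. It is an illustration under simplifying assumptions, not BERT's actual implementation: the token vectors are made-up numbers, and the same vectors are used directly as queries, keys, and values, whereas a real transformer first passes them through learned projections and uses many heads and layers.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention for one head.

    Each argument is a list of per-token vectors (lists of floats).
    Returns (outputs, weights), where weights[i][j] is how much
    token i attends to token j.
    """
    dim = len(keys[0])
    weights = []
    for q in queries:
        # Similarity of this token's query with every token's key.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights.append(softmax(scores))
    # Each output vector is a weighted average of all value vectors.
    outputs = [
        [sum(w * v[d] for w, v in zip(row, values)) for d in range(len(values[0]))]
        for row in weights
    ]
    return outputs, weights

# Toy 2-dimensional vectors for a three-token sentence (made-up numbers).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Every row of `weights` spans all three tokens, so each output vector mixes information from words both before and after the current position. That is the bidirectional context described above.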

Pre-training and Fine-tuning

The strength of BERT lies in its two-stage training process: pre-training followed by fine-tuning. During pre-training, BERT is trained on large amounts of unlabeled text, such as Wikipedia articles. It learns to predict masked words in a sentence from the context provided by the other words. Additionally, BERT learns to tell whether the second of two sentences actually follows the first in the original text or was picked at random, which improves its grasp of discourse-level semantics. After pre-training, BERT can be fine-tuned on a specific downstream task, which makes it adaptable to many problems without training a new model from scratch.
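The masked-word objective can be sketched in a few lines. The function below is a simplified illustration, not the original training code. It follows the recipe described in the BERT paper: roughly 15% of tokens are selected for prediction, and of those, 80% are replaced with `[MASK]`, 10% with a random token, and 10% are left unchanged. The function name, the whitespace tokenization, and the tiny vocabulary are assumptions made for the example.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, vocab, mask_prob=0.15, rng=None):
    """Return (corrupted_tokens, labels) for masked language modeling.

    labels[i] holds the original token at positions the model must
    predict, and None everywhere else.
    """
    if rng is None:
        rng = random.Random()
    corrupted, labels = [], []
    for token in tokens:
        if rng.random() < mask_prob:
            labels.append(token)          # the model must recover this token
            roll = rng.random()
            if roll < 0.8:
                corrupted.append(MASK)                # 80%: mask it
            elif roll < 0.9:
                corrupted.append(rng.choice(vocab))   # 10%: random token
            else:
                corrupted.append(token)               # 10%: keep as-is
        else:
            corrupted.append(token)
            labels.append(None)           # not a prediction target
    return corrupted, labels

sentence = "the cat sat on the mat".split()
vocab = sorted(set(sentence))
corrupted, labels = mask_tokens(sentence, vocab, rng=random.Random(0))
print(corrupted)
print(labels)
```

Because each token is selected independently, a short sentence may end up with no masked positions at all. Over a large corpus the selected fraction averages out to about `mask_prob`.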

Applications of BERT

- Text Classification: BERT has demonstrated strong performance on a variety of text classification tasks. After fine-tuning on labeled data, BERT can be used for sentiment analysis, spam detection, topic categorization, and more. Its contextual understanding lets it pick up subtle linguistic cues, which improves classification accuracy.

- Named Entity Recognition (NER): NER involves identifying and classifying named entities in text, such as names of people, organizations, and locations. BERT’s ability to comprehend context aids in accurately extracting and classifying these entities, benefiting applications like information extraction, chatbots, and data mining.

- Question Answering: BERT has proven highly effective in question-answering tasks. After fine-tuning on question-and-answer datasets, BERT can locate relevant answers by using the contextual information in both the question and the provided text.

- Natural Language Understanding (NLU): BERT excels in NLU tasks by comprehending the nuances of language. It has contributed to improving chatbot capabilities, virtual assistants, and automated customer support systems. BERT’s contextual representations enable more accurate and context-aware responses.

- Machine Translation: BERT has also been applied to machine translation tasks. By modeling the contextual meaning of words, BERT representations can help a translation system capture subtle differences in meaning and improve the accuracy and fluency of translated text.
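As an illustration of the question-answering use case, a fine-tuned BERT model in the extractive setting typically assigns each token in the passage a score for being the start of the answer and a score for being the end. The answer is then the span with the highest combined score. The sketch below shows only that final selection step. The scores are made-up numbers standing in for model output, and `best_answer_span` is a hypothetical helper name.

```python
def best_answer_span(start_scores, end_scores, max_length=30):
    """Return (start, end) maximizing start_scores[start] + end_scores[end].

    Only spans with start <= end and at most max_length tokens are considered.
    """
    best, best_score = None, float("-inf")
    for start, s in enumerate(start_scores):
        last = min(len(end_scores), start + max_length)
        for end in range(start, last):
            score = s + end_scores[end]
            if score > best_score:
                best, best_score = (start, end), score
    return best

# Made-up scores standing in for a fine-tuned model's output.
passage = "BERT was developed by researchers at Google".split()
start_scores = [0.1, 0.0, 0.2, 0.1, 4.0, 0.2, 0.5]
end_scores = [0.0, 0.1, 0.1, 0.0, 0.2, 0.1, 3.5]
start, end = best_answer_span(start_scores, end_scores)
print(" ".join(passage[start:end + 1]))  # prints: researchers at Google
```

Requiring `start <= end` matters: without it, a high start score late in the passage could be paired with a high end score earlier on, producing an invalid span.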

Example: Sentence Classification

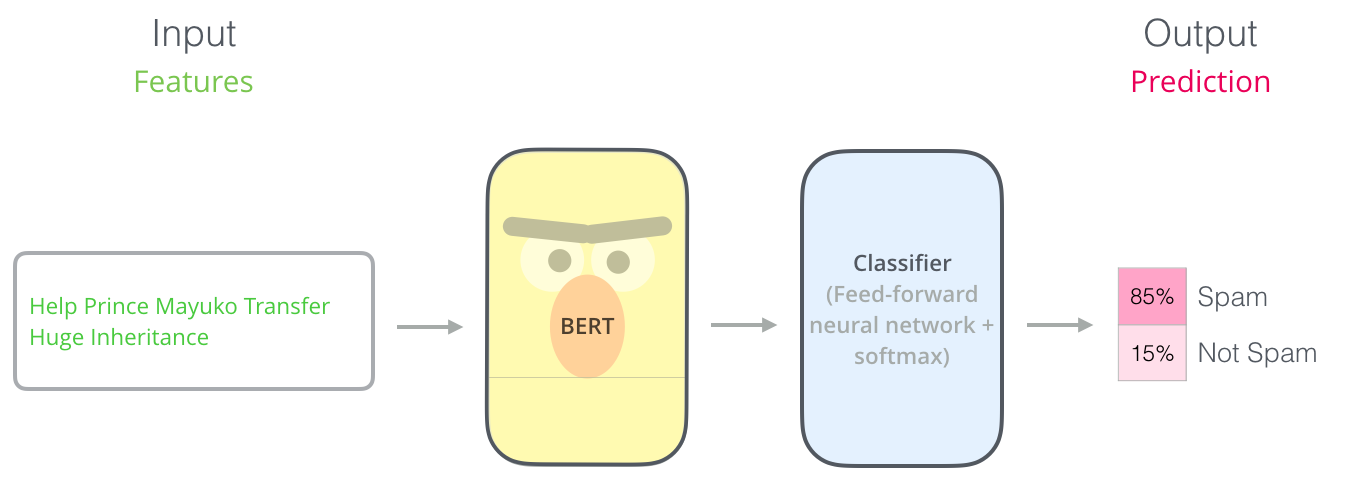

The easiest way to use BERT is to classify a single piece of text. The model will look like this –

To train such a model, you mainly train the classifier layer added on top of BERT, while the pre-trained BERT weights change only slightly during training. This training process is called fine-tuning.
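The classifier part of this setup can be sketched without any deep learning library. The example below is a deliberate simplification: it treats the encoder as completely frozen and replaces BERT's sentence-level output (the vector for the `[CLS]` token) with hypothetical 3-dimensional vectors, then trains only a linear layer with softmax on top. In real fine-tuning the BERT weights are also updated, usually with a small learning rate, and the vectors have hundreds of dimensions.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def predict_proba(weights, bias, features):
    """Class probabilities from a linear layer followed by softmax."""
    logits = [sum(w * x for w, x in zip(row, features)) + b
              for row, b in zip(weights, bias)]
    return softmax(logits)

def train_head(examples, num_classes, dim, lr=0.5, epochs=200):
    """Fit the linear head with plain gradient descent on cross-entropy loss."""
    weights = [[0.0] * dim for _ in range(num_classes)]
    bias = [0.0] * num_classes
    for _ in range(epochs):
        for features, label in examples:
            probs = predict_proba(weights, bias, features)
            for c in range(num_classes):
                # Gradient of the loss w.r.t. logit c is p_c - 1[c == label].
                grad = probs[c] - (1.0 if c == label else 0.0)
                for d in range(dim):
                    weights[c][d] -= lr * grad * features[d]
                bias[c] -= lr * grad
    return weights, bias

# Hypothetical "sentence vectors" standing in for BERT's [CLS] output.
examples = [
    ([0.9, 0.1, 0.0], 1),  # label 1: positive
    ([0.8, 0.2, 0.1], 1),
    ([0.1, 0.9, 0.2], 0),  # label 0: negative
    ([0.0, 0.8, 0.1], 0),
]
weights, bias = train_head(examples, num_classes=2, dim=3)
probs = predict_proba(weights, bias, [0.85, 0.15, 0.05])
print(max(range(2), key=lambda c: probs[c]))
```

The head is small compared with the encoder, which is why fine-tuning usually needs far less data and compute than pre-training.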

Conclusion

BERT has changed the way machines understand and process human language. Its ability to capture bidirectional context and produce deep contextualized representations has led to advances across NLP, including text classification, named entity recognition, question answering, and machine translation. As research continues, we can expect further refinements to BERT and its successors, giving machines even greater language understanding capabilities.
