As artificial intelligence (AI) and natural language processing (NLP) evolve, a model's ability to remember and use context over extended interactions becomes increasingly important, especially for applications that require complex reasoning and long-term user engagement. LangChain provides tools for managing memory across interactions.

Understanding Memory in LangChain

Memory in LangChain can be thought of as a mechanism that stores and retrieves information across multiple interactions. This allows the model to maintain context and provide more accurate and relevant responses. Memory management in LangChain is designed to handle different types of memory, including:

- Short-term Memory: Retains information for the duration of a single interaction or a limited number of interactions. Useful for maintaining context within a conversation.

- Long-term Memory: Stores information over extended periods, enabling the model to remember facts, user preferences, and past interactions across multiple sessions.

- Working Memory: A dynamic memory that handles temporary information needed for immediate processing and reasoning tasks.
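To make the distinction concrete, here is a minimal, framework-agnostic sketch of short-term and long-term memory. It does not use LangChain's own classes; `ShortTermMemory`, `LongTermMemory`, and their methods are hypothetical names chosen for illustration. Working memory would typically be a throwaway scratch structure created per request, so it is not modeled here.

```python
from collections import deque


class ShortTermMemory:
    """Hypothetical helper: keeps only the most recent `max_turns` exchanges."""

    def __init__(self, max_turns: int = 3):
        # A bounded deque silently drops the oldest turn once it is full.
        self.turns = deque(maxlen=max_turns)

    def add(self, user: str, ai: str) -> None:
        self.turns.append((user, ai))

    def context(self) -> str:
        # Render the retained turns as text that could be prepended to a prompt.
        return "\n".join(f"Human: {u}\nAI: {a}" for u, a in self.turns)


class LongTermMemory:
    """Hypothetical helper: facts that outlive a single conversation."""

    def __init__(self):
        self.facts: dict[str, dict[str, str]] = {}

    def remember(self, user_id: str, key: str, value: str) -> None:
        self.facts.setdefault(user_id, {})[key] = value

    def recall(self, user_id: str) -> dict[str, str]:
        # Return a copy so callers cannot mutate stored facts by accident.
        return dict(self.facts.get(user_id, {}))


short = ShortTermMemory(max_turns=2)
short.add("Hi", "Hello!")
short.add("I'm Ana", "Nice to meet you, Ana.")
short.add("What's the weather?", "I can't check that.")  # evicts the first turn

long_term = LongTermMemory()
long_term.remember("user-1", "name", "Ana")
```

After the third `add`, only the last two exchanges remain in `short`, while the name stored in `long_term` stays available no matter how long the conversation runs.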

Types of Memory in LangChain

LangChain supports various memory types that cater to different use cases and interaction complexities. Understanding these memory types is crucial for designing applications that effectively utilize the framework’s capabilities.

- Entity Memory: Focuses on retaining information about specific entities mentioned during interactions. This type of memory is useful for applications that need to remember details about people, places, objects, or any other specific entities.

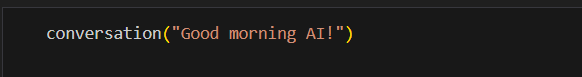

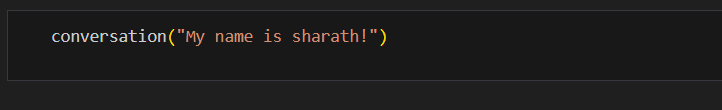

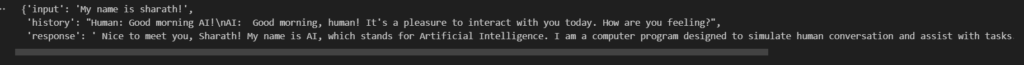

- Conversation Memory: Stores the history of a conversation, allowing the model to maintain context over multiple exchanges. This is essential for chatbots and virtual assistants that need to refer back to previous messages to provide coherent responses.

- Knowledge Memory: Retains factual information and knowledge acquired during interactions. This can include general facts, domain-specific knowledge, or user-provided information that the model needs to remember and utilize in future interactions.

- User Memory: Focuses on storing user-specific data, such as preferences, past interactions, and personalized settings. This type of memory is key to delivering personalized experiences and improving user satisfaction.

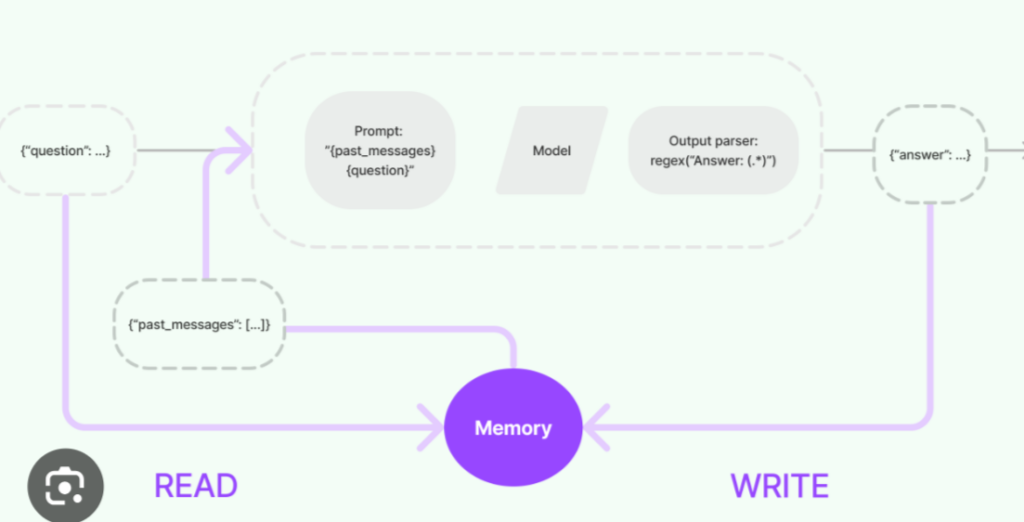
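As an illustration of the entity memory idea described above, the sketch below indexes messages by the entities they mention. It is a simplified stand-in rather than LangChain's implementation: the `EntityMemory` class is hypothetical, and entity detection is reduced to case-insensitive substring matching against a caller-supplied list, whereas a real system would likely use a model or an NER step.

```python
from collections import defaultdict


class EntityMemory:
    """Hypothetical helper: maps an entity name to the messages mentioning it."""

    def __init__(self, known_entities: list[str]):
        # Assumption: the caller supplies the entities worth tracking.
        self.known = {name.lower(): name for name in known_entities}
        self.notes: defaultdict[str, list[str]] = defaultdict(list)

    def observe(self, message: str) -> None:
        lowered = message.lower()
        for key, name in self.known.items():
            if key in lowered:  # naive substring match, for illustration only
                self.notes[name].append(message)

    def about(self, name: str) -> list[str]:
        # .get avoids creating an empty entry for unknown entities.
        return list(self.notes.get(name, []))


entities = EntityMemory(["Alice", "Berlin"])
entities.observe("Alice moved to Berlin last year.")
entities.observe("She works as a data engineer.")
entities.observe("Berlin is where the team meets.")
```

Here `entities.about("Berlin")` returns both messages that mention the city, while the pronoun-only second message is not linked to Alice, which shows the limit of such naive matching.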

Components of Memory Management in LangChain

LangChain’s memory management system is composed of several key components that work together to ensure efficient and effective memory usage:

- Memory Stores: These are storage systems where information is kept. LangChain supports various types of memory stores, such as in-memory databases, persistent storage, and external databases like Redis or MongoDB.

- Memory Providers: These are interfaces that allow the model to read from and write to memory stores. Memory providers abstract the underlying storage mechanisms, making it easy to switch between different memory stores.

- Memory Policies: These define how memory is managed, including strategies for information retention, retrieval, and forgetting. Policies can be customized based on the application’s needs, such as prioritizing recent interactions or retaining important facts indefinitely.

- Memory Handlers: These are responsible for orchestrating memory operations, such as updating the memory store with new information, retrieving relevant context, and applying memory policies.

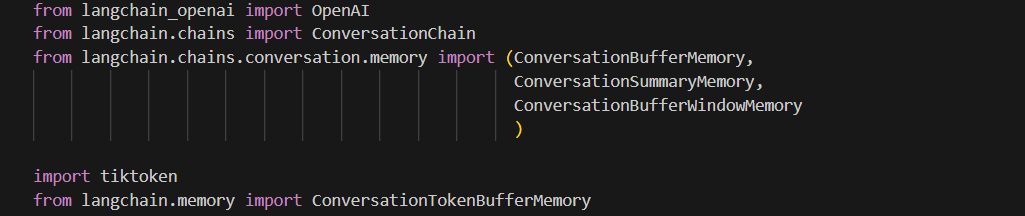

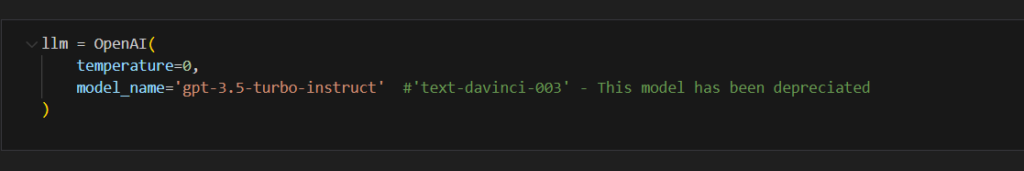

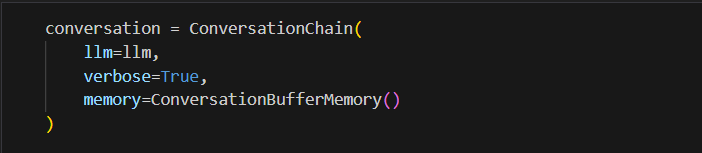

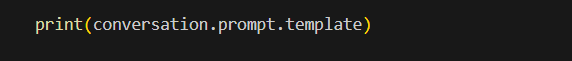
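The way these four components could fit together can be sketched in plain Python. Everything below is an assumption made for illustration: `MemoryStore`, `InMemoryStore`, `keep_recent_and_pinned`, `MemoryHandler`, and the `FACT:` prefix convention are hypothetical and are not names from LangChain's API.

```python
from typing import Callable, Protocol


class MemoryStore(Protocol):
    """Hypothetical provider interface: any backend offering read and write."""

    def read(self, session_id: str) -> list[str]: ...
    def write(self, session_id: str, items: list[str]) -> None: ...


class InMemoryStore:
    """Simplest possible store; a Redis- or MongoDB-backed class could replace it."""

    def __init__(self):
        self._data: dict[str, list[str]] = {}

    def read(self, session_id: str) -> list[str]:
        return list(self._data.get(session_id, []))

    def write(self, session_id: str, items: list[str]) -> None:
        self._data[session_id] = list(items)


def keep_recent_and_pinned(items: list[str], max_recent: int = 3) -> list[str]:
    """Policy: keep items marked 'FACT:' indefinitely, plus the newest others.

    Note that pinned facts are moved to the front of the result.
    """
    pinned = [i for i in items if i.startswith("FACT:")]
    others = [i for i in items if not i.startswith("FACT:")]
    recent = others[max(len(others) - max_recent, 0):]
    return pinned + recent


class MemoryHandler:
    """Orchestrates reads and writes and applies the policy on every update."""

    def __init__(self, store: MemoryStore, policy: Callable[[list[str]], list[str]]):
        self.store = store
        self.policy = policy

    def record(self, session_id: str, item: str) -> None:
        items = self.store.read(session_id) + [item]
        self.store.write(session_id, self.policy(items))

    def context(self, session_id: str) -> list[str]:
        return self.store.read(session_id)


handler = MemoryHandler(
    InMemoryStore(),
    lambda items: keep_recent_and_pinned(items, max_recent=2),
)
for item in ["FACT: user prefers metric units", "msg 1", "msg 2", "msg 3"]:
    handler.record("s1", item)
```

Because the handler depends only on the `read`/`write` interface, swapping the storage backend would not require changes to the policy or the handler, which is the abstraction the provider layer is meant to offer.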

Implementing Memory Management in LangChain
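At its simplest, implementing conversational memory means feeding earlier turns back into each new prompt and recording every exchange. The sketch below shows that loop under stated assumptions: it does not call LangChain, `build_prompt` and `chat` are hypothetical helpers, and `echo_model` is a placeholder standing in for a real language model call.

```python
def build_prompt(history: list[tuple[str, str]], user_input: str) -> str:
    """Prepend the stored turns to the new message so the model sees context."""
    lines = [f"{role}: {text}" for role, text in history]
    lines.append(f"Human: {user_input}")
    lines.append("AI:")
    return "\n".join(lines)


def echo_model(prompt: str) -> str:
    """Placeholder for an LLM: reports how many human messages the prompt holds."""
    human_turns = sum(
        1 for line in prompt.splitlines() if line.startswith("Human: ")
    )
    return f"I have seen {human_turns} message(s) from you."


def chat(history: list[tuple[str, str]], user_input: str, model=echo_model) -> str:
    """Run one turn: read memory, call the model, then write the exchange back."""
    prompt = build_prompt(history, user_input)
    reply = model(prompt)
    history.append(("Human", user_input))
    history.append(("AI", reply))
    return reply


history: list[tuple[str, str]] = []
first = chat(history, "My name is Ana.")
second = chat(history, "What is my name?")
```

The second reply reflects both human messages because the first exchange was written to `history` before the second prompt was built. In a real application the plain list would be replaced by one of the memory types described earlier.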

Benefits of Effective Memory Management

Implementing effective memory management in LangChain offers several benefits:

- Enhanced Contextual Understanding: By retaining relevant information, the model can provide responses that are more accurate and contextually aware.

- Personalized Interactions: Remembering user preferences and past interactions allows the model to deliver a more personalized and engaging experience.

- Improved Efficiency: Efficient memory management reduces the need for redundant data processing, leading to faster and more responsive interactions.

- Scalability: LangChain’s flexible memory management system can be scaled to handle large volumes of data and complex interaction histories, making it suitable for enterprise-level applications.

Conclusion

Memory management is a crucial aspect of building intelligent and context-aware applications using LangChain. By leveraging its robust memory stores, providers, policies, and handlers, developers can create sophisticated conversational agents that deliver enhanced user experiences. Whether you’re building a customer support chatbot, a virtual assistant, or any other AI-driven application, effective memory management in LangChain will help you achieve better performance and user satisfaction.