Prompt engineering has become an essential skill in AI and Natural Language Processing (NLP). It involves designing prompts that guide AI models, like GPT-4, to generate accurate and relevant outputs. This guide covers five key techniques in prompt engineering, with code examples to help you apply them.

Understanding Prompt Engineering

Prompt engineering is the practice of crafting prompts that elicit the desired output from AI models. This involves structuring the prompt carefully, providing context, and sometimes including examples to guide the model’s behavior.

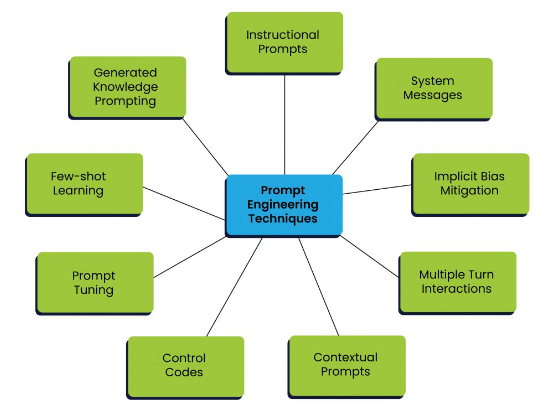

Key Techniques in Prompt Engineering

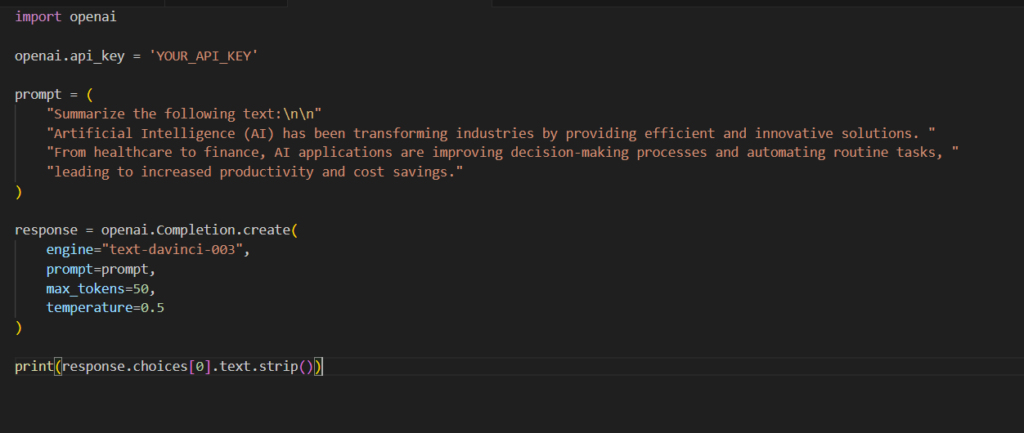

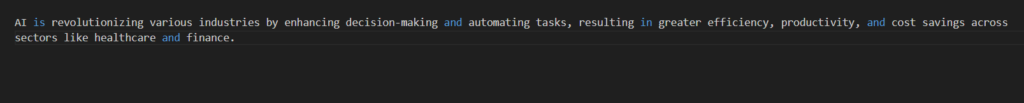

1. Instruction-Based Prompts

Instruction-based prompts are direct commands or questions that tell the model exactly what to do. These prompts are simple yet powerful for tasks that require straightforward outputs.

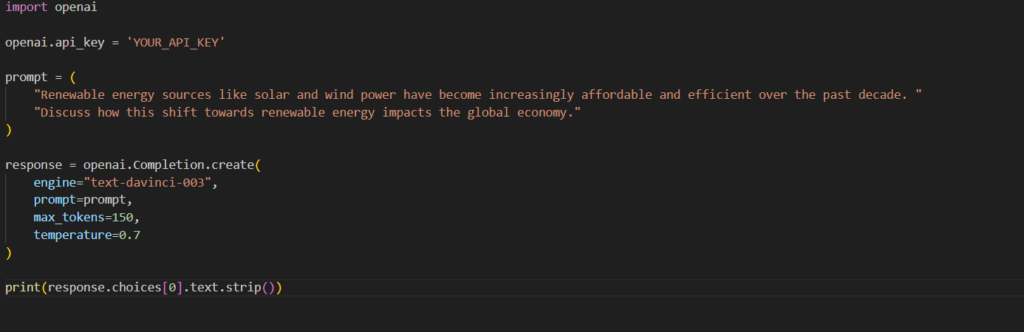

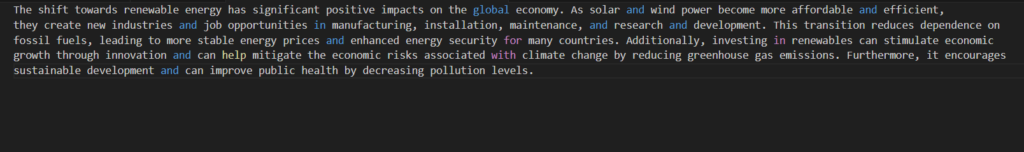
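As a rough illustration of the instruction-based pattern described above, the sketch below assembles a direct command, an optional format hint, and the text to act on into a single prompt string. The helper name `build_instruction_prompt` and its parameters are hypothetical and chosen for this example; sending the prompt to a model is left out because that step depends on the client library you use.

```python
def build_instruction_prompt(task: str, text: str, output_format: str = "") -> str:
    """Compose a direct instruction followed by the text it applies to.

    Hypothetical helper for illustration; not part of any library.
    """
    parts = [task.strip()]
    if output_format:
        # An explicit format hint narrows the range of acceptable outputs.
        parts.append(f"Format: {output_format.strip()}")
    parts.append(f"Text: {text.strip()}")
    return "\n".join(parts)


prompt = build_instruction_prompt(
    task="Summarize the following text in one sentence.",
    text="Prompt engineering is the practice of designing inputs that guide a language model.",
    output_format="a single plain-English sentence",
)
print(prompt)
```

Keeping the instruction on the first line and the input text on the last makes it easy to swap either one without touching the other.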

2. Contextual Prompts

Contextual prompts supply background information that steers the model toward more relevant and accurate responses.

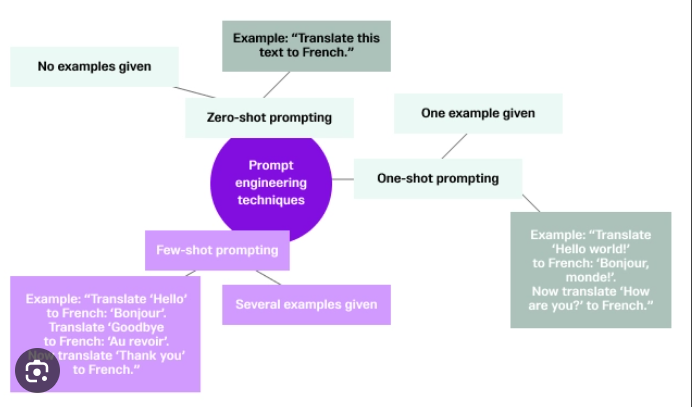
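One way to picture a contextual prompt is as a template that places the background material ahead of the question. The following is a minimal sketch under that assumption; `build_contextual_prompt` and the sample store policy are made up for illustration.

```python
def build_contextual_prompt(context: str, question: str) -> str:
    """Place background information before the question it should inform.

    Hypothetical helper for illustration; not part of any library.
    """
    return (
        "Use the context below to answer the question.\n\n"
        f"Context:\n{context.strip()}\n\n"
        f"Question: {question.strip()}\n"
        "Answer:"
    )


# Sample context invented for this example.
prompt = build_contextual_prompt(
    context="The store accepts returns within 30 days of purchase with a receipt.",
    question="Can I return an item I bought three weeks ago?",
)
print(prompt)
```

Ending the prompt with `Answer:` is a common convention that signals where the model's response should begin.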

3. Few-Shot Learning Prompts

Few-shot learning involves including a few examples of the desired input-output behavior in the prompt itself, so the model can infer the pattern and apply it to a new input.

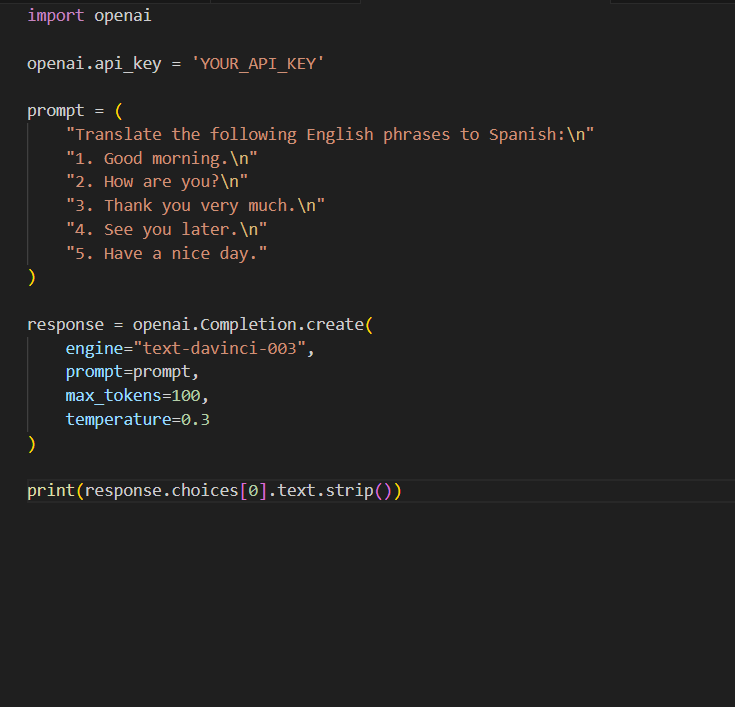

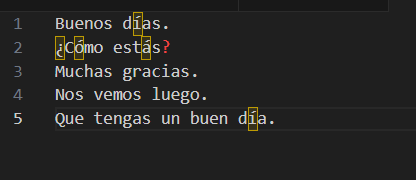

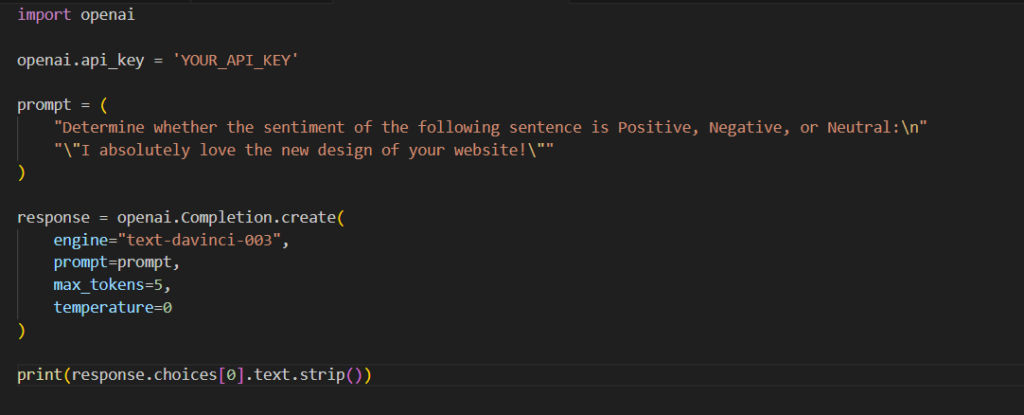
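The few-shot idea can be sketched as a function that lays out labeled example pairs and then leaves the final output slot empty for the model to fill. The function name, the `Review`/`Sentiment` labels, and the sample reviews below are assumptions made for this illustration.

```python
from typing import Sequence, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: Sequence[Tuple[str, str]],
    query: str,
    input_label: str = "Review",
    output_label: str = "Sentiment",
) -> str:
    """Format (input, output) example pairs, then the new input to complete.

    Hypothetical helper for illustration; not part of any library.
    """
    lines = [instruction.strip(), ""]
    for example_input, example_output in examples:
        lines.append(f"{input_label}: {example_input}")
        lines.append(f"{output_label}: {example_output}")
        lines.append("")  # blank line separates examples
    # The final pair is left open so the model supplies the output.
    lines.append(f"{input_label}: {query}")
    lines.append(f"{output_label}:")
    return "\n".join(lines)


# Sample reviews invented for this example.
prompt = build_few_shot_prompt(
    instruction="Classify the sentiment of each review as Positive or Negative.",
    examples=[
        ("The battery lasts all day.", "Positive"),
        ("The screen cracked within a week.", "Negative"),
    ],
    query="Setup was quick and painless.",
)
print(prompt)
```

Using the same labels for the examples and the final query keeps the pattern consistent, which is what lets the model continue it.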

4. Zero-Shot Learning Prompts

Zero-shot prompting asks the model to perform a task without any examples in the prompt, relying solely on the instruction provided.

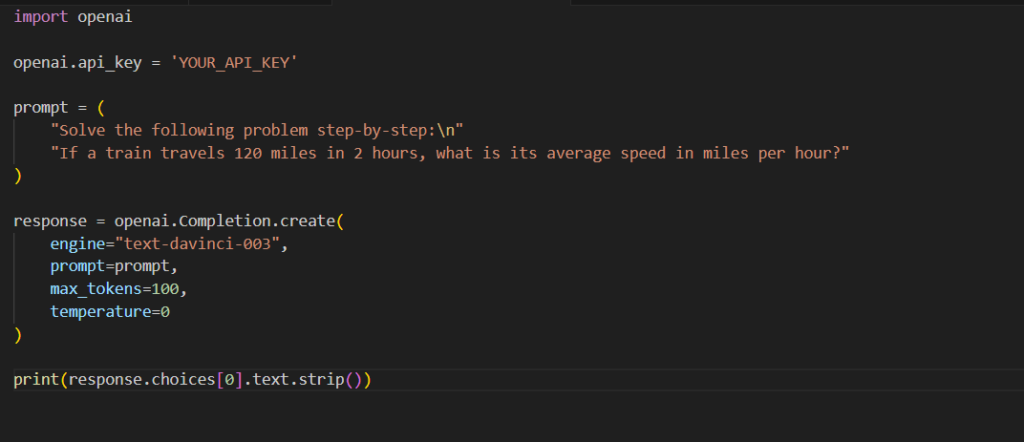

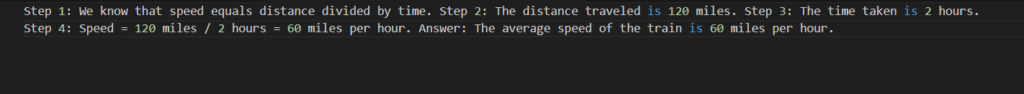
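For contrast with few-shot prompts, a zero-shot prompt can be sketched as an instruction plus the allowed outputs, with no worked examples at all. The helper `build_zero_shot_prompt` and the category names are hypothetical and used only to illustrate the shape of such a prompt.

```python
from typing import Sequence


def build_zero_shot_prompt(text: str, labels: Sequence[str]) -> str:
    """Ask for a classification using only an instruction, with no examples.

    Hypothetical helper for illustration; not part of any library.
    """
    label_list = ", ".join(labels)
    return (
        f"Classify the text into exactly one of these categories: {label_list}.\n"
        "Respond with the category name only.\n\n"
        f"Text: {text.strip()}\n"
        "Category:"
    )


# Sample text and categories invented for this example.
prompt = build_zero_shot_prompt(
    text="The central bank raised interest rates by half a point.",
    labels=["Sports", "Finance", "Technology"],
)
print(prompt)
```

Because there are no examples to imitate, listing the permitted categories and the expected response format does most of the work of constraining the output.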

5. Chain-of-Thought Prompting

Chain-of-thought prompting guides the model to reason through a problem step by step, which improves its ability to handle complex, multi-step tasks.
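A minimal sketch of chain-of-thought prompting, assuming you ask the model to show its reasoning and then mark the final result with a fixed `Answer:` prefix so it can be parsed afterwards. Both helper names are hypothetical, and `sample_response` is a hand-written stand-in for what a model might return, not real model output.

```python
from typing import Optional


def build_cot_prompt(question: str) -> str:
    """Ask for step-by-step reasoning before a clearly marked final answer.

    Hypothetical helper for illustration; not part of any library.
    """
    return (
        f"Question: {question.strip()}\n"
        "Work through the problem step by step, then give the final result "
        "on a new line that starts with 'Answer:'.\n"
        "Reasoning:"
    )


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Answer:' line, or None if absent."""
    for line in reversed(response.splitlines()):
        if line.strip().startswith("Answer:"):
            return line.split("Answer:", 1)[1].strip()
    return None


prompt = build_cot_prompt(
    "A train travels 60 km in 1.5 hours. What is its average speed?"
)
print(prompt)

# Hand-written stand-in for a model response, used only to show the parsing.
sample_response = (
    "Average speed is distance divided by time.\n"
    "60 km / 1.5 h = 40 km/h.\n"
    "Answer: 40 km/h"
)
print(extract_final_answer(sample_response))
```

Separating the reasoning from a marked final line lets downstream code use the answer while still keeping the intermediate steps available for inspection.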

Conclusion

Effective prompt engineering is essential for getting the most out of AI language models. By using the techniques covered here (instruction-based prompts, contextual prompts, few-shot and zero-shot prompts, and chain-of-thought prompting), you can guide models like GPT-4 to produce accurate, relevant, and high-quality outputs tailored to your specific needs.