As software applications become more complex, traditional API testing methods can struggle to keep up. API testing is crucial for ensuring systems communicate and work together smoothly, but it can be challenging as APIs evolve. This is where Artificial Intelligence (AI) and Prompt Engineering come in. These technologies transform API testing by making it smarter, faster, and more adaptable.

By combining traditional testing with AI, we can create a streamlined process that:

- Handles the growing complexity of APIs.

- Boosts productivity and accuracy.

- Reduces the time spent on debugging and maintenance.

In this guide, we’ll show you how AI and Prompt Engineering can improve API testing and prepare you for future challenges in test automation.

Understanding Prompt Engineering and How AI Can Optimize Testing Workflows

Prompt engineering involves crafting and optimizing prompts to maximize the effectiveness of large language models (LLMs) across various applications. By carefully structuring prompts, you can enhance model performance on tasks like question answering and reasoning while generating context-aware, realistic test inputs tailored to your testing needs.

AI and prompt engineering address challenges in traditional API testing, including test case creation, response validation, and test data management. These tasks can be time-consuming and prone to mistakes. By using AI, we can address these problems in several ways:

- Automated Test Case Creation: AI can analyze API specifications and generate test cases automatically. This reduces manual effort and broadens the range of scenarios that get tested.

- Dynamic and Realistic Data Simulation: AI can create context-specific, edge-case data, simulating real-world conditions for thorough testing.

- Intelligent Validation: AI can flag subtle issues in API responses, including discrepancies that are easy to miss in manual review.

- Scalable Testing: AI can easily handle large-scale API testing with minimal effort, running multiple test scenarios at once.

- Self-Healing Tests: AI adapts test scripts, automatically fixing issues when changes occur. For example, if an API changes, AI can detect the change and update the tests accordingly, saving time on maintenance.
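As a rough illustration of the first point, the sketch below turns an endpoint description into a structured prompt that asks a model for test cases. It only builds the prompt text and does not call any AI service. The function name `build_test_case_prompt`, the shape of the spec dictionary, and the requested answer format are assumptions made for this example, not part of any particular tool.

```python
import json


def build_test_case_prompt(endpoint_spec: dict, max_cases: int = 5) -> str:
    """Turn one endpoint description into a structured prompt for an LLM."""
    spec_text = json.dumps(endpoint_spec, indent=2, sort_keys=True)
    return (
        "You are an API test designer.\n"
        f"Propose up to {max_cases} test cases for the endpoint below.\n"
        "Cover the happy path, missing required fields, and invalid types.\n"
        "Answer with a JSON list of objects with the keys "
        '"name", "payload", and "expected_status".\n\n'
        f"Endpoint specification:\n{spec_text}"
    )


# Hypothetical endpoint description, loosely modelled on a create-user API.
create_user_spec = {
    "method": "POST",
    "path": "/users",
    "required_fields": {"name": "string", "username": "string", "email": "string"},
}

prompt = build_test_case_prompt(create_user_spec, max_cases=3)
print(prompt)
```

Stating the role, the coverage expectations, and the output format explicitly is what makes the model's reply predictable enough to parse in a later step.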

Leveraging AI and Prompt Engineering for Advanced API Test Automation with Robot Framework

In this section, we delve into an innovative approach to enhancing API test automation by leveraging real-world code examples. Our focus is to provide practical solutions for common challenges, demonstrating how Prompt Engineering and AI optimize the testing process.

The primary goals of this section are to:

- Enhance and improve existing API test flows.

- Optimize testing efficiency through the integration of AI and Prompt Engineering.

- Demonstrate the seamless incorporation of these cutting-edge methodologies into an established test framework.

Why Robot Framework?

For demonstration purposes, we have chosen the Robot Framework, a widely used and powerful open-source test automation tool. Its flexibility and scalability make it an ideal platform for this initiative. This is especially true when integrating Prompt Engineering and AI capabilities into API test flows. Below are the reasons why it is a perfect fit for this purpose:

- Extensibility for AI Integration: Test libraries can be written in Python (older releases also supported Java through Jython), enabling integration with AI frameworks for test case generation, analysis, and flow optimization.

- Keyword-Driven Testing: High abstraction and readability, allowing AI to dynamically generate or optimize test keywords.

- Seamless API Test Automation: Robust API libraries (e.g., RequestsLibrary) enable dynamic test generation, request optimization, and adaptive testing using AI.

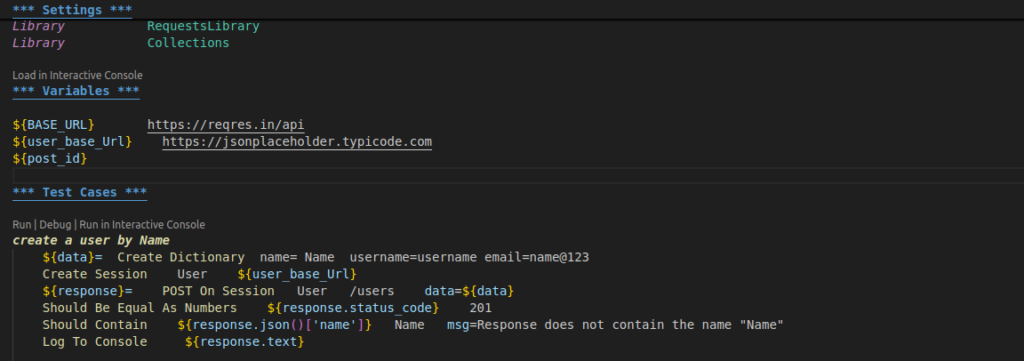

Step 1: Laying the Foundation for Writing Basic API Tests

To enhance our API test with AI and Prompt Engineering, we will first write a basic API test. This will allow everyone to understand each step of the process. Once the foundation is in place, we’ll introduce AI and Prompt Engineering to demonstrate the benefits they offer over traditional API testing.

Step 2: Integrating AI with API Testing

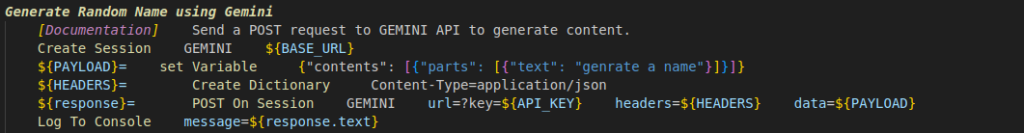

We have already implemented a basic API test that creates a user with name, username, and email as payload data. The next step is to enhance this process by integrating AI. One key objective of using AI in API testing is to generate realistic payload data instead of relying on static values, which improves the test’s authenticity and helps it simulate real-world scenarios.

For this purpose, we can leverage AI tools like Gemini AI, OpenAI, or GitHub Copilot, which are widely available and capable of generating dynamic data. These tools can generate realistic user information, such as names, usernames, and emails, for our API tests. By using AI-generated data, we ensure that the tests are not only varied but also closely resemble real-world user interactions, enhancing the quality and reliability of the testing process.
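A minimal sketch of this idea follows. It assumes the AI tool is reachable through some callable, here the hypothetical parameter `ask_model`, that takes a prompt string and returns the model's text reply. No real Gemini or OpenAI client is shown; the example run uses a stub in its place. The prompt wording and the helper names are illustrative only.

```python
import json

REQUIRED_FIELDS = ("name", "username", "email")
FENCE = "`" * 3  # Markdown code fence that models often wrap around JSON


def build_user_data_prompt() -> str:
    """Prompt that pins down the exact output format we want back."""
    return (
        "Generate one realistic user for an API test.\n"
        'Return only a JSON object with the string fields "name", '
        '"username", and "email".\n'
        "Do not add explanations or Markdown formatting."
    )


def parse_user_payload(raw_reply: str) -> dict:
    """Extract and validate the user payload from a model reply."""
    text = raw_reply.strip()
    if text.startswith(FENCE):
        # Drop the opening fence line (it may carry a language tag)
        # and the closing fence line, if present.
        lines = text.splitlines()[1:]
        if lines and lines[-1].strip() == FENCE:
            lines = lines[:-1]
        text = "\n".join(lines)
    payload = json.loads(text)
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    missing = [
        field for field in REQUIRED_FIELDS
        if not isinstance(payload.get(field), str) or not payload[field].strip()
    ]
    if missing:
        raise ValueError(f"missing or empty fields: {missing}")
    # Keep only the fields the Create User payload needs.
    return {field: payload[field] for field in REQUIRED_FIELDS}


def generate_user(ask_model) -> dict:
    """ask_model is an assumed callable: prompt text in, reply text out."""
    return parse_user_payload(ask_model(build_user_data_prompt()))


# Stub standing in for a real AI client, so the sketch runs offline.
def fake_model(prompt: str) -> str:
    return '{"name": "Ada Lovelace", "username": "ada", "email": "ada@example.com"}'


user = generate_user(fake_model)
```

Validating the reply before using it matters because model output is not guaranteed to follow the requested format on every call.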

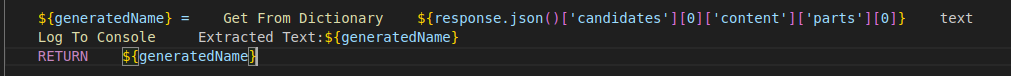

Once we’ve generated test data using tools like Gemini AI, the next step is to retrieve and incorporate this data into our test cases effectively. This process ensures seamless integration of dynamically created data into the testing workflow, enabling robust and realistic test scenarios.

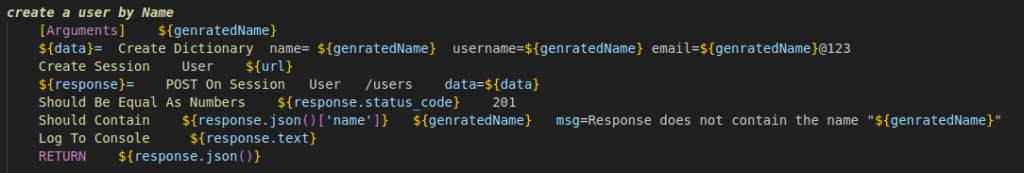

At this stage, we have the API request and payload data, dynamically retrieved at runtime from a previous API call. This approach makes the test automation process more flexible and adaptive by generating payload data on the fly based on real-time information, rather than relying on static inputs.

Key Benefits of This Approach:

- Real-World Simulation

By leveraging data from a previous API response, our tests more accurately simulate real-world scenarios. This ensures that they align closely with actual use cases and workflows.

- Reduced Maintenance Effort

With dynamic data retrieval, there’s no need to manually update test data whenever API responses change. The payload is automatically updated with the latest information, making the tests more resilient and reliable.

- Elimination of Hardcoded Payloads

Static payloads are replaced with dynamically generated data, significantly enhancing the robustness and adaptability of the testing process.

- Seamless API Chaining

This mechanism allows smooth chaining of API calls. The output of one request serves as input for the next. This mirrors real-world API interactions, improving the accuracy and efficiency of the tests.

By adopting this dynamic data-fetching mechanism, we create a more reliable and efficient testing process that adapts effortlessly to changing requirements and API behaviours.
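The chaining idea can be sketched in a few lines. The helper below copies selected fields from an earlier response into the payload for the next request. The function name `build_chained_payload`, the dotted-path convention, and the sample response are assumptions for illustration; the actual response shape depends on the API under test.

```python
def build_chained_payload(previous_response: dict, field_map: dict) -> dict:
    """Build the next request payload from an earlier response.

    field_map maps each payload key to a dotted path inside
    previous_response, for example {"email": "user.contact.email"}.
    """
    payload = {}
    for target_key, source_path in field_map.items():
        value = previous_response
        for part in source_path.split("."):
            if not isinstance(value, dict) or part not in value:
                raise KeyError(f"'{source_path}' not found in previous response")
            value = value[part]
        payload[target_key] = value
    return payload


# Hypothetical response from the data-generation call.
previous = {
    "user": {
        "name": "Ada Lovelace",
        "username": "ada",
        "contact": {"email": "ada@example.com"},
    }
}

payload = build_chained_payload(
    previous,
    {"name": "user.name", "username": "user.username", "email": "user.contact.email"},
)
```

Failing loudly when a path is missing is a deliberate choice here: a silent `None` in the payload would hide the fact that the upstream response changed.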

Step 3: Generating Tests and Assertions in Real Time

While we have successfully generated realistic data with the help of AI, the primary motivation for using AI is not simply generating data. Realistic data can also be generated using predefined functions and Python libraries in Robot Framework. The true advantage of leveraging AI lies in its ability to dynamically create test cases and assertions that validate the response of API requests in real time. This approach allows for smarter and more adaptive testing, improving both test creation and validation efficiency.
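For comparison, here is roughly what the non-AI route looks like: a small Python function that builds a plausible user from fixed name lists. The name lists, the function name `random_user`, and the `example.com` domain are placeholders chosen for this sketch.

```python
import random

FIRST_NAMES = ("Ada", "Grace", "Alan", "Linus")
LAST_NAMES = ("Lovelace", "Hopper", "Turing", "Torvalds")


def random_user(seed=None) -> dict:
    """Return a plausible user payload; pass a seed for repeatable data."""
    rng = random.Random(seed)
    first = rng.choice(FIRST_NAMES)
    last = rng.choice(LAST_NAMES)
    username = f"{first}.{last}{rng.randint(10, 99)}".lower()
    return {
        "name": f"{first} {last}",
        "username": username,
        "email": f"{username}@example.com",
    }


user = random_user(seed=42)
```

Saved as a module (say, a hypothetical `user_data.py`), the function could be imported into a suite with `Library    user_data.py` and called as the keyword `Random User`. This covers data generation well, which is why the stronger case for AI lies in generating tests and assertions.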

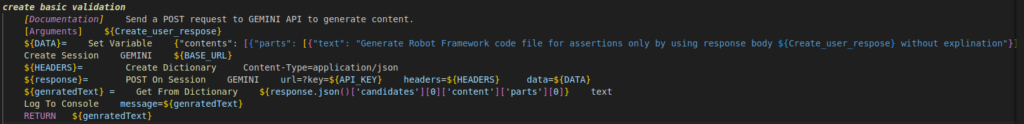

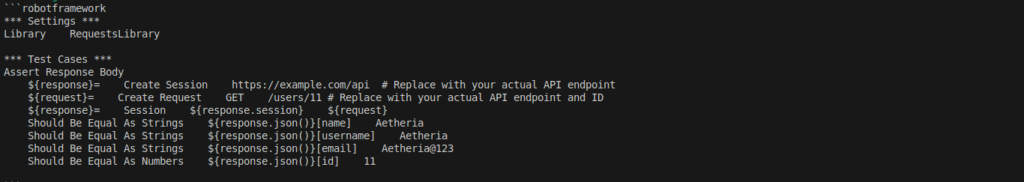

In this test, we pass the Create User API response to Gemini AI to generate basic validations in real time. The test dynamically validates the Create User API response, checking that key attributes like name, username, and ID are correctly returned. By integrating Gemini AI, the validation process becomes intelligent and adaptable, reducing the need for manual test creation and improving test coverage in real time.
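One cautious way to consume AI-generated validations is to ask the model for declarative checks and evaluate them with ordinary code, rather than executing generated code directly. The sketch below assumes the model returns a list of objects with `field`, `check`, and optionally `expected`. That format, the function name `run_generated_checks`, and the sample response are inventions for this example and not Gemini's actual output.

```python
def run_generated_checks(response: dict, checks: list) -> list:
    """Evaluate declarative checks against a response; return failure messages."""
    failures = []
    for check in checks:
        field, kind = check["field"], check["check"]
        value = response.get(field)
        if kind == "exists":
            ok = field in response
        elif kind == "equals":
            ok = value == check["expected"]
        elif kind == "type":
            ok = type(value).__name__ == check["expected"]
        else:
            ok = False  # unknown check kinds fail instead of passing silently
        if not ok:
            failures.append(f"{field}: {kind} check failed (got {value!r})")
    return failures


# Hypothetical Create User response and checks a model might propose for it.
response = {"id": 11, "name": "Ada Lovelace", "username": "ada"}
checks = [
    {"field": "id", "check": "type", "expected": "int"},
    {"field": "name", "check": "equals", "expected": "Ada Lovelace"},
    {"field": "username", "check": "exists"},
]

failures = run_generated_checks(response, checks)
```

Restricting the model to a small vocabulary of checks keeps the run deterministic and reviewable, at the cost of less expressive assertions.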

How can we create a file and save an AI-generated test?

While we have the desired output, the challenge is how to use this generated data to validate the API request at runtime. Since we are working with Robot Framework, we can use the OperatingSystem library, which lets us write the generated output to a test file, or append to it, during execution. This enables us to validate API requests using real-time generated data, making the tests more flexible and adaptable to changing inputs.

    Create File And Save Data
        # Create a new file and write data to it (overwrites if file exists)
        Create File    ${FILE_PATH}    ${genrateValidationRespose}
        # Append data to the file
        Append To File    ${FILE_PATH}    ${ADDITIONAL_DATA}

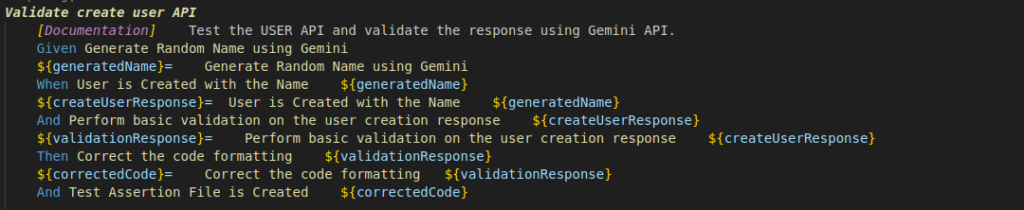

The above code creates a file at the specified location, containing the generated validation response. Additionally, Prompt Engineering can be leveraged to write tests in a high-level language, making them more human-readable. Robot Framework supports Gherkin-style BDD keywords, allowing us to write tests in a form that is both user-friendly and easily understood by the framework.

In the above code, you can see the use of GIVEN, WHEN, and THEN keywords, which help describe the test case functionality in a way that anyone can easily understand with just a single read. This approach, which aligns with Prompt Engineering, enables seamless integration of natural language prompts into the test automation process. It makes testing more intuitive while maintaining efficiency, as the use of clear, structured language allows testers to write and interpret tests more effectively.
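To make the Given/When/Then shape concrete, the sketch below renders a BDD-style test case as Robot Framework text and saves it to a file from Python. The step wording, the test name, and the helper names are hypothetical. The steps would also need matching user keywords defined elsewhere before the generated file could run.

```python
import tempfile
from pathlib import Path


def render_bdd_test(name: str, steps: list) -> str:
    """Render (prefix, keyword) pairs as a Robot Framework test case."""
    lines = ["*** Test Cases ***", name]
    for prefix, keyword in steps:
        lines.append(f"    {prefix} {keyword}")
    return "\n".join(lines) + "\n"


def save_test(path: Path, content: str, append: bool = False) -> None:
    """Write content to path, overwriting by default or appending on request."""
    mode = "a" if append else "w"
    with path.open(mode, encoding="utf-8") as handle:
        handle.write(content)


# Hypothetical steps; each needs a matching user keyword to be executable.
steps = [
    ("Given", "a new user payload is generated"),
    ("When", "the create user request is sent"),
    ("Then", "the response contains the user id"),
]
content = render_bdd_test("Create User Returns Id", steps)

with tempfile.TemporaryDirectory() as folder:
    target = Path(folder) / "generated_test.robot"
    save_test(target, content)
    save_test(target, "# appended at runtime\n", append=True)
    written = target.read_text(encoding="utf-8")

print(written)
```

The two modes of `save_test` mirror the overwrite and append behaviour of the `Create File` and `Append To File` keywords.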

Conclusion

This approach improves API test automation by reducing the need for manual test script writing and better handling the increasing complexity of modern APIs. With Prompt Engineering and AI, automated test cases can be generated using simple, human-readable language, making the testing process more intuitive and easier for non-technical stakeholders to understand.

By leveraging Prompt Engineering and AI, we can create self-healing frameworks and much more! Stay tuned for valuable insights in future posts. For more in-depth content, visit our Test Automation NashTech Blog.