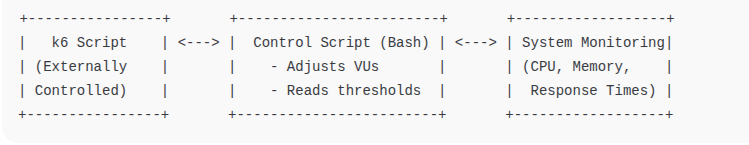

In this post, we’ll explore how to auto-scale k6 load tests using the externally-controlled executor and a monitoring script, so you can adjust the number of virtual users (VUs) during a test run based on CPU usage or other system metrics.

Why Auto-Scaling Load Tests?

In traditional performance testing, the number of virtual users (VUs) is defined up front and stays fixed for the whole test. Real-world environments are far less predictable: load can spike unexpectedly, system issues can emerge sooner than expected, and resource limits can kick in mid-run.

Auto-scaling load tests offer a smarter, more adaptive approach. By scaling the VUs during the run, your test can respond dynamically to system conditions such as error rate, latency, or CPU load.

Benefits of Auto-Scaling Load Tests

Auto-scaling load tests offer several benefits:

- Crash Prevention: Reduce load when resource thresholds are breached, so the infrastructure is not pushed into overload.

- Cost-Efficient Testing: Save resources by not generating more load than the test needs.

- Smart Load Adjustments: Automatically increase load when the system is underutilized and back off when it is struggling.

- Realistic Behavior Modeling: Simulate production-like user behavior against elastic workloads.

What is the externally-controlled executor?

The externally-controlled executor allows you to start a k6 test and control the number of VUs from an external system during runtime via a REST API. This is ideal for:

- Adaptive or self-healing tests

- CI/CD test triggers

- Auto-scaling based on monitoring
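As a rough illustration of what "controlling VUs via the REST API" looks like, here is a minimal sketch. It assumes k6 is listening on its default API address (`localhost:6565`) and uses the same `PATCH /v1/status` request shape as the monitoring script later in this post. The helper names `vus_patch_body` and `set_vus` are hypothetical and exist only for this example.

```shell
#!/bin/bash
# Assumption: k6 exposes its REST API on the default address.
K6_API="${K6_API:-http://localhost:6565}"

# Build the JSON body for a PATCH to /v1/status that sets the VU count.
vus_patch_body() {
  printf '{"data":{"type":"status","id":"default","attributes":{"vus":%d}}}' "$1"
}

# Send the change to a running test (only works while k6 is running).
set_vus() {
  curl -s -X PATCH "$K6_API/v1/status" \
    -H 'Content-Type: application/json' \
    -d "$(vus_patch_body "$1")"
}

# Print the payload that would be sent to request 30 VUs.
vus_patch_body 30; echo
```

With a test running, `set_vus 30` would request 30 VUs. The request is expected to be rejected if the value exceeds the scenario's `maxVUs`.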

How to auto-scale k6 load tests?

Below are the steps to auto-scale k6 tests using the externally-controlled executor:

Step 1: Prerequisites

Before you begin, make sure you have the following:

- k6 installed

- A basic understanding of shell scripting

- A Linux machine with curl, top, and free available (the monitoring script in Step 3 uses them)
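A quick way to confirm the environment is ready is to check that the commands used in this post are on the `PATH`. The sketch below is only an illustration: `missing_tools` is a hypothetical helper, and the tool list (`k6`, `curl`, `top`, `free`) is taken from the monitoring script shown later.

```shell
#!/bin/bash
# Print the name of every command in the argument list that is not on PATH.
missing_tools() {
  local tool
  for tool in "$@"; do
    command -v "$tool" > /dev/null 2>&1 || echo "$tool"
  done
}

MISSING=$(missing_tools k6 curl top free)
if [ -n "$MISSING" ]; then
  # Unquoted on purpose so the names print on one line.
  echo "Missing tools:" $MISSING
else
  echo "All required tools found."
fi
```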

Step 2: Create a k6 Script

Let’s start with a simple k6 test script that targets a demo endpoint and supports auto-scaling through the externally-controlled executor:

    import http from 'k6/http';

    export const options = {
      discardResponseBodies: true,
      scenarios: {
        contacts: {
          executor: 'externally-controlled',
          vus: 20,     // VUs active when the test starts
          maxVUs: 50,  // Upper bound for VUs requested at runtime
          duration: '10m',
        },
      },
    };

    export default function () {
      http.get('https://test.k6.io/contacts.php');
    }

This script targets a public test endpoint provided by k6, which is meant for safe, simple testing. What sets the externally-controlled executor apart from other executors is that the number of virtual users can be adjusted while the test is running. If the system is handling the load well, we can ramp up; if it starts to struggle, we can dial back, all in real time through the k6 REST API. This makes it ideal for tests that need to react to actual system performance.
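To confirm that a runtime adjustment took effect, you can read the test status back from the API. The sketch below is a hedged illustration: `status_field` is a hypothetical helper, and the sample JSON only approximates what `GET /v1/status` returns (compact JSON with `vus` and `vus-max` attributes is assumed). For anything beyond a quick check, a real JSON parser such as jq is the safer choice.

```shell
#!/bin/bash
# Hypothetical helper: extract a numeric attribute (e.g. "vus" or "vus-max")
# from a status JSON document passed as the second argument.
status_field() {
  local field=$1 json=$2
  printf '%s' "$json" | grep -o "\"$field\":[0-9]*" | head -n1 | cut -d: -f2
}

# Illustrative sample shaped like a status response (assumed, not captured from k6).
SAMPLE='{"data":{"type":"status","id":"default","attributes":{"paused":false,"vus":20,"vus-max":50,"stopped":false,"running":true}}}'

echo "vus=$(status_field vus "$SAMPLE") max=$(status_field vus-max "$SAMPLE")"

# Against a live test (assuming the default API address):
#   status_field vus "$(curl -s http://localhost:6565/v1/status)"
```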

Step 3: Script for monitoring & auto-scaling

Here is the bash script to increase or decrease the VUs based on CPU & memory usage:

    #!/bin/bash

    LOG_FILE="auto-scale.log"

    # Configuration
    MAX_VUS=50      # Must not exceed maxVUs in the k6 script
    MIN_VUS=0
    CURRENT_VUS=20  # Start with the same value as vus in your k6 script
    CPU_UPPER_THRESHOLD=40
    CPU_LOWER_THRESHOLD=35
    MEM_UPPER_THRESHOLD=35
    MEM_LOWER_THRESHOLD=25
    K6_CONTROL_API="http://localhost:6565/v1/status"

    # Print a timestamped message and append it to the log file
    log() {
      echo "[$(date '+%Y-%m-%d %H:%M:%S')] $1" | tee -a "$LOG_FILE"
    }

    # Start k6 in the background
    k6 run --address localhost:6565 ./k6_tests/test.js &
    K6_PID=$!
    log "Started k6 (PID $K6_PID)"

    # Function to get CPU usage (100 minus the idle percentage reported by top)
    get_cpu_usage() {
      top -bn2 | grep "Cpu(s)" | tail -n1 | awk '{print 100 - $8}' | awk '{printf "%.0f", $1}'
    }

    # Function to get memory usage (used / total, as a percentage)
    get_mem_usage() {
      free | awk '/Mem:/ { printf "%.0f", ($3/$2)*100 }'
    }

    # Function to pause → update VUs → resume
    scale_vus() {
      local vus=$1

      log "Pausing test before updating VUs..."
      # Pause
      curl -s -X PATCH "$K6_CONTROL_API" \
        -H 'Content-Type: application/json' \
        -d '{"data": {"attributes": {"paused": true}, "id": "default", "type": "status"}}' > /dev/null
      sleep 1

      log "Updating VUs to $vus..."
      # Update VUs
      curl -s -X PATCH "$K6_CONTROL_API" \
        -H 'Content-Type: application/json' \
        -d "{\"data\": {\"attributes\": {\"vus\": $vus}, \"id\": \"default\", \"type\": \"status\"}}" > /dev/null
      sleep 1

      log "Resuming test..."
      # Resume
      curl -s -X PATCH "$K6_CONTROL_API" \
        -H 'Content-Type: application/json' \
        -d '{"data": {"attributes": {"paused": false}, "id": "default", "type": "status"}}' > /dev/null

      log "Sent VU update to $vus"
    }

    # Main monitoring loop
    while kill -0 "$K6_PID" 2>/dev/null; do
      CPU_USAGE=$(get_cpu_usage)
      MEM_USAGE=$(get_mem_usage)
      log "CPU: $CPU_USAGE% | MEM: $MEM_USAGE% | VUs: $CURRENT_VUS"

      # Scale down when either metric is above its upper threshold
      if { [ "$CPU_USAGE" -gt "$CPU_UPPER_THRESHOLD" ] || [ "$MEM_USAGE" -gt "$MEM_UPPER_THRESHOLD" ]; } && [ "$CURRENT_VUS" -gt "$MIN_VUS" ]; then
        CURRENT_VUS=$((CURRENT_VUS - 5))
        [ "$CURRENT_VUS" -lt "$MIN_VUS" ] && CURRENT_VUS=$MIN_VUS
        log "[SCALE-DOWN] - CPU or MEM high → Decreasing VUs to $CURRENT_VUS"
        scale_vus "$CURRENT_VUS"
      # Scale up only when both metrics are below their lower thresholds
      elif [ "$CPU_USAGE" -lt "$CPU_LOWER_THRESHOLD" ] && [ "$MEM_USAGE" -lt "$MEM_LOWER_THRESHOLD" ] && [ "$CURRENT_VUS" -lt "$MAX_VUS" ]; then
        CURRENT_VUS=$((CURRENT_VUS + 5))
        [ "$CURRENT_VUS" -gt "$MAX_VUS" ] && CURRENT_VUS=$MAX_VUS
        log "[SCALE-UP] - CPU and MEM low → Increasing VUs to $CURRENT_VUS"
        scale_vus "$CURRENT_VUS"
      else
        log "INFO - No scaling action taken."
      fi

      sleep 5
    done

    log "k6 test completed."

The above Bash script runs the k6 test in externally-controlled mode and monitors CPU and memory usage every 5 seconds. In summary, it:

- Starts the k6 test and captures its process ID.

- Monitors system CPU and memory usage using `top` and `free`.

- Decreases the VUs (down to a defined minimum) when CPU or memory usage exceeds its upper threshold, in line with the goal of reducing load when the system is under pressure.

- Increases the VUs (up to a defined maximum) when both CPU and memory usage drop below their lower thresholds.

- Uses the k6 REST API to:

  - Pause the test

  - Update the number of VUs

  - Resume the test

- Logs all actions to auto-scale.log for traceability.
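The scaling decision can also be written as a small pure function, which makes it easy to try against sample readings without running k6. This is a minimal sketch under stated assumptions, not the post's script. It follows the goal stated earlier (reduce load when thresholds are breached, increase it when the system is underutilized), reuses the threshold values from the monitoring script, and caps VUs at the `maxVUs: 50` from the k6 script. The name `next_vus` and the `STEP` variable are hypothetical.

```shell
#!/bin/bash
# Thresholds mirror the monitoring script; MAX_VUS matches maxVUs in the k6 script.
CPU_UPPER=40; CPU_LOWER=35
MEM_UPPER=35; MEM_LOWER=25
MIN_VUS=0; MAX_VUS=50; STEP=5

# next_vus CPU MEM CURRENT -> prints the VU count to apply next.
next_vus() {
  local cpu=$1 mem=$2 current=$3 target=$3
  if [ "$cpu" -gt "$CPU_UPPER" ] || [ "$mem" -gt "$MEM_UPPER" ]; then
    target=$((current - STEP))   # Either metric under pressure: back off
  elif [ "$cpu" -lt "$CPU_LOWER" ] && [ "$mem" -lt "$MEM_LOWER" ]; then
    target=$((current + STEP))   # Headroom on both metrics: ramp up
  fi
  # Clamp the result to the allowed range.
  [ "$target" -lt "$MIN_VUS" ] && target=$MIN_VUS
  [ "$target" -gt "$MAX_VUS" ] && target=$MAX_VUS
  echo "$target"
}

next_vus 50 30 20   # CPU above the upper threshold
next_vus 20 10 20   # Both metrics below the lower thresholds
next_vus 37 20 20   # CPU between the thresholds: no change
```

The gap between the lower and upper thresholds acts as a dead band: readings that fall inside it leave the VU count unchanged, which helps avoid flapping between scale-up and scale-down on every poll.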

Conclusion

Using the externally-controlled executor makes k6 load tests more realistic and more flexible. Instead of depending on fixed VU patterns, you can react adaptively to how the system behaves, increasing the load when your application performs well and reducing it when resources come under pressure.

This method reflects actual traffic trends more closely and helps avoid overloading the system. It also makes better use of resources and uncovers performance limits in a controlled, responsive way.

Whether you are verifying auto-scaling infrastructure or stress testing a production-like environment, this approach is a valuable addition to your performance testing toolkit.