Introduction

Searching for a substring inside a larger text might sound like a basic programming task. After all, most languages provide built-in functions like indexOf() or contains(). But behind those simple methods lies a deep and fascinating area of algorithm design. When the text becomes massive – think log files, DNA sequences, search engine indexes, or streaming data – a simple brute-force search quickly becomes inefficient.

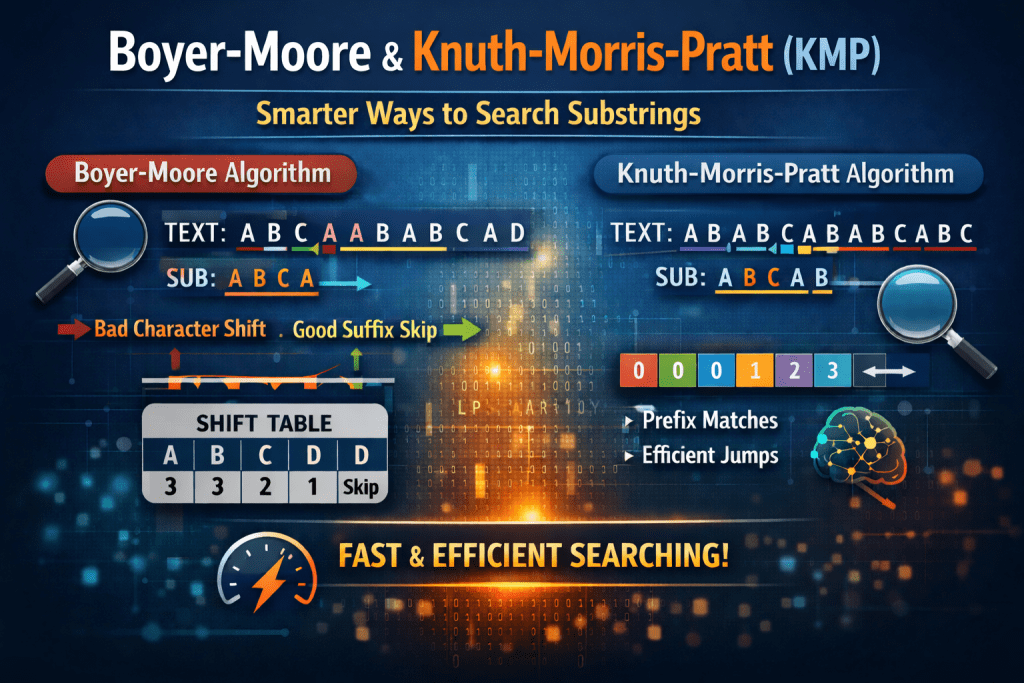

This is where classic string matching algorithms like Knuth-Morris-Pratt (KMP) and Boyer-Moore come into play. These algorithms were designed to solve the substring searching problem efficiently by reducing unnecessary comparisons. They are not just theoretical concepts taught in classrooms – they are practical tools that influence real-world systems even today.

Lets understands what makes them powerful.

The Substring searching problem

At its core, substring searching asks a simple question:

Given a large text and a smaller pattern, where does the pattern occur in the text?The most straightforward approach compares the pattern with the text one character at a time and shifts by one position whenever there is a mismatch. While this works, its worst-case time complexity can reach O(n x m), where n is the length of the text and m is the length of the pattern.

For small inputs, that’s acceptable. For large-scale systems processing gigabytes of data, it’s not.

Knuth-Morris-Pratt (KMP): avoiding rework

KMP improves efficiency by ensuring we never compare the same characters twice unnecessarily. The key idea is simple but clever: when a mismatch occurs, instead of starting over from the beginning of the pattern, we use information about the pattern itself to decide where to resume.

It does this by preprocessing the pattern and building something called the LPS( Longest prefix which is also suffix) array. This array helps determine how much we can safely skip when a mismatch happens.

In other words, KMP remembers partial matches and uses that memory to avoid redundant comparisons.

The result?

A guaranteed time complexity of O(n+m).

This makes KMP predictable and reliable, especially in systems where worst-case performance matters.

Boyer-Moore: skipping smartly

while KMP works from left to right, Boyer-Moore uses two powerful heuristics:

- Bad Character Rule – skip ahead based on the mismatched character.

- Good Suffix Rule- Re-align the pattern based on matched suffixes.

Instead of shifting by just one position, Boyer-Moore often jumps several characters forward. In many real-world cases, especially with large alphabets like English text, this makes it extremely fast – sometimes even faster than KMP.

Although its worst-case complexity can degrade, in practice, Boyer-Moore is often the preferred choice for text-heavy applications.

KMP vs Boyer-Moore: What’s the Difference?

KMP guarantees linear time and is methodical in its approach. It preprocesses the pattern and ensures no character is compared more than necessary.

Boyer-Moore, on the other hand, is opportunistic. It tries to skip as much text as possible using smart heuristics. In average cases, it often performs fewer comparisons than KMP.

If you need guaranteed performances in all cases, KMP is safe. If you want faster average performance for large text searches, Boyer-Moore is usually better.

Where are these algorithms used?

These algorithms are used in:

- Text editors (find functionality)

- Search engines

- Bioinformatics (DNA matching)

- Log processing systems

- Network intrusion detection

- Data indexing systems

Even if modern libraries abstract these implementations, understanding them helps engineers design better indexing and search systems.

Conclusion

Substring searching is a fundamental problem in computer science, but solving it efficiently requires more than brute force. KMP and Boyer-Moore demonstrate how intelligent preprocessing and smart skipping can drastically improve performance.

For more tech-related blogs, you can visit Nashtech Blogs