What is the Great Expectations Tool?

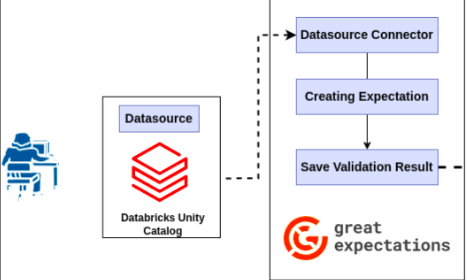

Great Expectations is an open-source tool for managing data quality. It validates data against checks such as accuracy, completeness, and uniqueness, so that the data can then be used reliably for business purposes.

Next, we discuss how to connect to Databricks Unity Catalog and validate its data with Great Expectations.

Steps for Data Quality Check

Here we check the quality of data in Databricks Unity Catalog using a locally installed Great Expectations library.

Step 1: Install the necessary dependency for Great Expectations

- pip install great_expectations

Step 2: Open an IDE, such as IntelliJ IDEA or Visual Studio Code

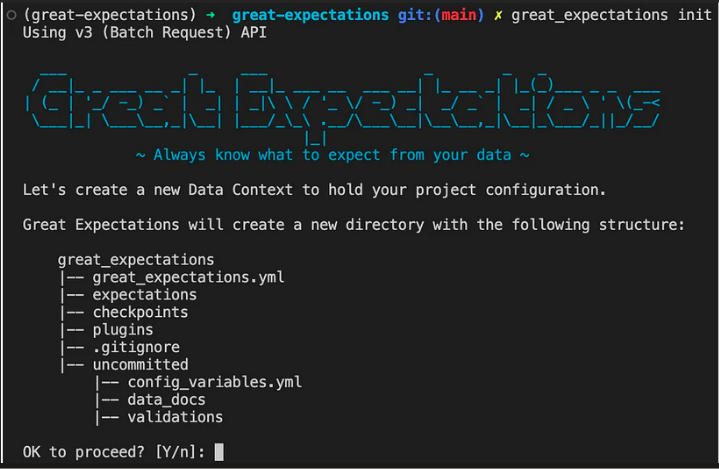

Step 3: Configure Data Context: Run the command below to generate the files and directories that Great Expectations needs, such as great_expectations.yml and the checkpoints and expectations directories.

Command: great_expectations init

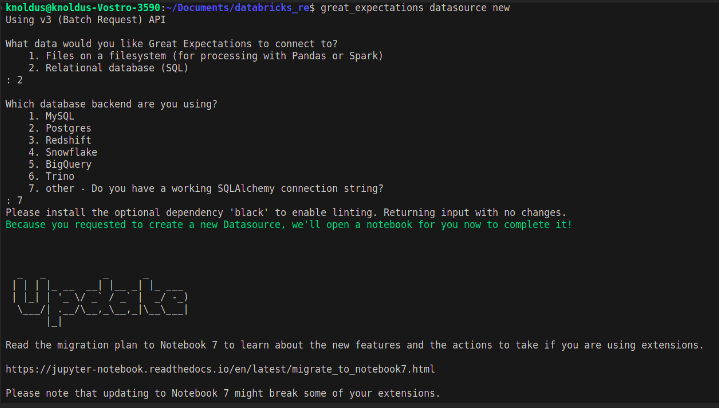

Step 4: Connect to Data Source: Run the command below, then select the "other" option to set up the Databricks connection.

Command: great_expectations datasource new

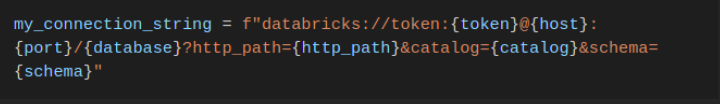

Step 5: A Jupyter notebook will now open. Inside that notebook, fill in the Databricks connection configuration. The port is not required in the connection string, so you can remove it.

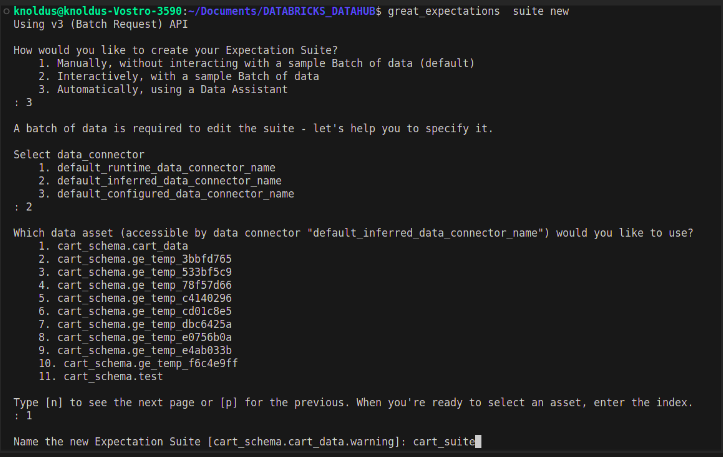
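As a rough illustration of the connection string configured in that notebook, the sketch below assembles one with and without the port. The `databricks://token:...@host?http_path=...&catalog=...&schema=...` layout is an assumption based on the SQLAlchemy-style URL used by the Databricks SQL connector; the function name and every value shown are hypothetical placeholders, so compare the result with the template in your own notebook.

```python
from urllib.parse import quote, urlencode


def build_databricks_url(token, host, http_path, catalog, schema, port=None):
    """Build a SQLAlchemy-style Databricks connection string (assumed layout).

    The port is optional: when it is None, it is left out of the string.
    """
    # Append the port only when one is supplied.
    netloc = host if port is None else f"{host}:{port}"
    # Keep "/" readable in http_path; other special characters are escaped.
    query = urlencode(
        {"http_path": http_path, "catalog": catalog, "schema": schema},
        safe="/",
    )
    # Escape the token so special characters cannot break the URL.
    return f"databricks://token:{quote(token, safe='')}@{netloc}?{query}"
```

For example, `build_databricks_url("my-token", "my-workspace.example.com", "/sql/1.0/warehouses/abc", "main", "default")` yields a string without a port. Avoid hard-coding a real access token in the notebook; reading it from an environment variable is usually safer.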

Step 6: Create Expectation Suite: Run the command below, select the data asset, and then write the expectation suite for the data.

Command: great_expectations suite new

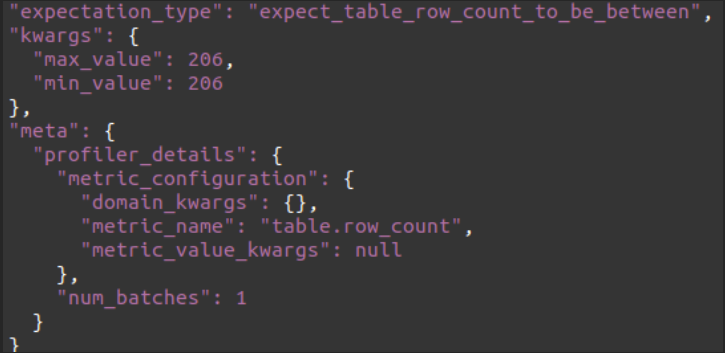

Expectation Example:
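A minimal sketch of what such expectations might look like inside the suite notebook. It assumes a `validator` object like the one the generated notebook creates; the function name, the column names `id` and `amount`, and the value bounds are hypothetical and should be replaced with ones that match your table.

```python
def add_basic_expectations(validator, id_column="id", amount_column="amount"):
    """Attach three common checks to a Great Expectations validator.

    `validator` is assumed to come from the suite notebook; the column
    names and bounds are illustrative placeholders.
    """
    # Completeness: the key column has no missing values.
    validator.expect_column_values_to_not_be_null(column=id_column)
    # Uniqueness: the key column has no duplicate values.
    validator.expect_column_values_to_be_unique(column=id_column)
    # Accuracy: amounts fall inside a plausible range.
    validator.expect_column_values_to_be_between(
        column=amount_column, min_value=0, max_value=1_000_000
    )
    # Save the suite so that a checkpoint can use it later.
    validator.save_expectation_suite(discard_failed_expectations=False)
```

In the notebook this would be called as `add_basic_expectations(validator)` after the cell that creates the validator.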

Step 7: Create Checkpoint: Run the command below to create a checkpoint, which contains all the configuration needed to run the validation.

Command: great_expectations checkpoint new checkpoint_name

Step 8: Run the checkpoint

Command: great_expectations checkpoint run checkpoint_name

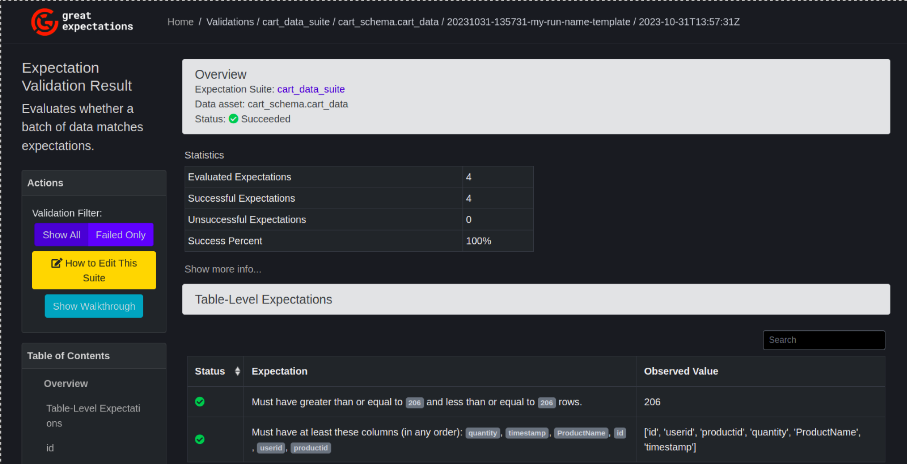
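The same checkpoint can also be triggered from Python, which may be convenient in a scheduled job. The sketch below assumes a legacy 0.x release of Great Expectations (the line of releases that ships the CLI used in this post), where the data context exposes `run_checkpoint` and the result can be read with `result["success"]`. The helper name `run_checkpoint_or_fail` is hypothetical.

```python
def run_checkpoint_or_fail(context, checkpoint_name):
    """Run a checkpoint and raise if any expectation fails.

    `context` is assumed to be a Great Expectations data context
    (0.x API) for the project created in Step 3.
    """
    result = context.run_checkpoint(checkpoint_name=checkpoint_name)
    # The result reports overall success across all validations.
    if not result["success"]:
        raise RuntimeError(f"Checkpoint '{checkpoint_name}' failed validation")
    return result


# Usage inside the project directory (requires great_expectations):
#   import great_expectations as gx
#   context = gx.get_context()
#   run_checkpoint_or_fail(context, "checkpoint_name")
```

Raising an exception on failure lets a scheduler or CI job mark the run as failed instead of silently continuing.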

Great Expectations Dashboard

To view the data quality dashboard, go to the directory below and open the HTML file:

\uncommitted\data_docs\local_site\index.html
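If you prefer to open the dashboard from a script, a small sketch using only the Python standard library is shown below. It assumes you pass the Great Expectations project directory (the folder that holds great_expectations.yml); the function names are hypothetical.

```python
import webbrowser
from pathlib import Path


def data_docs_index(ge_dir):
    """Return the path of the Data Docs index page under the project directory."""
    return Path(ge_dir) / "uncommitted" / "data_docs" / "local_site" / "index.html"


def open_data_docs(ge_dir):
    """Open the Data Docs index page in the default browser."""
    index = data_docs_index(ge_dir).resolve()
    # The page only exists after a checkpoint run has built the docs.
    if not index.exists():
        raise FileNotFoundError(f"Data Docs not found at {index}")
    webbrowser.open(index.as_uri())
    return index
```

Using `pathlib` keeps the path portable, so the same code works with Windows backslashes and with forward slashes on Linux or macOS.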

Conclusion

Using Great Expectations with Databricks Unity Catalog lets you run repeatable data quality checks on your data. By defining expectations, creating checkpoints, and reviewing the results in the dashboard, you can catch data issues early and support informed business decisions.