AI-driven software testing is becoming a key part of modern Quality Assurance (QA). Many testing teams now use AI tools to generate test cases, create automation scripts, analyze bugs, and improve test coverage. However, the success of AI in software testing depends largely on how well we write our prompts.

In this guide, we will learn what prompt engineering is, why it matters in AI testing, and how to write effective prompts step by step, with detailed explanations and practical examples.

1. What Is Prompt Engineering in AI-Driven Software Testing?

Prompt engineering is the process of writing clear, structured, and detailed instructions for AI tools so they can produce accurate and useful testing outputs.

In software testing, prompts are used to:

- Generate functional, regression, and edge-case test scenarios

- Create automation scripts using tools like Selenium, Playwright, or Cypress

- Analyze defect reports and identify root causes

- Suggest test strategies for performance, security, and usability testing

A well-written prompt works like a clear test requirement document for AI.

2. Why Writing Effective Prompts Is Important in AI Testing

AI tools do not have real-world understanding of our application unless we provide it. Poor prompts often result in incomplete or incorrect test results, which can waste time instead of saving it.

Effective prompts help us:

- Improve test accuracy by clearly defining expectations

- Increase test coverage by guiding AI to consider edge cases

- Reduce rework by minimizing irrelevant outputs

- Save time by getting usable results on the first attempt

- Maintain consistency across test artifacts

Think of AI as a new QA team member—the better our instructions, the better the outcome.

3. Key Principles for Writing Effective Prompts for AI Testing

Below are the most important principles, explained in detail, to help us write high-quality prompts for AI-driven software testing.

3.1. Be Clear and Specific in Our Prompts

Clarity is the most important rule when writing AI prompts. Avoid vague or overly terse instructions that leave room for interpretation.

When writing a prompt, always clearly mention:

- What feature needs to be tested

- What kind of output we expect

- What aspects of testing should be covered

Example:

❌ Poor prompt:

“Test the login page”

This prompt is unclear. It does not specify test type, application type, or output format.

✅ Improved prompt:

The second prompt gives enough detail for AI to generate meaningful test cases.
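As a hedged illustration (not the original example from this guide), the sketch below assembles a specific prompt from the three elements listed above: the feature, the aspects to cover, and the expected output. The function name `build_prompt`, its parameters, and the sample values are all hypothetical.

```python
def build_prompt(feature: str, app_type: str, coverage: list[str], output_format: str) -> str:
    """Assemble a specific test-generation prompt from its required parts."""
    for name, value in [("feature", feature), ("app_type", app_type), ("output_format", output_format)]:
        if not value.strip():
            raise ValueError(f"{name} must not be empty")
    if not coverage:
        raise ValueError("coverage must list at least one aspect to test")
    aspects = ", ".join(coverage)
    return (
        f"Generate test cases for the {feature} of a {app_type}.\n"
        f"Cover: {aspects}.\n"
        f"Return the result as {output_format}."
    )

# Sample values are made up for illustration.
login_prompt = build_prompt(
    feature="login page",
    app_type="web application",
    coverage=["valid credentials", "invalid credentials", "empty fields"],
    output_format="a table with columns ID, Steps, Expected Result",
)
print(login_prompt)
```

Rejecting empty parts up front is one possible way to keep a vague request like "Test the login page" from reaching the AI tool at all.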

3.2. Provide Complete Application Context

AI does not automatically know how the application works. Providing context ensures that generated test cases match the real system behavior.

We should include details such as:

- Application type (web, mobile, desktop, API)

- Business domain (e-commerce, banking, healthcare, education)

- User roles (admin, registered user, guest)

- Important workflows or business rules

With context, AI generates more realistic and relevant test scenarios.
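One possible way to keep context consistent across prompts is to store it in a small structure and render it at the top of every request. The sketch below assumes a hypothetical `AppContext` class; the field names and the sample business rule are illustrative only.

```python
from dataclasses import dataclass, field

@dataclass
class AppContext:
    """Application details a prompt should carry (all values are examples)."""
    app_type: str
    domain: str
    user_roles: list[str] = field(default_factory=list)
    business_rules: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Format the context as plain text that can be prepended to a prompt."""
        lines = [
            f"Application type: {self.app_type}",
            f"Business domain: {self.domain}",
            f"User roles: {', '.join(self.user_roles) or 'not specified'}",
        ]
        if self.business_rules:
            lines.append("Business rules:")
            lines.extend(f"- {rule}" for rule in self.business_rules)
        return "\n".join(lines)

context = AppContext(
    app_type="web",
    domain="e-commerce",
    user_roles=["admin", "registered user", "guest"],
    business_rules=["Guests cannot save a cart between sessions"],
)
prompt = context.render() + "\n\nGenerate functional test cases for the checkout workflow."
print(prompt)
```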

3.3. Clearly Define the Type of Software Testing Required

AI tools can assist with many types of testing, but only if we specify which one we need.

Common testing types include:

- Functional testing to verify features work correctly

- Regression testing to ensure changes do not break existing functionality

- Security testing to identify vulnerabilities

- Performance testing to measure system behavior under load

- Accessibility testing to ensure usability for all users

Example:

This prevents AI from generating unrelated test scenarios.

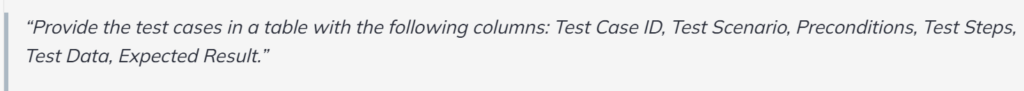

3.4. Specify the Output Format in Detail

Without a defined format, AI may return information that is difficult to use or inconsistent.

Always specify:

- Structure (table, list, JSON, Gherkin)

- Required columns or fields

- Naming conventions if needed

Example:

This makes the output ready for test management tools.
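When the requested structure is JSON, the same field list can serve two purposes: describing the format in the prompt and checking the reply afterwards. This is a minimal sketch under that assumption; the field names in `REQUIRED_FIELDS` and the sample reply are invented for illustration and do not come from any real tool.

```python
import json

REQUIRED_FIELDS = ["id", "title", "steps", "expected_result"]  # hypothetical field names

def format_instruction(fields: list[str]) -> str:
    """Describe the expected output structure for the prompt."""
    return (
        "Return only a JSON array. Each element must be an object with exactly "
        f"these keys: {', '.join(fields)}."
    )

def missing_fields(response_text: str, fields: list[str]) -> dict[int, list[str]]:
    """Map the index of each incomplete test case to the keys it lacks."""
    cases = json.loads(response_text)
    problems = {}
    for index, case in enumerate(cases):
        absent = [name for name in fields if name not in case]
        if absent:
            problems[index] = absent
    return problems

# A made-up reply standing in for real model output.
sample_reply = (
    '[{"id": "TC-1", "title": "Valid login", "steps": ["Open page"], '
    '"expected_result": "Dashboard shown"}, '
    '{"id": "TC-2", "title": "Empty password"}]'
)
print(format_instruction(REQUIRED_FIELDS))
print(missing_fields(sample_reply, REQUIRED_FIELDS))  # {1: ['steps', 'expected_result']}
```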

3.5. Break Down Complex Testing Requests

Large and complex prompts often confuse AI and lead to partial or incorrect results.

Instead of asking for everything at once:

- Divide tasks into smaller, focused prompts

- Handle one testing activity per prompt

Example approach:

- Generate functional test cases

- Convert selected test cases into automation scripts

- Create regression test scenarios

- Identify edge and negative cases

This step-by-step approach improves accuracy and clarity.
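The four activities above can be sketched as a sequence of focused prompts, where later steps reuse earlier output. The `ask` parameter is a placeholder for whatever function sends a prompt to your AI tool; `fake_ask` below is a stand-in so the example runs without any service.

```python
from typing import Callable

def run_pipeline(feature: str, ask: Callable[[str], str]) -> dict[str, str]:
    """Send one focused prompt per testing activity, reusing earlier output."""
    results = {}
    results["functional"] = ask(f"Generate functional test cases for {feature}.")
    results["automation"] = ask(
        "Convert these test cases into automation scripts:\n" + results["functional"]
    )
    results["regression"] = ask(f"Create regression test scenarios for {feature}.")
    results["edge"] = ask(
        "Identify edge and negative cases not covered by:\n" + results["functional"]
    )
    return results

# Stand-in for a real model call; it only echoes the first line of the prompt.
def fake_ask(prompt: str) -> str:
    return "[model reply to: " + prompt.splitlines()[0] + "]"

outputs = run_pipeline("the login page", fake_ask)
for step, reply in outputs.items():
    print(step, "->", reply)
```

In practice a reviewer would usually inspect each step's output before passing it on, which is why the steps are kept as separate calls rather than one combined request.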

3.6. Use Examples to Guide AI Behavior

Examples act as a reference and help AI understand the expected output style and depth.

By showing a sample test case, we reduce inconsistencies and formatting issues.

Example:

This helps AI replicate the preferred format correctly.
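A minimal sketch of this idea, assuming a plain-text test case layout: the sample test case is placed before the task so the AI can mirror its fields and depth. `SAMPLE_TEST_CASE` and `few_shot_prompt` are hypothetical names, and the sample content is invented.

```python
SAMPLE_TEST_CASE = """\
ID: TC-001
Title: Login with valid credentials
Steps:
  1. Open the login page
  2. Enter a valid username and password
  3. Click "Sign in"
Expected result: The user lands on the dashboard"""

def few_shot_prompt(task: str, example: str) -> str:
    """Place a reference example before the task so the model can mirror it."""
    return (
        "Use exactly the same fields and level of detail as this example:\n\n"
        f"{example}\n\n"
        f"Task: {task}"
    )

prompt = few_shot_prompt("Write five test cases for password reset.", SAMPLE_TEST_CASE)
print(prompt)
```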

3.7. Define Constraints and Assumptions Clearly

Constraints limit the scope of AI output and prevent incorrect assumptions.

We can specify:

- What should be included

- What should be excluded

- Assumptions about system state

Example:

This keeps AI aligned with our actual test environment.
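The three kinds of constraints listed above could be appended to a task as labeled sections, as in the sketch below. The helper `with_constraints`, the section headings, and the checkout scenario are assumptions made for illustration.

```python
def with_constraints(task: str, include: list[str], exclude: list[str], assumptions: list[str]) -> str:
    """Append scope limits and assumed system state to a task description."""
    sections = [task]
    for heading, items in [
        ("In scope", include),
        ("Out of scope", exclude),
        ("Assume", assumptions),
    ]:
        if items:  # skip empty sections so the prompt stays compact
            sections.append(heading + ":\n" + "\n".join(f"- {item}" for item in items))
    return "\n\n".join(sections)

prompt = with_constraints(
    task="Generate test cases for the checkout page.",
    include=["credit card payments"],
    exclude=["third-party wallet payments"],
    assumptions=["the user is already logged in", "the cart contains one item"],
)
print(prompt)
```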

3.8. Review, Improve, and Refine Prompts Iteratively

Prompt writing is an iterative process, just like test design.

After reviewing AI output:

- Identify missing scenarios

- Add more detail to the prompt

- Request refinements or additional coverage

Example refinements:

- “Add boundary value test cases.”

- “Include mobile-specific scenarios.”

- “Focus on error handling and validations.”

Continuous refinement leads to better AI-generated test assets.
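Refinement can be modeled as a growing conversation in which each follow-up is added after the previous reply. The sketch below assumes the common chat convention of messages with `role` and `content` keys; the exact shape your AI tool expects may differ, and the replies shown are placeholders rather than real output.

```python
def refine(history: list[dict[str, str]], reply: str, follow_up: str) -> list[dict[str, str]]:
    """Return a new history that records the model's reply and the next request."""
    return history + [
        {"role": "assistant", "content": reply},
        {"role": "user", "content": follow_up},
    ]

history = [{"role": "user", "content": "Generate test cases for the signup form."}]
# The replies below are placeholders; a real workflow would insert model output.
history = refine(history, "<first draft of test cases>", "Add boundary value test cases.")
history = refine(history, "<second draft>", "Include mobile-specific scenarios.")

for message in history:
    print(message["role"] + ": " + message["content"])
```

Keeping the earlier drafts in the history lets each refinement build on what was already generated instead of starting over.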

4. Common Mistakes to Avoid in AI Testing Prompts

Even powerful AI testing tools can produce poor results if prompts are not written correctly. Below are the most common mistakes QA professionals make when writing AI testing prompts—and why they should be avoided.

4.1. Writing Overly Generic or Vague Prompts

One of the most frequent mistakes is using prompts that are too broad or unclear. Generic prompts do not provide enough direction, causing AI to make assumptions or produce shallow results.

Why this is a problem:

- AI may miss critical test scenarios

- Output may lack depth or relevance

- Results often require heavy rework

Example of a weak prompt:

This does not specify the application type, payment methods, or testing scope.

Better approach:

Clear prompts lead to more accurate and usable test cases.
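A rough way to catch generic prompts before sending them is a keyword check for the details a prompt should mention. This is only a heuristic sketch: the `CHECKS` patterns and both sample prompts are invented for illustration, and a keyword match does not prove that a prompt is actually clear.

```python
import re

# Hypothetical keyword checks; adapt the patterns to your own project vocabulary.
CHECKS = {
    "application type": r"\b(web|mobile|desktop|api)\b",
    "testing scope": r"\b(functional|regression|security|performance|accessibility|negative|edge)\b",
    "output format": r"\b(table|list|json|csv|gherkin)\b",
}

def missing_details(prompt: str) -> list[str]:
    """List the details a prompt does not appear to mention."""
    return [
        detail for detail, pattern in CHECKS.items()
        if not re.search(pattern, prompt, flags=re.IGNORECASE)
    ]

print(missing_details("Test the payment feature"))
# ['application type', 'testing scope', 'output format']
print(missing_details(
    "Generate functional and negative test cases for card payments "
    "in a mobile banking app, as a table."
))
# []
```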

4.2. Not Providing Enough Application or Business Context

AI does not understand the application unless we explain it. When prompts lack context, AI-generated tests may not align with real user behavior or business rules.

Why this is a problem:

- AI may assume incorrect workflows

- Business-critical rules may be ignored

- Test cases may not reflect real-world usage

What context should include:

- Application type (web, mobile, API)

- Business domain (banking, healthcare, retail)

- User roles and permissions

- Important workflows and restrictions

Example improvement:

Context ensures realistic and meaningful outputs.

4.3. Asking for Too Many Things in a Single Prompt

Many testers try to save time by combining multiple requests into one prompt. This often confuses AI and results in incomplete or poorly structured output.

Why this is a problem:

- AI may focus on only part of the request

- Output becomes inconsistent or disorganized

- Important scenarios may be skipped

Example of a problematic prompt:

Better approach:

Break the request into smaller steps:

- Generate functional test cases

- Create automation scripts from selected cases

- Suggest performance testing scenarios

- Add security test scenarios

This step-by-step method improves accuracy and clarity.

4.4. Not Specifying the Desired Output Format

If we do not tell AI how to format the output, it will choose a format on its own, which may not be useful for our workflow.

Why this is a problem:

- Output may not be reusable

- Formatting may be inconsistent

- Extra time is needed for manual restructuring

Recommended formats include:

- Test case tables

- Gherkin syntax (Given–When–Then)

- JSON or CSV formats

- Structured bullet points

Example of a clear format request:

This makes the output directly usable in test management tools.

4.5. Assuming AI Understands Business Logic Automatically

AI does not automatically know our organization’s business rules, validations, or compliance requirements.

Why this is a problem:

- AI may generate invalid test scenarios

- Critical compliance rules may be missed

- Business-critical defects may go undetected

Example mistake:

Better prompt:

4.6. Not Reviewing or Refining AI-Generated Results

Many testers accept AI output as final without validation. This can introduce incorrect or incomplete test scenarios into the test suite.

Why this is a problem:

- Incorrect assumptions may go unnoticed

- Test quality may degrade

- Teams may develop false confidence in AI results

Best practice:

- Review AI-generated test cases carefully

- Refine prompts to cover missing areas

- Use AI output as a starting point, not the final solution

Prompt refinement is an essential part of AI testing.
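Part of the review can be automated before a human reads the results. The sketch below flags duplicate test case titles and required topics that no title mentions; the function `review`, the sample titles, and the topic list are hypothetical, and substring matching is a deliberately simple assumption.

```python
def review(titles: list[str], required_topics: list[str]) -> dict[str, list[str]]:
    """Flag duplicate titles and required topics that no title mentions."""
    seen, duplicates = set(), []
    for title in titles:
        key = title.strip().lower()  # compare titles case-insensitively
        if key in seen and title not in duplicates:
            duplicates.append(title)
        seen.add(key)
    uncovered = [
        topic for topic in required_topics
        if not any(topic.lower() in title.lower() for title in titles)
    ]
    return {"duplicates": duplicates, "uncovered": uncovered}

# Made-up titles standing in for AI-generated test cases.
generated = [
    "Login with valid credentials",
    "Login with invalid password",
    "login with valid credentials",
]
print(review(generated, ["invalid password", "locked account"]))
# {'duplicates': ['login with valid credentials'], 'uncovered': ['locked account']}
```

A check like this supports manual review rather than replacing it: the uncovered topics become candidates for the next prompt refinement.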

5. Conclusion

AI-driven software testing has the potential to greatly improve speed, efficiency, and test coverage, but only when it is used correctly. The effectiveness of AI testing tools depends not only on the tool itself, but also on how well QA professionals communicate with it through prompts.

Poorly written prompts can lead to incomplete, inaccurate, or misleading test results. On the other hand, clear, structured, and well-defined prompts enable AI to generate high-quality test cases, automation scripts, and insights that truly support the testing process.

Prompt engineering is no longer an optional skill for QA professionals. It is becoming a core competency, just like test design, defect analysis, and automation. Testers who understand how to provide context, define scope, set constraints, and refine prompts will be able to fully leverage AI without losing control over test quality.

In the future of software testing, AI will not replace testers—but testers who master prompt engineering will lead the way. By continuously improving prompt-writing skills, QA teams can ensure that AI becomes a powerful partner in delivering reliable, high-quality software.

Sources:

https://platform.openai.com/docs/guides/prompt-engineering

https://docs.cloud.google.com/vertex-ai/generative-ai/docs/learn/prompts/introduction-prompt-design