In recent years, AI has really taken off, soaring from quiet labs into our everyday lives. It’s touching everything from the smartphone in your hand and the car you drive, to how we work and play. Terms like “Machine Learning,” “Deep Learning,” “NLP,” and “Computer Vision” are practically household words now. But have you ever stopped to wonder, what exactly is AI, where did it come from, and how on earth does it do all those amazing things?

As an IT engineer, I’ve always been fascinated by these fundamental questions. That’s why I’m so excited to start my brand new blog series: “The Essential Foundations of Artificial Intelligence.”

What is Artificial Intelligence (AI)?

Intelligence: the capacity for logic, understanding, learning, reasoning, creativity, problem solving, and more.

Artificial intelligence (AI): the field that attempts not just to understand intelligent entities but also to build them.

What Does AI Actually Do? A Closer Look at Intelligent Systems

When people hear Artificial Intelligence (AI), they often imagine robots that talk or machines that beat humans at chess. But AI goes far beyond flashy demos — it’s a broad and evolving field of research focused on building intelligent entities that can simulate human capabilities across many dimensions.

🧠 Thinking, Learning, Planning

At the core of AI is the ability to think and learn. These systems analyze data, identify patterns, and make decisions — just like humans do when solving problems or planning ahead. From recommendation engines to self-driving cars, AI continuously learns from experience and refines its understanding over time.

👁️ Perception: Seeing, Hearing, and Sensing the World

AI doesn’t just deal with abstract data. It also tries to perceive the world as we do, using sensors, microphones, and cameras. This enables machines to:

- See (via computer vision),

- Hear (via speech recognition), and

- Feel (via sensors and feedback mechanisms).

🗣️ Communication in Natural Language

One of the most powerful aspects of AI is its ability to understand and generate human language. Through Natural Language Processing (NLP), AI can:

- Participate in coherent and context-aware conversations

- Translate between languages

- Summarize documents

- And even write articles

This ability bridges the gap between humans and machines in an intuitive way.

🦾 Manipulation and Movement

Beyond the virtual world, AI also enables machines to interact physically with their environment. Whether it’s a robotic arm assembling parts on a factory line or a drone navigating through complex terrain, AI allows systems to move, manipulate, and perform tasks in the real world.

🧠 Foundations of Artificial Intelligence: The 8 Core Disciplines Behind Smart Machines

Artificial Intelligence (AI) is not a standalone invention. It is the result of a convergence of multiple academic disciplines — from classical philosophy to modern cybernetics. Behind every machine learning algorithm, every chatbot, and every self-driving car lies a rich foundation of knowledge that has been shaped and refined over decades.

So, what are the intellectual foundations upon which AI is built? Let’s explore 8 core disciplines that have played a critical role in the evolution of artificial intelligence:

1. Philosophy & Logic: Foundations of Thought and Reasoning

AI didn’t start with code—it started with questions.

- What is intelligence?

- Can a machine think?

- How do we know something is true?

From ancient philosophy to formal logic, this field laid the intellectual groundwork for artificial intelligence. Philosophy gave us the big questions and conceptual tools to think about the nature of mind, knowledge, and rationality. Logic, its formal arm, turned reasoning into something machines can compute.

Core contributions include:

🧠 Mind as a physical system – the basis for modeling thought in machines

🔍 Methods of reasoning – deduction, induction, and abduction

⚖️ Rationality – the study of optimal decisions and beliefs

🔣 Formal logic – inference rules, symbolic systems, and theorem proving

🧩 Foundations of learning and language – how knowledge is acquired and structured

Together, they laid the conceptual and ethical groundwork for AI development.
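To make "reasoning a machine can compute" a little more concrete, here is a minimal sketch of forward chaining, which applies the classic modus ponens inference rule over and over until nothing new can be derived. The facts and rules are a toy knowledge base I made up for illustration, and `forward_chain` is a hypothetical helper name, not part of any library.

```python
def forward_chain(facts, rules):
    """Apply modus ponens repeatedly until no new facts can be derived.

    facts: set of strings assumed to be true.
    rules: list of (premises, conclusion) pairs, where premises is a set.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # Modus ponens: if every premise is known, the conclusion follows.
            if premises <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical toy knowledge base, invented for this example.
RULES = [
    ({"socrates_is_human"}, "socrates_is_mortal"),
    ({"socrates_is_mortal", "mortals_die"}, "socrates_will_die"),
]
FACTS = {"socrates_is_human", "mortals_die"}

print(sorted(forward_chain(FACTS, RULES)))
```

Real theorem provers are far more sophisticated, but the core idea is the same: once reasoning is written as symbols and rules, a program can carry it out mechanically.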

2. Mathematics: The Language of AI

Mathematics is the formal backbone of artificial intelligence. It turns abstract ideas into computable models and makes intelligence measurable, explainable, and optimizable.

From representing knowledge formally to reasoning under uncertainty, mathematics enables AI to work with precision.

Core contributions include:

📐 Formal representation & proof — allows AI systems to reason and verify correctness

⚙️ Algorithms & computation — define the step-by-step logic that powers machine learning

🚫 (Un)decidability — shows which problems are fundamentally unsolvable by any algorithm

🧮 (In)tractability — helps us estimate the cost of solving problems, critical in optimization and planning

🎲 Probability & statistics — provide the foundation for reasoning under uncertainty, from Bayesian networks to deep generative models

Without mathematics, AI would be little more than intuition. With it, we can design systems that learn, generalize, and make probabilistic decisions in complex environments.
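As a small taste of reasoning under uncertainty, here is a sketch of Bayes' rule, the formula at the heart of Bayesian networks. The numbers (a 1% prior, a 90% detection rate, a 5% false-positive rate) are hypothetical values chosen only to make the arithmetic easy to follow, and `posterior` is an illustrative function name.

```python
def posterior(prior, likelihood, false_positive_rate):
    """Bayes' rule: P(H | E) = P(E | H) * P(H) / P(E)."""
    # Total probability of seeing the evidence, whether or not H holds.
    evidence = likelihood * prior + false_positive_rate * (1.0 - prior)
    return likelihood * prior / evidence


# Hypothetical numbers: the hypothesis is true 1% of the time, the test
# detects it 90% of the time, and it raises a false alarm 5% of the time.
p = posterior(prior=0.01, likelihood=0.9, false_positive_rate=0.05)
print(f"{p:.3f}")  # prints 0.154
```

Even with a fairly accurate test, the probability after a positive result is only about 15%, because the hypothesis was rare to begin with. This kind of belief update is what probability theory gives an AI system.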

3. Economics and Intelligent Decision-Making

Economics might seem like an unusual influence on artificial intelligence. But in reality, concepts like Utility, Decision Theory, and Rational Economic Agents are the sturdy foundations that help AI make the “intelligent” decisions we see.

Utility: The Core of Every Decision

In economics, Utility is simply a measure of the satisfaction or value an individual gets from an action, a choice, or a good/service. It’s not a fixed number; rather, it’s subjective and can change depending on the person and the circumstances.

So, how does AI fit into Utility? Imagine an AI system designed to manage financial investments. Its goal is to maximize the profit (Utility) for the investor. To do that, the AI needs to assess risks, predict market trends, and make buy/sell decisions for stocks in a way that maximizes overall benefit. Every decision the AI makes, even the smallest one, is driven by the goal of maximizing Utility in some form.

Decision Theory: The Framework for Choice

Decision Theory is a field that studies how individuals (or in our case, AI agents) make choices under conditions of uncertainty. It provides a mathematical framework for analyzing decisions based on possible outcomes and their probabilities.

When an AI system faces multiple options, Decision Theory helps it to:

- Identify alternatives: List all possible actions it can take.

- Evaluate outcomes: Predict the potential results for each action.

- Assign probabilities: Assign probabilities to each outcome based on available information.

- Calculate expected Utility: Estimate the overall value of each action by multiplying the Utility of each outcome by its probability, then summing them up.

For example, an AI healthcare system might use Decision Theory to choose the best treatment plan for a patient. It would consider different medications, potential side effects, and the success probability of each treatment to make a decision that maximizes Utility (the patient’s health) under risk.
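The four steps above can be sketched in a few lines of Python. Everything here is hypothetical: the treatment names, the probabilities, and the utility scores are invented for illustration, and a real clinical system would need far richer models and data.

```python
def expected_utility(outcomes):
    """Sum of probability * utility over an action's possible outcomes."""
    return sum(p * u for p, u in outcomes)


def best_action(actions):
    """Return the name of the action with the highest expected utility."""
    return max(actions, key=lambda name: expected_utility(actions[name]))


# Hypothetical alternatives. Each outcome is a (probability, utility) pair,
# and the probabilities for one action sum to 1.
TREATMENTS = {
    "medication_a": [(0.7, 10.0), (0.3, -2.0)],  # 0.7*10 + 0.3*(-2) = 6.4
    "medication_b": [(0.9, 6.0), (0.1, -1.0)],   # 0.9*6 + 0.1*(-1) = 5.3
}

for name, outcomes in TREATMENTS.items():
    print(name, round(expected_utility(outcomes), 2))
print("choose:", best_action(TREATMENTS))
```

Note that the riskier option wins here only because its upside is large enough to outweigh the chance of a bad outcome. Change the utilities and the choice can flip, which is exactly why defining Utility well matters so much.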

Rational Economic Agents: The Blueprint for AI

In economics, a Rational Economic Agent is an individual who always acts to maximize their Utility, based on available information and existing constraints. They aren’t swayed by emotions or irrational factors.

Sound familiar? This is precisely the blueprint we try to build for AI systems. When an AI is considered “rational,” it means it:

- Calculates and analyzes: Doesn’t act impulsively but relies on data and logic.

- Is goal-oriented: Always strives to achieve its defined objective (often maximizing some form of Utility).

- Learns and adapts: Updates information and adjusts strategies to make better decisions in the future.

However, it’s important to remember that AI’s “rationality” is limited by the data it’s trained on and the algorithms it uses. AI doesn’t have consciousness or emotions like humans, but it can simulate rational behavior to achieve its defined goals.

4. Neuroscience and Biological Inspiration

Ever wonder why AI systems learn so effectively? Much of that “intelligence” is rooted in ideas borrowed from the ultimate learning machine: the human brain. Neuroscience, the study of how our brains learn and process information, has directly inspired some of the most powerful AI paradigms, especially Artificial Neural Networks (ANNs) and variants such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs). These biologically inspired models laid the foundation for the emergence of deep learning.

From Brain to Model: Key Inspirations

- Neural Models Mimicking Biological Neurons: At the very heart of ANNs are artificial neurons, simplified mathematical models designed to mimic their biological counterparts. Just like real neurons, these artificial units receive input, process it, and then fire an output. This basic, yet profound, concept forms the building blocks of complex neural networks that can recognize patterns, understand language, and even generate creative content.

- Synaptic Plasticity in Learning: One of the most critical concepts borrowed from neuroscience is synaptic plasticity. In our brains, the connections between neurons (synapses) strengthen or weaken over time based on our experiences. This “plasticity” is how we learn and form memories. In AI, this idea translates directly to the weights in neural networks. When an AI learns, it’s essentially adjusting these weights, strengthening or weakening connections between artificial neurons, much like our brains adjust synaptic strengths. This is the core mechanism behind how neural networks learn from data. More detail: Synaptic Plasticity

- Brain Mapping Contributing to Cognitive Architectures: Beyond individual neurons, neuroscience also explores how different parts of the brain specialize and interact to produce complex behaviors and thoughts. While AI hasn’t fully replicated the brain’s intricate architecture, concepts from brain mapping and understanding cognitive functions have influenced the design of more sophisticated cognitive architectures in AI. These architectures aim to integrate different AI modules that handle specific tasks, much like different brain regions contribute to overall cognition.
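To see the "artificial neuron" and "adjusting weights" ideas in action, here is a minimal sketch of a single neuron trained with the classic perceptron rule to behave like a logical AND gate. This is a deliberately simplified stand-in: modern deep networks are trained with gradient-based methods rather than this rule, and the function names and training data are my own illustrative choices.

```python
def predict(weights, bias, inputs):
    """One artificial neuron: weighted sum of inputs, then a step 'firing' rule."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def train(samples, epochs=20, lr=1.0):
    """Perceptron rule: nudge each weight in proportion to the error."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            # Strengthen or weaken each connection, loosely like a synapse.
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


# Training data for a logical AND gate: (inputs, expected output).
AND_GATE = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND_GATE)
for inputs, target in AND_GATE:
    print(inputs, "->", predict(weights, bias, inputs), "expected", target)
```

Nobody told the neuron what AND means. It started with all weights at zero and arrived at a working set of connection strengths purely by correcting its own mistakes.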

Ultimately, deep learning, the branch of AI responsible for many of the breakthroughs we see today—from image recognition to natural language processing—owes an immense debt to neuroscience’s relentless exploration of the brain. It’s a powerful reminder that sometimes, the best way to build the future is to understand the incredible biological systems that already exist. Reference this for more detail: Neural Network

5. Psychology & Cognitive Science: Understanding Human Thought

Alright, let’s dive into the fascinating role of Psychology and Cognitive Science in the world of AI. If you’ve ever found yourself amazed by how an AI seems to understand your needs, remember things you’ve told it, or even respond in a way that feels surprisingly natural, you’re seeing the direct influence of these fields. They are absolutely critical for helping AI mimic how humans think, learn, and even interact emotionally.

More Than Just Smart: Aiming for Human-like Intelligence

The ultimate goal here isn’t just to make AI intelligent in a purely logical or computational sense. It’s about striving for human-like intelligence – an AI that can reason, learn, and interact in ways that resonate with us. Here’s how psychology and cognitive science are key:

- Cognitive Models of Memory, Learning, and Decision-Making: These fields provide detailed blueprints of how our brains handle complex processes. By studying human memory (how we store and retrieve information), learning (how we acquire new skills and knowledge), and decision-making (how we weigh options and choose actions), AI researchers gain invaluable insights. This allows them to design AI systems that can remember context, learn from past interactions, and make choices that mirror human rationality (and sometimes, even human biases!).

- Human-Computer Interaction (HCI) and Adaptive Systems: A huge part of building useful AI is making it easy and intuitive for humans to interact with. Psychology informs the entire field of Human-Computer Interaction (HCI), helping developers create user-friendly interfaces and systems that understand human input, whether it’s through voice, text, or gestures. Furthermore, understanding human cognitive processes helps create adaptive AI systems that can learn user preferences and adjust their behavior over time, making interactions feel more personal and efficient.

- Emotion-Aware and Personalized AI Systems: This is where AI truly begins to feel more “human.” Psychology and cognitive science are at the forefront of developing emotion-aware AI systems that can detect and even interpret human emotions through facial expressions, tone of voice, or text analysis. This capability paves the way for truly personalized AI experiences, allowing systems to respond empathetically, tailor information to a user’s mood, or provide support that feels genuinely understanding.

By drawing from psychology and cognitive science, we’re not just making AI smarter; we’re making it more intuitive, more relatable, and ultimately, more seamlessly integrated into our human world.

6. Computer Engineering: The Hardware Backbone

How Algorithms Get Their Muscle: The Hardware Behind AI

Think of it this way: AI algorithms are the brains, but computer engineering provides the body and the muscles. It’s how those brilliant algorithms gain the speed, scale, and real-world presence we’ve come to expect. Here are some of the critical contributions:

- GPU/TPU Design for Parallel Processing: Modern AI—especially deep learning—needs to process huge amounts of data all at once. Imagine trying to read and analyze millions of images or sentences in a very short time. A regular computer simply isn’t fast enough for this job. That’s where Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs) come in:

GPUs were originally built for graphics, like rendering images in video games or movies. But they turned out to be great at doing thousands of calculations at the same time, which is exactly what AI models need when learning from data.

TPUs are custom chips developed by Google, designed specifically for machine learning tasks. They’re even faster and more efficient than GPUs for certain types of AI workload.

- AI Accelerators for Training Large Models: Beyond general-purpose GPUs and TPUs, the field of computer engineering is constantly innovating with dedicated AI accelerators. These are specialized chips or hardware architectures optimized for specific AI tasks, like inference (running a trained AI model) or even highly specific types of neural network operations. They aim to deliver even greater efficiency and speed, crucial for handling the ever-growing size and complexity of state-of-the-art AI models.

- Embedded Systems for Robots, Drones, and IoT Devices: AI isn’t just living in the cloud or on supercomputers. It’s increasingly found in the physical world, powering everything from your smart home devices to autonomous drones and industrial robots. This is thanks to embedded systems – compact, efficient computing systems designed for specific functions within larger mechanical or electronic systems. Computer engineers design these embedded AI systems, ensuring that AI algorithms can run effectively on devices with limited power, size, and processing capabilities, bringing AI’s intelligence directly into our everyday lives.

Without the relentless innovation in computer engineering, AI would remain a theoretical concept, confined to research papers and simulations. It’s the hardware backbone that gives AI its incredible capabilities and its ability to impact the real world.

7. Control Theory & Cybernetics: Managing Dynamic Systems

Let’s continue our exploration of AI’s foundational fields with something incredibly practical and hands-on: Control Theory & Cybernetics. While we often think of AI as purely digital intelligence, a huge part of its impact comes from its ability to interact seamlessly with the physical world. This is precisely where Control Theory and Cybernetics shine, enabling AI to move, respond, and react in dynamic environments.

Bringing AI to Life: Interacting with the Physical World

Imagine a self-driving car navigating busy streets, a drone maintaining a steady flight path, or a robot precisely assembling a product. These aren’t just feats of algorithms; they are triumphs of control theory. This field provides the essential tools for AI to operate robots, autonomous vehicles, and countless automated systems with stability and precision. Here’s how:

- Real-time Feedback Loops for Behavior Adjustment: At the heart of control theory are feedback loops. Think about how you adjust your steering wheel while driving. You constantly observe your position (output), compare it to where you want to go (desired state), and make small adjustments (input) to correct any deviation. AI systems use these same principles. Sensors gather data from the environment, this data is fed back to the AI, which then calculates necessary adjustments to its actions in real-time. This continuous loop allows AI to adapt its behavior on the fly, making it incredibly responsive.

- Stability Control in Drones and Vehicles: Ever wonder how a drone hovers so steadily despite wind gusts, or how an autonomous vehicle stays perfectly in its lane? That’s largely due to sophisticated control algorithms. These algorithms ensure stability control, preventing systems from becoming erratic or unstable. They constantly monitor factors like speed, orientation, and external forces, making micro-adjustments hundreds or thousands of times per second to maintain the desired state.

- Precision in Robotics and Autonomous Systems: When a robotic arm needs to pick up a delicate object, or a factory automaton performs a repetitive task with millimeter accuracy, it’s leveraging principles of precision control. This involves not just maintaining stability but also executing movements with incredible exactness. Control theory provides the mathematical frameworks for AI to achieve this level of precision in robotics and autonomous systems, enabling complex tasks that require fine motor skills and exact positioning.
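The feedback loop described above can be sketched as a proportional controller, one of the simplest control schemes. This toy version assumes the correction changes the position directly, with no inertia, delay, or sensor noise. Real drones and vehicles have richer dynamics and typically use more elaborate controllers such as PID. The function name and the numbers are hypothetical.

```python
def simulate_p_controller(target, start, gain=0.5, steps=20):
    """Repeatedly measure the error and apply a correction proportional to it."""
    position = start
    history = [position]
    for _ in range(steps):
        error = target - position   # compare measured state with desired state
        position += gain * error    # small adjustment that shrinks the error
        history.append(position)
    return history


# Hypothetical scenario: move from position 0 toward a target of 10.
trajectory = simulate_p_controller(target=10.0, start=0.0)
print([round(p, 3) for p in trajectory[:5]])
print(round(trajectory[-1], 3))
```

With a gain of 0.5, each step closes half of the remaining gap, so the system settles smoothly on the target. A gain that is too high would overshoot and oscillate, which is the kind of instability that control theory helps engineers avoid.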

Without Control Theory and Cybernetics, AI would largely remain confined to the digital realm. These fields are absolutely crucial for bringing AI to life, allowing it to move, sense, and intelligently interact within our complex physical world.

8. Linguistics – Enabling Human-Machine Communication

Let’s talk about how AI learns to speak our language – literally! Today, we’re diving into the crucial role of Linguistics in the world of Artificial Intelligence. If you’ve ever chatted with a smart assistant, used a translation app, or marveled at how a search engine understands your quirky queries, you’ve witnessed the power of linguistics at play. This field is what empowers machines to understand, generate, and translate human language, making it absolutely essential for Natural Language Processing (NLP).

Language: The Key to True Interaction

Think about it: for AI to be truly intelligent and helpful, it needs to communicate with us on our terms. That’s where linguistics steps in. It provides the frameworks and theories that allow AI to bridge the gap between complex human expression and rigid machine logic. Here are some key areas where linguistics makes a massive impact:

- Syntax, Semantics, and Pragmatics: These are the building blocks of language understanding for AI.

- Syntax teaches AI about grammar and sentence structure – the rules that dictate how words combine to form meaningful phrases.

- Semantics delves into the meaning of words and sentences. It helps AI understand what a word refers to and the relationships between concepts.

- Pragmatics is perhaps the most nuanced; it’s about understanding language in context – inferring intent, recognizing sarcasm, or knowing what’s implied rather than explicitly stated.

These three pillars allow AI to move beyond simply recognizing words to actually comprehending the message.

- Speech Recognition and Generation: Linguistics is vital for both ends of verbal communication with AI. Speech recognition (turning spoken words into text) relies on linguistic models to interpret sounds as phonemes and then words, even with different accents or speaking styles. Conversely, speech generation (text-to-speech) uses linguistic rules to create natural-sounding spoken output, including correct pronunciation, intonation, and rhythm.

- Natural Language Understanding (NLU) for Chatbots, Search, and Voice Assistants: This is where linguistics directly impacts our everyday AI interactions. Whether you’re asking your voice assistant to play music, typing a query into a search engine, or conversing with a customer service chatbot, Natural Language Understanding (NLU) is at work. Linguistics provides the theories for AI to parse your input, understand your intent, and extract relevant information, enabling these systems to respond appropriately.

Ultimately, language is what allows AI to truly interact with us. Without the deep insights from linguistics, AI would be a brilliant mind locked in a silent room, unable to share its intelligence or understand our needs. It’s the bridge that transforms complex algorithms into conversational companions and intuitive tools.

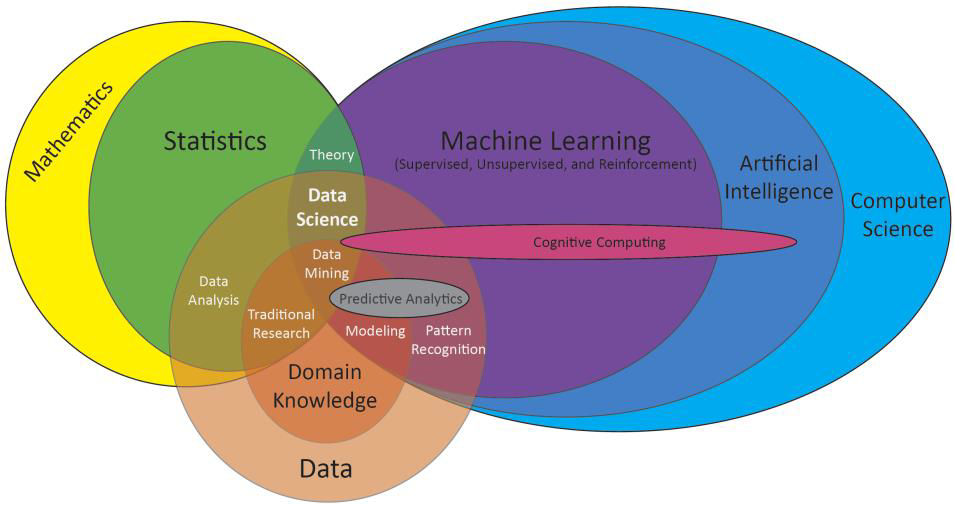

The Data Science & AI Venn Diagram: Where Disciplines Converge

After our journey through the 8 grand academic “foundations” that gave birth to Artificial Intelligence, today, I want to introduce a diagram you’ll frequently encounter in the world of AI and Data Science. This isn’t just a pretty illustration; it’s a roadmap of how various fields intersect to create what we now call “intelligence.”

The diagram is a version of “The Data Science & AI Venn Diagram” – a popular concept for visualizing the skills and fields necessary to be a data scientist, and more broadly, how AI is built upon these foundations.

The Difference from Our “8 Core Disciplines”

First, let’s clarify the distinction between this diagram and the 8 core disciplines (Philosophy & Logic, Mathematics, Economics, Neuroscience, Psychology & Cognitive Science, Computer Engineering, Control Theory & Cybernetics, Linguistics) we previously discussed.

- The 8 Core Disciplines: These are broad, academic scientific and engineering fields, the intellectual wellsprings that have provided the fundamental ideas, theories, and methodologies for AI to develop over decades. They explain why and how AI came into being and evolved theoretically. They are the “inspiration” and the “big brain.”

- This Venn Diagram: Focuses on the specific applied fields and skills required to build and deploy AI and Data Science in practice. It shows what is combined and how it’s applied to solve concrete problems. It’s the “muscle” and the “hands” of AI.

Let’s deconstruct this diagram together:

Mathematics & Statistics:

– These are the analytical brains. Mathematics provides the foundational tools for every algorithm, from linear algebra to calculus.

– Statistics helps us understand data, draw inferences, test hypotheses, and build predictive models. The intersection of these two fields forms the solid Theory underpinning all data analysis.

Computer Science:

– This is the execution and building brain. Computer Science brings programming capabilities, efficient algorithm construction, big data management, and system architecture.

– Machine Learning itself is a major branch of Computer Science, where algorithms learn from data without being explicitly programmed (including Supervised, Unsupervised, and Reinforcement Learning).
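To illustrate what "learning from data without being explicitly programmed" can look like, here is a minimal supervised learning sketch: fitting a straight line by ordinary least squares in plain Python. The data points are made up for this example, and `fit_line` is an illustrative name. In practice you would normally reach for a dedicated library.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data that happens to follow y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)
print(slope, intercept)        # prints 2.0 1.0
print(slope * 5 + intercept)   # prediction for an unseen input, x = 5
```

The rule "multiply by 2 and add 1" was never written into the program. It was recovered from the examples, which is the essence of supervised learning.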

Domain Knowledge:

– This is an often-underestimated but critically important factor. No matter how skilled you are in math, statistics, or programming, if you don’t deeply understand the field you’re working in (e.g., finance, healthcare, manufacturing, marketing), you won’t be able to ask the right questions, comprehend the data’s true meaning, or interpret results appropriately. Domain knowledge helps transform data and algorithms into real business value.

Key Concepts Within the Intersections:

- Data Science: Positioned at the core intersection of Computer Science, Statistics, and Mathematics. It’s the discipline that synthesizes tools and methods to extract knowledge and insights from data. When combined with domain knowledge, it helps solve real-world problems, leading to insightful traditional research and data analysis.

- Data Mining: Lies between Computer Science and Statistics/Mathematics, focusing on discovering hidden patterns within large datasets.

- Predictive Analytics: Uses statistical and machine learning models to forecast future events, a crucial part of Data Science.

- Modeling & Pattern Recognition: Core techniques used extensively in both Data Science and Machine Learning.

- Cognitive Computing: A broader concept, often seen as building upon Machine Learning and being part of Artificial Intelligence. It aims to build systems that can simulate human thought processes, including the ability to learn, reason, understand natural language, and interact with humans.

Conclusion: The Symphony of Intelligence, Applied

So, while the 8 core foundations provide the deep-rooted principles, this Venn diagram paints a clear picture of how we actually build and apply AI and Data Science in the real world. It demonstrates that to succeed in this field, you need a diverse blend of skills and understanding, transforming AI from an abstract idea into a living, constantly evolving technology.

Next Blog: we’re diving into “AI, Machine Learning and More”.

Blog after that: “The Machine Learning Foundation”.

If you have any questions 🤔 or would like to explore any aspect in greater detail, feel free to leave a comment below. I’ll be glad to engage in further discussion. 🤷