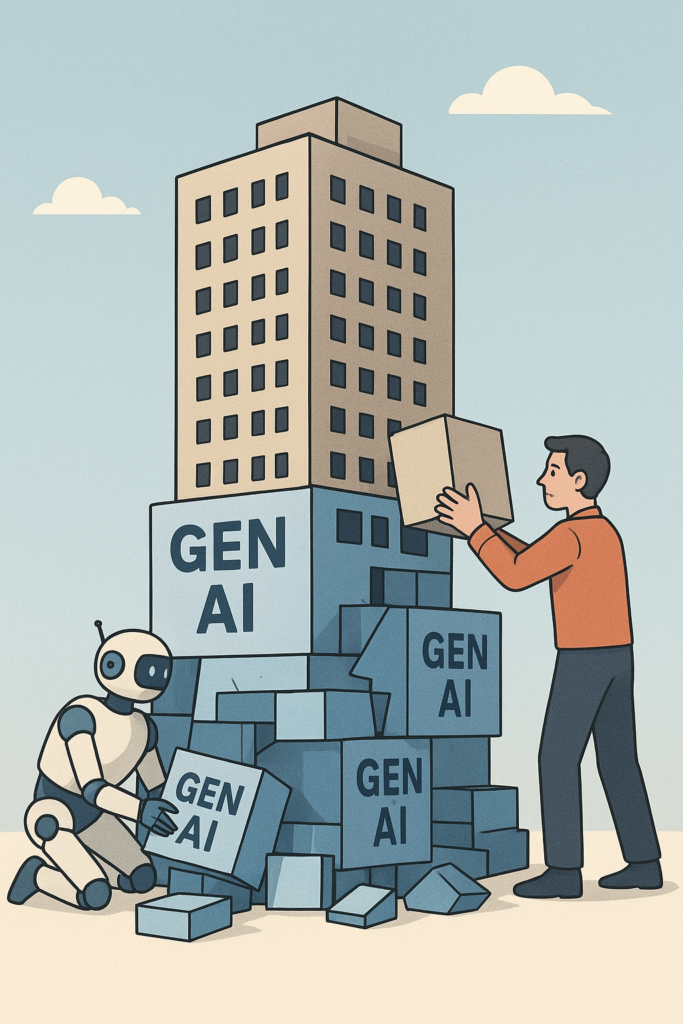

Generative AI has changed how software gets built. It can write essays, code features, design architectures, explain complex theories, and even argue as if it had decades of experience. At first glance, its output looks logical, sometimes even brilliant.

And that’s exactly the problem.

Behind the confident tone and structured explanations lies a very uncomfortable truth:

Generative AI doesn’t think. It doesn’t reason. It doesn’t understand. It only predicts.

This illusion of intelligence is powerful enough to mislead many developers into thinking the machine knows better than they do. If we’re not careful, we risk losing our skills, our credibility, and our value — not because AI replaces us, but because we stop thinking.

When Developers Trust AI More Than Themselves

Over the past year, I’ve seen a pattern emerging inside developer teams — and it worries me.

Many developers now rely heavily on Copilot, ChatGPT, or other Gen AI tools to produce entire features. They believe:

- “If AI generated it, it must follow best practices.”

- “The AI wouldn’t make such a basic mistake.”

- “It looks correct, so it must be correct.”

But when we review the code, the reality becomes clear:

- The feature only works for happy paths.

- The styling is inconsistent or outdated.

- The logic includes irrelevant or unused code.

- Security is an afterthought — or completely missing.

- Error handling is weak, misleading, or just wrong.

- Some parts are copy-pasted patterns from unrelated domains.

Here’s a real example:

A developer used Copilot to generate an authentication layer. The code “worked”, technically. But it generated tokens with a non-cryptographic random number generator, logged sensitive data, swallowed exceptions, and had no rate limiting or brute-force protection. He trusted it because the output looked logical.

But under the surface, it was fundamentally broken.

This is the illusion at work:

AI makes things look right even when they are dangerously wrong.

Behind the Curtain: How Generative AI Really Works

This leads to the big question:

“How can something that doesn’t use logic generate something that looks so logical?”

The short answer: because it’s not using logic — it’s imitating the appearance of logic.

1. LLMs Don’t Think — They Predict

Large language models (LLMs), the models behind these tools, have one core job:

Given the previous tokens, estimate how likely each possible next token is, then emit one of the likely ones.

That’s it.

There is:

- No reasoning engine,

- No internal logic tree,

- No understanding of truth,

- No awareness of correctness.

Just patterns.
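A toy version of the idea fits in a few lines of Python. This is a deliberately tiny sketch, not how a real LLM is built: real models use neural networks over long contexts and sample from a probability distribution, while this one only counts which word followed which in a made-up corpus. The corpus and the names `predict_next` and `generate` are invented for illustration.

```python
from collections import Counter, defaultdict

# A made-up training corpus, already split into tokens.
corpus = "the cat sat on the mat . the cat sat on the sofa .".split()

# Count how often each token follows each other token.
counts = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    counts[prev][nxt] += 1


def predict_next(token):
    """Return the follower seen most often after `token`, or None if unseen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(start, length):
    """Repeatedly append the most frequent follower of the last token."""
    out = [start]
    for _ in range(length):
        nxt = predict_next(out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return out


print(" ".join(generate("the", 4)))  # prints "the cat sat on the"
```

The output reads like the start of a sentence, yet the program has no notion of cats, sitting, or grammar. It only knows that "cat" followed "the" more often than anything else did.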

2. Why It Looks Logical

Humans write logical text.

Humans write structured code.

Humans write explanations that follow cause and effect.

AI sees billions of examples and learns the patterns of logic — not logic itself.

So the model can produce output that looks reasoned, structured, and thought-out, even though it is only mimicking the surface structure.

3. Logic Without Understanding Is Still Not Logic

When an LLM generates code, it is not thinking:

- “What is clean architecture here?”

- “Is this algorithm secure?”

- “Does this scale under load?”

- “Does this satisfy the non-functional requirements?”

It is simply assembling what “usually appears” in contexts similar to your prompt.

That’s why AI-generated code:

- Looks correct,

- Feels well-formed,

- Passes quick tests,

but often breaks under pressure, fails edge cases, and lacks engineering depth.

It’s logic-shaped output — not logic-based output.
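Here is a small, invented Python example of that gap. `page_count_draft` stands in for the kind of helper an assistant might plausibly produce; it is not taken from any real tool's output. It passes the first test most people would try and is wrong on the boundaries. `page_count` is one possible corrected version.

```python
def page_count_draft(total_items: int, page_size: int) -> int:
    # Plausible-looking draft: correct whenever the division leaves a remainder.
    return total_items // page_size + 1


def page_count(total_items: int, page_size: int) -> int:
    """Number of pages needed to show total_items, page_size per page."""
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    if total_items < 0:
        raise ValueError("total_items must not be negative")
    # Ceiling division using integers only.
    return -(-total_items // page_size)


# The quick test: both versions agree.
assert page_count_draft(10, 3) == 4
assert page_count(10, 3) == 4

# The edge cases: the draft is off by one, the reviewed version is not.
assert page_count_draft(9, 3) == 4   # wrong, 9 items fit on 3 pages
assert page_count(9, 3) == 3
assert page_count_draft(0, 3) == 1   # wrong, no items means no pages
assert page_count(0, 3) == 0
```

The draft also raises a bare `ZeroDivisionError` when `page_size` is 0, whereas the reviewed version rejects bad input with an explicit message.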

The Consequences of Using Gen AI the Wrong Way

If developers continue relying blindly on AI, here’s what happens.

1. Losing Credibility

When a developer can’t defend or explain their own code, reviews become painful.

Senior engineers quickly notice when someone is shipping AI-written work they don’t understand.

2. Losing Trust

Leads stop assigning important tasks to developers who consistently deliver shallow, fragile, or copy-paste solutions.

Trust, once lost, is hard to rebuild.

3. Losing Skills

This is the biggest danger.

If AI handles your:

- Debugging,

- Problem-solving,

- Architecture thinking,

- Reasoning,

- Decision-making,

your skills degrade, just as muscles atrophy without use.

Developers who stop thinking don’t just stagnate; they regress.

4. Losing Confidence

Once developers rely too heavily on AI, they start doubting themselves:

- “Maybe the AI knows better.”

- “Maybe my logic is wrong.”

- “Maybe I shouldn’t challenge the output.”

They become passive operators instead of active problem-solvers.

5. Slower Development (Ironically)

Bad AI output leads to:

- More bugs,

- More refactoring,

- More rework,

- More security reviews,

- More production issues.

What looks fast in the beginning becomes slow in the long term.

AI doesn’t slow down good developers.

It slows down developers who stop thinking.

How to Use Gen AI Correctly: Mindful, Not Dependent

AI is not the enemy.

Blind dependence is.

The right mindset is not “replace myself with AI” but:

Use AI to accelerate thinking, not to replace thinking.

Here’s how.

1. Use AI as a Drafting Tool

Let it generate:

- Boilerplate code,

- Documentation,

- Examples,

- Test scaffolding.

But always review and refactor it as you would a junior developer’s code.

2. Validate Everything

Before trusting AI output, check:

- Security,

- Performance,

- Error handling,

- Readability,

- Irrelevant logic,

- Edge-case behavior,

- Architectural impacts.

Don’t just accept — interrogate.

3. Use AI to Learn, Not to Outsource

Ask it:

- “Explain how this works.”

- “Why did you choose this approach?”

- “What are alternative solutions?”

- “Show me best practices for this pattern.”

Make it your tutor, not your ghostwriter.

4. Stay in Control

AI should amplify your decisions, not make them.

You decide the architecture.

You decide the boundaries.

You decide the constraints.

You hold the responsibility.

Conclusion: The Developer’s Mind Is Still the Most Valuable Asset

Generative AI is one of the most powerful tools ever created — but only when used correctly.

The danger is not that AI takes our jobs.

The danger is that developers stop thinking and expect AI to do the thinking for them.

AI output looks logical.

AI sounds confident.

AI acts like it knows the answer.

But behind the curtain, it’s just pattern prediction.

If we want to remain valuable — if we want to write high-quality software and solve real problems — we must stay mindful:

- Use AI to accelerate, not to depend.

- Use AI to assist, not to replace judgment.

- Use AI to learn, not to avoid learning.

The illusion of intelligence is powerful, but a thoughtful developer is still more powerful.