Introduction to AI Ecosystem Packages, Frameworks, and Platforms

What are AI ecosystem packages and toolkits?

Artificial Intelligence (AI) has transitioned from isolated algorithms to large-scale, interconnected systems. In this context, AI ecosystem packages have emerged as fundamental tools that provide reusable modules, frameworks, and infrastructures for building complex solutions. Instead of starting from scratch, developers can leverage these packages to accelerate innovation, ensure interoperability, and maintain scalability.

Why do AI frameworks and platforms matter in modern intelligent systems?

Multi-agent systems, where multiple AI agents collaborate to achieve common or individual goals, rely heavily on these ecosystem packages. Without them, coordinating tasks, managing data flows, and deploying at scale would be prohibitively complex.

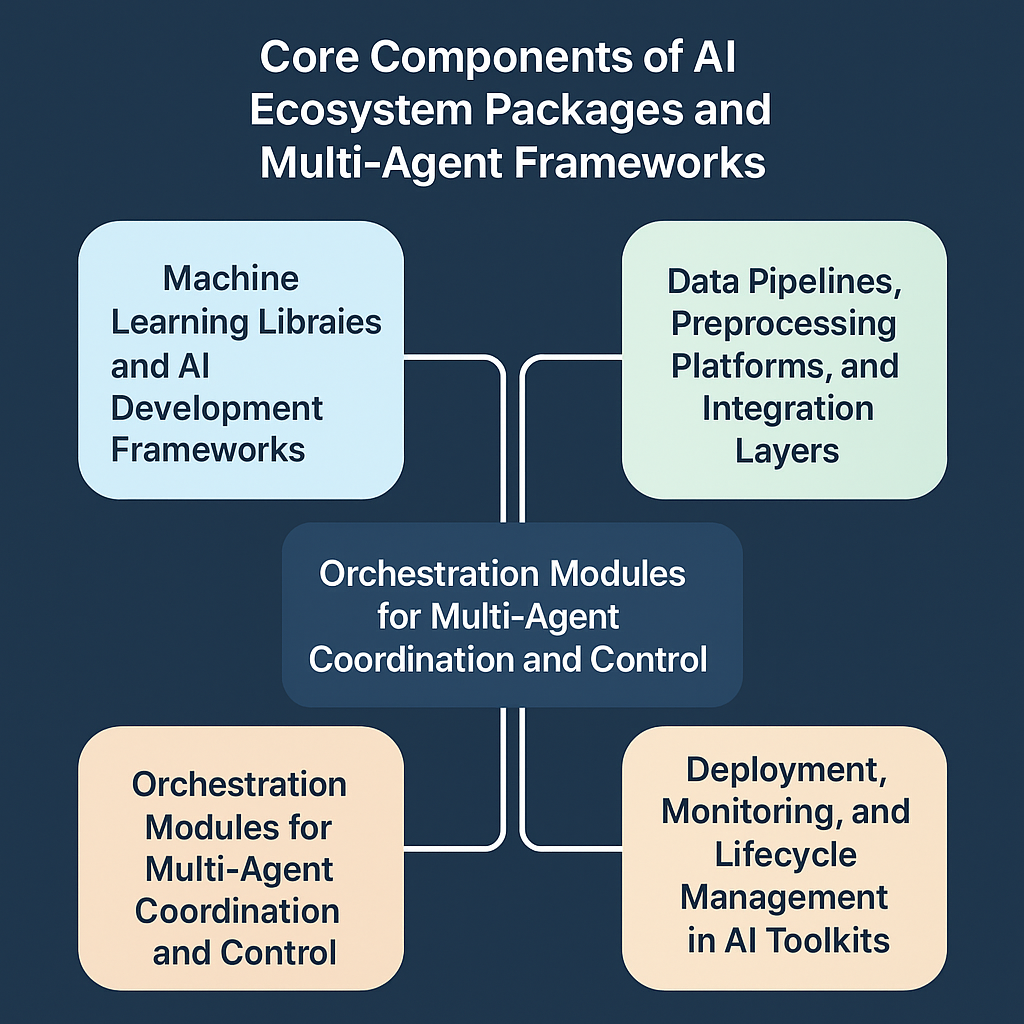

Core Components of AI Ecosystem Packages and Multi-Agent Frameworks

Machine learning libraries and AI development frameworks

At the heart of every AI ecosystem package lie machine learning libraries and frameworks. Toolkits such as PyTorch and TensorFlow provide the mathematical operations, neural network structures, and optimization algorithms needed for intelligent agents. They are also the foundation on which higher-level orchestration systems and multi-agent platforms are built.

Data pipelines, preprocessing, and integration tools

AI agents cannot operate effectively without clean and structured data. Ecosystem frameworks therefore often include data pipelines that handle ingestion, preprocessing, and integration of data. Workflow orchestrators such as Apache Airflow and Prefect schedule and monitor these pipelines so that agents receive consistent and timely data, which improves the overall reliability of multi-agent workflows.
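The three stages named above can be sketched in plain Python. This is only an illustration under stated assumptions: the Record schema, the function names, and the sample input are hypothetical, and a real deployment would run such steps inside a scheduler such as Airflow or Prefect rather than as bare generators.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Iterator, List


@dataclass(frozen=True)
class Record:
    """One cleaned observation handed to an agent (hypothetical schema)."""
    sensor_id: str
    value: float


def ingest(raw_lines: Iterable[str]) -> Iterator[List[str]]:
    """Ingestion: split raw CSV-like lines into fields, skipping blank lines."""
    for line in raw_lines:
        if line.strip():
            yield [field.strip() for field in line.split(",")]


def preprocess(rows: Iterable[List[str]]) -> Iterator[Record]:
    """Preprocessing: drop malformed rows and normalise identifiers and types."""
    for row in rows:
        if len(row) != 2:
            continue  # wrong number of fields
        sensor_id, raw_value = row
        try:
            yield Record(sensor_id=sensor_id.lower(), value=float(raw_value))
        except ValueError:
            continue  # value is not numeric


def integrate(records: Iterable[Record]) -> Dict[str, List[float]]:
    """Integration: group values by sensor so every agent sees one consistent view."""
    grouped: Dict[str, List[float]] = {}
    for record in records:
        grouped.setdefault(record.sensor_id, []).append(record.value)
    return grouped


raw = ["A, 1.5", "", "b, 2.0", "A, oops", "a, 3.0", "broken"]
dataset = integrate(preprocess(ingest(raw)))
print(dataset)  # {'a': [1.5, 3.0], 'b': [2.0]}
```

Because each stage is a generator, rows stream through the pipeline one at a time, and malformed input is filtered out before any agent sees it.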

Orchestration modules for multi-agent coordination

Another crucial element of AI ecosystem packages is the orchestration layer. These modules define how agents communicate, synchronize tasks, and balance computational loads across distributed environments. They also reduce redundant work by letting agents share intermediate results, which improves efficiency in large-scale intelligent systems.
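As a rough illustration of sharing intermediate results, the sketch below uses a thread-safe store so that several agents reuse one computation instead of repeating it. SharedResultStore, the cache key, and the toy tokenize step are hypothetical names chosen for this example; real orchestration layers typically use a distributed object store rather than an in-process dictionary.

```python
import threading
from typing import Callable, Dict, List


class SharedResultStore:
    """Thread-safe cache that lets agents reuse each other's intermediate results."""

    def __init__(self) -> None:
        self._results: Dict[str, object] = {}
        self._lock = threading.Lock()
        self.computations = 0  # how many times work was actually performed

    def get_or_compute(self, key: str, compute: Callable[[], object]) -> object:
        # Holding the lock while computing keeps the sketch simple: no two agents
        # ever compute the same key, at the cost of serialising all computations.
        with self._lock:
            if key not in self._results:
                self._results[key] = compute()
                self.computations += 1
            return self._results[key]


def tokenize(text: str) -> List[str]:
    """Toy stand-in for an expensive preprocessing step."""
    return text.lower().split()


store = SharedResultStore()
document = "Agents share intermediate results"
outputs: Dict[str, int] = {}


def agent(name: str) -> None:
    tokens = store.get_or_compute("tokens:doc-1", lambda: tokenize(document))
    outputs[name] = len(tokens)


threads = [threading.Thread(target=agent, args=(f"agent-{i}",)) for i in range(3)]
for thread in threads:
    thread.start()
for thread in threads:
    thread.join()

print(outputs, "computations:", store.computations)
```

All three agents obtain the token list, but the tokenization runs only once.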

Deployment, monitoring, and lifecycle management in AI toolkits

Developing an AI system is only the beginning; it must also be deployed and monitored. Ecosystem packages therefore often integrate tools for containerization, CI/CD pipelines, and real-time monitoring dashboards. They also support lifecycle management, so that agents not only function correctly but also evolve through updates and retraining.
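One small piece of lifecycle management, deciding when an agent should be retrained, might look like the sketch below. The AccuracyMonitor class, the window size, and the threshold are assumptions made for illustration; production systems usually track richer signals such as data drift, latency, and error rates.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent predictions and flags retraining."""

    def __init__(self, window: int, threshold: float) -> None:
        self._outcomes = deque(maxlen=window)  # oldest outcomes fall off automatically
        self._threshold = threshold

    def record(self, correct: bool) -> None:
        self._outcomes.append(correct)

    def accuracy(self) -> float:
        if not self._outcomes:
            return 1.0  # no evidence of a problem yet
        return sum(self._outcomes) / len(self._outcomes)

    def needs_retraining(self) -> bool:
        # Only decide once the window is full, to avoid reacting to a few samples.
        window_full = len(self._outcomes) == self._outcomes.maxlen
        return window_full and self.accuracy() < self._threshold


monitor = AccuracyMonitor(window=5, threshold=0.8)
for outcome in [True, True, False, True, False]:
    monitor.record(outcome)

print(monitor.accuracy(), monitor.needs_retraining())  # 0.6 True
```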

Role of AI Ecosystem Packages in Multi-Agent Systems

Communication protocols and collaboration between AI agents

One of the most critical functions of AI ecosystem packages is providing standardized communication protocols. These protocols ensure that multiple agents can share data, exchange decisions, and coordinate actions effectively. Moreover, they reduce the risk of miscommunication by establishing well-defined message formats and synchronization mechanisms.
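A well-defined message format can be as simple as a typed envelope that is validated on receipt. The sketch below is a minimal example, assuming JSON as the wire format; the AgentMessage fields and the set of allowed message types are hypothetical and not taken from any particular framework.

```python
import json
from dataclasses import asdict, dataclass
from typing import Any, Dict

ALLOWED_TYPES = {"request", "result", "error"}  # hypothetical message types


@dataclass(frozen=True)
class AgentMessage:
    sender: str
    recipient: str
    msg_type: str
    payload: Dict[str, Any]
    sequence: int  # lets the receiver detect missing or reordered messages

    def to_json(self) -> str:
        """Serialise to a stable JSON string for transport."""
        return json.dumps(asdict(self), sort_keys=True)

    @staticmethod
    def from_json(raw: str) -> "AgentMessage":
        """Parse and validate an incoming message."""
        data = json.loads(raw)
        message = AgentMessage(**data)  # raises TypeError on missing or extra fields
        if message.msg_type not in ALLOWED_TYPES:
            raise ValueError(f"unknown msg_type: {message.msg_type!r}")
        return message


outgoing = AgentMessage("planner", "worker-1", "request", {"task": "summarize"}, sequence=1)
wire = outgoing.to_json()
incoming = AgentMessage.from_json(wire)
print(incoming == outgoing)  # True
```

Rejecting unknown fields and message types at the boundary means a malformed message fails loudly at the receiver instead of being silently misinterpreted.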

Scalability through distributed AI platforms and orchestration tools

Multi-agent systems often need to scale across hundreds or even thousands of tasks. Therefore, AI ecosystem packages include distributed frameworks such as Ray, which allow parallel execution across multiple nodes. Additionally, these orchestration tools balance workloads, allowing agents to perform efficiently even in resource-intensive environments.
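The idea of spreading many tasks over a fixed set of workers can be illustrated on a single machine. The sketch below deliberately uses Python's standard-library thread pool as a stand-in for a cluster framework such as Ray: workers pull tasks from a shared queue, so a worker that finishes early picks up the next task. The run_task function and its simulated durations are hypothetical.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Dict, Tuple


def run_task(task_id: int) -> Tuple[int, int]:
    """Toy task with an uneven duration, returning its id and a computed value."""
    time.sleep(0.01 * (task_id % 3))  # simulate tasks of different cost
    return task_id, task_id * task_id


results: Dict[int, int] = {}
with ThreadPoolExecutor(max_workers=4) as pool:
    # Twelve tasks share four workers; idle workers take the next queued task.
    futures = [pool.submit(run_task, task_id) for task_id in range(12)]
    for future in as_completed(futures):  # handle results as they finish
        task_id, value = future.result()
        results[task_id] = value

print(sorted(results.items()))
```

A distributed framework applies the same pattern across processes and machines, adding scheduling, data transfer, and fault tolerance that this sketch does not attempt to show.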

Interoperability across heterogeneous AI frameworks and agents

In practice, agents are rarely built on the same framework or language. Consequently, AI ecosystem packages provide interoperability layers, middleware, and APIs that bridge these gaps. As a result, agents designed for computer vision, reinforcement learning, or natural language processing can work together seamlessly. This interoperability ultimately leads to more flexible and adaptive multi-agent ecosystems.
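One common way to build such an interoperability layer is the adapter pattern: each component keeps its native API, and a thin wrapper exposes a shared interface. In the sketch below, every class name and the dictionary-based handle interface are hypothetical, and the two "models" are trivial stand-ins rather than real vision or language models.

```python
from typing import Dict, List, Protocol


class Agent(Protocol):
    """Common interface that every adapter exposes (hypothetical)."""

    def handle(self, request: Dict[str, str]) -> Dict[str, str]: ...


class KeywordVisionModel:
    """Stand-in for a vision component with its own native API."""

    def classify(self, image_name: str) -> str:
        return "cat" if "cat" in image_name else "unknown"


class TemplateTextModel:
    """Stand-in for a language component with a different native API."""

    def generate(self, prompt: str, max_words: int) -> List[str]:
        return prompt.split()[:max_words]


class VisionAdapter:
    def __init__(self, model: KeywordVisionModel) -> None:
        self._model = model

    def handle(self, request: Dict[str, str]) -> Dict[str, str]:
        return {"label": self._model.classify(request["image"])}


class TextAdapter:
    def __init__(self, model: TemplateTextModel) -> None:
        self._model = model

    def handle(self, request: Dict[str, str]) -> Dict[str, str]:
        words = self._model.generate(f"A photo of a {request['label']}", max_words=5)
        return {"caption": " ".join(words)}


def run_pipeline(agents: List[Agent], request: Dict[str, str]) -> Dict[str, str]:
    """Pass the request through each agent, merging every agent's output into it."""
    for agent in agents:
        request = {**request, **agent.handle(request)}
    return request


result = run_pipeline(
    [VisionAdapter(KeywordVisionModel()), TextAdapter(TemplateTextModel())],
    {"image": "cat_01.png"},
)
print(result)  # {'image': 'cat_01.png', 'label': 'cat', 'caption': 'A photo of a cat'}
```

The pipeline only depends on the shared handle method, so a component written in another framework can be swapped in by writing one more adapter.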

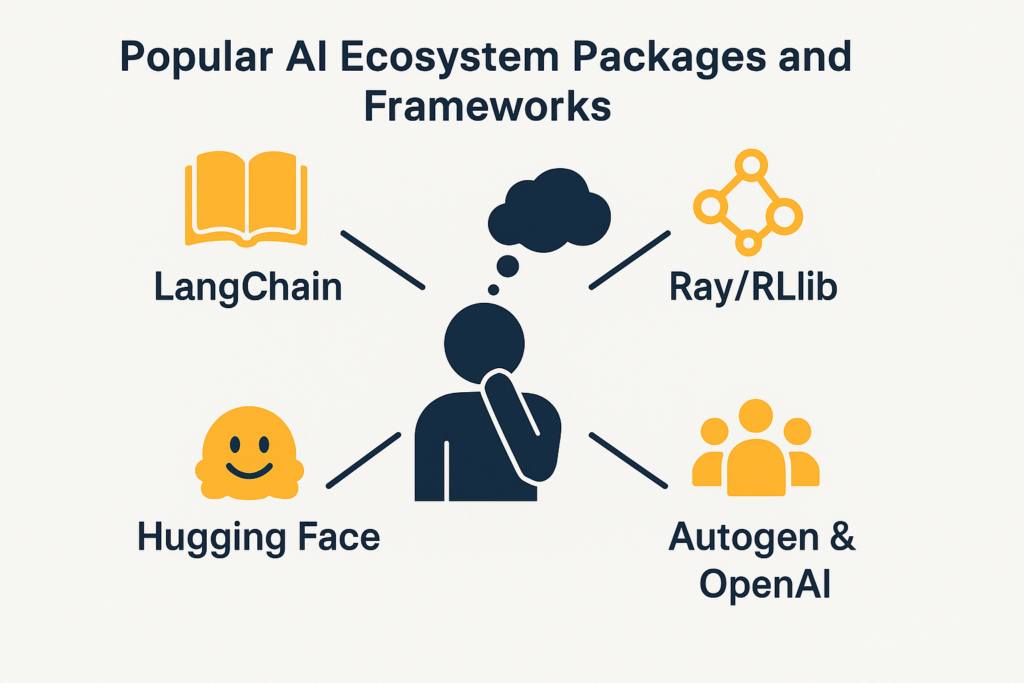

Popular AI Ecosystem Packages and Frameworks

LangChain and LlamaIndex for LLM-driven multi-agent orchestration

LangChain and LlamaIndex have become popular ecosystem packages for applications built on large language models (LLMs). These frameworks simplify the orchestration of multi-agent workflows by offering structured pipelines for reasoning, retrieval, and tool usage. In addition, they allow developers to integrate multiple LLMs into intelligent systems without designing communication protocols from scratch.

Ray and RLlib as distributed AI ecosystem frameworks

Ray is a distributed computing framework that provides a foundation for scaling multi-agent systems. Its ecosystem includes RLlib, a reinforcement learning library that supports parallel training across clusters, including multi-agent environments. Developers can therefore run large-scale simulations in which numerous agents collaborate or compete without writing the distribution logic themselves.

Hugging Face platform for model integration and AI toolkits

The Hugging Face ecosystem offers a unique combination of model repositories, APIs, and libraries, including Transformers. Through this platform, agents can access pre-trained models for vision, text, or speech and integrate them directly into workflows. Moreover, the collaborative nature of Hugging Face encourages sharing, which strengthens the overall ecosystem of multi-agent development.

Microsoft AutoGen and OpenAI multi-agent collaboration packages

Recent advancements in multi-agent orchestration come from Microsoft AutoGen and OpenAI's ecosystem tools. These packages offer flexible APIs for defining roles, assigning goals, and managing agent-to-agent collaboration. As a result, they make the development of complex multi-agent frameworks more accessible while maintaining reliability and scalability.

Challenges in Building AI Ecosystem Packages and Frameworks

Standardization and interoperability across AI frameworks

One of the most significant challenges in developing AI ecosystem packages is the lack of standardization. Different frameworks and platforms often adopt unique protocols, which makes integration difficult. Consequently, ensuring seamless interoperability between agents built on heterogeneous toolkits remains an ongoing issue.

Resource efficiency and scalability in multi-agent systems

Multi-agent systems are inherently resource-intensive, as numerous agents may run simultaneously. Therefore, ecosystem packages must focus on optimizing memory usage, computational efficiency, and communication overhead. In addition, scalability concerns often arise when transitioning from experimental prototypes to large-scale deployments.

Security, trust, and safe interaction in AI ecosystems

Security is another critical concern when building AI frameworks and orchestration tools. Agents must exchange information reliably, but malicious interference or faulty coordination can compromise entire systems. Moreover, trust mechanisms such as authentication, encryption, and verification become essential to maintain safe collaboration across distributed platforms.
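Message authentication and verification can be sketched with Python's standard hmac module. The example assumes the agents already share a secret key, which is a simplification; the key value, the envelope layout, and the sign and verify helper names are hypothetical. An HMAC provides authenticity and integrity only, so confidentiality would still require encryption such as TLS on the transport.

```python
import hashlib
import hmac
import json
from typing import Any, Dict

SHARED_KEY = b"demo-key-not-for-production"  # assumption: a pre-shared secret


def sign(payload: Dict[str, Any], key: bytes = SHARED_KEY) -> Dict[str, str]:
    """Wrap a payload in an envelope carrying an HMAC-SHA256 tag."""
    body = json.dumps(payload, sort_keys=True)
    tag = hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify(envelope: Dict[str, str], key: bytes = SHARED_KEY) -> Dict[str, Any]:
    """Return the payload if the tag matches, otherwise raise ValueError."""
    expected = hmac.new(key, envelope["body"].encode("utf-8"), hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through comparison timing.
    if not hmac.compare_digest(expected, envelope["tag"]):
        raise ValueError("message failed authentication")
    return json.loads(envelope["body"])


envelope = sign({"agent": "agent-1", "action": "update_weights"})
print(verify(envelope))  # {'action': 'update_weights', 'agent': 'agent-1'}

# A message altered in transit no longer matches its tag.
tampered = dict(envelope, body=envelope["body"].replace("agent-1", "agent-9"))
try:
    verify(tampered)
except ValueError as error:
    print("rejected:", error)
```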

Future Directions of AI Ecosystem Packages and Multi-Agent Frameworks

Open-source collaboration, platforms, and shared standards

The future of AI ecosystem packages will be shaped heavily by open-source collaboration. As more developers contribute, frameworks and platforms are likely to converge toward shared standards. Furthermore, this collective effort will lower adoption barriers and accelerate innovation in multi-agent ecosystems.

Edge computing, cloud-native integration, and distributed AI

Another major direction involves tighter integration with edge and cloud-native infrastructures. AI toolkits and orchestration frameworks will need to support real-time decision-making at lower latency, and hybrid deployments across cloud and edge could help multi-agent systems remain both scalable and responsive.

Towards autonomous AI agents and self-organizing ecosystems

Looking further ahead, AI ecosystem packages will likely support autonomous, self-organizing multi-agent frameworks, in which agents discover resources, negotiate roles, and adapt to dynamic environments without constant human supervision. This shift could transform AI from statically programmed systems into evolving intelligent ecosystems.

Code Demo: Orchestrating Multiple Agents with Ray

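    import time

    import ray

    # Initialize Ray (starts a local runtime when no cluster address is given)
    ray.init()


    @ray.remote
    def agent(name, task):
        """Simulate an agent that works on one task for two seconds."""
        print(f"{name} started task: {task}")
        time.sleep(2)
        return f"{name} completed {task}"


    # Launch the agents; each .remote() call returns an object reference immediately
    agents = [
        agent.remote("Agent-1", "Data Preprocessing"),
        agent.remote("Agent-2", "Model Training"),
        agent.remote("Agent-3", "Evaluation"),
    ]

    # Collect results; ray.get blocks until every task has finished
    results = ray.get(agents)
    print("Results:", results)

    ray.shutdown()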

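Explanation:

- ray.init() starts a local Ray runtime or, when given a cluster address, connects to an existing cluster.
- The @ray.remote decorator turns agent into a remote task. Each agent.remote(...) call returns an object reference immediately, and the three tasks run in parallel.
- ray.get(agents) blocks until all tasks have finished and returns their results in submission order, demonstrating how AI ecosystem packages facilitate orchestration in multi-agent systems.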

Conclusion

AI ecosystem packages provide the essential building blocks for developing multi-agent systems. They integrate libraries, orchestration modules, and deployment support into cohesive frameworks that enhance scalability, interoperability, and robustness. As AI applications continue to evolve, the importance of these packages will only grow, enabling researchers and engineers to design intelligent, distributed ecosystems that can operate autonomously and adapt to real-world challenges.

References

- https://www.ray.io/

- https://www.langchain.com/

- https://huggingface.co/

- https://github.com/microsoft/autogen