Introduction

What is DataHub?

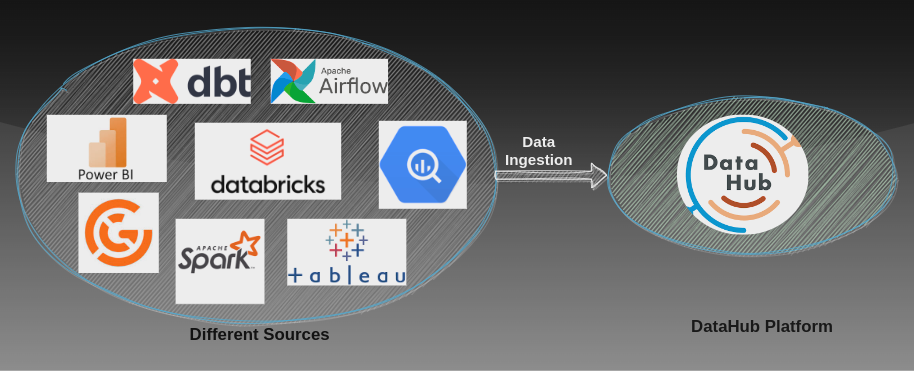

DataHub is a modern data catalog built for end-to-end data observability, data discovery, and data governance. It acts as a centralized system for data definition, delivery, and storage, and it can ingest metadata from many different sources such as Databricks, Postgres, Spark, and BigQuery.

Key Objectives of DataHub

- Search all corners of the data stack

- Track end-to-end lineage

- Manage Entity Ownership

- Govern with Tags, Glossary Terms, and Domains

- Create Users, Access Policies & Groups

- Data Quality Checks

Core Components of DataHub

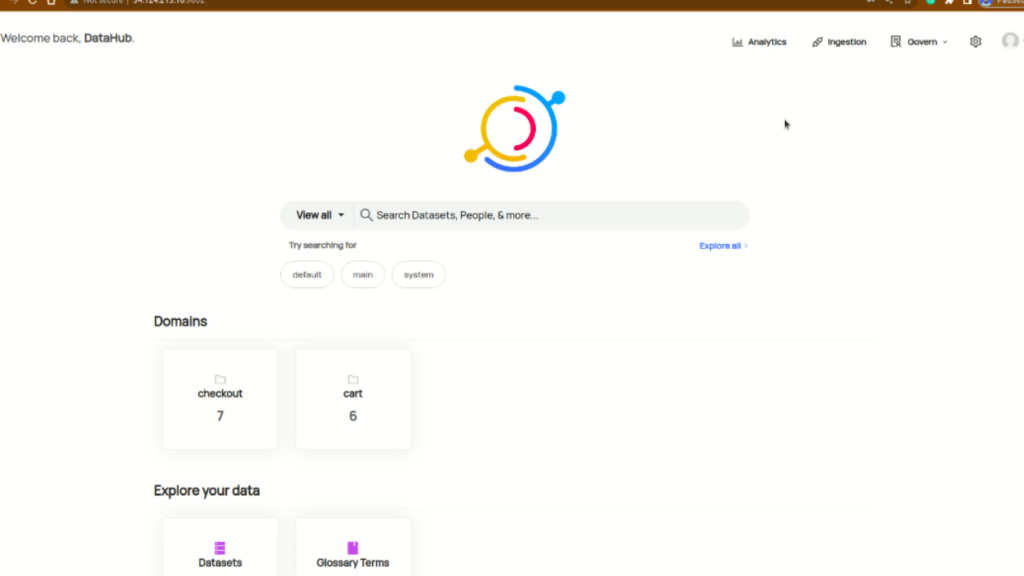

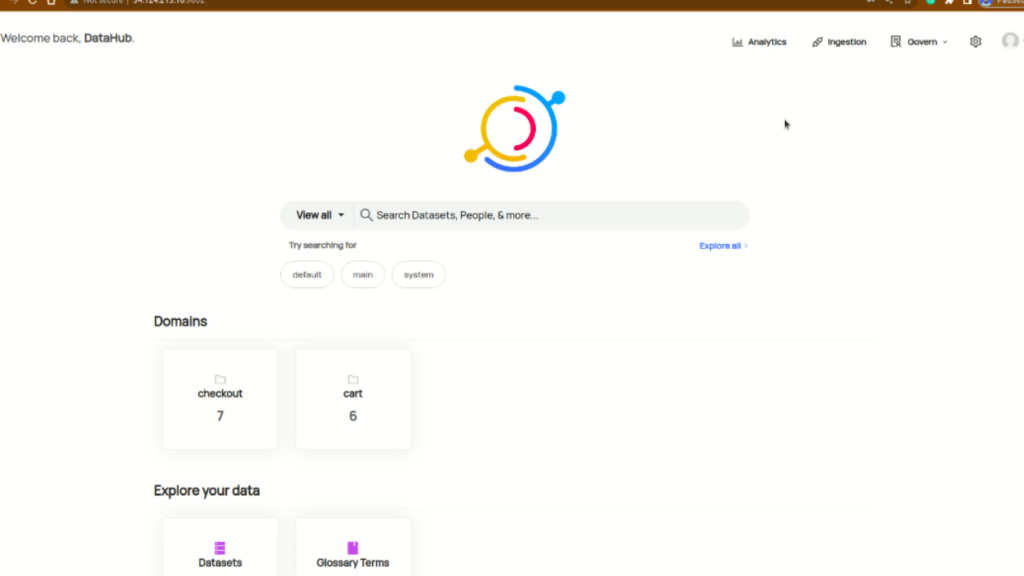

DataHub User Interface

The DataHub User Interface is a user-friendly tool for managing data efficiently. It allows users to invite others, create groups, and assign specific roles and permissions for controlled data access. The interface also supports data ingestion and the implementation of policies to keep data handling organized and secure. Overall, it provides a straightforward way for organizations to manage their data.

Some of the basic terms are:

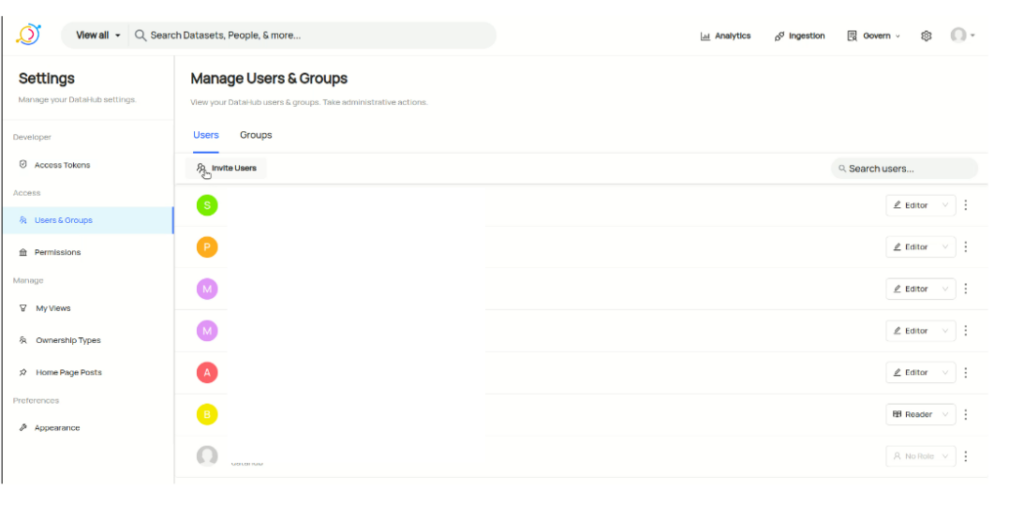

1. Users

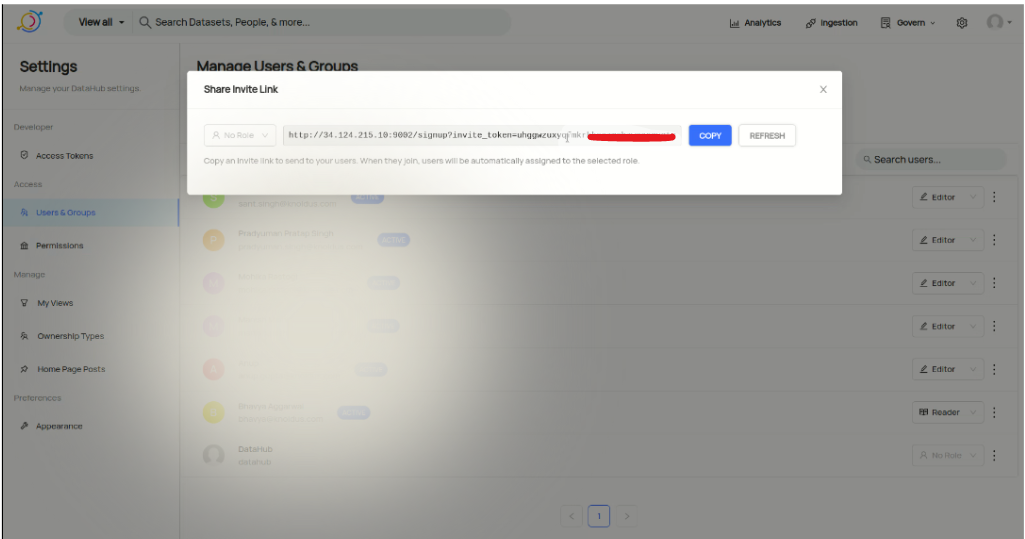

DataHub lets you add users to the platform. To add a new user, click the settings icon on the home page, then click Users & Groups in the left panel.

Now click Invite Users, copy the URL, and share it with the user you want to invite to the platform. You can also assign a role such as Editor or Reader.

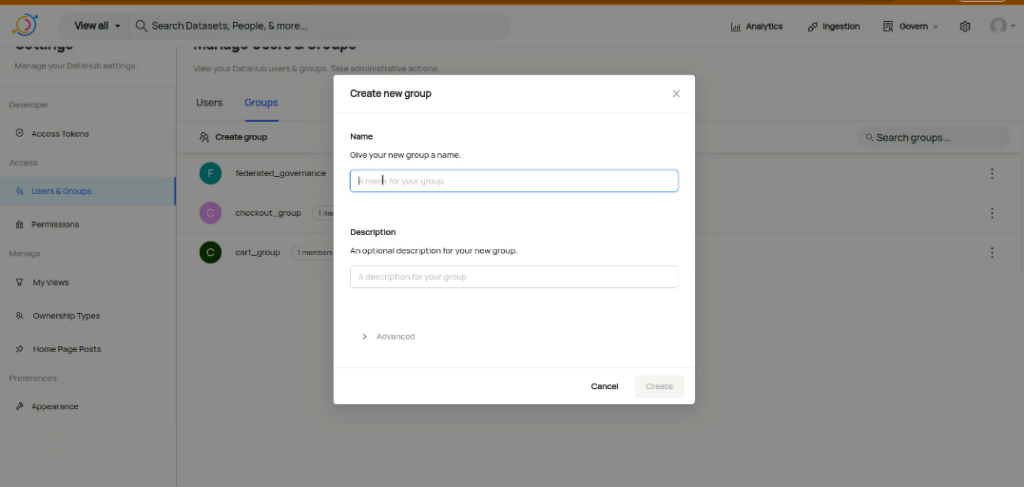

2. Group

A group is a set of two or more users who work on the same functionality or as a team. To create a group, click Create Group, name the group, describe the purpose it was created for, and click Create.

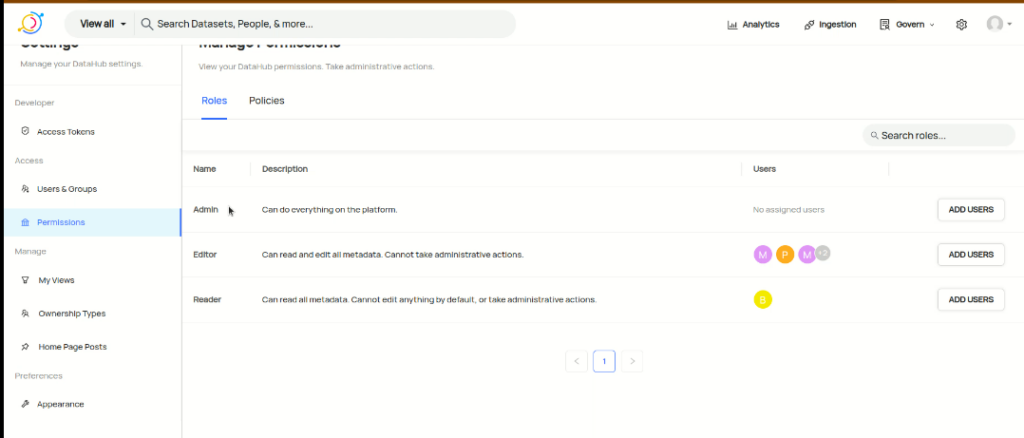

3. Roles & Permissions

In DataHub, we have to define each user's roles and permissions on the data that is ingested from other sources.

There are three main roles:

- Admin: The user who has all permissions.

- Editor: The user who can manage the data, its owners, etc.

- Reader: The user who can view the data, its quality, data lineage, etc.

Data Ingestion

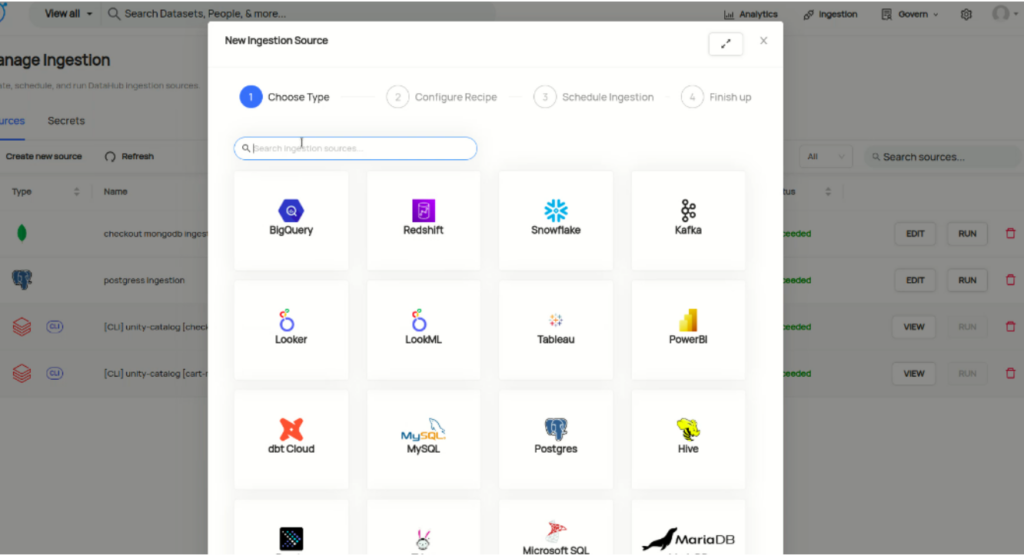

DataHub supports many different sources from which we can ingest data, such as Databricks, BigTable, Hive, Postgres, and Great Expectations. Once the data is ingested, we can inspect it along with its lineage, quality, schema, owner, etc.

Types of Data Ingestion Sources

- Databricks

- Apache Spark

- Great Expectations

- BigQuery

- DynamoDB

- Postgres

- PowerBI and many more

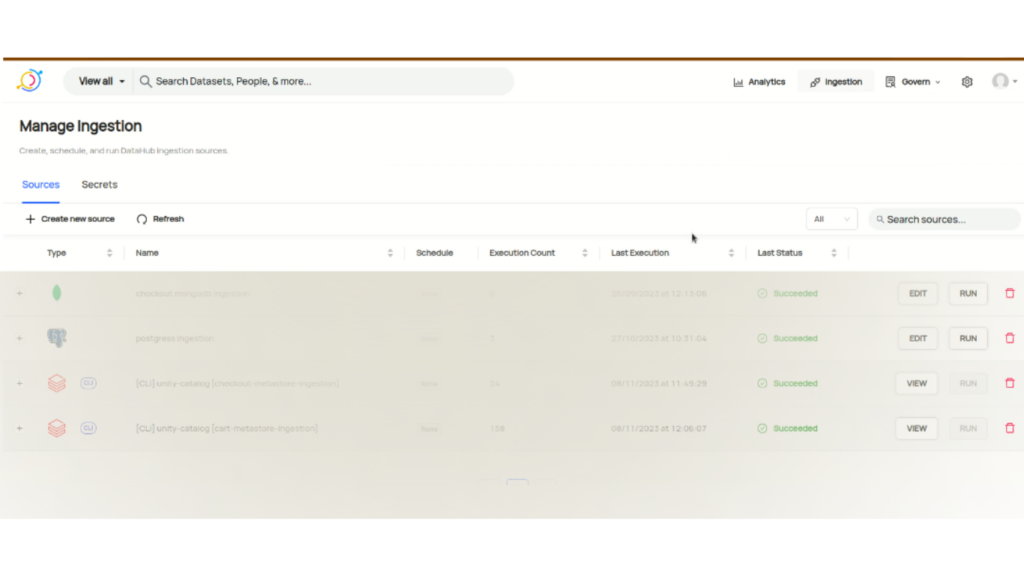

Ingesting data from UI

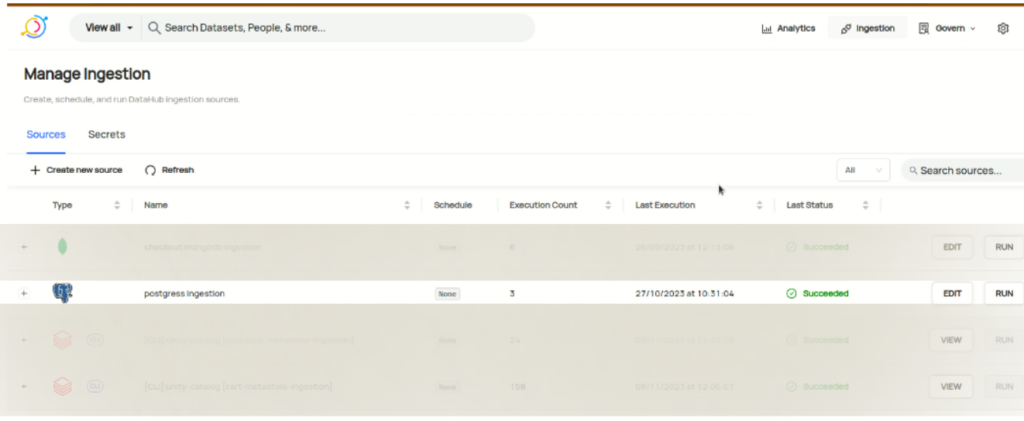

1. Click on the Ingestion tab in the DataHub UI.

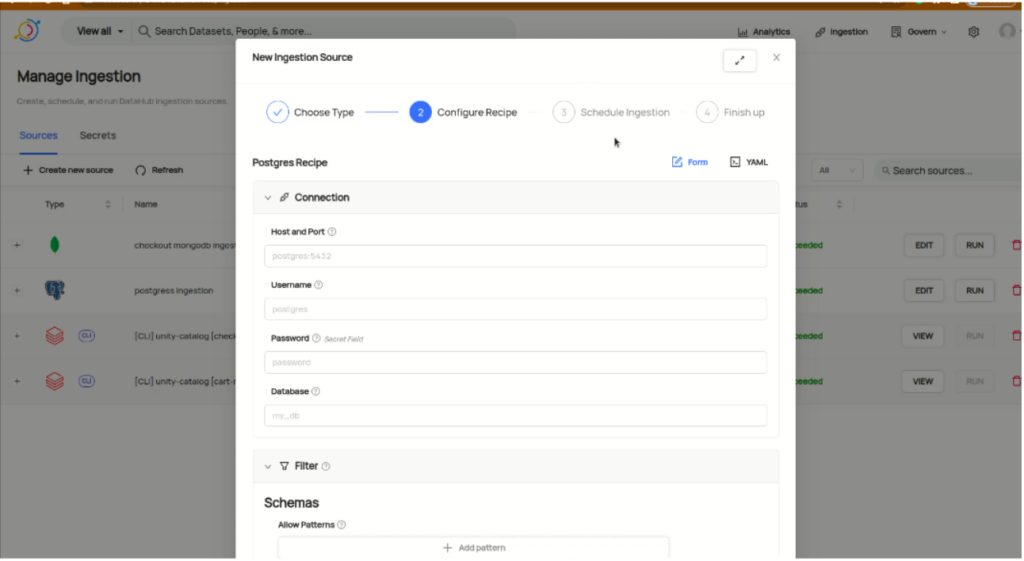

2. Click on Create New Source. It will suggest different sources. We will use a Postgres data source as an example.

3. Search for Postgres, then specify the configuration of the Postgres source, such as host and port, username, password, and database name.

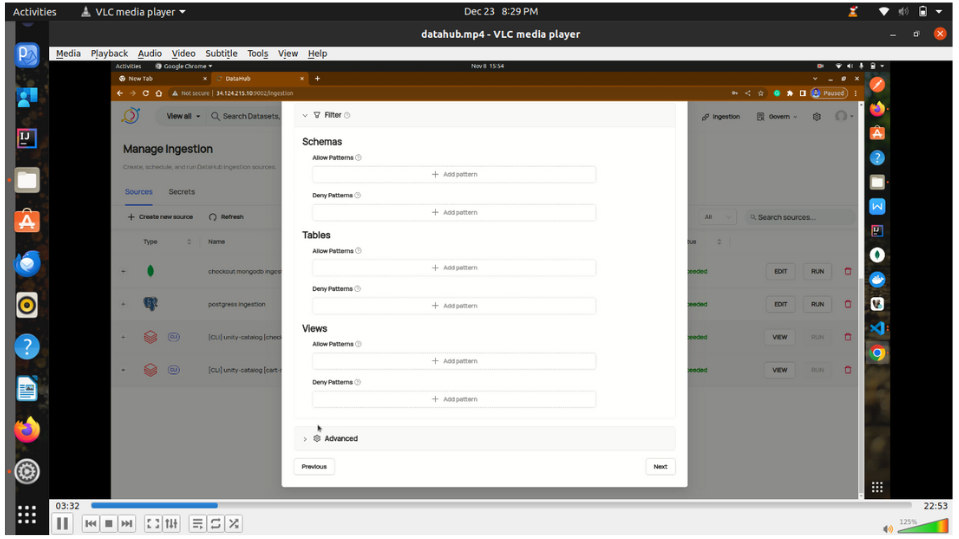

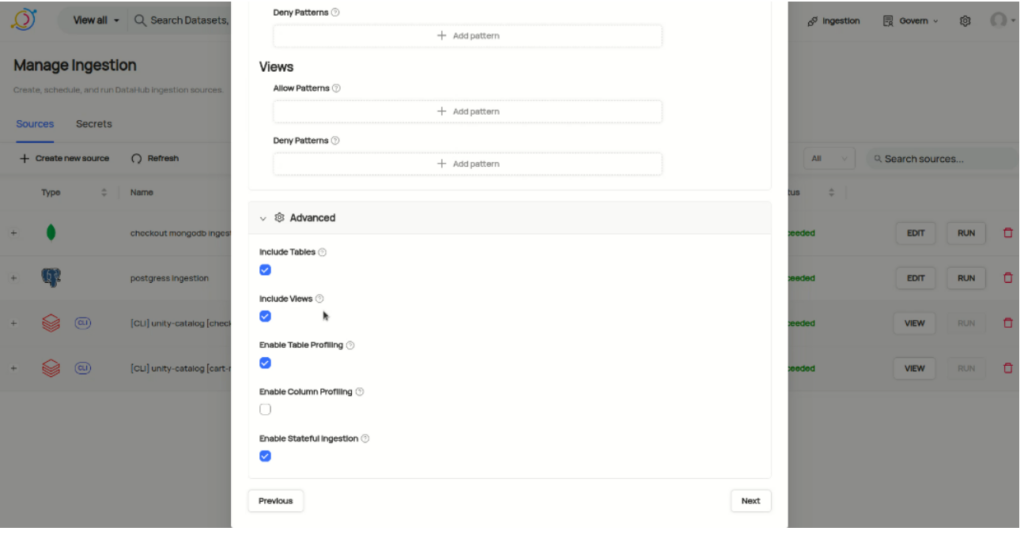

4. In this part, you can specify which tables, views, and schemas you want to allow or deny for ingestion.

5. In the advanced options, you can enable or disable tables and views for data lineage, and you can also enable profiling (a sample of the data) on the ingested data.

Click Next, set the schedule, and finish.

6. Now you can click Run and check the status of the ingestion.

Similarly, you can do it for other data sources as per their configuration.
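The UI steps above produce an ingestion recipe, which can also be written by hand. The following is a minimal sketch of what such a recipe might look like for the Postgres example, not the exact output of the UI. All values (host, database name, user, patterns, server address) are hypothetical placeholders, and the password is assumed to come from an environment variable named POSTGRES_PASSWORD.

```yaml
# Sketch of a Postgres ingestion recipe; every value below is a placeholder.
source:
  type: postgres
  config:
    host_port: "localhost:5432"        # host and port (step 3)
    database: my_database              # hypothetical database name
    username: datahub_reader           # hypothetical user
    password: "${POSTGRES_PASSWORD}"   # assumed environment variable
    schema_pattern:                    # step 4: allow or deny by regex
      allow:
        - 'public'
    table_pattern:
      deny:
        - '.*\.tmp_.*'                 # hypothetical: skip temporary tables
    include_tables: true               # step 5: advanced options
    include_views: true
    profiling:
      enabled: false                   # set to true to profile ingested data
sink:
  type: datahub-rest
  config:
    server: "http://localhost:8080"    # assumed DataHub server address
```

Saved as recipe.yaml (a hypothetical file name), a recipe like this could be run once from the command line with `datahub ingest -c recipe.yaml`, assuming the DataHub CLI and its Postgres plugin are installed. Scheduling, as set in the UI, is not part of this sketch.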

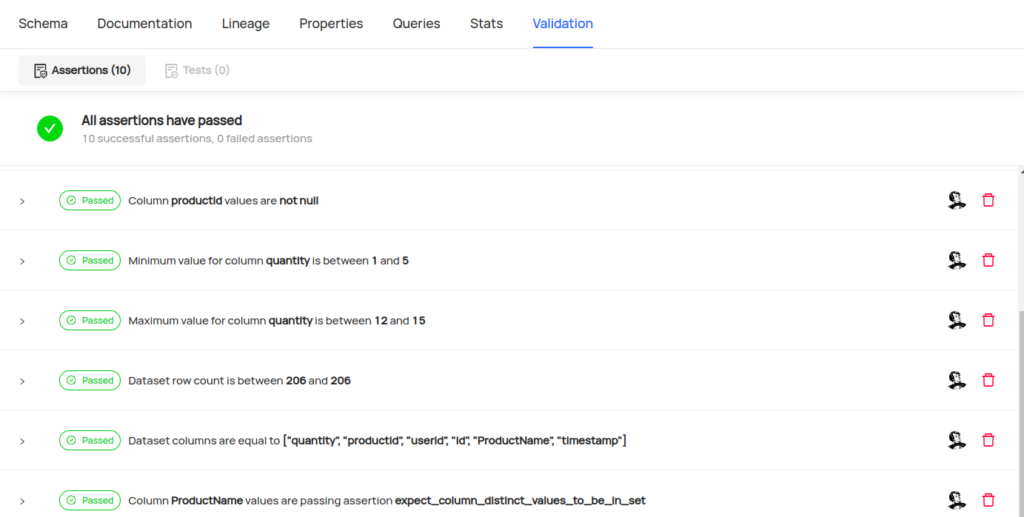

Monitoring & Reporting Tools

Many monitoring and reporting tools can be used with DataHub. Here we discuss Great Expectations, which can be used to monitor data quality while ingesting data from other sources. It also generates records of which data is valid and which is not.

You can refer to this link to integrate Great Expectations with other data sources for quality checks.

To integrate Great Expectations with the DataHub platform, add the following action to the action_list of your checkpoint YAML file (see the link above for details).

    - name: datahub_action
      action:
        module_name: datahub.integrations.great_expectations.action
        class_name: DataHubValidationAction
        server_url: http://35.240.***.***:8080
        platform_alias: databricks
        platform_instance_map:
          # Take this platform instance from the data asset's page in the DataHub platform
          cart_datasource: 558*******524.metastore.main

Conclusion

DataHub is a modern data catalog offering end-to-end observability, discovery, and governance. It provides centralized user administration, group collaboration, and precise roles and permissions. It supports diverse data sources and gives detailed insight into the lineage, quality, and ownership of ingested data. Its integration with monitoring tools, exemplified by Great Expectations, adds data quality checks on top of the catalog. Together, these features help organizations manage the complexity of modern data ecosystems efficiently.