What is an OpenAI model?

An OpenAI model is a type of artificial intelligence (AI) system trained by OpenAI to understand and generate human-like content — such as text, images, code, or speech.

These models are trained using machine learning and vast amounts of data so they can perform tasks like:

💻 Writing code (like Codex, now part of GPT)

🗣️ Writing and understanding language (e.g. GPT-4)

💬 Having conversations (like ChatGPT)

🖼️ Creating images from text (like DALL·E)

🔊 Turning speech into text (like Whisper)

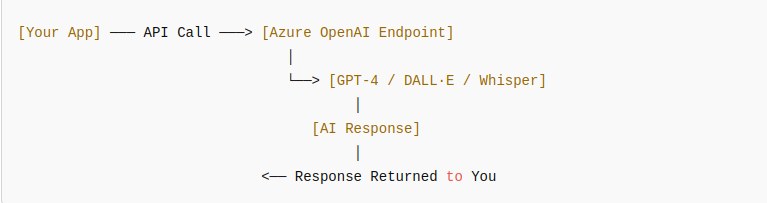

The Azure OpenAI Service is a cloud-based offering from Microsoft Azure that gives developers access to OpenAI’s models, such as GPT-4, GPT-3.5, DALL·E, and Whisper, through secure, scalable APIs.

It brings the capabilities of OpenAI’s models (the ones behind ChatGPT, Copilot, etc.) into the enterprise-grade environment of Microsoft Azure.

Architecture Overview (Simple Flow)

Azure OpenAI models

Based on model functionality, we can group models as follows:

Language Models (Text Understanding & Generation)

These are the core models for natural language processing — great for chatbots, summarization, reasoning, Q&A, content creation, etc.

Some example models:

- gpt-4

- gpt-4-32k

- gpt-4-0125-preview

- gpt-4-turbo-2024-04-09 (supports vision too)

- gpt-4-vision-preview

- gpt-35-turbo

- gpt-35-turbo-16k

Reasoning & Problem-Solving Models

Focused on tasks like complex logic, math, code reasoning, and structured decision-making.

Some example models:

- o3-mini

- o1

- o1-mini

- o1-preview
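
Reasoning models are called through the same chat completions API as the language models, but they may expect slightly different request parameters. The sketch below is only an illustration, assuming that o-series deployments take `max_completion_tokens` in place of `max_tokens` and do not accept a custom `temperature`; check the documentation for your API version. The helper name `build_chat_kwargs` and the prefix check on deployment names are hypothetical.

```python
# Hypothetical helper: build keyword arguments for a chat completions call.
# Assumption: o-series reasoning models expect `max_completion_tokens` and
# do not accept a custom `temperature`.

REASONING_PREFIXES = ("o1", "o3")  # assumed naming convention for deployments


def build_chat_kwargs(deployment: str, prompt: str, token_limit: int = 200) -> dict:
    """Return request arguments suited to the model family of `deployment`."""
    kwargs = {
        "model": deployment,
        "messages": [{"role": "user", "content": prompt}],
    }
    if deployment.startswith(REASONING_PREFIXES):
        kwargs["max_completion_tokens"] = token_limit
    else:
        kwargs["max_tokens"] = token_limit
        kwargs["temperature"] = 0.7
    return kwargs


if __name__ == "__main__":
    print(build_chat_kwargs("o1-mini", "Is 91 a prime number?"))
    print(build_chat_kwargs("gpt-35-turbo", "Is 91 a prime number?"))
```

The returned dictionary could then be unpacked into a call such as `client.chat.completions.create(**kwargs)` on an `AzureOpenAI` client.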

Multimodal Models (Text + Image + Audio)

Models that can process multiple input types (like images and audio alongside text).

Some example models:

- gpt-4o (text, vision, and audio)

- gpt-4o-mini

- gpt-4o-audio-preview

- gpt-4o-realtime-preview

- gpt-4o-mini-audio-preview

- gpt-4o-mini-realtime-preview
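
To make "multiple input types" concrete, here is a minimal sketch of how an image can travel alongside text in a single chat message for a vision-capable model such as gpt-4o: the message `content` becomes a list of typed parts. The helper name `build_vision_message` and the placeholder bytes are assumptions for illustration only.

```python
import base64


def build_vision_message(question: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Return a user message combining a text part and an inline image part."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {
                "type": "image_url",
                # The image is embedded as a base64 data URL.
                "image_url": {"url": f"data:{mime_type};base64,{encoded}"},
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder bytes; in practice, read a real image file in binary mode.
    message = build_vision_message("What does this chart show?", b"not-a-real-image")
    print(message["content"][0])
```

The resulting message would go into the `messages` list of a chat completions request sent to a gpt-4o deployment.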

Image Generation Models

Designed to generate images based on text prompts.

Some example models:

- dall-e-3

- dall-e-2
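
As a rough sketch of what an image request can look like, the function below asks a DALL·E deployment for one image and returns its URL. It assumes `client` is an `openai.AzureOpenAI` instance (openai 1.0 or later) and that the deployment is named `dall-e-3`; the function name `generate_image_url` is hypothetical.

```python
def generate_image_url(client, prompt: str, deployment: str = "dall-e-3") -> str:
    """Request one image for `prompt` and return its URL.

    Assumptions: `client` is an `openai.AzureOpenAI` instance and
    `deployment` is the name given to the DALL-E deployment.
    """
    result = client.images.generate(model=deployment, prompt=prompt, n=1)
    return result.data[0].url
```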

Speech Recognition Models

For transcribing speech into text (speech-to-text tasks).

Some example models:

- whisper
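
A speech-to-text call can be sketched in the same spirit. The function below assumes `client` is an `openai.AzureOpenAI` instance (openai 1.0 or later), that the Whisper deployment is named `whisper`, and that `audio_file` is a file opened in binary mode; the function name `transcribe_audio` is hypothetical.

```python
def transcribe_audio(client, audio_file, deployment: str = "whisper") -> str:
    """Send an open binary audio file to a Whisper deployment; return the text.

    Assumptions: `client` is an `openai.AzureOpenAI` instance and
    `deployment` is the name given to the Whisper deployment.
    """
    result = client.audio.transcriptions.create(model=deployment, file=audio_file)
    return result.text
```

A typical call would open the recording with `open(path, "rb")` and pass the file object as `audio_file`.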

Language Models Comparison – GPT-4 Series vs GPT-3.5 Turbo Series

Note: The comparisons below reflect only the author’s personal experiments.

Some Example Code

Example 1

Let’s start with an example. We will send the same prompt to GPT-4 Turbo and GPT-3.5 Turbo using the OpenAI Python SDK with an Azure OpenAI resource, so you can compare their outputs side by side.

Setup

Make sure you’ve installed the OpenAI Python package, which also provides the Azure OpenAI client:

pip install openai

Example Python Code

from openai import AzureOpenAI

# Azure OpenAI configuration (requires openai>=1.0)
client = AzureOpenAI(
    azure_endpoint="https://<your-resource-name>.openai.azure.com/",
    api_version="2025-03-01-preview",
    api_key="<your-api-key>",
)

# Sample user prompt
prompt = "Explain quantum computing in simple terms a 10-year-old could understand."

# The same messages are sent to both deployments
messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": prompt},
]

# Request to GPT-4 Turbo
response_gpt4 = client.chat.completions.create(
    model="gpt-4-turbo",  # your GPT-4 Turbo deployment name
    messages=messages,
    temperature=0.7,
    max_tokens=200,
)

# Request to GPT-3.5 Turbo
response_gpt35 = client.chat.completions.create(
    model="gpt-35-turbo",  # your GPT-3.5 Turbo deployment name
    messages=messages,
    temperature=0.7,
    max_tokens=200,
)

# Print the results
print("GPT-4 Turbo Response:")
print(response_gpt4.choices[0].message.content)
print("\n---\n")
print("GPT-3.5 Turbo Response:")
print(response_gpt35.choices[0].message.content)
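
If you also want a rough sense of response time, the comparison can be wrapped in a small loop. This is a sketch under stated assumptions: `client` is an `openai.AzureOpenAI` instance (openai 1.0 or later), the entries in `deployments` are your own deployment names, and the helper name `compare_deployments` is hypothetical. A single timing is noisy, so treat the numbers as indicative only.

```python
import time


def compare_deployments(client, deployments, prompt, max_tokens=200):
    """Send `prompt` to each deployment; return {name: (answer, seconds)}."""
    messages = [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": prompt},
    ]
    results = {}
    for name in deployments:
        start = time.perf_counter()
        response = client.chat.completions.create(
            model=name,
            messages=messages,
            temperature=0.7,
            max_tokens=max_tokens,
        )
        elapsed = time.perf_counter() - start  # wall-clock time for one request
        results[name] = (response.choices[0].message.content, elapsed)
    return results
```

For example, `compare_deployments(client, ["gpt-4-turbo", "gpt-35-turbo"], prompt)` would return both answers together with the time each request took.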

Comparing the simulated results

| | GPT-4 Turbo | GPT-3.5 Turbo |
| --- | --- | --- |
| Response | Imagine your computer is like a superhero solving puzzles. Normal computers use tiny light switches called bits that are either ON (1) or OFF (0). But quantum computers use super special switches called qubits that can be ON, OFF, or both at the same time! | Quantum computers are special kinds of computers that don’t just use 1s and 0s like regular ones. They use something called “qubits” that can be both 1 and 0 at the same time. |
| Highlights | Uses analogies (maze, superhero); engaging and imaginative; easy for a child to follow | Accurate but less playful; straightforward explanation; lacks storytelling or deeper metaphor |

Now let’s look at another example, this time with a coding-related prompt.

Example 2

Prompt

Write a Python function that takes a list of numbers and returns the second largest number. Explain your code.

Simulated Output

GPT-4 Turbo Response

def second_largest(numbers):
    unique_numbers = list(set(numbers))  # Remove duplicates
    if len(unique_numbers) < 2:
        return None  # Not enough distinct numbers
    unique_numbers.sort(reverse=True)
    return unique_numbers[1]

GPT-3.5 Turbo Response

def second_largest(numbers):
    numbers.sort()
    return numbers[-2]

Let’s compare!

| | GPT-4 Turbo | GPT-3.5 Turbo |
| --- | --- | --- |
| Explanation | This function first removes duplicates using set(), then checks if there are at least two unique numbers. It sorts the list in descending order and returns the second item. If there aren’t enough unique values, it returns None. | This function sorts the list and returns the second last element, which is the second largest. |
| Strengths/Weaknesses | Handles duplicates; includes edge-case handling; clear, concise explanation | Doesn’t handle duplicates correctly; doesn’t check for list length or uniqueness; short explanation, no edge-case coverage |
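
The difference is easy to check by running both simulated answers on a few inputs. In the sketch below, the two functions are copied from the outputs above and renamed `second_largest_gpt4` and `second_largest_gpt35` purely as labels; the test inputs are chosen here for illustration.

```python
def second_largest_gpt4(numbers):
    unique_numbers = list(set(numbers))  # Remove duplicates
    if len(unique_numbers) < 2:
        return None  # Not enough distinct numbers
    unique_numbers.sort(reverse=True)
    return unique_numbers[1]


def second_largest_gpt35(numbers):
    numbers.sort()  # Note: this sorts the caller's list in place
    return numbers[-2]


if __name__ == "__main__":
    for case in ([4, 1, 9, 7], [5, 5, 3], [7]):
        try:
            simple = second_largest_gpt35(list(case))  # pass a copy to keep `case` intact
        except IndexError:
            simple = "IndexError"
        print(case, "->", second_largest_gpt4(case), "vs", simple)
```

For `[4, 1, 9, 7]` both versions return 7. For `[5, 5, 3]` the first returns 3 while the second returns 5, because the duplicate maximum is counted twice. For `[7]` the first returns `None` and the second raises `IndexError`.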

Summary Comparison

| Feature | GPT-4 Turbo | GPT-3.5 Turbo |
| --- | --- | --- |
| Creativity | High (fun analogies) | Basic |
| Explanation Depth | Strong and layered; thorough and thoughtful | Simple and brief; less helpful |
| Readability | Designed for a young audience | Still a bit technical |
| Cost & Speed | Slower and costlier | Fast and affordable |
| Correctness | High (handles edge cases) | Prone to error |
| Code Quality | Robust, reusable | Simplified, fragile |

Some general comparisons

| Feature | GPT-4 Series | GPT-3.5 Turbo Series |
| --- | --- | --- |
| Models | gpt-4, gpt-4-32k, gpt-4-turbo | gpt-35-turbo, gpt-35-turbo-16k |
| Reasoning Power | Very high (handles logic, nuance, ambiguity) | Moderate (sometimes makes simple mistakes) |
| Speed | Slower than GPT-3.5 | Very fast and efficient |
| Cost | Higher | Cheaper |
| Context Length | Up to 128k tokens (Turbo) | Up to 16k tokens |
| Training Cutoff | December 2023 (Turbo version) | September 2021 |
| Function Calling | Supported (with tool use + JSON mode) | Supported (with tool use + JSON mode) |
| Use Cases | Advanced assistants, analysis, content creation | Chatbots, summarization, lighter tasks |

Conclusion: When to Use?

| Use Case | Recommended Model |
| --- | --- |
| Deep reasoning, long documents | GPT-4 Turbo |
| Fast & budget-friendly apps | GPT-3.5 Turbo |
| Educational content or creativity | GPT-4 |
| Simple chat or summarization | GPT-3.5 |

Note: The results above are based only on the author’s personal experience and testing; depending on the use case, your results may differ. In addition, AI is developing constantly and new models are released continuously, so these recommendations may no longer hold in the future.