ChatGPT needs no introduction. As of now, the word has been spread like jungle fire. In every aspect of our problem-solving, we are using it in our daily lives. If you want to build it for some serious use case, Let us dive a little deeper into how it works from a developer’s point of view. In this blog, I will walk you through the steps which it takes to build any application around Chat-GPT-based OpenAI API. Let us start with the problem statement.

The problem statement

The problem which we are solving with this is very generic e.g. Extracting entities from a text using the most advanced technology. As simple as extracting educational qualifications from a resume. As of now, you can also query the same using the UI interface provided by the ChatGPT but doing it repeatedly as good as a Recruiter filtering out resumes based on Educational qualification. You can upload a resume and ask ChatGPT to get Education as JSON from the given document.

We will solve this problem using OpenAI API and calling the API provided by OpenAI. OpenAI provides API to interact with its large language models. You just need to specify a bunch of headers and the input text on which you want any result.

How does Chat-GPT API work?

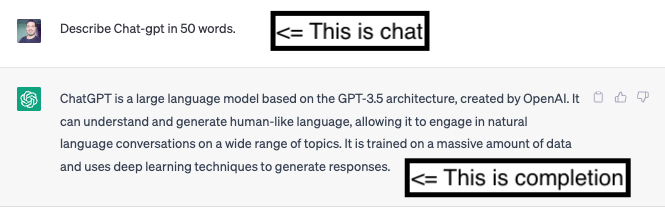

The interaction with large language models provided by OpenAI API interacts with two basic terms. When you submit a request as input it is called a chat Or a prompt. You receive a response from the API that is called a completion.

We interact with the API in a similar fashion. To send any message as a prompt, we can send an HTTP request with a message as a user sending a prompt. For e.g. use the following request to make a curl request:

curl https://api.openai.com/v1/chat/completions \

-H "Content-Type: application/json" \

-H "Authorization: Bearer $OPENAI_API_KEY" \

-d '{

"model": "gpt-3.5-turbo",

"messages": [{"role": "user", "content": "Read the following text as a resume {INSERT RESUME TEXT HERE} and return all the educational qualification as a JSON object!"}],

"temperature": 0.7https://platform.openai.com/account/api-keys

}'To authenticate with OpenAI API, you would need to set OPEN_API_KEY as the environment variable. If you don’t have one, check out https://platform.openai.com/account/api-keys to generate one. Once you sign up, it should look like this if you have generated one

I used a tiny Python Flask app to make a request and It would look like this where you can simply pass your message template as a param to the method:

import openai

def complete(text, template, model_name):

return openai.ChatCompletion.create(

model=model_name,

messages=[{"role": "user",

"content": "Read this Resume text. Resume start\n" + text + "\n Resume end. "

"Give the information based on the above resume in the following format as a valid JSON object:\n" + template +

"Do not include fields in the json if information is not provided"

}]

)- The response would be returned as a JSON as requested as a complete object.

{

"choices": [

{

"finish_reason": "stop",

"index": 0,

"message": {

"content": "{\n \"Education\": [{\n \"Degree\": \"Master\u2019s in Computer Application\",\n \"University\": \"IP University\",\n \"Location\": \"Delhi, India\",\n \"GraduationDate\": \"May 2018\"\n },\n {\n \"Degree\": \"Bachelor\u2019s in Computer Science\",\n \"University\": \"University of Delhi\",\n \"Location\": \"Delhi, India\",\n \"GraduationDate\": \"May 2015\"\n }\n ]\n}",

"role": "assistant"

}

}

],

"created": 1682880768,

"id": "chatcmpl-7B6S86XoXQ9uDhbLYvySwGn9Z4zXn",

"model": "gpt-3.5-turbo-0301",

"object": "chat.completion",

"usage": {

"completion_tokens": 145,

"prompt_tokens": 760,

"total_tokens": 905

}

}The response we have received contains the response as the complete object. The exact path is choices[0].message.conten Let us talk about the additional information which I have highlighted in the JSON above. Here completion_tokens is 145 which is the number of tokens in the response. The prompt_tokens are tokens uploaded as requested? The total toke decides how much money you will spend using OpenAI API. Yes!! you heard it right.

You can refer to the pricing section to know how much it costs to make a request for a particular number of tokens. When you sign up you get sufficient credit to use and prototype whatever you are building around OpenAI.

Limitations and workaround

There are some technical limitations around the number of tokens you can include in a single request. it depends on which language model you are using to make the request. It is limited to 4097 tokens in a single request. Ideally as far as I have tested the API, the shorter and more concise the prompt, the lesser the response time will be. Long request prompts take more time to process and also generate a response.

In case your use case demands more tokens to process the input, you will have to break the use case so that input can be separated into logical chunks and output can be accumulated together.

Making it more interactive

If you think the response time is too much, you can still stream the results in a way that as soon as API starts generating output, you can start receiving it. The total time taken to complete will still be the same as the non-streaming result but it would be more interactive. You can set stream=true in the request body and the results will be streamed. Check full details Here. https://platform.openai.com/docs/api-reference/chat/create

Hope this blog gave you a starting sense to use OpenAI API for a particular use case. Thanks for reading!