Terraform Cloud lets you store your Terraform data. You can keep your tfstate files in workspaces and publish complete module packages, with all their .tf files, to the registry. The registry comes in both public and private forms. Here, we use the private registry to create a Terraform module.

Prerequisites

- A Terraform Cloud account

- An Azure DevOps account

- A GitHub account

- A basic understanding of REST APIs

Basic Setup for Terraform Cloud Module

We set up each environment one by one before creating the pipeline.

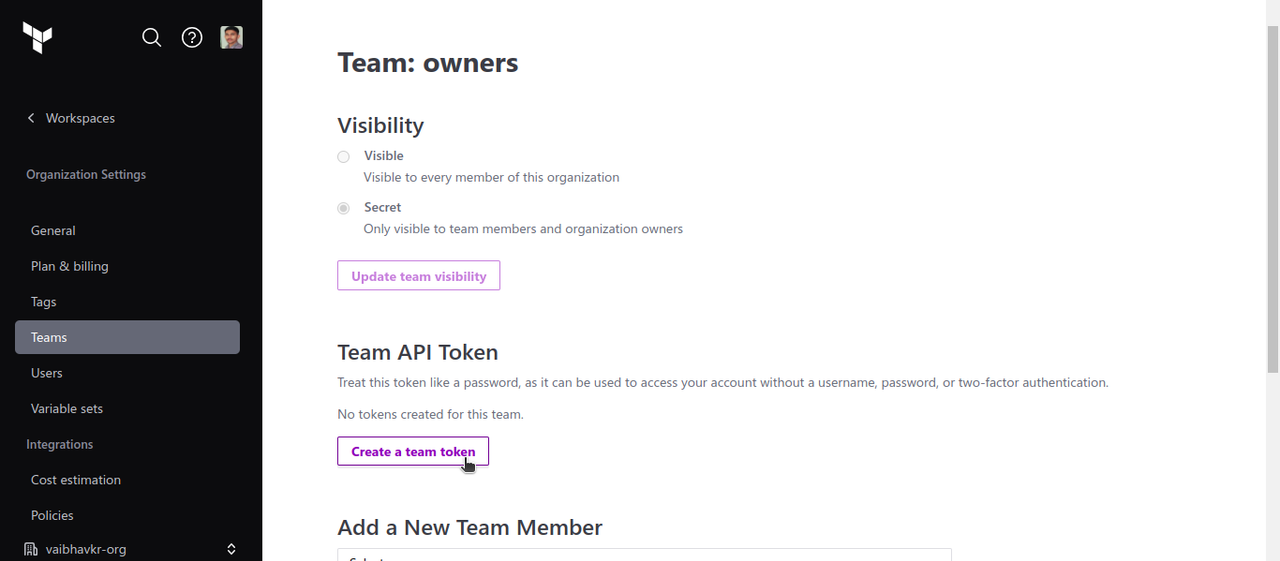

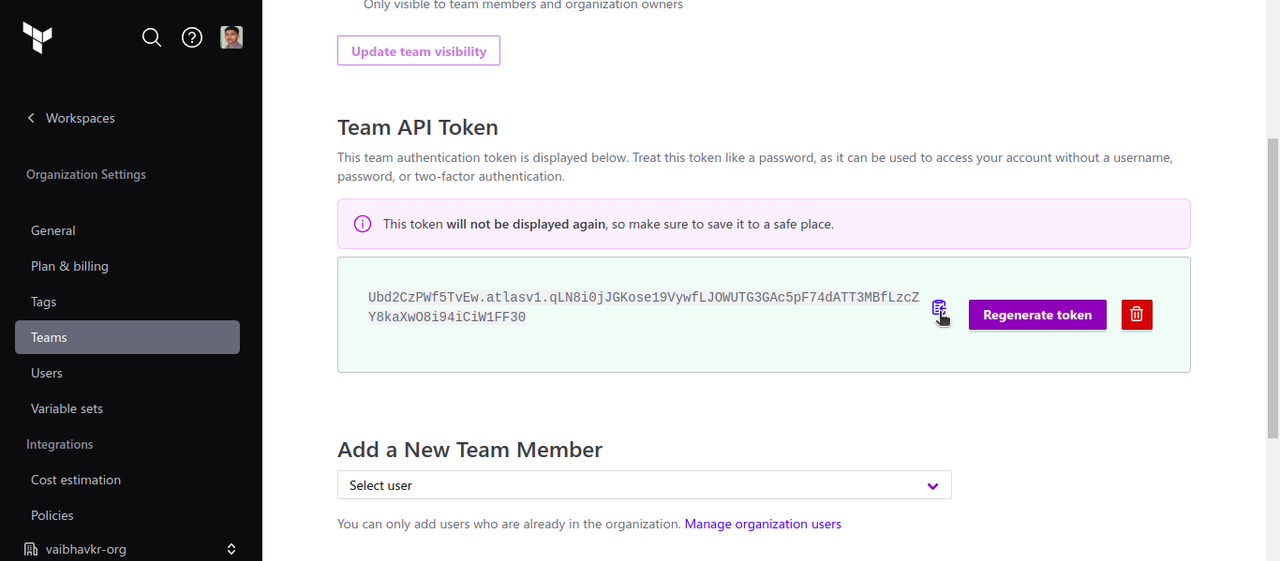

1. Create a token on Terraform Cloud to access the API:

1.1 Go to Settings -> Teams -> Team API Token.

1.2 Click on Create a team token.

1.3 Select the validity period in days, then click to generate the token.

Save the token now for later use, because Terraform Cloud displays it only once.

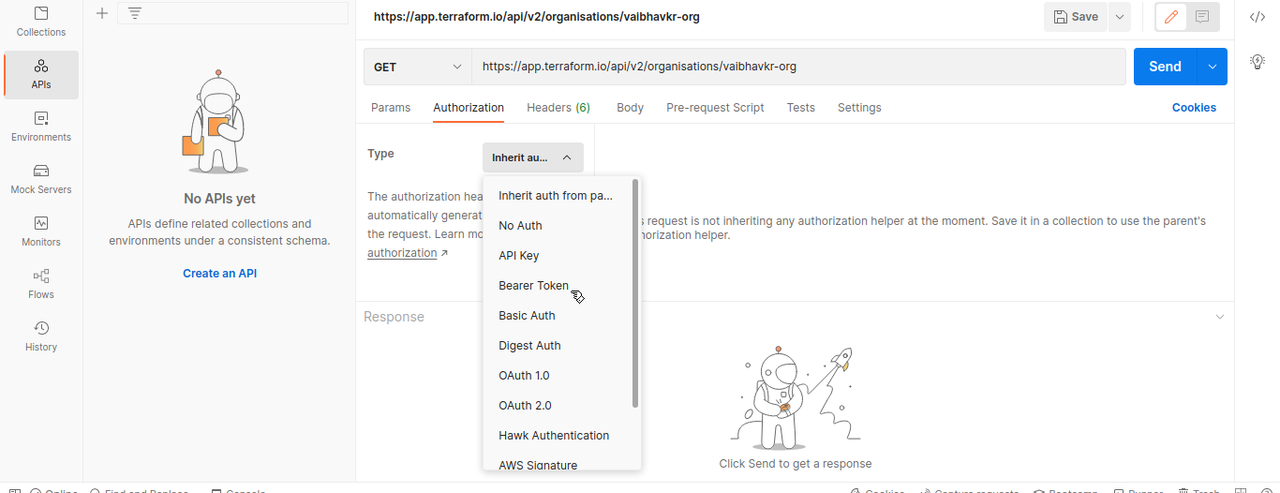

2. Check that the token has permission to create a module:

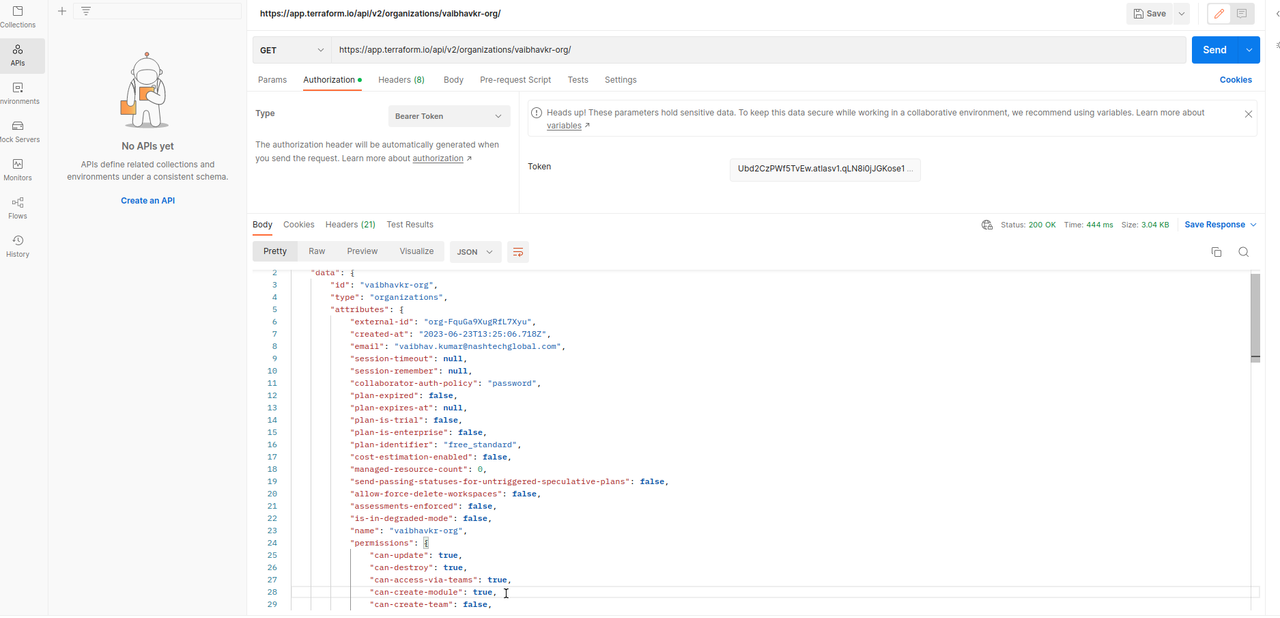

2.1 Send a GET request to see the token's permissions.

In the screenshot, I used Postman to send a GET request to “https://app.terraform.io/api/v2/organizations/<organisation-name>/”.

Use the token generated earlier as the bearer token, and set the header “Content-Type: application/vnd.api+json”.

As you can see, “data.attributes.permissions.can-create-module” is listed as true, so we can create a module with this token. Once created, the module becomes the storage for all versions of the Terraform module packages.
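The same check can be run from a terminal instead of Postman. The following is a minimal sketch, assuming `curl` and `jq` are installed; the function names, the `TF_TOKEN` environment variable, and the `my-org` organization name are placeholders introduced for illustration.

```shell
# Sketch: check whether a Terraform Cloud token may create registry modules.
# TF_TOKEN and the organization name are placeholders (assumptions).

# Build the organization endpoint URL for a given organization name.
org_url() {
  printf 'https://app.terraform.io/api/v2/organizations/%s' "$1"
}

# Print the value of data.attributes.permissions.can-create-module.
can_create_module() {
  curl --silent --fail \
    --header "Authorization: Bearer ${TF_TOKEN}" \
    --header "Content-Type: application/vnd.api+json" \
    "$(org_url "$1")" |
    jq -r '.data.attributes.permissions["can-create-module"]'
}

# Usage (requires network access and a valid token):
#   export TF_TOKEN="<your-team-token>"
#   can_create_module "my-org"
```

If the call prints `true`, the token should be usable for the module creation step.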

3. Connect GitHub to Azure DevOps

3.1 To use the repository that holds all the source code, you need to create a service connection for GitHub.

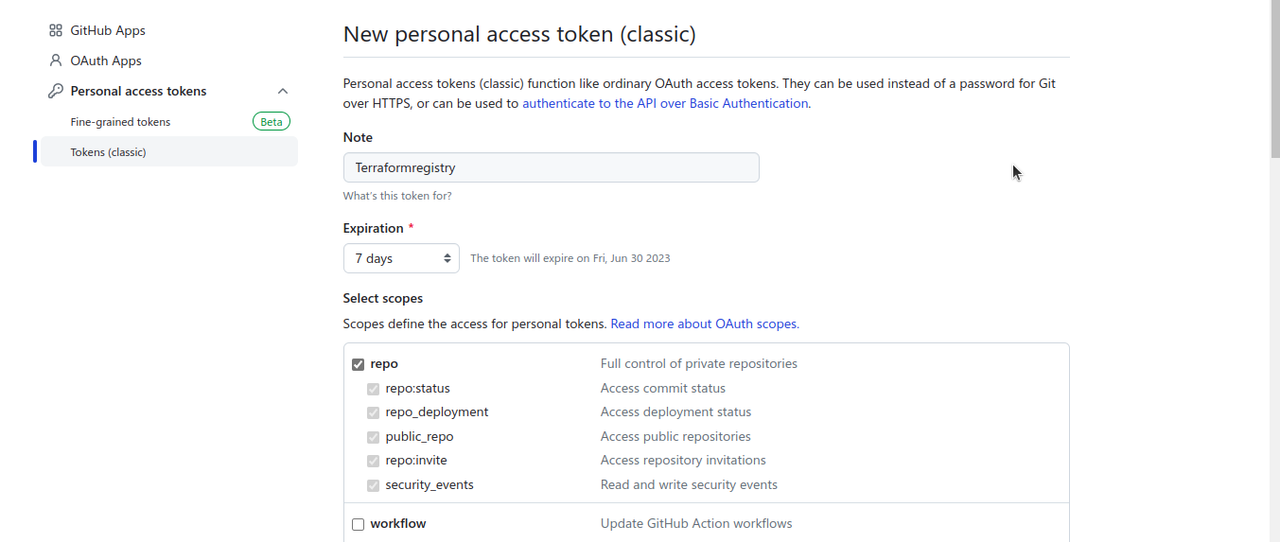

3.2 Create a personal access token on GitHub with repo access.

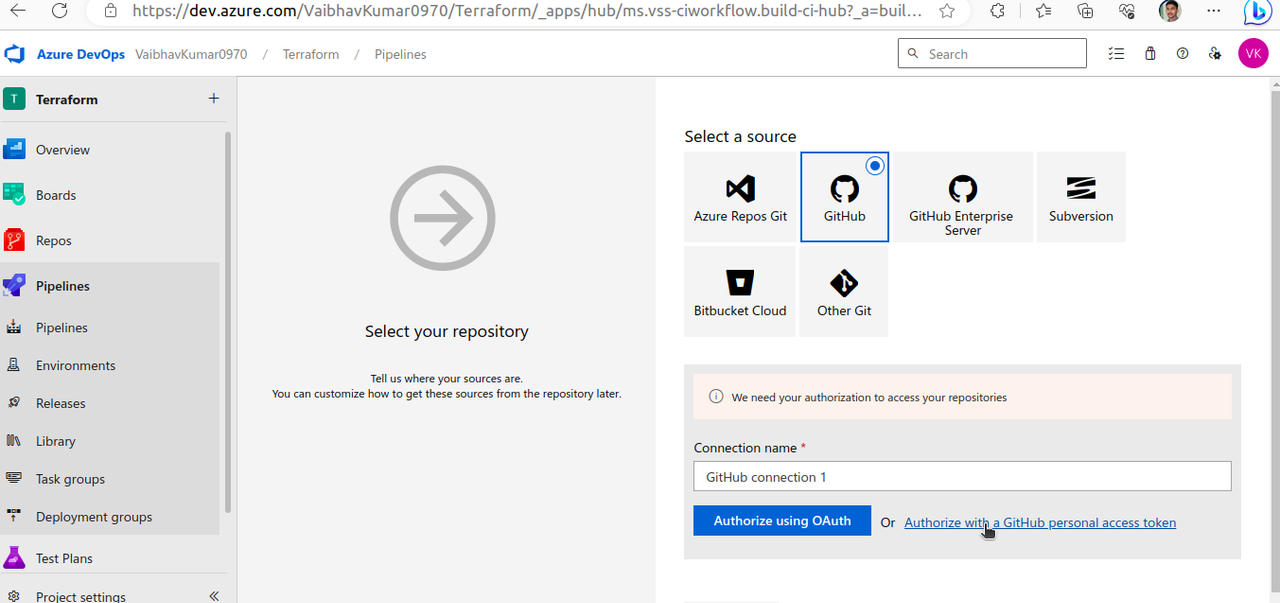

3.3 In the project settings, open Service connections and add one for GitHub.

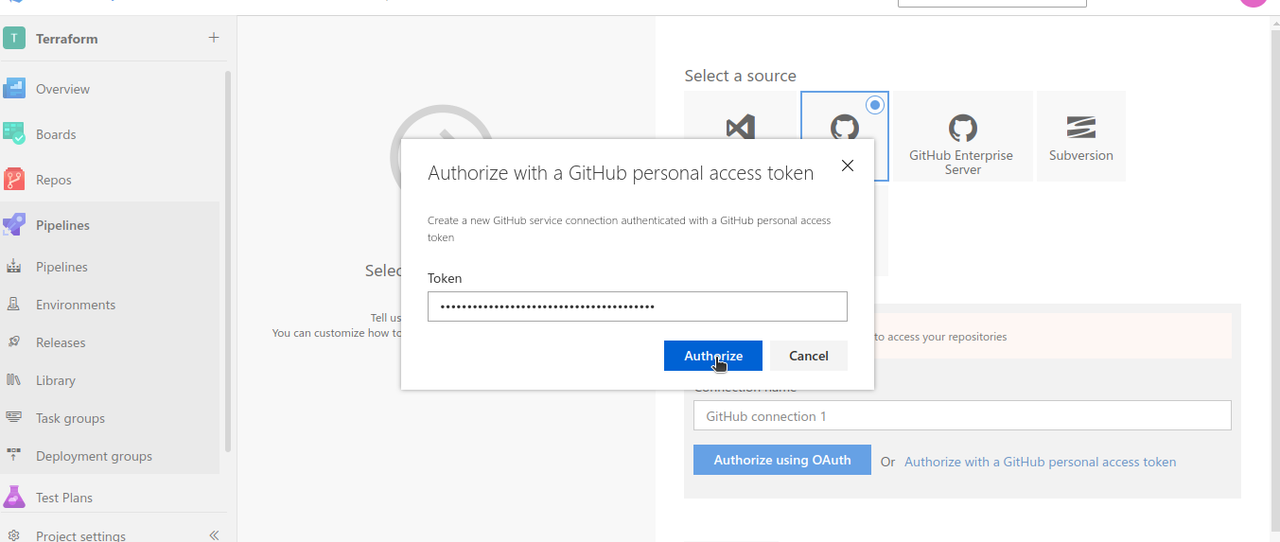

3.4 Select the personal access token method.

3.5 Authorize.

We can also add the token directly while creating the pipeline, instead of going through the project settings as done here.

Creation of Module

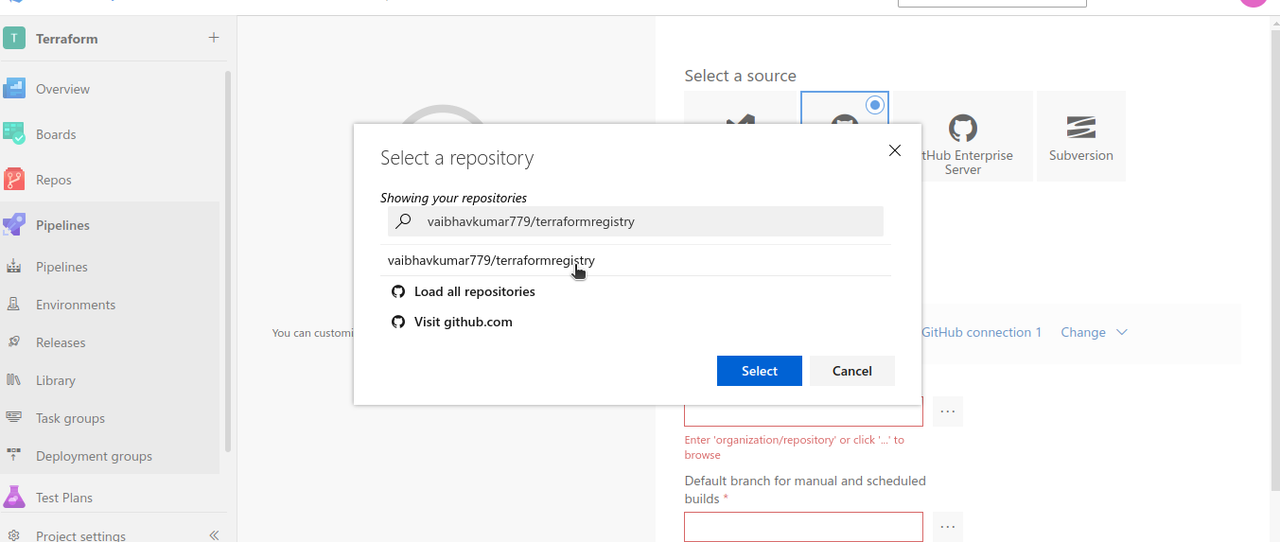

- Add the repository in Azure Pipelines so that it can access the YAML file we created.

- Then adjust the pipeline settings as needed, such as the name and the location of pipeline.yml.

After authorization, Azure DevOps lets you select the required repository.

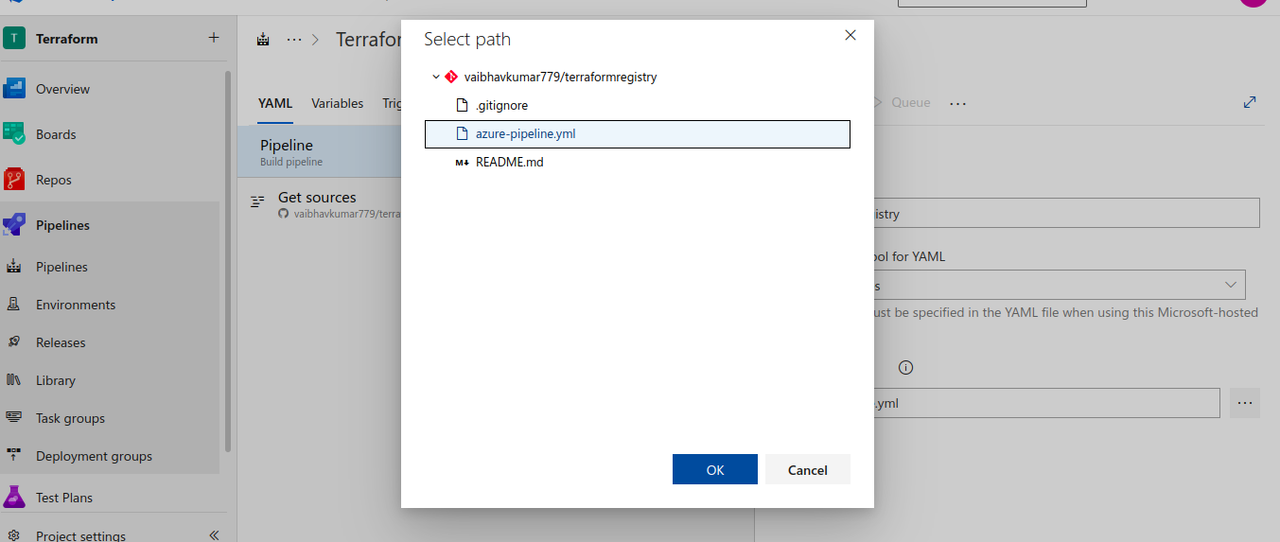

Now add the location of the YAML file and save the pipeline.

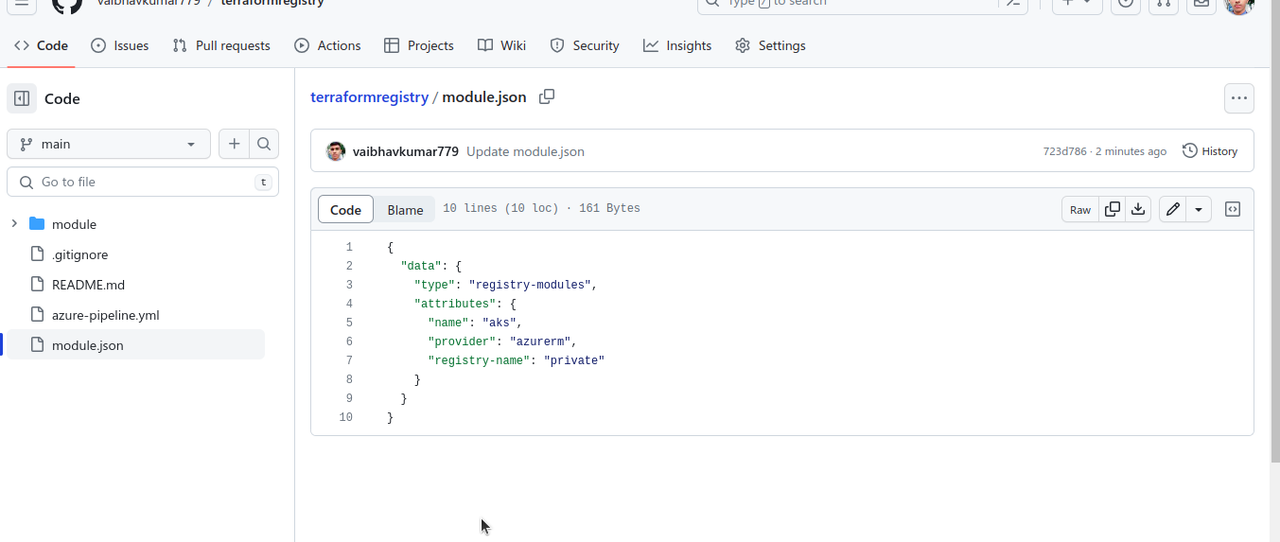

Now run the pipeline. The pipeline requires a module.json file; we use the following content.

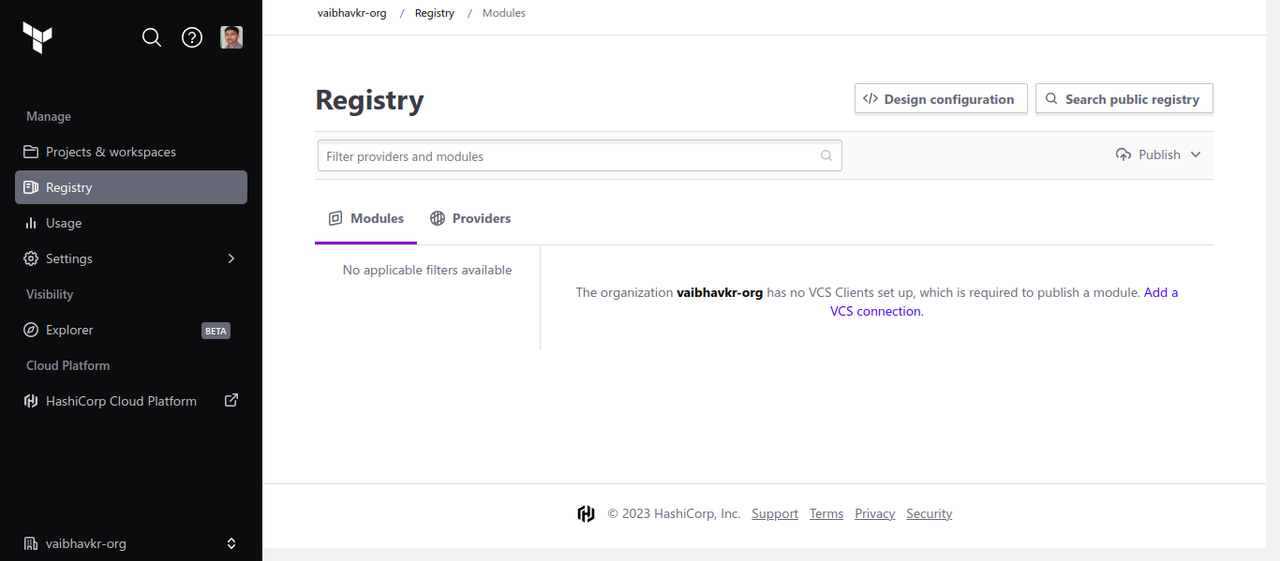
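For readers who cannot see the screenshot, a module.json for this endpoint might look like the sketch below. It follows the request body described in the Terraform Cloud private registry API documentation for creating a module without a VCS connection. The module name `my-module` and the provider `azurerm` are hypothetical values, and the actual file used in this pipeline may differ.

```shell
# Sketch: write an example module.json (name and provider are hypothetical).
cat > module.json <<'EOF'
{
  "data": {
    "type": "registry-modules",
    "attributes": {
      "name": "my-module",
      "provider": "azurerm",
      "registry-name": "private"
    }
  }
}
EOF
```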

At first there was no module in the registry. After the pipeline run, one module has been created.
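One way to confirm this outside the UI is to list the organization's registry modules through the API. This is a sketch, assuming a `TF_TOKEN` environment variable holds the team token and that `curl` and `jq` are available; the helper names are made up for illustration.

```shell
# Build the URL that lists registry modules for an organization.
modules_url() {
  printf 'https://app.terraform.io/api/v2/organizations/%s/registry-modules' "$1"
}

# Print the name of each module in the organization's registry.
list_modules() {
  curl --silent --fail \
    --header "Authorization: Bearer ${TF_TOKEN}" \
    "$(modules_url "$1")" |
    jq -r '.data[].attributes.name'
}

# Usage (requires network access and a valid token):
#   list_modules "my-org"
```

Empty output before the pipeline run and a single module name afterwards would match the before-and-after described above.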

We referred to the Terraform Cloud documentation: https://developer.hashicorp.com/terraform/cloud-docs/api-docs/private-registry/modules

The pipeline script looks like this:

    jobs:
      - job: "TerraformPackaging"
        steps:
          - checkout: self
            displayName: Clean Checkout
            clean: true
          - script: |
              curl --header "Authorization: Bearer $(TERRAFORM_REGISTRY_TOKEN)" --header "Content-Type: application/vnd.api+json" --request POST --data @${{ parameters.JSON_MODULE_LOCATION }} https://app.terraform.io/api/v2/organizations/$(TF_ORGANIZATION)/registry-modules | jq -r
            displayName: "Terraform Registry Module Creation"
            name: "ModuleCreation"

This is a POST request that sends the contents of the module.json file to Terraform Cloud, telling it to create the module and the version we want. The variables used in the URL come from the variable group defined in the pipeline, while the path to module.json is passed in through the pipeline parameter JSON_MODULE_LOCATION.
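To try the request outside Azure DevOps, the pipeline variables can be replaced by environment variables. The following is a sketch, assuming `TERRAFORM_REGISTRY_TOKEN` and `TF_ORGANIZATION` are exported in the shell and the JSON path is passed as an argument; `registry_modules_url` and `create_module` are hypothetical helper names.

```shell
# Build the registry-modules endpoint from the TF_ORGANIZATION variable.
registry_modules_url() {
  printf 'https://app.terraform.io/api/v2/organizations/%s/registry-modules' "${TF_ORGANIZATION}"
}

# POST the given JSON file to create the module; $1 is the path to module.json.
create_module() {
  curl --silent \
    --header "Authorization: Bearer ${TERRAFORM_REGISTRY_TOKEN}" \
    --header "Content-Type: application/vnd.api+json" \
    --request POST \
    --data "@$1" \
    "$(registry_modules_url)" | jq -r '.'
}

# Usage (requires network access and a valid token):
#   export TF_ORGANIZATION="my-org" TERRAFORM_REGISTRY_TOKEN="<token>"
#   create_module ./module.json
```

In the pipeline, Azure DevOps substitutes `$(NAME)` before the script runs and resolves `${{ parameters.NAME }}` when the pipeline is compiled; in this sketch the shell performs the equivalent substitution.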