You know what drains you? Spent hundreds and hundreds of hours writing UI test scripts, just to have them break the instant a DEV moves a button or changes a field name. I’ve run into that problem more times than I want to think about. It jolted me to stand back and really think. I feel there has to be a better way that also is more robust than UI testing. That’s the revelation that made me explore a concept that’s now becoming a realistic solution. Reinforcement learning, once a specialized area of AI research, is now beginning to revolutionize the way we conduct software testing. The title “Driving Intelligent Automation: How RL is Shaping the Future of UI Interaction Testing” isn’t a marketing gimmick. It is the real change that’s occurring in the way quality assurance is being met today.

Why Old-Swag Testing Drives Me Insane

Imagine you are a QA developer on a high-volume development team. UI changes every sprint. Buttons get moved, new features get added, and features get dropped. Now imagine having to keep up with a test suite that gets broken by every one of these changes. That is life for many of us.

I recall having worked on an e-commerce site whose product recommendation algorithm was being constantly updated based on user activity. Our static in nature test scripts could not cope. Tests were failing not because the feature was buggy, but because the page content was not what we expected. We spent too much time updating the test cases rather than debugging. It was like being in a loop.

Reinforcement Learning: The Game Changer

So, consider this. So if we aren’t the ones that constantly have to write everything that goes into the UI, what if something else can be a part of how we interact with the UI? That’s just the capability that reinforcement learning, or RL, gives us. “If you go on to learn them, you don’t feel like, ‘Oh, shucks, I guess I should have known this!’ You feel like you’re discovering things for the first time.”It’s like learning a new video game, he says: You play around, you press a button, you get beaten up a few times, you learn, and you get better. Ultimately, they discover what makes people tick. RL does the same learning.

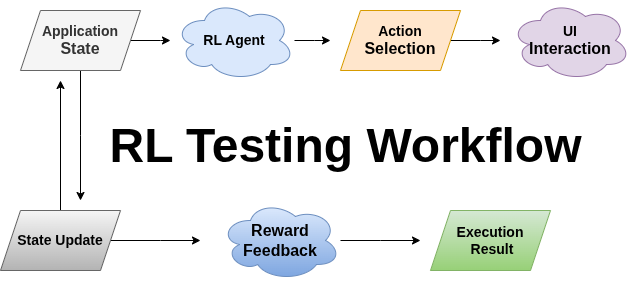

Translated to testing, the idea is having an “agent” watch what the app is doing, try things it can do, and learn from what happens as a result. If it succeeds, it remembers that. If it fails, it adjusts. Over time, it learns to use the app in better, more effective ways. It doesn’t have scripted commands because it learns what to do by exploring the program.

Constructing Something That Works

To give you a practical feel, I created a simple Java-based RL agent and ran it. The general idea is like this: maintain a map of states and optimal actions for each of them. The following is a simple example:

public class SimpleRLAgent {

private Map<String, Double> qTable = new HashMap<>();

private double learningRate = 0.1;

private double discount = 0.9;

private double epsilon = 0.8;

public String selectAction(String state, String[] actions) {

// Sometimes explore randomly, sometimes use what we've learned

if (ThreadLocalRandom.current().nextDouble() < epsilon) {

return actions[ThreadLocalRandom.current().nextInt(actions.length)];

}

// Pick the action with the highest expected value

String bestAction = actions[0];

double bestValue = getQValue(state, bestAction);

for (String action : actions) {

double value = getQValue(state, action);

if (value > bestValue) {

bestValue = value;

bestAction = action;

}

}

return bestAction;

}

}This employs what’s called the epsilon-greedy policy. The agent chooses the action that it considers to be the best in most cases. It occasionally switches to another action to prevent overlooking better ones.

The Learning Environment

The agent requires an environment where it can experiment safely and figure out from its experiments. That’s the environment. The agent gets a reward every time it makes a move on the basis of whether the result was fruitful or not. Let’s see how this reward could work in real life:

public TestResult executeAction(String action) {

stepCount++;

boolean success = false;

double reward = -0.1; // Small penalty for each step

switch (action) {

case "SEARCH":

success = webDriver.performSearch("selenium testing");

reward = success ? 5.0 : -2.0;

break;

case "CLICK_RESULT":

success = webDriver.clickFirstResult();

reward = success ? 3.0 : -1.0;

break;

// More actions...

}

String newState = webDriver.getCurrentState();

boolean done = stepCount >= maxSteps;

return new TestResult(newState, reward, done, success);

}This is where it learns. If the agent manages to get a task accomplished, it is rewarded. For instance, if it does a search excellently, it can be rewarded 5.0 points. But if it fails or gets sidetracked, it can be punished, for instance, it can be punished -2.0. These rewards and punishments shape the agent in the long run. They compel it to learn what actions are beneficial and what are not. The more it moves, the better it understands how to choose the correct steps.

What’s Unique About This Method

Following GPS directions is akin to old-school test scripts, and all is well until there is some road construction in some off-the-beaten-path location. RL-based testing is akin to having a local driver who knows many roads, familiar with a couple of routes and can make it up as he goes.

When I first rolled out this method, I was blown away by how the agent uncovered test scenarios I had not thought of. It uncovered eccentric button click paths that uncovered latent bugs and ventured into edge cases our manual testing had not caught.

A specific case that is still very clear to me was when the agent found out that double-clicking the search button quickly in succession triggered a race condition that disabled the search function. Our routine tests were not able to find this out because they employed ordered, sequential click patterns.

The Real-World Benefits

With this system in place for a few months, the advantages were apparent. First, test maintenance disappeared. When UI components were being changed by developers, the agent learned in training sessions rather than script changes manually.

Secondly, our bug detection rate went up dramatically. The agent’s tendency to experiment led to it testing action combinations we would never have tried to script ourselves. It was having an unlimited number of QA testers who never got tired and always tried out new things.

Third, the system also provided us with feedback on patterns of user usage. Through observing what the agent most preferred to do, we discovered what were the most optimal paths through our application by the user.

The Challenges Nobody Talks About

Come on, this approach is not actually problem-free. It has to be done in phases during the training process. You can’t just switch the switch and get a fully trained test agent overnight. It takes time for the system to acquire effective strategies.

Computational resources are an issue. It takes more computational resources to train a typical RL agent than to execute normal test scripts. Cloud computing has facilitated that more easily than a couple of years ago, though.

Then, of course, there is the issue of interpretability. If a standard test does not pass, it’s simple to determine which step did not pass and why. With RL, however, it’s not always obvious why the agent made some specific choice. We’ve circumvented this by having logging systems monitor the sequence of decisions by the agent.

Looking Ahead

The future of testing with RL is very promising. I’m hoping that someday we will have multi-agent systems where every agent is specialized in one area of testing, one for performance, one for security, and the third for user experience.

Natural language integration is another. Imagine, being able to write your test criteria in English and have the system run through all permutations. We are not there, of course, but the elements to get us there are being assembled right now. “Cross platform learning will be possible as well. If a learning agent could be trained on a single web application and be able to generalize the learning to other similar applications while saving significant training time for new projects.

Conclusion

Reinforcement learning is revolutionizing the game of UI testing. We no longer need to remain one step behind each update to the interface. We now build systems that learn while they run and adapt to updates without pre-defined rules. They learn by experience, as we do.

Yes, it requires more effort up front. But the payoff is immediate. You debug test scripts fewer times, discover more latent bugs, and learn more about how the users really behave on the app. It actually allows me to care more about the quality and less about conforming to the failure that each small change creates.

References

https://www.geeksforgeeks.org/machine-learning/types-of-reinforcement-learning