When we build a system that depends on large amounts of data, for example for decision-making, market analytics, or risk management, that data comes from many different sources: multiple systems, databases, files, and so on. In testing, we must verify how the system extracts data from these sources, transforms it to meet the target schema or format, and loads it into a central repository for further analysis and use. This is called ETL testing.

Today, I would like to share my experience in this area – ETL testing.

What is ETL Testing?

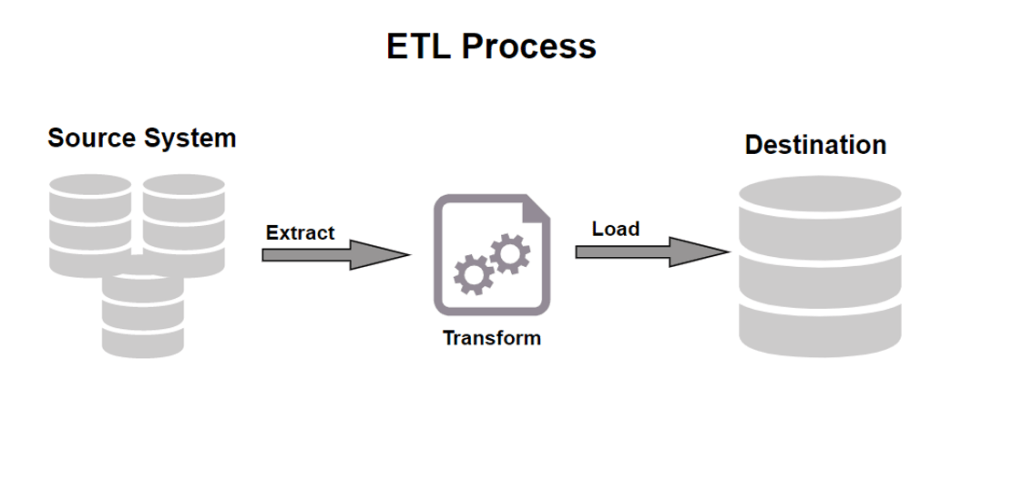

ETL testing refers to the process of testing the Extract, Transform, and Load (ETL) process in data warehousing. The ETL process is a critical component of data warehousing, and it involves extracting data from various sources, transforming it into a suitable format, and then loading it into a target database or data warehouse.

The primary goal of ETL testing is to ensure that the ETL process is working correctly and is producing accurate and consistent data. ETL testing is typically performed by testing the individual components of the ETL process, such as the extraction process, transformation logic, and the loading process. The testing can be done manually or through automated tools.

To effectively test the ETL project, it is important to understand some key concepts and terminologies that are specific to ETL testing. Here are some of the important concepts to know:

Dimension and Fact Table

Dimension Table: Dimension tables provide descriptive or contextual information for the measurement of a fact table. A dimension table contains a surrogate key, natural key, and a set of attributes.

Example: a dimension describes an object, such as a seller, a customer, or a date.

Fact Table: A fact table stores the numeric data that needs to be analyzed (the measures), together with keys that reference the rows of the dimension tables. It is the primary table in a dimensional model.
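
To make the relationship concrete, here is a minimal sketch using Python's built-in sqlite3 module. The table and column names (dim_customer, fact_sales, customer_key, and so on) are hypothetical and only illustrate the idea: the dimension carries a surrogate key, a natural key, and descriptive attributes, while the fact table carries numeric measures plus a reference to the dimension.

```python
import sqlite3

# Hypothetical star schema: one dimension table and one fact table.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_customer (
    customer_key INTEGER PRIMARY KEY,   -- surrogate key generated by the ETL
    customer_id  TEXT NOT NULL UNIQUE,  -- natural key from the source system
    name         TEXT,                  -- descriptive attributes
    city         TEXT
);
CREATE TABLE fact_sales (
    sale_id      INTEGER PRIMARY KEY,
    customer_key INTEGER NOT NULL REFERENCES dim_customer(customer_key),
    amount       REAL NOT NULL          -- numeric measure to be analyzed
);
INSERT INTO dim_customer VALUES (1, 'C-001', 'Alice', 'Hanoi'), (2, 'C-002', 'Bob', 'Hue');
-- SQLite does not enforce foreign keys unless PRAGMA foreign_keys = ON,
-- so the orphan row below (customer_key 3) loads without error.
INSERT INTO fact_sales VALUES (10, 1, 120.0), (11, 1, 30.0), (12, 2, 50.0), (13, 3, 75.0);
""")

# Fact rows whose dimension key has no matching dimension row.
orphans = conn.execute("""
    SELECT f.sale_id
    FROM fact_sales f
    LEFT JOIN dim_customer d ON d.customer_key = f.customer_key
    WHERE d.customer_key IS NULL
""").fetchall()

# A typical analytical query: a measure aggregated by a dimension attribute.
totals = conn.execute("""
    SELECT d.name, SUM(f.amount)
    FROM fact_sales f
    JOIN dim_customer d ON d.customer_key = f.customer_key
    GROUP BY d.name
    ORDER BY d.name
""").fetchall()

print("orphan fact rows:", orphans)
print("sales by customer:", totals)
```

The orphan check is a common first test on a fact table: any fact row that points to a missing dimension row silently drops out of joined reports.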

Cubes and BI Reports

Cubes: Data processing units composed of fact tables and dimensions from the data warehouse. They provide clients with multidimensional views of data, along with querying and analytical capabilities.

BI Reports: Reports produced by gathering data with various software and tools to extract relevant insights. They provide suggestions and observations about business trends, empowering decision-makers to act.

Staging, Data warehouse and Data mart

Staging: An intermediate storage area used for data processing during the extract, transform, and load (ETL) process.

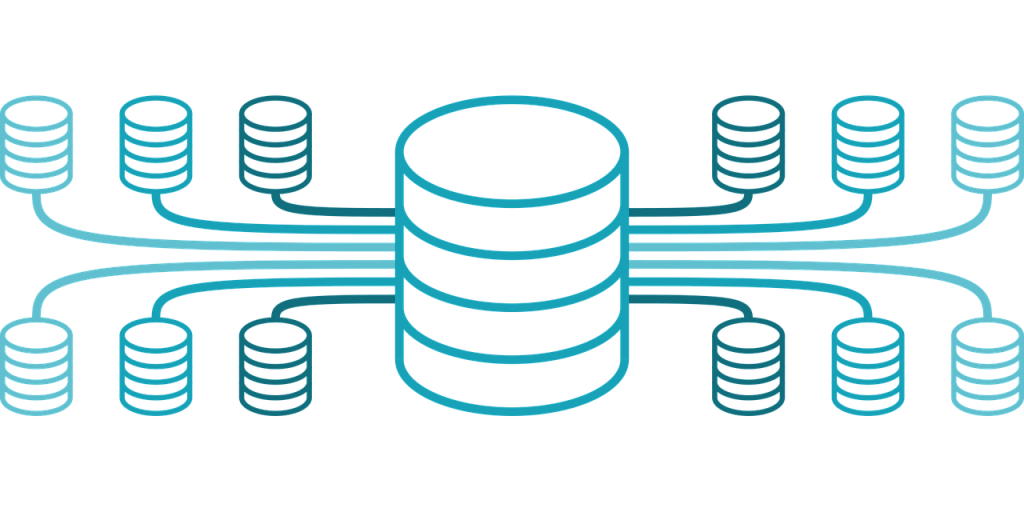

Data warehouse: A system used for reporting and data analysis, and a core component of BI. It is a central repository of integrated data from one or more disparate sources.

Data mart: A subset of the data warehouse that is oriented to a specific business line or team.

ETL Testing Approach

We can take one of the following approaches:

TOP-DOWN: Follow the data from the Report directly to the Data warehouse.

BOTTOM-UP:

Approach 1: Follow the data from the Data Sources directly to the DW.

Approach 2: Follow the data from the Data Sources through each step in the ETL process and into the DW.

The ideal approach for ETL testing is the Bottom-up approach, specifically Approach 2. This approach allows testers to follow the step-by-step flow of the ETL process, making it easier for the team to identify and address any issues or bugs that arise. While this approach may require more testing time, it offers numerous benefits such as improved problem pinpointing, better collaboration with the development team, and enhanced overall testing effectiveness.
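
As a rough illustration of Approach 2, the sketch below compares the business keys at each hop (source, staging, data warehouse) and reports the first step where records go missing. The table names and the order_id key are assumptions made up for this example, and SQLite stands in for whatever databases the real pipeline uses.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE src_orders (order_id INTEGER, amount REAL);
CREATE TABLE stg_orders (order_id INTEGER, amount REAL);
CREATE TABLE dw_orders  (order_id INTEGER, amount REAL);
INSERT INTO src_orders VALUES (1, 10.0), (2, 20.0), (3, 30.0);
INSERT INTO stg_orders VALUES (1, 10.0), (2, 20.0), (3, 30.0);
INSERT INTO dw_orders  VALUES (1, 10.0), (3, 30.0);  -- order 2 is lost in the load step
""")

# Hypothetical pipeline stages, in the order the data flows through them.
STAGES = ["src_orders", "stg_orders", "dw_orders"]


def missing_between(conn, upstream, downstream):
    """Return keys present in the upstream table but absent downstream."""
    # Table names come from the fixed STAGES list above, never from user input.
    sql = (
        f"SELECT order_id FROM {upstream} "
        f"EXCEPT SELECT order_id FROM {downstream} "
        "ORDER BY order_id"
    )
    return [row[0] for row in conn.execute(sql)]


def first_lossy_step(conn, stages):
    """Walk the pipeline hop by hop and report the first hop that loses keys."""
    for upstream, downstream in zip(stages, stages[1:]):
        lost = missing_between(conn, upstream, downstream)
        if lost:
            return upstream, downstream, lost
    return None


print(first_lossy_step(conn, STAGES))
```

A direct source-to-DW comparison (Approach 1) would also reveal that order 2 is missing, but not which step dropped it. Checking each hop is what makes the problem easier to pinpoint.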

ETL Testing Process

In ETL testing, there are five stages involved in the testing process:

However, we should pay special attention to estimating the testing effort, as it includes designing scripts (such as SQL or Oracle scripts) to check and compare data mappings and transformation business rules.

ETL Testing Types

There are several specific testing types within ETL testing:

- Data Completeness Testing: Verify that all expected data loads into the data warehouse.

- Data Transformation Testing: Validate that data is transformed correctly according to the business rules.

- Data Quality Testing: Verify the accuracy of the data.

- Metadata Testing: Verify the table definitions conform to the data model and application. Carries out data type, index, length, and constraint checks of the ETL application metadata.

- Incremental Testing: Verify updates on the sources are getting loaded into the target system properly.

- Performance Testing: Verify that the ETL batch loading system can extract and load the records in a timely manner, or within the required time.

- Integration Testing: The goal of ETL integration testing is to perform end-to-end testing of the data in the ETL process and the consuming application.

- Regression Testing: Verify that the ETL produces the same output for a given input before and after a change. Any differences must be checked to confirm that they are expected results of the change.
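
Two of these types, data completeness and data transformation testing, can be sketched with plain SQL. The example below is illustrative only: the tables and the business rule (full name is first name plus last name, in upper case) are invented, and SQLite is used just to keep the example self-contained.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE src_customer (id INTEGER, first_name TEXT, last_name TEXT);
CREATE TABLE dw_customer  (id INTEGER, full_name TEXT);
INSERT INTO src_customer VALUES (1, 'Ann', 'Lee'), (2, 'Bao', 'Tran'), (3, 'Chi', 'Vo');
-- Target after a faulty load: id 3 is missing and id 2 was not upper-cased.
INSERT INTO dw_customer  VALUES (1, 'ANN LEE'), (2, 'Bao Tran');
""")


def completeness_gaps(conn):
    """Completeness test: source ids that never arrived in the target."""
    rows = conn.execute(
        "SELECT id FROM src_customer EXCEPT SELECT id FROM dw_customer ORDER BY id"
    )
    return [row[0] for row in rows]


def transformation_mismatches(conn):
    """Transformation test: recompute the (assumed) rule from the source
    and return rows where the loaded value differs from the expected one."""
    return conn.execute("""
        SELECT s.id,
               UPPER(s.first_name || ' ' || s.last_name) AS expected,
               t.full_name AS actual
        FROM src_customer s
        JOIN dw_customer t ON t.id = s.id
        WHERE t.full_name IS NOT UPPER(s.first_name || ' ' || s.last_name)
        ORDER BY s.id
    """).fetchall()


print("missing ids:", completeness_gaps(conn))
print("rule violations:", transformation_mismatches(conn))
```

The transformation check uses IS NOT rather than <> so that a NULL in the target is also reported as a mismatch instead of being silently skipped.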

ETL Testing Methods

ETL testing has its own testing methods. Here are some suggestions based on my experience.

- Sampling technique: Sampling techniques in ETL testing involve selecting a subset of data from a larger dataset for testing. This approach allows us to focus on a representative sample rather than testing the entire dataset. It helps optimize coverage, save time, and still provide meaningful insights into the ETL process. By selecting a statistically significant sample, we can identify potential issues efficiently and validate the functionality of the ETL pipeline.

- Use Excel/Compare tools: Using Excel/Compare tools in ETL testing can be beneficial for various tasks, such as data comparison, validation, and analysis. These tools offer features that help streamline the testing process and improve efficiency.

- Utilize an Automation Utility: The team can use test automation to run all test scripts in a single pass. This saves time and testing effort.

- Use ETL Automation Test tools: ETL testing tools are software applications designed to automate the ETL testing process and help ensure data accuracy, completeness, and consistency. However, the tool license should be considered carefully. Here are some supported tools:

- Informatica Data Validation Option: Informatica DVO is a comprehensive data validation and testing tool that ensures data accuracy and completeness across multiple systems.

- QuerySurge: It is a data testing and validation tool designed specifically for Big Data and data warehouse environments.

- ETL Validator: It is an ETL testing automation tool developed by Datagaps that helps automate ETL/ELT validation during data migration and data warehouse projects. It is promoted as one of the top ETL testing tools in the industry for 100% data validation, with multiple features and benefits compared with its competitors.

ETL Testing Challenges

ETL testing is a complex process that involves several challenges. Based on my experience, here are some of the significant challenges that testers face.

The first challenge in ETL testing is dealing with bad data sources. Bad data often comes from manual data entry, which introduces the risk of errors, whether intentional or unintentional. Moreover, inconsistencies can arise when users enter units of measurement in written or abbreviated forms. Manual data entry can also lead to duplicate records, especially when multiple people are involved in the same project.

To overcome the challenges posed by bad data sources during ETL testing, several strategies can be employed:

- Implement Tools (Macro) for Historical Data: In cases where historical data needs to be transferred to standardized formats, the development team can create macro tools. These tools automate the transformation process, ensuring data consistency and accuracy. By standardizing the historical data, it becomes easier to validate and integrate it into the ETL process.

- Create New Templates/Tools for Upcoming Data: For new data entries, it is beneficial to develop new templates or tools to assist business users. These tools can provide data validation rules, drop-down menus, or other features that enhance data entry accuracy. Additionally, improving the application used for data processing can ensure that clean and up-to-date data is available for all future monthly data uploads, reducing the chances of human errors during manual data processing.

By implementing these strategies, organizations can mitigate the challenges caused by bad data sources, improve data quality, and streamline the ETL testing process.

The next challenge relates to the huge volume and complexity of the data. With vast amounts of data spread across various sources, including multiple file formats, compatible databases, and proprietary data formats, the data must be harmonized and consolidated into a single source. This consolidated source then feeds into BI reports, which drive business decision-making. Cleansing and harmonizing the data is necessary to ensure its accuracy, consistency, and reliability for effective analysis. How can we overcome this situation?

To address the challenge of dealing with huge data during ETL testing, the following approaches can be beneficial:

- Utilize Sampling Techniques: Working with large volumes of data can be time-consuming and resource intensive. To optimize coverage, testers can employ sampling techniques. By selecting representative subsets of data, they can focus their testing efforts on a smaller yet representative portion of the overall dataset. This helps in identifying potential issues and validating the ETL process without the need to process the entire dataset.

- Automate Data Comparison: Comparing large datasets manually can be error-prone and time-consuming. To overcome this challenge, testers can leverage automated data comparison tools such as Beyond Compare or Datatific tool. These tools provide efficient and accurate comparisons between source and target data, highlighting any discrepancies or inconsistencies. Automating the comparison process helps save time and ensures thorough validation of the transformed data.

By employing sampling techniques and leveraging automated data comparison tools, we can effectively tackle the challenges associated with handling large volumes of data in ETL testing. These approaches contribute to improved efficiency, enhanced coverage, and more accurate validation of the ETL process.
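
The two techniques can be combined in a few lines of Python. The sketch below is an assumption-laden illustration rather than a replacement for a dedicated comparison tool: the data is synthetic, rows are held in dictionaries keyed by a business key, and the 10% sample fraction and fixed seed are arbitrary choices.

```python
import random


def sample_keys(keys, fraction, seed=42):
    """Pick a reproducible random subset of keys to test."""
    ordered = sorted(keys)
    size = max(1, round(len(ordered) * fraction))
    # A fixed seed makes the sample repeatable when a failure must be re-checked.
    return random.Random(seed).sample(ordered, size)


def compare_rows(source, target, keys):
    """Compare source and target rows for the given keys and describe differences."""
    diffs = {}
    for key in keys:
        if key not in target:
            diffs[key] = "missing in target"
        elif source[key] != target[key]:
            diffs[key] = f"expected {source[key]!r}, got {target[key]!r}"
    return diffs


# Synthetic data: 1000 source rows, with two defects injected into the target.
source = {i: {"amount": i * 10} for i in range(1, 1001)}
target = {i: dict(row) for i, row in source.items()}
target[500]["amount"] = -1   # wrong value
del target[750]              # lost record

sampled = sample_keys(source.keys(), fraction=0.1)
sample_diffs = compare_rows(source, target, sampled)
full_diffs = compare_rows(source, target, source.keys())

print(f"checked {len(sampled)} of {len(source)} rows, found {len(sample_diffs)} issue(s)")
print(f"full comparison found {len(full_diffs)} issue(s)")
```

With only two defects in a thousand rows, a 10% random sample can easily miss both. Sampling trades coverage for time, so it is best suited to systematic errors that affect many rows, while isolated defects still need a full automated comparison.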

To overcome the challenges posed by complicated data during ETL testing, the following strategies can be implemented:

- Rank Data Sources: When dealing with complex data, it can be helpful to rank data sources based on their importance. Identify the critical data sources that have a significant impact on the business processes or decision-making. Prioritize testing efforts on these high-priority data sources to ensure their accuracy and reliability.

- Collaborate with BAs (Business Analysts): Work closely with the BAs during ETL testing to understand the business requirements and identify any gaps or inconsistencies. By addressing these gaps early on, we can align the ETL process with the business expectations, reducing the risk of errors and ensuring accurate data transformation.

- Involve Client Testing Early: Encourage clients to involve the testing team as early as possible in the ETL process. This allows us to provide feedback and identify potential issues during the design and development stages. Early involvement ensures that complications related to complex data are identified and addressed promptly, reducing rework and saving time and effort.

- Obtain Test Cases/Scenarios for UAT: Ask the client to provide test cases or scenarios for the User Acceptance Testing (UAT) stage. These test cases align the ETL process with the client’s specific requirements and expectations. Having predefined test cases facilitates smooth UAT execution, streamlines the validation process, and reduces the overall time and effort required for testing.

By implementing these strategies, we can effectively tackle the challenges associated with complex data in ETL testing. Close collaboration with the business users, early involvement in the process, and leveraging client test cases contribute to ensuring accurate data transformation and meeting the business requirements.

Last but not least is the challenge of limited time and resources. Meeting tight project deadlines driven by client business needs can be a significant challenge for ETL testing when the necessary testing resources are not available. To overcome this challenge, I suggest the following strategies:

- Prioritize Critical Functions/Areas/Test Cases: Identify the critical functions, areas, and test cases that are crucial for the ETL process. Allocate resources and time specifically to test these priority areas to ensure they are thoroughly validated within the project deadlines.

- Use Sampling Techniques: Sampling techniques can help optimize ETL testing coverage and reduce testing time. By selecting a representative sample of critical data elements and scenarios, testers can identify potential issues in the ETL process while minimizing the time and effort required for testing.

- Involve All Team Members: Consider involving all relevant team members, including developers and business analysts, in ETL testing. Their expertise and contributions can help expedite the testing process and ensure efficient and effective testing outcomes.

- Utilize SSIS Packages for Regression Testing: To streamline regression testing in ETL, consider using SSIS (SQL Server Integration Services) packages to execute all test scripts at least once. This approach saves time and effort while ensuring that critical areas of the ETL process are thoroughly tested.

- Back up ETL Testing Outputs: It is essential to maintain backups of ETL testing outputs, including test cases, SQL scripts, test data, and results. These backups serve multiple purposes, such as regression testing, bug verification, and training new team members. They ensure that valuable testing artifacts are preserved and readily available when needed.

- Utilize MDX queries: When time is limited for writing SQL scripts in ETL testing, an alternative is to use MDX queries. MDX (Multidimensional Expressions) is a query language designed for multidimensional databases, such as OLAP (Online Analytical Processing) systems. Tools such as the Analysis Services MDX Query Designer provide a drag-and-drop interface on top of it, allowing us to quickly select dimensions, hierarchies, and measures from the multidimensional database. MDX also supports advanced analytical operations, such as slicing, dicing, drill-down, and aggregation across multiple dimensions. These capabilities enable testers to perform in-depth data analysis and validate complex transformations in the ETL process.

- Use CUBE Functions to Break Out of Pivot Tables: This is another option when time is limited for writing SQL scripts in ETL testing. In Microsoft Excel, CUBE functions can be used to break out of pivot tables and retrieve specific data from a multidimensional data source, such as an OLAP (Online Analytical Processing) cube. The CUBE functions provide a way to perform calculations and extract data beyond the scope of a standard pivot table. To use these functions, we need an active connection to an OLAP cube. We can establish the connection by going to the “Data” tab in Excel, selecting “Get Data” or “From Other Sources,” and choosing the appropriate OLAP cube connection option. Once the connection is established, we can insert the desired CUBE function into a cell outside the pivot table and reference the appropriate cube fields and expressions to retrieve the desired data. Remember to adjust the function arguments according to your specific OLAP cube structure and data requirements. Overall, CUBE functions provide a powerful way to break out of pivot tables and access multidimensional data for advanced analysis and reporting in Excel.

- Use Checksum/AVG/Minus queries: Checksum, AVG (average), Minus, and similar queries can be useful for detecting missing or wrong data during the ETL process. They provide a quick way to verify values and help us spot discrepancies between source and target. However, these queries are not foolproof and may not catch all issues. They should be used in combination with other data validation techniques and thorough testing to ensure data integrity.
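
The quick checks from the last bullet can be sketched as follows. Several things here are assumptions for the sake of a runnable example: the table names are invented, SQLite is used in place of the real source and target databases, EXCEPT is used because it is the standard SQL equivalent of Oracle's MINUS, and the checksum is computed in Python with hashlib as a stand-in for a database-side checksum function.

```python
import hashlib
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE src_sales (id INTEGER, amount REAL);
CREATE TABLE tgt_sales (id INTEGER, amount REAL);
INSERT INTO src_sales VALUES (1, 100.0), (2, 200.0), (3, 300.0);
INSERT INTO tgt_sales VALUES (1, 100.0), (2, 250.0), (3, 300.0);  -- id 2 is wrong
""")


def profile(conn, table):
    """Cheap aggregate profile: row count, sum, and average of the measure."""
    # Table names are fixed in this sketch, never taken from user input.
    sql = f"SELECT COUNT(*), SUM(amount), AVG(amount) FROM {table}"
    return conn.execute(sql).fetchone()


def table_checksum(conn, table):
    """Hash all rows in a stable order; equal tables give equal digests."""
    digest = hashlib.sha256()
    for row in conn.execute(f"SELECT id, amount FROM {table} ORDER BY id"):
        digest.update(repr(row).encode("utf-8"))
    return digest.hexdigest()


def minus(conn, left, right):
    """Rows in `left` that do not appear in `right` (MINUS / EXCEPT)."""
    sql = (
        f"SELECT id, amount FROM {left} "
        f"EXCEPT SELECT id, amount FROM {right} ORDER BY id"
    )
    return conn.execute(sql).fetchall()


print("source profile:", profile(conn, "src_sales"))
print("target profile:", profile(conn, "tgt_sales"))
print("checksums match:", table_checksum(conn, "src_sales") == table_checksum(conn, "tgt_sales"))
print("in source, not in target:", minus(conn, "src_sales", "tgt_sales"))
print("in target, not in source:", minus(conn, "tgt_sales", "src_sales"))
```

The row counts agree here even though one value is wrong, which is why a count check alone is not enough. The aggregates and the checksum show that something differs, and the minus query in both directions shows exactly which rows.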

Common Bugs in ETL Testing

- Incorrect data mapping

- Incorrect calculations or aggregated values

- Data loss during the ETL process

- Default data loading for NULL or blank values

Unit Testing Checklist

Unit testing is an essential part of the overall testing process. A well-designed unit test can significantly reduce the testing effort at the end of the project and enhance the overall project quality. In my experience, a checklist helps the team test their code systematically during development:

- Check the mapping of fields between the staging area, the data warehouse, and the data marts.

- Check for duplication of values generated using sequence generators.

- Check the correctness of surrogate keys that uniquely identify rows of data.

- Check for data-type constraints of the fields present in staging and core levels.

- Check the data loading status and error messages after ETLs (extracts, transformations, loads).

- Look for string columns that are incorrectly left or right trimmed.

- Make sure all tables and specified fields were loaded from source to staging.

- Verify that not-null fields were populated.

- Verify that no data truncation occurred in each field.

- Make sure data types and formats are as specified during database design.

- Make sure there are no duplicate records in target tables.

- Make sure data transformations are correct according to the business rules.

- Verify that numeric fields are populated precisely.

- Make sure every ETL session completed with only planned exceptions.

- Verify all data cleansing, transformation, and error and exception handling.

- Verify stored procedure calculations and data mappings.
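
Several checklist items translate directly into small SQL assertions that developers can run after each load. The sketch below shows one possible shape, with a hypothetical dw_product table and a hand-picked set of checks (duplicates, not-null fields, untrimmed strings, surrogate key uniqueness). Each query returns the offending values, so an empty result means the check passed.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dw_product (product_key INTEGER, sku TEXT, name TEXT);
INSERT INTO dw_product VALUES
    (1, 'A-1', 'Widget'),
    (2, 'A-2', ' Gadget '),   -- name not trimmed
    (3, 'A-2', 'Gadget'),     -- duplicate natural key
    (4, NULL,  'Gizmo');      -- not-null field left empty
""")

# Hypothetical checks: each query selects the rows that violate one checklist item.
CHECKS = {
    "duplicate sku": (
        "SELECT sku FROM dw_product WHERE sku IS NOT NULL "
        "GROUP BY sku HAVING COUNT(*) > 1"
    ),
    "null sku": "SELECT product_key FROM dw_product WHERE sku IS NULL",
    "untrimmed name": "SELECT product_key FROM dw_product WHERE name <> TRIM(name)",
    "duplicate surrogate key": (
        "SELECT product_key FROM dw_product "
        "GROUP BY product_key HAVING COUNT(*) > 1"
    ),
}


def run_checks(conn, checks):
    """Run every check and collect the offending values per check name."""
    return {
        name: [row[0] for row in conn.execute(sql)]
        for name, sql in checks.items()
    }


results = run_checks(conn, CHECKS)
failures = {name: rows for name, rows in results.items() if rows}

for name, rows in failures.items():
    print(f"FAILED {name}: {rows}")
```

Keeping the checks in a dictionary makes it easy to add one entry per checklist item and to rerun the whole set as part of regression testing.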

ETL Effort Estimation

Is effort estimation for ETL testing similar to or different from other testing types? As mentioned earlier, ETL testing presents specific challenges and requires its own testing techniques. Because of the complexity of the data extraction, transformation, and loading processes, ETL testing often requires more time and resources than other testing types, so its effort estimate should account for that additional work.

Difference Between ETL Testing & Data Migration Testing

ETL testing and Data Migration testing are both types of data-related testing, but they have some key differences:

- Purpose: ETL testing is performed to ensure that data is properly extracted, transformed, and loaded between systems, while Data Migration testing is performed to ensure that data is accurately moved from one system or environment to another.

- Scope: ETL testing focuses on the ETL process and may involve validating the accuracy and completeness of data at each stage of the process, while Data Migration testing may involve testing the entire system, including interfaces, data flows, and user interfaces.

- Data sources: ETL testing typically involves working with data that is already within the system, while Data Migration testing involves moving data from one system to another.

- Complexity: ETL testing may involve complex transformations of data, while Data Migration testing may be less complex, but may involve testing data across multiple systems.

In summary, while both ETL testing and Data Migration testing involve testing data, their scope, purpose, and complexity can differ significantly.
