This guide explores AI-driven test data generation: the tools and methods that are reshaping how testers produce the data software testing depends on. As demand for robust software grows, AI plays an increasingly important role in this domain, particularly in creating realistic datasets. To understand AI's impact on software testing, it helps to start with the fundamentals. AI uses machine learning algorithms to efficiently generate high-quality test data that closely resembles real-world scenarios, which in turn makes software testing more effective.

Understanding AI-Driven Test Data Generation:

AI-driven test data generation uses machine learning algorithms to create realistic, diverse datasets that mimic real-world scenarios. Traditional methods often produce generic or limited test data. AI-driven approaches, by contrast, can generate data that covers a broader spectrum of inputs, edge cases, and anomalies, which makes testing more robust.
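The sketch below makes "inputs, edge cases, and anomalies" concrete. It is a small rule-based example with no machine learning involved, written for a hypothetical age field that accepts integers from 0 to 120. The names `ageTestInputs` and `isValidAge` and the bounds are assumptions for illustration only. An AI-driven generator would aim to discover cases like these from data rather than from hand-written rules.

```javascript
// Hypothetical field rule: valid ages are integers in [min, max].
function ageTestInputs(min = 0, max = 120) {
  return [
    // Typical value well inside the valid range
    { value: Math.floor((min + max) / 2), valid: true },
    // Boundary values: exactly at the limits
    { value: min, valid: true },
    { value: max, valid: true },
    // Edge cases: just outside the limits
    { value: min - 1, valid: false },
    { value: max + 1, valid: false },
    // Anomalies: wrong types and malformed input
    { value: 42.5, valid: false },
    { value: "42", valid: false },
    { value: null, valid: false },
    { value: Number.NaN, valid: false },
  ];
}

// Hypothetical validator under test, implementing the rule above.
function isValidAge(value, min = 0, max = 120) {
  return Number.isInteger(value) && value >= min && value <= max;
}

// Compare the validator's verdict with the expected verdict for each input.
for (const { value, valid } of ageTestInputs()) {
  const actual = isValidAge(value);
  console.log(String(value).padEnd(6), actual === valid ? "ok" : "MISMATCH");
}
```

Each generated input carries its expected verdict, so the same list can drive assertions in any test runner.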

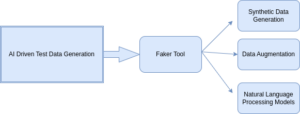

Exploring AI-Driven Tools and Techniques:

The reliability and usefulness of software programs, machine learning models, and other data-driven systems depend heavily on the quality and variety of their test data. However, obtaining real-world data for testing can be difficult because of data availability, diversity, and privacy concerns. This is where AI-powered test data generation methods become useful. We'll look at three main methods for creating test data using Playwright and JavaScript: synthetic data generation, data augmentation, and natural language processing (NLP) models.

1. Synthetic Data Generation:

One of the primary techniques in AI-driven test data generation is synthetic data generation. It is especially useful when real data is hard to access or when privacy concerns prohibit the use of actual data. Tools like Faker, Pydbgen, and TensorFlow can generate synthetic data for a wide range of applications and domains. The steps below show how to generate synthetic user data for a form using Faker.js and Playwright.

Before filling a form with synthetic user data using Playwright and Faker.js, you need to set up your testing framework and install Faker.js correctly. This keeps the tests running smoothly and the simulated data accurate. The steps are as follows:

- Setting up your testing framework: Make sure you have a test runner to write and execute your tests. Playwright includes its own runner, @playwright/test, which the examples in this guide use. Mocha, Jasmine, and Jest are other popular JavaScript testing frameworks. Choose the option that best matches your needs.

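- Installing dependencies: In a terminal, run npm install --save-dev @playwright/test @faker-js/faker. Then run npx playwright install to download the browsers. Faker.js is a library for generating fake data such as names, addresses, and emails. The legacy faker package is no longer maintained, and @faker-js/faker is its community-maintained successor.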

- Configuration: Import Faker.js in your test files, for example with const { faker } = require('@faker-js/faker');, and use it to generate synthetic user data.

Here’s an example of a test script using Playwright and Faker.js to fill out a form with synthetic user data:

In this code:

- We import the test and expect functions from the @playwright/test library. The test function defines a test case, and expect makes assertions.

- We define a test titled “Fill Form with Fake Data”. The test body is an arrow function that takes a { page } parameter. This is the Playwright Page object, which lets us interact with the web page.

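- We import the Faker library with require(). With the maintained package, this is const { faker } = require('@faker-js/faker'); the older require('faker') form loads the unmaintained legacy package.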

- We generate fake data for name, address, and email using the Faker library’s methods.

- We print the generated data to the console.
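The following sketch illustrates the same idea of generating name, address, and email values without Faker.js, so it runs with plain Node.js. Several parts are assumptions for illustration: the word lists, the `mulberry32` seeded random generator, and the hypothetical `makeFakeUser` function. Seeding matters because it lets you reproduce a failing test with the same data. Faker.js offers the same capability through `faker.seed()`.

```javascript
// Small seeded pseudo-random generator (mulberry32) so runs are reproducible.
function mulberry32(seed) {
  let state = seed >>> 0;
  return function next() {
    state = (state + 0x6d2b79f5) >>> 0;
    let t = state;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    // Result is a float in [0, 1)
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Hypothetical word lists standing in for Faker's built-in locale data.
const FIRST_NAMES = ["Ada", "Grace", "Alan", "Linus", "Margaret"];
const LAST_NAMES = ["Lovelace", "Hopper", "Turing", "Torvalds", "Hamilton"];
const STREETS = ["Oak Street", "Maple Avenue", "Hill Road"];

// Pick one element uniformly at random.
function pick(rand, items) {
  return items[Math.floor(rand() * items.length)];
}

// Build one fake user with a name, address, and email.
function makeFakeUser(rand) {
  const first = pick(rand, FIRST_NAMES);
  const last = pick(rand, LAST_NAMES);
  const houseNumber = 1 + Math.floor(rand() * 999); // 1..999
  return {
    name: `${first} ${last}`,
    address: `${houseNumber} ${pick(rand, STREETS)}`,
    // example.com is reserved for documentation, so no real inbox is targeted
    email: `${first}.${last}@example.com`.toLowerCase(),
  };
}

const rand = mulberry32(42);
const users = Array.from({ length: 3 }, () => makeFakeUser(rand));
console.log(users);
```

In a Playwright test, you would pass each value to `page.locator(...).fill()` for the matching form field. Reusing the same seed regenerates exactly the same users.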

2. Data Augmentation:

Data augmentation involves creating new variations of existing data to improve the diversity and quality of datasets. AI-driven test data generation leverages artificial intelligence techniques to create synthetic data that closely resembles real-world data. This synthetic data can be used to augment existing datasets, allowing machine learning models to better generalize patterns and improve performance.
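As a minimal, rule-based illustration of augmentation (not a learned model), the sketch below derives variants of one existing record by applying small, realistic transformations: a change of letter case, stray whitespace, and an empty optional field. The record shape and the `augmentRecord` function are hypothetical.

```javascript
// Each transformation returns a modified copy of the record, never mutating the original.
const transformations = [
  // Users sometimes type emails in mixed or upper case
  (r) => ({ ...r, email: r.email.toUpperCase() }),
  // Copy-pasted values often carry leading or trailing whitespace
  (r) => ({ ...r, name: `  ${r.name} ` }),
  // Optional fields may be left empty
  (r) => ({ ...r, phone: "" }),
];

// Apply each transformation separately to get one variant per rule.
function augmentRecord(record) {
  return transformations.map((transform) => transform(record));
}

const original = { name: "Ada Lovelace", email: "ada@example.com", phone: "555-0100" };
const variants = augmentRecord(original);
console.log(variants);
```

Because each rule produces a copy, the original record stays intact, and the variants can be added alongside it to grow the dataset.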

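In the following example, we use data augmentation to generate synthetic product prices. The script assumes the @faker-js/faker package. The form URL and the field selectors are placeholders to replace with your own.

```javascript
const { test } = require('@playwright/test');
const { faker } = require('@faker-js/faker');

// Original dataset of product prices
const originalPrices = [19.99, 49.5, 120];

// Function to apply data augmentation techniques
function augmentPrices(prices) {
  // Create an empty array to store augmented prices
  const augmentedPrices = [];
  // Iterate through each price in the original dataset
  for (const price of prices) {
    // Generate a synthetic price based on the original one
    const syntheticPrice = generateSyntheticPrice(price);
    // Add the synthetic price to the array
    augmentedPrices.push(syntheticPrice);
  }
  return augmentedPrices;
}

// Function to generate a synthetic price: a random value within ±20% of the original.
// faker.commerce.price() returns the price as a string (two decimals by default).
function generateSyntheticPrice(price) {
  return faker.commerce.price({ min: price * 0.8, max: price * 1.2 });
}

test('Fill form with augmented prices', async ({ page }) => {
  // Navigate to the URL of the form (placeholder URL)
  await page.goto('https://example.com/price-form');
  // Apply data augmentation to the original prices
  const augmentedPrices = augmentPrices(originalPrices);
  console.log(augmentedPrices);
  // Fill the form fields with augmented prices (placeholder selectors #price-0, #price-1, ...)
  for (const [index, price] of augmentedPrices.entries()) {
    await page.locator(`#price-${index}`).fill(price);
  }
});
```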

In this code:

- We start with an original dataset of product prices stored in the array originalPrices.

- The augmentPrices() function applies the data augmentation. Within this function:

I) We iterate through each price in the original dataset.

II) For each price, we call the generateSyntheticPrice() function to produce a synthetic variant.

III) We store the synthetic prices in the augmentedPrices array and return it.

- The generateSyntheticPrice() function uses the faker library to generate a synthetic product price. Faker itself is rule-based: it produces randomized values from built-in data rather than from a trained model, and a machine learning generator could be plugged in at this point instead.

- Finally, we call the augmentPrices() function with the original prices array, store the result in a variable named augmentedPrices, and print these augmented prices to the console.

This example shows how a data generator such as the faker library can support data augmentation, producing synthetic data that stays close to real-world values. The same structure carries over to other domains: replace generateSyntheticPrice() with a generator suited to your data.

3. Natural Language Processing (NLP) Models:

Natural language processing (NLP) is a subfield of artificial intelligence (AI) concerned with how people and computers communicate using natural language. It involves creating methods and algorithms that let computers comprehend, analyse, and produce human-language data in meaningful and practical ways.

Many applications rely heavily on NLP techniques, including text analysis, sentiment analysis, text summarization, question answering, machine translation, chatbots, speech recognition, and information retrieval.

To see how this applies to test data generation, consider a hypothetical scenario: testing a sentiment analysis tool for product reviews. To evaluate the tool's functionality, we can use AI-driven methods to create synthetic product reviews that express a range of sentiments.

In this code:

- We navigate to the sentiment analysis tool.

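- We generate synthetic reviews using Faker's faker.lorem.sentence() method. Lorem sentences are placeholder text with no real sentiment, so they exercise the form and the results display rather than the accuracy of the classification.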

- We fill the textarea with the synthetic reviews.

- We submit the form to analyze sentiment.

- We wait for the sentiment analysis results to appear.

- We assert that the results are displayed on the page.
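`faker.lorem.sentence()` produces placeholder text with no sentiment. A test that checks whether the tool classifies reviews correctly therefore needs reviews with known labels. The sketch below builds them from small hand-written templates, so each review carries the sentiment a correct tool should report. The templates, the product list, the label names, and the `makeLabeledReviews` function are all assumptions for illustration. A language model could produce more varied phrasings, but each generated review would still need an expected label.

```javascript
// Hypothetical templates: {product} is filled in, and each template carries its expected label.
const TEMPLATES = [
  { text: "I love this {product}, it works perfectly.", label: "positive" },
  { text: "This {product} exceeded my expectations.", label: "positive" },
  { text: "The {product} broke after two days. Very disappointed.", label: "negative" },
  { text: "Terrible {product}, I want a refund.", label: "negative" },
  { text: "The {product} arrived on Tuesday.", label: "neutral" },
];

const PRODUCTS = ["blender", "backpack", "kettle"];

// Cross every template with every product for a small but varied labeled set.
function makeLabeledReviews() {
  const reviews = [];
  for (const product of PRODUCTS) {
    for (const { text, label } of TEMPLATES) {
      reviews.push({ text: text.replace("{product}", product), expected: label });
    }
  }
  return reviews;
}

const reviews = makeLabeledReviews();
console.log(reviews.length, reviews[0]);
```

In a Playwright test, each `review.text` would be typed into the textarea, and the displayed result would be compared with `review.expected`.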

Conclusion:

AI-powered test data generation is transforming software testing by applying innovative methods and tools that make testing more efficient and effective. Using machine learning algorithms, it creates realistic datasets that mimic real-world scenarios and cover a broad spectrum of inputs and anomalies. With tools like Faker.js, testers can easily generate synthetic data for many scenarios, streamlining testing workflows. Embracing AI-driven approaches supports more comprehensive testing, better software quality, and faster development cycles, all of which are crucial for staying competitive in today's evolving technological landscape.

Reference: https://gretel.ai/blog/test-data-generation