Introduction to Apache Airflow

Airbnb originally created Apache Airflow as an open-source platform to programmatically author, schedule, and monitor workflows. They later donated it to the Apache Software Foundation.

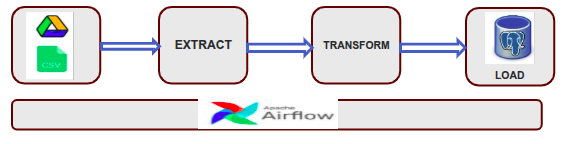

Airflow is designed for orchestrating complex computational workflows and data processing pipelines.

What is Apache Airflow

Apache Airflow is an open-source platform designed to programmatically author, schedule, and monitor workflows.

Instead of managing workflows manually or through simple cron jobs, Airflow allows us to create workflows as code, which improves flexibility, monitoring, error handling, and scalability.

It was originally developed at Airbnb in 2014 and later became a project under the Apache Software Foundation.

In Airflow, you define workflows as Directed Acyclic Graphs (DAGs), where each node represents a unit of work (a task) and each edge represents a dependency between tasks.

Why Use Apache Airflow

Managing complex workflows becomes increasingly challenging as projects grow in size. Traditional methods like cron jobs or simple scripts lack flexibility, monitoring capabilities, and retry mechanisms. Airflow solves these problems by providing:

- A programmatic way to define workflows as Python code.

- A powerful web UI for tracking the status of tasks and workflows.

- Configurable automatic retries and alerting on task failure.

- Scalable execution engines that can run workflows locally, on a cluster, or in the cloud.

This makes Airflow well suited to modern data engineering, machine learning, and DevOps use cases where reliability and scalability are critical.

Core Concepts of Apache Airflow

DAG (Directed Acyclic Graph)

A DAG represents a workflow in which tasks run in a defined order without any cycles, meaning no task can depend, directly or indirectly, on itself. It organizes the flow of tasks, showing their dependencies and execution sequence. Each DAG is defined in a Python file and includes metadata such as a start date, a schedule, and the relationships between tasks.
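Airflow builds the graph for you from your task dependencies, but the core idea (find a valid run order, reject cycles) can be shown in plain Python. The sketch below uses the standard-library `graphlib` module (Python 3.9+), not Airflow's API, and the task names are hypothetical.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each key is a task, each value is the set of
# upstream tasks it depends on.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"extract"},
    "load": {"transform", "validate"},
}


def execution_order(deps):
    """Return one valid run order, or raise CycleError if the graph has a cycle."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
print(order)  # "extract" comes first and "load" comes last

# Making "extract" depend on "load" closes a loop, which is exactly what
# the "acyclic" in DAG rules out.
cyclic = dict(dependencies, extract={"load"})
try:
    execution_order(cyclic)
except CycleError:
    print("cycle detected")
```

In an actual Airflow DAG file, the same dependencies would typically be declared with Airflow's bitshift syntax, for example `extract >> [transform, validate] >> load`.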

Task

A task is a single unit of work within a DAG. Each task represents a specific action, such as running a script, querying a database, or sending an email. Tasks are created using operators and are linked together in the DAG to define the order of execution.

Operator

An operator defines the action that a task will perform. It acts as a template for the task's work, such as executing Python code (PythonOperator), running a shell command (BashOperator), or transferring data (S3ToRedshiftOperator). Each task in a DAG is created by instantiating an operator.
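The "operator is a template, task is an instance" relationship can be illustrated with a small class hierarchy. This is a plain-Python sketch: `ToyBaseOperator` and `ToyPythonOperator` are hypothetical names, not Airflow classes. Real Airflow operators subclass `BaseOperator` and implement an `execute(self, context)` method, and `python_callable` is the parameter name PythonOperator uses, but everything else here is simplified.

```python
from typing import Any, Callable


class ToyBaseOperator:
    """Hypothetical stand-in for an operator: a reusable template of work."""

    def __init__(self, task_id: str):
        self.task_id = task_id

    def execute(self, context: dict) -> Any:
        raise NotImplementedError


class ToyPythonOperator(ToyBaseOperator):
    """Runs a Python callable, loosely mirroring what PythonOperator does."""

    def __init__(self, task_id: str, python_callable: Callable[[], Any]):
        super().__init__(task_id)
        self.python_callable = python_callable

    def execute(self, context: dict) -> Any:
        return self.python_callable()


# Two tasks created from the same operator template, each with its own work.
say_hello = ToyPythonOperator(task_id="say_hello", python_callable=lambda: "hello")
add_numbers = ToyPythonOperator(task_id="add_numbers", python_callable=lambda: 2 + 3)

for task in (say_hello, add_numbers):
    print(task.task_id, task.execute(context={}))
```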

Scheduler

The scheduler is the component responsible for monitoring DAGs and triggering task runs based on their defined schedules. It parses DAG definitions, determines when each DAG should run, and queues tasks for execution once their dependencies are met. This ensures tasks run in the correct order according to both dependencies and timing.
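One part of the scheduler's job, deciding which tasks are ready to be queued, can be sketched in a few lines. This assumes Airflow's default trigger rule, `all_success` (a task runs only after every upstream task succeeded), and ignores timing entirely; the state strings and the `ready_tasks` helper are simplified, hypothetical stand-ins rather than Airflow internals.

```python
# A state of None means the task has not been scheduled yet in this run.
def ready_tasks(deps: dict[str, set[str]], states: dict[str, str]) -> list[str]:
    """Return not-yet-started tasks whose upstream tasks all succeeded."""
    return sorted(
        task
        for task, upstream in deps.items()
        if states.get(task) is None
        and all(states.get(u) == "success" for u in upstream)
    )


deps = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"extract"},
    "load": {"transform", "validate"},
}

print(ready_tasks(deps, {}))                      # ['extract']
print(ready_tasks(deps, {"extract": "success"}))  # ['transform', 'validate']
print(ready_tasks(deps, {"extract": "success",
                         "transform": "success",
                         "validate": "running"}))  # []
```

Re-evaluating this after every state change is what lets the fan-in task `load` wait until both of its upstream tasks have finished.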

Executor

The executor determines how queued tasks are actually run. Once the scheduler queues a task, the executor hands it to the configured backend (e.g., a local process, a Celery worker, or a Kubernetes pod). The type of executor determines whether tasks run sequentially, in parallel on a single machine, or across multiple machines.
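The difference between sequential and parallel execution can be seen with a standard-library thread pool. This is only an analogy: a pool with one worker behaves like Airflow's SequentialExecutor (one task at a time), and a pool with several workers resembles the LocalExecutor running tasks in parallel on one machine, although Airflow's executors do not use this code. The sleeping tasks are hypothetical placeholders for real work.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def make_task(name: str, seconds: float):
    """Hypothetical task that sleeps to simulate work, then returns its name."""
    def run() -> str:
        time.sleep(seconds)
        return name
    return run


queued = [make_task(f"task_{i}", 0.2) for i in range(4)]


def run_queued(tasks, max_workers: int) -> tuple[list[str], float]:
    """Run queued tasks in a pool; max_workers=1 runs them one after another."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(lambda task: task(), tasks))
    return results, time.perf_counter() - start


seq_results, seq_time = run_queued(queued, max_workers=1)
par_results, par_time = run_queued(queued, max_workers=4)
print(f"sequential: {seq_time:.2f}s, parallel: {par_time:.2f}s")
```

With four 0.2-second tasks, the sequential run takes roughly four times as long as the parallel one, while both produce the same results.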

Metadata Database

The metadata database is a critical component that stores Airflow's internal state: information about DAGs, tasks, schedules, and executions. It tracks things like task statuses (e.g., success, failed), DAG run history, connections, variables, and more. Because this state is persisted, Airflow can monitor, manage, and recover workflows reliably.
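Why persisting state matters can be illustrated with an in-memory SQLite table. The table is loosely named after Airflow's `task_instance` table, but the schema, the `set_state` helper, and the run ID value are simplified and hypothetical; Airflow's real schema has many more columns and is managed for you.

```python
import sqlite3

# Simplified, hypothetical schema: one row per task per DAG run.
conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE task_instance (
           dag_id TEXT, task_id TEXT, run_id TEXT, state TEXT,
           PRIMARY KEY (dag_id, task_id, run_id)
       )"""
)


def set_state(dag_id, task_id, run_id, state):
    """Insert a task's state for a run, or overwrite it if the row exists."""
    conn.execute(
        "INSERT OR REPLACE INTO task_instance VALUES (?, ?, ?, ?)",
        (dag_id, task_id, run_id, state),
    )


run = "run_2024_01_01"  # hypothetical run ID
set_state("etl", "extract", run, "success")
set_state("etl", "transform", run, "running")
set_state("etl", "transform", run, "failed")  # later update replaces "running"

# Because state is stored, a restarted process (or a UI) can ask what
# happened in this run without re-executing anything.
rows = conn.execute(
    "SELECT task_id, state FROM task_instance "
    "WHERE dag_id = ? AND run_id = ? ORDER BY task_id",
    ("etl", run),
).fetchall()
print(rows)  # [('extract', 'success'), ('transform', 'failed')]
```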

DAG Architecture and Workflow

DAG stands for Directed Acyclic Graph. It is the fundamental concept in Airflow, representing a workflow or pipeline of tasks.

A DAG defines:

- When the tasks should run (scheduling)

- How they are connected (dependencies)

- What each task does (the work itself)

Features of Apache Airflow

Dynamic Workflow Creation

Dynamic Workflow Creation in Apache Airflow refers to the ability to generate tasks and DAGs programmatically using Python code. Instead of manually defining each task, you can use loops, conditionals, or external configurations (like JSON or a database) to create tasks dynamically. This makes it easier to build scalable and reusable workflows, especially when dealing with similar or repetitive tasks.
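The loop-over-configuration pattern can be sketched without Airflow. Here a hypothetical JSON config lists tables, and the code generates one extract task per table plus a final task that depends on all of them. The config keys, task names, and `build_pipeline` helper are illustrative assumptions; in a real DAG file the loop body would instantiate operators instead of building a dictionary.

```python
import json

# Hypothetical external configuration, e.g. the contents of a JSON file.
config_json = """
{
  "tables": ["customers", "orders", "payments"],
  "final_step": "build_report"
}
"""


def build_pipeline(config: dict) -> dict[str, set[str]]:
    """Generate one extract task per table, all feeding a single final task."""
    deps = {f"extract_{table}": set() for table in config["tables"]}
    # The right-hand side is evaluated first, so it holds only the extract tasks.
    deps[config["final_step"]] = set(deps)
    return deps


pipeline = build_pipeline(json.loads(config_json))
for task, upstream in pipeline.items():
    print(task, "<-", sorted(upstream))
```

Adding a fourth table to the config adds a fourth extract task and a new dependency for `build_report`, with no change to the code.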

Rich Operator Library

Apache Airflow provides a wide range of pre-built operators to simplify workflow development. These include operators for common tasks like running Bash commands, executing Python functions, performing SQL queries, and interacting with cloud services such as AWS (e.g., S3, Redshift), GCP (e.g., BigQuery, Cloud Storage), and Azure. Many of the cloud-specific operators ship in separately installed provider packages (for example, apache-airflow-providers-amazon). This rich library enables quick integration with various systems without writing custom code from scratch.

Monitoring and Logging

Apache Airflow offers a robust monitoring and logging system through its web UI and backend logs. Users can track the status of DAG runs and individual tasks, view detailed logs for troubleshooting, and get alerts on failures or retries. This visibility helps ensure workflows run smoothly and makes debugging easier.
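The retry-then-alert behavior can be sketched with the standard `logging` module. The parameter names `retries`, `retry_delay`, and `on_failure_callback` echo real Airflow task arguments, but this `run_with_retries` function is a hypothetical simplification: in Airflow, `retry_delay` is a `timedelta` and the failure callback receives a context dictionary rather than the arguments used here.

```python
import logging
import time

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("toy_task_runner")


def run_with_retries(task, task_id, retries=2, retry_delay=0.0, on_failure_callback=None):
    """Run `task`, retrying up to `retries` times and alerting on final failure."""
    for attempt in range(1, retries + 2):  # the first try plus `retries` retries
        try:
            result = task()
            log.info("%s succeeded on attempt %d", task_id, attempt)
            return result
        except Exception as exc:
            if attempt <= retries:
                log.warning("%s failed on attempt %d (%s); retrying", task_id, attempt, exc)
                time.sleep(retry_delay)
            else:
                log.error("%s failed after %d attempts", task_id, attempt)
                if on_failure_callback is not None:
                    on_failure_callback(task_id, exc)
                raise


# A hypothetical flaky task that fails twice, then succeeds.
calls = {"n": 0}

def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise RuntimeError("temporary error")
    return "done"


alerts = []
result = run_with_retries(flaky, "flaky_task", retries=2,
                          on_failure_callback=lambda tid, exc: alerts.append(tid))

# A hypothetical task that always fails, so the alert callback fires.
def broken():
    raise RuntimeError("permanent error")

try:
    run_with_retries(broken, "broken_task", retries=1,
                     on_failure_callback=lambda tid, exc: alerts.append(tid))
except RuntimeError:
    pass
```

The log lines record every attempt, which is the kind of trail you would inspect when troubleshooting, and the callback fires only after the last retry is exhausted.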

Extensibility

Apache Airflow is highly extensible, allowing users to create custom operators, sensors, hooks, and plugins to tailor workflows to specific needs. This flexibility lets you integrate with virtually any system or service, extend Airflow’s functionality, and adapt it to complex or unique use cases beyond the built-in capabilities.

Scalability

Airflow is designed to scale by supporting executors such as CeleryExecutor and KubernetesExecutor, which run tasks in parallel across many workers or nodes. This makes it possible to grow from a small single-machine setup to a large distributed environment as data volumes and workflow complexity increase.
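Distributed executors follow a queue-and-worker pattern: with CeleryExecutor, for example, tasks are placed on a message broker (commonly Redis or RabbitMQ) and any available worker picks them up. The sketch below mimics that pattern with a standard-library `queue.Queue` and threads standing in for the broker and workers; the `worker` and `run_on_workers` helpers are hypothetical and are not how Celery itself is implemented.

```python
import queue
import threading


def worker(name, tasks, results, lock):
    """Pull task IDs from the shared queue until a None stop signal arrives."""
    while True:
        task_id = tasks.get()
        if task_id is None:
            tasks.task_done()
            break
        with lock:
            results.append((task_id, name))  # record which worker ran the task
        tasks.task_done()


def run_on_workers(task_ids, num_workers):
    """Distribute task IDs across `num_workers` threads via a shared queue."""
    tasks = queue.Queue()
    results, lock = [], threading.Lock()
    threads = [
        threading.Thread(target=worker, args=(f"worker_{i}", tasks, results, lock))
        for i in range(num_workers)
    ]
    for t in threads:
        t.start()
    for task_id in task_ids:
        tasks.put(task_id)
    for _ in threads:
        tasks.put(None)  # one stop signal per worker, queued after all tasks
    for t in threads:
        t.join()
    return results


done = run_on_workers([f"task_{i}" for i in range(10)], num_workers=3)
print(sorted(task_id for task_id, _ in done))
```

Scaling out in this model means adding workers; the code that enqueues tasks does not change, which is the property that lets the same DAG run on one machine or many.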

Integration

Apache Airflow integrates with a wide variety of tools, databases, and cloud platforms through its extensive set of operators, hooks, and connections. This makes it practical to automate workflows across systems such as AWS, Google Cloud, Azure, Hadoop, and many databases, enabling end-to-end data pipelines.

Conclusion

Apache Airflow is a powerful, flexible, and extensible tool for orchestrating complex workflows and data pipelines. Its Python-based, code-centric approach makes it easy to build, maintain, and scale workflows, especially in data engineering and machine learning environments.

With features like dynamic DAG creation, built-in scheduling, retries, logging, and a rich UI, Airflow helps teams automate and monitor their processes efficiently.