Introduction

In today’s data-driven world, optimizing data storage is crucial for both efficiency and cost-effectiveness. Choosing the right data architecture makes it easier to manage large volumes of data while keeping access fast and the system scalable. In this post, we will explore storage systems, optimization strategies, and cloud platforms that can help you streamline your data storage.

Types of Data Storage Systems

Understanding the types of data storage systems is key to making informed decisions about where and how to store your data.

Relational Databases

Relational databases are ideal for structured data that fits a tabular format. Foreign keys model the relationships between entities, and indexes speed up lookups. These systems are optimized for applications that require high data integrity and transactional support.

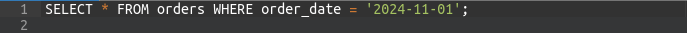

For example, with SQL, you can query structured data efficiently:

- This query retrieves all orders placed on a specific date.
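
The query described above can be sketched in a runnable form. This is a minimal example, assuming a hypothetical `orders` table with an `order_date` column; SQLite (via Python's built-in `sqlite3` module) stands in for a production relational database so the example runs without a server.

```python
import sqlite3

# In-memory database so the sketch is self-contained.
# Table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "order_id INTEGER PRIMARY KEY, customer_id INTEGER, "
    "order_date TEXT, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, order_date, total) VALUES (?, ?, ?)",
    [(1, "2024-01-15", 40.0), (2, "2024-01-15", 25.5), (1, "2024-01-16", 12.0)],
)

# Parameterized query: retrieve all orders placed on a specific date.
orders_on_date = conn.execute(
    "SELECT order_id, customer_id, total FROM orders "
    "WHERE order_date = ? ORDER BY order_id",
    ("2024-01-15",),
).fetchall()
print(orders_on_date)  # [(1, 1, 40.0), (2, 2, 25.5)]
```

Passing the date as a parameter rather than formatting it into the SQL string avoids SQL injection and lets the database reuse the prepared statement.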

NoSQL Databases

NoSQL databases are designed to handle unstructured and semi-structured data. They offer flexibility, storing data in a variety of formats, such as JSON or XML documents, without enforcing a predefined schema. These databases are well-suited for big data, real-time applications, and unstructured datasets.

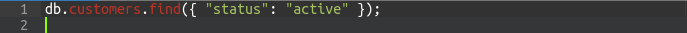

Code Example (JavaScript, MongoDB):

- This MongoDB query retrieves all active customers.
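
A query for active customers might look like the following Python sketch using `pymongo`. The `status` field, the `shop.customers` collection, and the connection URI are assumptions for illustration. The same filter document works in the MongoDB shell as `db.customers.find({status: "active"})`.

```python
def find_active_customers(customers):
    """Return all documents whose status is "active".

    `customers` is expected to be a pymongo Collection (or any object with
    a compatible find() method). The "status" field name is an assumption.
    """
    return list(customers.find({"status": "active"}))


def connect_customers(uri="mongodb://localhost:27017"):
    """Open the hypothetical `shop.customers` collection (requires pymongo)."""
    from pymongo import MongoClient  # third-party: pip install pymongo

    return MongoClient(uri)["shop"]["customers"]
```

With a running MongoDB instance, `find_active_customers(connect_customers())` would return the matching documents as a list of dictionaries.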

Object Storage

When dealing with large, unstructured data like media files, object storage platforms such as Amazon S3 or Google Cloud Storage are ideal. These services provide scalable, cost-effective storage solutions for backups, archives, and large files.

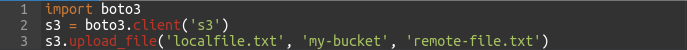

Code Example (Python, AWS S3):

- This Python code uploads a file to AWS S3.
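
An upload along those lines could be sketched with `boto3` as follows. The bucket name, key, and file path in the usage note are placeholders, and the optional `s3_client` parameter is an addition of this sketch that makes the function easy to test without AWS credentials.

```python
def upload_file_to_s3(local_path, bucket, key, s3_client=None):
    """Upload a local file to S3 and return its s3:// URI.

    If no client is supplied, a default boto3 client is created, which
    picks up credentials from the environment or AWS config files.
    """
    if s3_client is None:
        import boto3  # third-party: pip install boto3

        s3_client = boto3.client("s3")
    # upload_file switches to multipart uploads for large files.
    s3_client.upload_file(local_path, bucket, key)
    return f"s3://{bucket}/{key}"
```

For example, `upload_file_to_s3("video.mp4", "my-media-bucket", "uploads/video.mp4")` would upload the file, assuming the bucket exists and the caller has write access to it.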

Choosing Between Data Lakes, Data Warehouses, and Hybrid Approaches

Choosing between data lakes, data warehouses, and hybrid approaches depends on your specific storage and analysis needs.

Data Lakes

A data lake stores raw, unprocessed data, allowing you to ingest everything in its native format, such as CSV or Parquet. This flexibility makes data lakes ideal for big data, machine learning, and analytics tasks that require diverse datasets.

Example (Bash, AWS S3 for Data Lake):

- Data is stored in raw format in a data lake, allowing for later analysis and processing.
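
On S3, a data lake is often organized purely through object key naming. The sketch below builds a key for a raw file, assuming a hypothetical `raw/<source>/year=/month=/day=` layout; the prefix names are illustrative, not a required convention. With the AWS CLI, a file could then be copied to that key using `aws s3 cp`.

```python
from datetime import date


def raw_object_key(source, filename, ingest_date):
    """Build an object key for a raw file, grouped by source and ingest date.

    The layout is an assumption for illustration:
    raw/<source>/year=YYYY/month=MM/day=DD/<filename>
    """
    return (
        f"raw/{source}/"
        f"year={ingest_date:%Y}/month={ingest_date:%m}/day={ingest_date:%d}/"
        f"{filename}"
    )


key = raw_object_key("orders", "orders.csv", date(2024, 1, 15))
print(key)  # raw/orders/year=2024/month=01/day=15/orders.csv
```

Date-based prefixes like these keep the raw files untouched while letting later jobs read only the days they need.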

Data Warehouses

Data warehouses store structured and processed data, optimized for fast querying and analytics. They support large-scale reporting and data analysis, making them suitable for business intelligence tasks.

Example (SQL, Amazon Redshift Data Warehouse):

- This SQL query could be used to quickly analyze sales data stored in a data warehouse.
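
A typical analytical query aggregates a fact table. In the sketch below, SQLite stands in for the warehouse so the example runs locally, and the `sales` table and its columns are hypothetical. The `GROUP BY` query itself is standard SQL and should run on Redshift with little or no change.

```python
import sqlite3

# SQLite is only a local stand-in for a warehouse such as Redshift.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (sale_date TEXT, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [
        ("2024-01-15", "EU", 100.0),
        ("2024-01-15", "US", 250.0),
        ("2024-01-16", "EU", 50.0),
    ],
)

# Total sales per region, highest first.
sales_by_region = conn.execute(
    "SELECT region, SUM(amount) AS total_sales "
    "FROM sales GROUP BY region ORDER BY total_sales DESC"
).fetchall()
print(sales_by_region)  # [('US', 250.0), ('EU', 150.0)]
```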

Hybrid Approaches

In a hybrid approach, data is stored in both a data lake and a data warehouse. Raw data is stored in the lake, while processed data is moved to the warehouse for efficient querying. This combination allows you to leverage the strengths of both environments.
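
The lake-to-warehouse flow can be illustrated with a small sketch. Here a CSV string stands in for a raw file in the lake and an in-memory SQLite table stands in for the warehouse; the data, column names, and cleaning rule are all assumptions for illustration.

```python
import csv
import io
import sqlite3

# Stand-in for a raw file in the lake (hypothetical data with one bad row).
RAW_CSV = (
    "sale_date,region,amount\n"
    "2024-01-15,EU,100.0\n"
    "2024-01-15,US,not_a_number\n"
    "2024-01-16,EU,50.0\n"
)


def clean_rows(raw_text):
    """Parse raw CSV and yield only rows whose amount is a valid number."""
    for row in csv.DictReader(io.StringIO(raw_text)):
        try:
            yield row["sale_date"], row["region"], float(row["amount"])
        except ValueError:
            continue  # the raw data is left as-is; only the load skips the row


# Stand-in for the warehouse: load the processed rows into a typed table.
warehouse = sqlite3.connect(":memory:")
warehouse.execute("CREATE TABLE sales (sale_date TEXT, region TEXT, amount REAL)")
warehouse.executemany("INSERT INTO sales VALUES (?, ?, ?)", clean_rows(RAW_CSV))

loaded = warehouse.execute("SELECT COUNT(*), SUM(amount) FROM sales").fetchone()
print(loaded)  # (2, 150.0)
```

The raw input keeps every record, including the malformed one, so the cleaning rule can be changed later and the warehouse table rebuilt from the lake.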

Storage Optimization Techniques

Efficient storage is not just about selecting the right system; it’s about optimizing your storage strategies to enhance speed and reduce costs. Below are key techniques to optimize data storage.

Compression

Compression algorithms reduce the size of data, making it faster to transfer and cheaper to store. Popular formats include GZIP, Snappy, and LZ4.

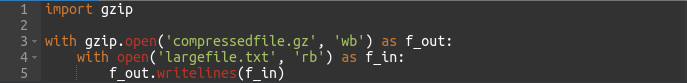

Code Example (Python, GZIP Compression):

- This code compresses a file using GZIP, reducing storage space.
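
A minimal sketch of file compression with Python's standard `gzip` module follows. The sample log file is generated on the fly and its contents are made up; highly repetitive data like this compresses far better than typical real-world files.

```python
import gzip
import os
import shutil
import tempfile


def gzip_file(src_path, dst_path=None):
    """Compress src_path with GZIP and return the path of the .gz file."""
    dst_path = dst_path or src_path + ".gz"
    with open(src_path, "rb") as src, gzip.open(dst_path, "wb") as dst:
        shutil.copyfileobj(src, dst)  # streams in chunks, so large files are fine
    return dst_path


# Demo with a generated, highly repetitive sample file.
tmp_dir = tempfile.mkdtemp()
sample_path = os.path.join(tmp_dir, "events.log")
with open(sample_path, "w") as f:
    f.write("2024-01-15 INFO order created\n" * 1000)

compressed_path = gzip_file(sample_path)
original_size = os.path.getsize(sample_path)
compressed_size = os.path.getsize(compressed_path)
print(f"{original_size} bytes -> {compressed_size} bytes")
```

GZIP generally favors compression ratio, while Snappy and LZ4 favor speed, so the right choice depends on whether storage cost or CPU time matters more for the workload.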

Partitioning

Partitioning helps break large datasets into smaller, more manageable chunks, allowing for faster data retrieval. By partitioning data based on criteria like time or geography, queries can be processed more efficiently.

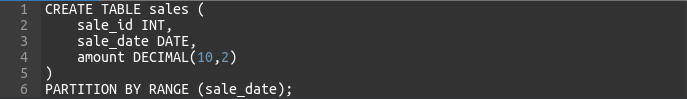

Code Example (SQL Partitioning):

- This SQL code partitions the sales table by the sale_date field, which allows for faster queries on specific time periods.
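
The effect of date-based partitioning can be illustrated in plain Python. This is a conceptual sketch with made-up data, not database code: rows are grouped into monthly partitions, and a query for one month reads only that partition. In PostgreSQL, for instance, a comparable layout would be declared with `PARTITION BY RANGE (sale_date)`.

```python
from collections import defaultdict
from datetime import date


def partition_key(sale_date):
    """Map a sale date to its monthly partition, e.g. '2024-01'."""
    return sale_date.strftime("%Y-%m")


def partition_sales(rows):
    """Group sales rows into one list per month."""
    partitions = defaultdict(list)
    for row in rows:
        partitions[partition_key(row["sale_date"])].append(row)
    return partitions


def total_for_month(partitions, year, month):
    """Sum amounts for one month by scanning only that month's partition."""
    return sum(row["amount"] for row in partitions.get(f"{year:04d}-{month:02d}", []))


sales = [
    {"sale_date": date(2024, 1, 15), "amount": 100.0},
    {"sale_date": date(2024, 1, 20), "amount": 50.0},
    {"sale_date": date(2024, 2, 3), "amount": 75.0},
]
partitions = partition_sales(sales)
print(sorted(partitions))                    # ['2024-01', '2024-02']
print(total_for_month(partitions, 2024, 1))  # 150.0
```

Skipping partitions that cannot contain matching rows is what makes time-bounded queries faster on partitioned tables.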

Cloud Storage Platforms

Cloud storage platforms offer scalable and flexible solutions for storing data. Here, we will discuss some popular services that can meet a variety of storage needs.

AWS S3

AWS S3 is an object storage service known for its durability and scalability. It is perfect for storing large volumes of data in a data lake or as a backup solution.

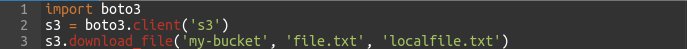

Code Example (Python, AWS S3):
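
As one possible illustration, the sketch below lists the object keys stored under a prefix using `boto3`. The bucket and prefix names are placeholders, and the optional `s3_client` parameter is an addition of this sketch for testability.

```python
def list_keys(bucket, prefix="", s3_client=None):
    """Return the object keys under a prefix.

    A single list_objects_v2 call returns at most 1,000 keys; pagination
    is omitted here to keep the sketch short.
    """
    if s3_client is None:
        import boto3  # third-party: pip install boto3

        s3_client = boto3.client("s3")
    response = s3_client.list_objects_v2(Bucket=bucket, Prefix=prefix)
    # "Contents" is absent from the response when nothing matches.
    return [obj["Key"] for obj in response.get("Contents", [])]
```

For example, `list_keys("my-data-lake", "raw/orders/")` would return the keys of the raw order files, assuming that bucket and prefix exist.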

Azure Blob Storage

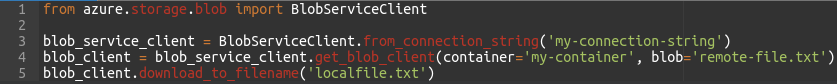

Azure Blob Storage stores unstructured data across hot, cool, and archive access tiers, priced according to how often the data is accessed. It’s versatile and suitable for a wide variety of applications, including backups and large file storage.

Code Example (Python, Azure Blob Storage):
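
A blob upload might be sketched as follows with the `azure-storage-blob` package. The connection string, container name, and blob name are placeholders, and splitting the code into two helpers is a choice made here so the upload logic can be tested without an Azure account.

```python
def upload_blob(container_client, blob_name, data, overwrite=True):
    """Upload bytes (or a file-like object) as a blob and return its name.

    `container_client` is expected to be an azure.storage.blob.ContainerClient.
    """
    container_client.upload_blob(name=blob_name, data=data, overwrite=overwrite)
    return blob_name


def get_container_client(connection_string, container_name):
    """Create a ContainerClient from a storage account connection string."""
    # third-party: pip install azure-storage-blob
    from azure.storage.blob import BlobServiceClient

    service = BlobServiceClient.from_connection_string(connection_string)
    return service.get_container_client(container_name)
```

A typical call would open a local file in binary mode and pass the file object as `data`, for example `upload_blob(client, "backups/db.dump", open("db.dump", "rb"))`.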

Google Cloud Storage

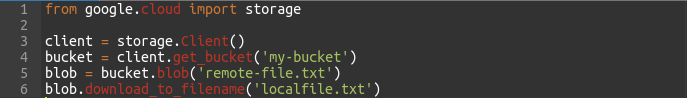

Google Cloud Storage provides high availability and global distribution, making it an excellent choice for serving large datasets and building data lakes.

Code Example (Python, Google Cloud Storage):
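
An upload to Google Cloud Storage could be sketched like this with the `google-cloud-storage` package. The bucket and object names are placeholders, and the optional `client` parameter is an addition of this sketch for testability.

```python
def upload_to_gcs(local_path, bucket_name, blob_name, client=None):
    """Upload a local file to a GCS bucket and return its gs:// URI.

    If no client is supplied, a default one is created using the
    application default credentials.
    """
    if client is None:
        # third-party: pip install google-cloud-storage
        from google.cloud import storage

        client = storage.Client()
    blob = client.bucket(bucket_name).blob(blob_name)
    blob.upload_from_filename(local_path)
    return f"gs://{bucket_name}/{blob_name}"
```

For example, `upload_to_gcs("dataset.parquet", "my-datasets", "raw/dataset.parquet")` would upload the file, assuming the bucket exists and credentials are configured.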

Data Sharding and Indexing Strategies

When working with large datasets, sharding and indexing are two key strategies for improving performance and scalability.

Sharding

Sharding divides data into smaller, distributed subsets called “shards,” which can be spread across multiple servers or databases to reduce load and enhance performance. This is especially beneficial for NoSQL databases.

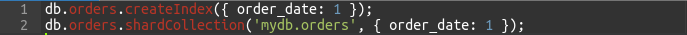

Code Example (Javascript, MongoDB Sharding):

Here, we create an index on order_date and shard the collection on this field, so that queries can be distributed across shards.
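
The steps described above could be sketched in Python with `pymongo` as follows. The `shop` database and `orders` collection names are assumptions, and the commands only succeed when the client is connected to a `mongos` router of a sharded cluster.

```python
def shard_orders_by_date(client, db_name="shop", coll_name="orders"):
    """Index order_date, then shard the collection on that field.

    `client` is expected to be a pymongo MongoClient connected to a mongos
    router. Database and collection names are hypothetical.
    """
    # The shard key must be backed by an index.
    client[db_name][coll_name].create_index([("order_date", 1)])
    # Enable sharding for the database (newer MongoDB versions no longer
    # require this step, but it is harmless there).
    client.admin.command("enableSharding", db_name)
    # Range-shard the collection on order_date.
    client.admin.command(
        "shardCollection", f"{db_name}.{coll_name}", key={"order_date": 1}
    )
```

One design caveat: a steadily increasing key such as a date tends to send all new writes to the same shard, so write-heavy workloads may be better served by a hashed or compound shard key.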

Indexing

An index is an auxiliary data structure built on frequently queried fields. It lets the database locate matching rows without scanning the whole table, which speeds up lookups at the cost of extra storage and slightly slower writes.

Code Example (SQL Indexing):

This creates an index on the customer_id column, speeding up queries that filter by this field.
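
The sketch below creates such an index and asks the database how it would execute a filtered query. SQLite stands in for a production database, and the `orders` table and index name are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "order_id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)

# Index the column used in the WHERE clause.
conn.execute("CREATE INDEX idx_orders_customer_id ON orders (customer_id)")

# EXPLAIN QUERY PLAN reports how SQLite intends to run the query; the
# human-readable description is the last column of each row.
plan = conn.execute(
    "EXPLAIN QUERY PLAN SELECT * FROM orders WHERE customer_id = ?", (42,)
).fetchall()
plan_detail = " ".join(row[-1] for row in plan)
print(plan_detail)
```

The printed plan should mention `idx_orders_customer_id`, indicating an index search rather than a full table scan; the exact wording varies between SQLite versions.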

Data Archiving and Retention Policies

Data archiving and retention are crucial for managing the lifecycle of your data and ensuring compliance with regulatory requirements.

Archiving

Rarely accessed data is a good candidate for low-cost archival storage, such as Amazon S3 Glacier or the archive tier of Azure Blob Storage.

Code Example (Python, AWS Glacier Archiving):
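
One common way to archive a file is to upload it to S3 with a Glacier storage class, sketched below with `boto3`. This is an assumption about the intended approach: the bucket and key are placeholders, and the separate Glacier vault API is not shown.

```python
def archive_to_glacier(local_path, bucket, key, s3_client=None):
    """Upload a file to S3 using the GLACIER storage class.

    Objects stored this way must be restored before they can be read,
    which can take minutes to hours depending on the retrieval option.
    """
    if s3_client is None:
        import boto3  # third-party: pip install boto3

        s3_client = boto3.client("s3")
    s3_client.upload_file(
        local_path, bucket, key, ExtraArgs={"StorageClass": "GLACIER"}
    )
    return f"s3://{bucket}/{key}"
```

For example, `archive_to_glacier("logs-2020.tar.gz", "my-archive-bucket", "logs/2020.tar.gz")` would store the file at archive pricing, assuming the bucket exists.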

Retention Policies

Retention policies define how long data should be kept before it is archived or deleted. These policies help manage storage space and ensure compliance with data retention regulations.
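
On S3, a retention policy can be expressed as a bucket lifecycle rule. The sketch below builds a rule that archives objects after one period and deletes them after another; the prefix, day counts, and rule ID format are assumptions for illustration.

```python
def build_lifecycle_rule(prefix, archive_after_days, delete_after_days):
    """Build an S3 lifecycle rule: move to GLACIER, then expire."""
    if delete_after_days <= archive_after_days:
        raise ValueError("delete_after_days must be greater than archive_after_days")
    return {
        "ID": f"retain-{prefix.strip('/') or 'all'}",
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [{"Days": archive_after_days, "StorageClass": "GLACIER"}],
        "Expiration": {"Days": delete_after_days},
    }


def apply_retention(bucket, rules, s3_client=None):
    """Apply lifecycle rules to a bucket.

    Note: this call replaces any lifecycle configuration already on the bucket.
    """
    if s3_client is None:
        import boto3  # third-party: pip install boto3

        s3_client = boto3.client("s3")
    s3_client.put_bucket_lifecycle_configuration(
        Bucket=bucket, LifecycleConfiguration={"Rules": rules}
    )


rule = build_lifecycle_rule("logs/", archive_after_days=90, delete_after_days=365)
print(rule["ID"])  # retain-logs
```

Calling `apply_retention("my-bucket", [rule])` would then archive objects under `logs/` after 90 days and delete them after a year. The actual periods should come from the regulations that apply to the data.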

Conclusion

Choosing the right data architecture and optimizing your data storage practices are critical for handling large volumes of data efficiently. By understanding the differences between relational databases, NoSQL systems, and object storage, and by applying optimization techniques such as compression, partitioning, sharding, and indexing, you can achieve both cost efficiency and high performance.

Whichever cloud platform you use, whether AWS S3, Azure Blob Storage, or Google Cloud Storage, applying the practices outlined in this post will help you scale your data storage and analytics capabilities effectively. The provided code examples serve as a guide to get you started in optimizing your data storage environment.