Introduction

In containerized applications, efficient storage management is crucial. This guide walks through Persistent Volume (PV) management and expansion in Azure Kubernetes Service (AKS), using an Azure file share as the underlying storage. We’ll dynamically create persistent volumes with Azure Files, then tackle the challenge of resizing PVCs without disrupting your pods, with code snippets and best practices along the way.

Let’s first take a look at how Kubernetes creates volumes. Kubernetes supports the creation of volumes in two distinct modes:

Static mode: In this mode, volumes are manually created and referenced in the Pod specification.

Dynamic mode: In this mode, AKS automatically creates volumes and associates them with Persistent Volume Claims (PVCs), based on the storage classes defined within the cluster.

Dynamic provisioning simplifies the management of storage resources: as applications demand more storage, PVs are created on demand to meet those requirements. This is where Storage Classes come into play. A Storage Class defines the type of storage, its characteristics, and the provisioner responsible for creating the underlying storage resource. Kubernetes has also supported resizing PVCs since version 1.11, which lets you increase the size of a PVC without recreating the volume by hand or disrupting the applications that use it.

Prerequisites

- An active Azure Kubernetes Service (AKS) cluster.

- `kubectl` installed and configured to communicate with your Kubernetes cluster.

- An Azure storage account.
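Before going further, it can help to confirm that kubectl is installed and can actually reach the cluster. The following is a minimal sketch; `check_prereqs` is a hypothetical helper name chosen for this post, not part of kubectl or AKS.

```shell
# Hypothetical helper: fail early if kubectl is missing or cannot reach the cluster.
check_prereqs() {
  # kubectl must be available on the PATH
  if ! command -v kubectl >/dev/null 2>&1; then
    echo "kubectl not found" >&2
    return 1
  fi
  # Listing nodes only succeeds if the current context can talk to the cluster
  kubectl get nodes
}
```

Calling `check_prereqs` should list the AKS nodes if everything is configured; an error here usually points at the kubeconfig or the current context.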

Dynamically provision a volume:

Create a storage class->

Let’s create a storage class first. A storage class defines how an Azure file share is created. A storage account is automatically created in the node resource group for use with the storage class, and it holds the Azure file share. I’ve already written the storage class YAML (sc.yaml), so let’s just create it.

    kind: StorageClass
    apiVersion: storage.k8s.io/v1
    metadata:
      name: my-azurefile
    provisioner: file.csi.azure.com # replace with "kubernetes.io/azure-file" if aks version is less than 1.21
    allowVolumeExpansion: true
    mountOptions:
      - dir_mode=0777
      - file_mode=0777
      - uid=0
      - gid=0
      - mfsymlinks
      - cache=strict
      - actimeo=30
    parameters:
      skuName: Standard_LRS

The allowVolumeExpansion parameter in a StorageClass configuration is a critical option that determines whether the Persistent Volumes (PVs) created using that StorageClass can be resized or not.

This parameter, when set to `true`, allows Persistent Volumes (PVs) created with this StorageClass to be resized dynamically.

To increase storage for a PVC that uses this StorageClass, edit the PVC’s requested size, and the underlying PV will be resized to match. That is why it is essential to include this attribute in our storage class YAML file.

Create the storage class using the kubectl apply command.

    kubectl apply -f sc.yaml

Create a persistent volume claim->

A persistent volume claim (PVC) uses the storage class object to dynamically provision an Azure file share. Let’s create a file named pvc-for-sc.yaml and make sure the storageClassName matches the storage class we created in the previous step.

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: my-azurefile
    spec:
      accessModes:
        - ReadWriteMany
      storageClassName: my-azurefile
      resources:
        requests:
          storage: 10Gi

Create the persistent volume claim using the kubectl apply command.

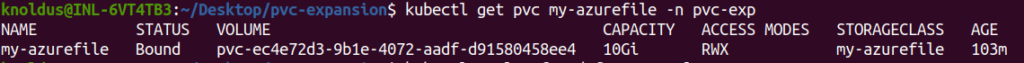

    kubectl apply -f pvc-for-sc.yaml

Now verify that everything is complete: the file share should be ready, and Kubernetes should have set up a secret containing the necessary connection information and credentials. To see how the Persistent Volume Claim (PVC) is doing, just use the kubectl get command. We can also see all these details in the Azure portal.
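The check with kubectl get can be sketched as follows. This is only an illustration: `pvc_phase` and `wait_for_pvc_bound` are hypothetical helper names, the PVC is assumed to live in the current namespace, and the retry count and two-second delay are arbitrary choices.

```shell
# Hypothetical helper: print the phase of a PVC (for example Pending or Bound).
pvc_phase() {
  kubectl get pvc "$1" -o jsonpath='{.status.phase}'
}

# Hypothetical helper: poll until the PVC is Bound, or give up after N tries.
wait_for_pvc_bound() {
  name="$1"
  tries="${2:-30}"
  while [ "$tries" -gt 0 ]; do
    if [ "$(pvc_phase "$name")" = "Bound" ]; then
      echo "$name is Bound"
      return 0
    fi
    tries=$((tries - 1))
    sleep 2
  done
  echo "$name is not Bound yet" >&2
  return 1
}
```

For example, `wait_for_pvc_bound my-azurefile` returns once the claim is bound to a freshly provisioned volume. A plain `kubectl get pvc my-azurefile` shows the same status together with the capacity and storage class.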

Use the persistent volume->

Let’s create an example NGINX pod that uses the Persistent Volume (PV) and Persistent Volume Claim (PVC) we’ve set up with the “my-azurefile” StorageClass.

    kind: Pod
    apiVersion: v1
    metadata:
      name: mypod
    spec:
      containers:
        - name: mypod
          image: mcr.microsoft.com/oss/nginx/nginx:1.15.5-alpine
          resources:
            requests:
              cpu: 100m
              memory: 128Mi
            limits:
              cpu: 250m
              memory: 256Mi
          volumeMounts:
            - mountPath: /usr/share/nginx/html
              name: my-azurefile
              readOnly: false
      volumes:
        - name: my-azurefile
          persistentVolumeClaim:
            claimName: my-azurefile

Let’s apply this pod configuration using the kubectl apply command. Once applied, Kubernetes will create the pod, and NGINX will serve content from the dynamically provisioned persistent volume.
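As a rough sketch of that step, the helper below applies the manifest, waits for the pod to become Ready, and then shows what is mounted at the NGINX content directory. The file name pod.yaml and the function name `deploy_and_check_pod` are assumptions made for this example; the post does not name the pod manifest.

```shell
# Hypothetical helper: create the pod, wait until it is Ready, then show the
# filesystem mounted at the NGINX content directory.
# Assumes the manifest above was saved as pod.yaml (a file name chosen here).
deploy_and_check_pod() {
  manifest="${1:-pod.yaml}"
  kubectl apply -f "$manifest" &&
    kubectl wait --for=condition=Ready pod/mypod --timeout=120s &&
    kubectl exec mypod -- df -h /usr/share/nginx/html
}
```

If the volume is mounted correctly, the `df` output should list the Azure file share with roughly the size requested in the PVC.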

Resize a dynamically provisioned volume:

One common challenge is resizing PVCs without disrupting our applications. Thankfully, Kubernetes has supported resizing PVCs since version 1.11. Here’s how we can achieve this:

Edit the PVC with ‘kubectl edit’ and set the desired storage size:

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: my-azurefile
    spec:
      accessModes:
        - ReadWriteMany
      storageClassName: my-azurefile
      resources:
        requests:
          storage: 20Gi

Modify the resources.requests.storage field to your desired size, save, and close the editor. Kubernetes will automatically resize the underlying Azure File Share without affecting your running pods. We have changed the storage attribute to 20Gi.
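When an interactive editor is inconvenient, for example in a script or pipeline, the same change can be requested with kubectl patch. This is a minimal sketch rather than the only way to do it; `resize_pvc` is a hypothetical helper name.

```shell
# Hypothetical helper: request a new size for a PVC without opening an editor.
# Only increases are accepted; Kubernetes rejects attempts to shrink a PVC.
resize_pvc() {
  name="$1"
  size="$2"
  kubectl patch pvc "$name" \
    -p "{\"spec\":{\"resources\":{\"requests\":{\"storage\":\"$size\"}}}}"
}
```

For the claim in this post, that would be `resize_pvc my-azurefile 20Gi`. The patch only touches spec.resources.requests.storage, so the rest of the PVC stays as it was.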

After resizing the PVC, we can verify it using ‘kubectl get pvc’.

With the PVC’s capacity now resized to 20Gi, we simply restart our pod.
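Both steps can be scripted. The sketch below assumes the pod manifest was saved as pod.yaml (an assumed file name) and uses two hypothetical helpers, `pvc_capacity` and `restart_pod`. Because mypod is a standalone pod rather than part of a Deployment, "restarting" it here means deleting it and creating it again from its manifest.

```shell
# Hypothetical helper: print the capacity the PVC currently reports.
pvc_capacity() {
  kubectl get pvc "$1" -o jsonpath='{.status.capacity.storage}'
}

# Hypothetical helper: "restart" a standalone pod by deleting it and
# re-creating it from its manifest (pod.yaml is an assumed file name).
restart_pod() {
  kubectl delete pod "$1" &&
    kubectl apply -f "${2:-pod.yaml}"
}
```

Once the expansion has completed, `pvc_capacity my-azurefile` should print the new size, and `restart_pod mypod` brings the pod back with the same claim attached.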

Conclusion

Dynamic resizing of Persistent Volumes brings a new level of flexibility to Kubernetes storage management. By understanding the principles of persistent volumes, dynamic provisioning, and the steps involved in resizing, you empower your applications to scale seamlessly with changing storage needs.

However, keep in mind a few considerations:

- The storage backend (in this case, Azure Files) must support dynamic resizing.

- The file system type used in the PVC might have specific requirements or limitations for resizing.

Embrace these practices to unlock the full potential of persistent volumes in your Kubernetes journey. Happy resizing!

Reference

https://learn.microsoft.com/en-us/azure/aks/azure-csi-files-storage-provision