Testing in production might sound like a developer’s or tester’s worst nightmare. In modern development, however, it is becoming more and more prevalent.

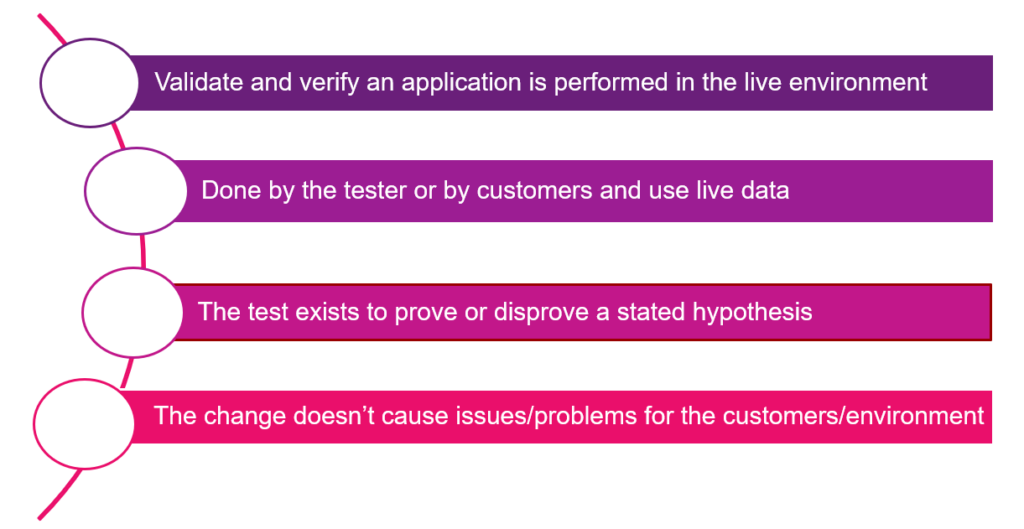

What is Production Testing?

Production testing is the continuous testing of a software product or application in the production environment. It means testing new code changes in the live environment instead of in a staging environment.

Now, let’s discuss why production testing is essential with respect to modern quality strategy.

Why Test in Production?

Creating a staging environment takes a long time, and the result may still not meet business needs. No one cares whether a feature works in staging; what matters is whether it works in the production environment.

In this section, we go through the main benefits of running tests in a production environment.

- Detect problems in scenarios that are hard to replicate in staging environments.

- Improve test accuracy.

- Identify which user experiences are more effective.

- Release faster

- Increase confidence in releases.

- Catch problems before users notice them.

- Verify stability and reliability under realistic load levels.

Production Testing Approach

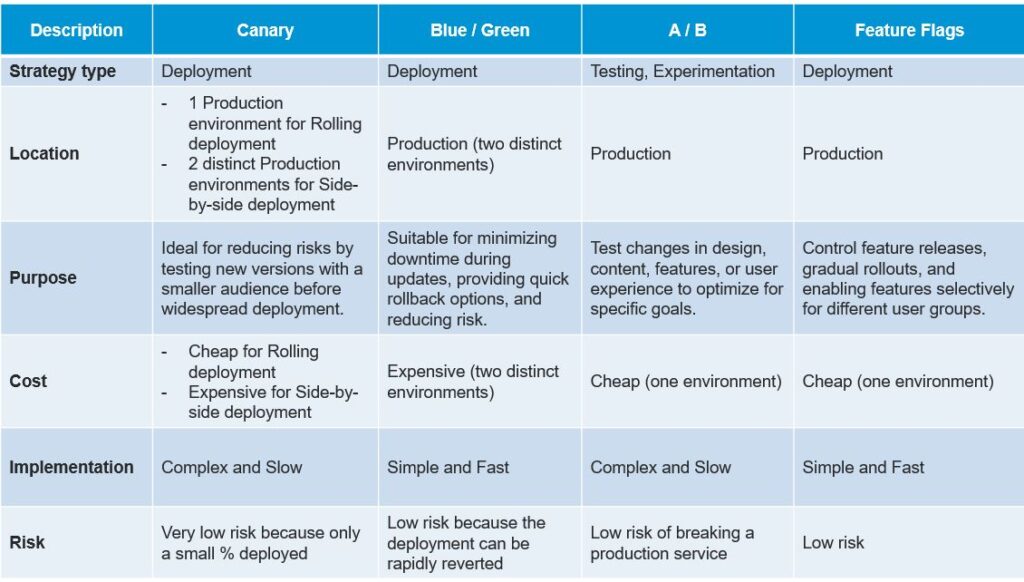

There are numerous approaches available for Production Testing; however, four main approaches are widely popular, namely:

1. A/B Testing (Split Testing/Bucket Testing)

What is A/B Testing?

- A type of statistical experiment that compares two or more versions of a webpage or element to see which one performs better. In an A/B test, some percentage of users automatically receives version A and the rest receive version B.

- To run the experiment, users are split into two groups. Group A, often called the control group, continues to receive the existing product version, while Group B, often called the treatment group, receives a different experience based on a specific hypothesis about the metrics to be measured.

- At the end, the results from the two groups, measured across a variety of metrics, are compared to determine which version performed better.
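The split into control and treatment groups can be sketched as follows. This is a minimal illustration, assuming users are identified by a string ID; the function name `assign_variant`, the experiment name, and the 50/50 split are hypothetical and not taken from any particular A/B testing product. Hashing the ID keeps the assignment stable, so the same user sees the same version on every visit.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically map a user to 'A' (control) or 'B' (treatment)."""
    # Hash the experiment name together with the user id so that the same
    # user can land in different groups across unrelated experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Turn the first 8 hex digits into a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "B" if bucket < treatment_share else "A"


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    groups = [assign_variant(u, "checkout-button-color") for u in users]
    print("share of users in B:", groups.count("B") / len(groups))
```

Changing `treatment_share` exposes a smaller or larger fraction of users to version B without reshuffling users who are already in the treatment group.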

A/B Testing Process

- Collect data/problem: Begin optimizing by analyzing high-traffic areas of the website or application using tools like Google Analytics. Focus on improving pages with high bounce or drop-off rates for better conversion. Don’t forget to explore insights from heatmaps, social media, and surveys for additional areas to enhance.

- Identify goals: Conversion goals are the specific metrics we use to assess the success of a variation compared to the original version. These goals can range from clicking a button or link to completing product purchases.

- Develop hypothesis: After identifying a goal, we can start generating A/B testing ideas and formulating hypotheses for why they might outperform the current version. Once we’ve compiled a list of ideas, prioritize them based on expected impact and implementation complexity.

- Create different variations: Use A/B testing software, such as Optimizely or Convert, to make the desired modifications to an element of the website or mobile app. This could involve altering button colors, rearranging page elements, hiding navigation items, or even implementing custom changes.

- Run experiment: Start the experiment and wait for visitors to participate. At this stage, visitors to the website or app are randomly assigned to either the control or the variation. Their interactions with each version are measured, counted, and compared against the baseline to assess how each performs.

- Analyze results: After the experiment concludes, the next step is to analyze the results. A/B testing software will provide data from the experiment, highlighting the performance differences between the two page versions and indicating whether these differences are statistically significant. Achieving statistical significance is crucial for having confidence in the test’s outcome.

- Implement the winner: If version B is deemed the winner, implement the changes from version B in the website or app. Make sure to track the impact of these changes over time to ensure they continue to produce positive results.
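To make the "statistically significant" check in the analysis step concrete, here is a rough sketch of a two-proportion z-test, one common way to compare conversion rates. A/B testing tools may use different or more sophisticated statistics; the function name and the visitor counts below are made up for illustration.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates.

    Assumes both groups are large and the pooled rate is strictly between 0 and 1.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled conversion rate under the null hypothesis "A and B convert equally".
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical results: 200 of 5,000 visitors converted on A, 250 of 5,000 on B.
    z, p = two_proportion_z_test(200, 5000, 250, 5000)
    print(f"z = {z:.2f}, p = {p:.4f}, significant at 5%: {p < 0.05}")
```

A small p-value (conventionally below 0.05) suggests the difference is unlikely to be due to chance alone; with few visitors, even a visible difference in rates often fails this check.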

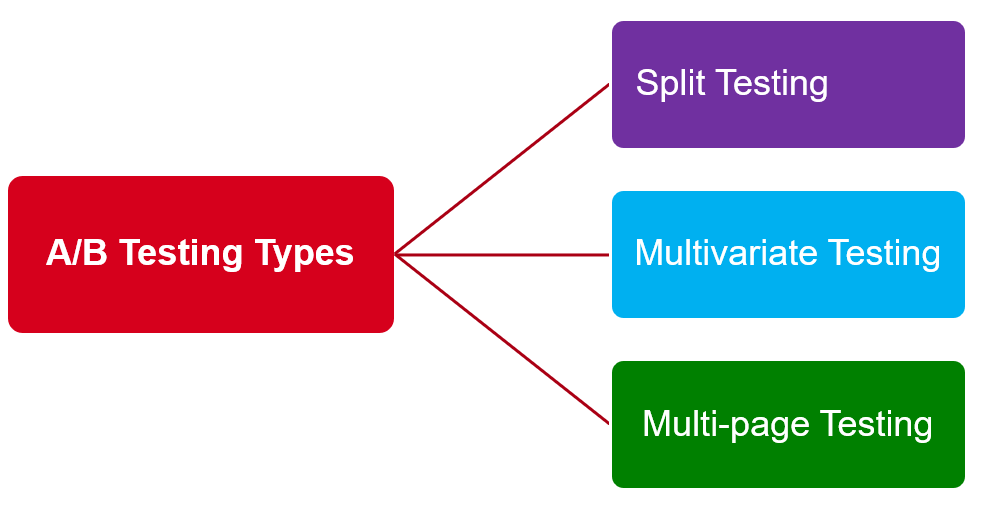

Types of A/B Testing

- Split Testing: Compares two (sometimes more) versions of the same web page, the original and a variation with a single change or a few distinct changes, to determine which one performs better based on user behavior.

- Multivariate Testing: Tests variations of multiple variables on a page simultaneously to determine which combination performs best.

- Multi-page Testing: Tests changes to particular elements across multiple web pages.
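The difference between a split test and a multivariate test shows up in the number of variants. The sketch below enumerates every combination for a hypothetical page with three variables; the element names and values are invented for illustration.

```python
from itertools import product

# Hypothetical page elements and their candidate values.
variables = {
    "headline": ["Save time", "Save money"],
    "cta_color": ["green", "orange"],
    "hero_image": ["team", "product", "none"],
}


def multivariate_variants(variables: dict[str, list[str]]) -> list[dict[str, str]]:
    """Enumerate every combination of values (a full factorial design)."""
    names = list(variables)
    return [dict(zip(names, combo)) for combo in product(*variables.values())]


variants = multivariate_variants(variables)
print(len(variants))  # 2 * 2 * 3 = 12 combinations
```

Because traffic is divided across all combinations, a multivariate test generally needs far more visitors than a two-version split test to reach a conclusion.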

Where and What to A/B Test?

Where?

A/B testing can be applied to the following:

- All website experiences

- Paid Advertising

- Mobile apps

- Marketing campaigns

What?

Here is a compilation of common elements suitable for A/B testing:

- Headlines and subheadings

- Navigation and menus

- Forms

- CTA buttons

- Images

- Page design and layout

- Popups

- Social proof (case studies, awards, expert views…)

Benefits & Challenges of A/B Testing

Benefits

- Increase the overall usability, revenue, conversion rates.

- Create effective marketing strategies.

- Measure the efficacy of a solution.

- Provide needed feedback for developers/testers/other stakeholders.

Challenges

- Not suitable for a small number of users, since results are unlikely to reach statistical significance

- Potential negative impact on SEO

- Mostly suited to specific design problems

- Costly in resources and time-consuming

2. Feature Flags

- Feature flags are a way to conditionally turn certain sections of code on or off.

- They extend Continuous Delivery into Continuous Deployment: changes go into production behind a flag and are turned on later in a controlled way.

- They allow new features to be tested in production and rolled back with a ‘kill switch’ if necessary. Because it’s difficult to fully imitate the production environment in staging, feature flags let us test new components in the real world while reducing risk.
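As a minimal sketch of the idea, the code below gates a new code path behind a flag and uses the same flag as a kill switch. The `FeatureFlags` class, the flag name `new_discount_engine`, and the 10% discount are all hypothetical; a real system would typically read flags from a configuration service or a flag management product rather than an in-memory dictionary.

```python
from typing import Optional


class FeatureFlags:
    """In-memory flag store, used here only for illustration."""

    def __init__(self, initial: Optional[dict] = None) -> None:
        self._flags = dict(initial or {})

    def is_enabled(self, name: str) -> bool:
        # Unknown flags default to off so unfinished code stays hidden.
        return self._flags.get(name, False)

    def set(self, name: str, enabled: bool) -> None:
        self._flags[name] = enabled


def checkout_total(prices: list, flags: FeatureFlags) -> float:
    total = sum(prices)
    if flags.is_enabled("new_discount_engine"):
        # New code path: deployed to production but gated by the flag.
        return round(total * 0.9, 2)
    # Existing behavior remains the default.
    return round(total, 2)


if __name__ == "__main__":
    flags = FeatureFlags({"new_discount_engine": True})
    print(checkout_total([10.0, 20.0], flags))   # new path
    flags.set("new_discount_engine", False)      # kill switch: no redeploy needed
    print(checkout_total([10.0, 20.0], flags))   # old path
```

The point of the design is that turning the feature off is a configuration change, not a deployment.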

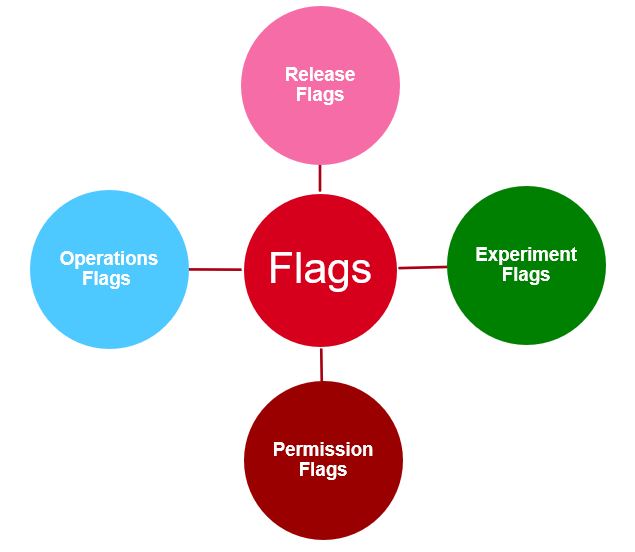

Types of Feature Flags

- Release flags – During product development, we employ these flags to conceal particular functionalities when deploying software. These release flags also facilitate Continuous Delivery and trunk-based development, allowing us to easily hide any unprepared features behind a new flag.

- Experiment flags – We use these flags to gain insights into the effects of a change. This can include technical experiments, such as assessing how a new UI affects server load, or user experiments, like evaluating the impact of a new button on conversion rates.

- Operation flags – An operational feature flag oversees and regulates a system’s behavior. Two common forms are the kill switch and the circuit breaker. Both serve the purpose of deactivating a poorly performing feature, but they operate differently. A kill switch is typically a short-term flag used during a release to disable a feature manually in case of issues, whereas a circuit breaker is usually longer-lived and trips automatically when a monitored metric crosses a threshold.

- Permission flags – Used to control user access to specific features, making them ideal for restricting certain functionalities to subscribers, beta testers, or internal users.
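A permission flag can be pictured as a flag whose answer depends on who is asking. The sketch below is one possible shape, assuming users carry a set of group names; the `User` and `PermissionFlag` classes and the group names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    user_id: str
    groups: set = field(default_factory=set)


@dataclass
class PermissionFlag:
    name: str
    allowed_groups: set

    def is_enabled_for(self, user: User) -> bool:
        # Enabled when the user belongs to at least one allowed group.
        return bool(self.allowed_groups & user.groups)


if __name__ == "__main__":
    export_flag = PermissionFlag("bulk_export", {"beta_testers", "internal"})
    print(export_flag.is_enabled_for(User("u1", {"beta_testers"})))  # True
    print(export_flag.is_enabled_for(User("u2", {"free_tier"})))     # False
```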

Benefits & Challenges of Feature Flags

Benefits

- Allow the testing of new features in production that can be rolled back.

- Reduce risk, control testing, separate feature delivery.

- Collect user feedback and make changes when needed.

- Ability to carry out Canary Deployment and A/B Testing

Challenges

- Managing feature flag debt: If feature flags are not properly managed and cleaned up, they can accumulate as technical debt over time. Unused flags can clutter the codebase and make it harder to maintain.

- Require robust monitoring and metrics: Effectively monitoring and measuring the impact of various feature configurations demands robust tracking and metrics. Understanding the effects of different flags on user behavior and performance can be a complex undertaking.

- Complex testing: Testing becomes more intricate with various combinations of enabled and disabled features. Ensuring comprehensive testing of all potential feature configurations can be quite challenging.
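One way to keep flag debt visible is to record when each flag was created and periodically report the ones that have outlived their purpose. The sketch below assumes a simple registry of flag records; the `FlagRecord` fields, the 90-day limit, and the flag names are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class FlagRecord:
    name: str
    created: date
    permanent: bool = False  # e.g. long-lived permission or operation flags


def stale_flags(records: list, today: date, max_age_days: int = 90) -> list:
    """Return names of non-permanent flags older than max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [r.name for r in records if not r.permanent and r.created < cutoff]


if __name__ == "__main__":
    registry = [
        FlagRecord("new_checkout", date(2024, 1, 10)),
        FlagRecord("beta_access", date(2023, 6, 1), permanent=True),
        FlagRecord("dark_mode", date(2024, 5, 20)),
    ]
    print(stale_flags(registry, today=date(2024, 6, 1)))  # ['new_checkout']
```

Running a report like this in CI or on a schedule gives the team a regular prompt to remove flags whose rollout has finished.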

3. Canary Deployment (Canary Release)

- A deployment that involves rolling out a new release to a small subset of users and monitoring their performance and feedback. If the result is positive, the new release is gradually deployed to the rest.

- Requires running two versions of the application simultaneously. The old version is labeled “stable” and the newer one “canary.”

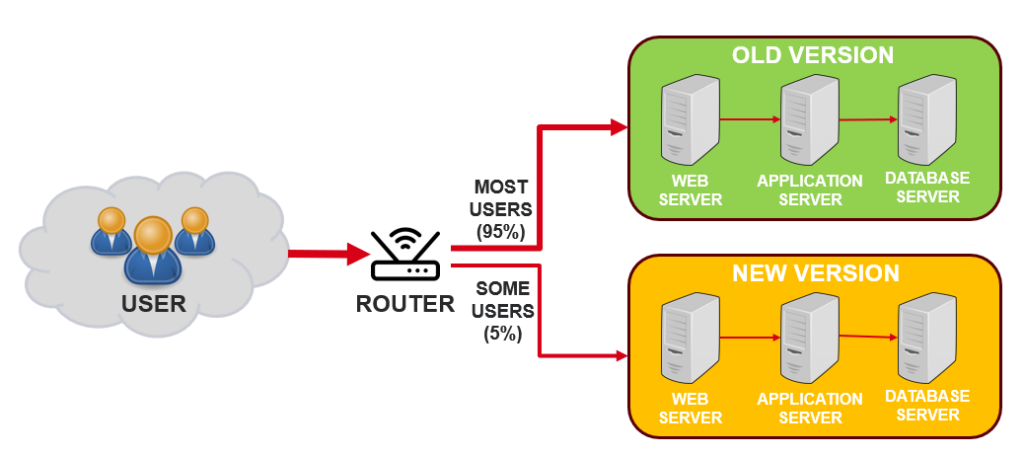

- It is a technique to reduce the risk of introducing a new software version in production by slowly rolling out the change to a small subset of users before rolling it out to the entire infrastructure and making it available to everybody.

Two ways to deploy

- Rolling Deployments: The new version of the application is gradually deployed to a subset of servers or instances while maintaining the old version on others. The rollout progresses incrementally until all instances have been updated.

- Side-by-Side Deployments: Deploy two versions (old and new) of the application simultaneously and direct user traffic to either version based on predefined criteria (the canary group).
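The gradual rollout can be sketched as weighted routing plus a promotion rule. This is a simplified model, assuming the only health signal is an error rate per version; the function names, the 25% step, and the 1% tolerance are hypothetical, and in practice this logic usually lives in a load balancer or deployment tool rather than in application code.

```python
import random


def pick_version(canary_weight: float, rng: random.Random) -> str:
    """Route one request: 'canary' with probability canary_weight, else 'stable'."""
    return "canary" if rng.random() < canary_weight else "stable"


def next_canary_weight(current: float, canary_error_rate: float,
                       stable_error_rate: float, tolerance: float = 0.01,
                       step: float = 0.25) -> float:
    """Grow the canary share while it stays healthy; drop to 0 (rollback) otherwise."""
    if canary_error_rate > stable_error_rate + tolerance:
        return 0.0
    return min(1.0, current + step)


if __name__ == "__main__":
    rng = random.Random(42)
    routed = [pick_version(0.1, rng) for _ in range(10_000)]
    print("canary share:", routed.count("canary") / len(routed))
    print(next_canary_weight(0.25, canary_error_rate=0.01, stable_error_rate=0.01))  # promote
    print(next_canary_weight(0.50, canary_error_rate=0.10, stable_error_rate=0.01))  # roll back
```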

Benefits & Challenges of Canary Deployment

Benefits

- Easy experimentation with new features

- Zero production downtime, faster rollback

- Ability to carry out A/B testing.

- Early feedback and bug identification

- Reduce the risk of introducing major bugs that affect all users

Challenges

- Require more resources.

- Limited test coverage

- Complex deployment and maintenance

4. Blue/Green Deployment

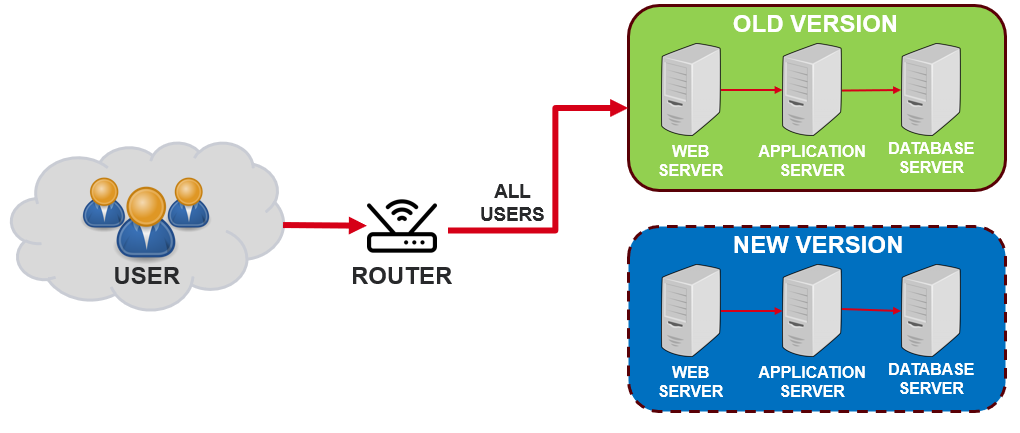

A deployment that involves running two identical environments in parallel, called blue and green, and switching traffic between them when a new release is ready.
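The switch can be modeled as a single pointer that decides which environment receives traffic. The sketch below is a toy model with a hypothetical `BlueGreenRouter` class; real setups typically perform the switch at the load balancer or DNS level.

```python
class BlueGreenRouter:
    """Toy model: two environments, one of which is live at any time."""

    def __init__(self, blue_version: str, green_version: str, live: str = "blue") -> None:
        self.versions = {"blue": blue_version, "green": green_version}
        self.live = live

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    def deploy_to_idle(self, version: str) -> None:
        # The new release goes to the environment that receives no user traffic.
        self.versions[self.idle] = version

    def switch(self) -> None:
        # Cut all traffic over at once; calling it again acts as the rollback.
        self.live = self.idle

    def serving(self) -> str:
        return self.versions[self.live]


if __name__ == "__main__":
    router = BlueGreenRouter("v1", "v1")
    router.deploy_to_idle("v2")   # users still see v1
    router.switch()               # users now see v2
    print(router.live, router.serving())
    router.switch()               # rollback to v1
    print(router.live, router.serving())
```

Unlike a canary release, all users move to the new version at the same moment, which is why the idle environment should be verified before the switch.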

Benefits & Challenges of Blue/Green Deployment

Benefits

- Simple, fast, easy to understand and implement.

- Reduce the risk of errors and bugs affecting the users

- Quick rollback to a stable version in case of issues.

- Zero production downtime

Challenges

- Require a large infrastructure budget for two distinct hosting environments.

- Requires resources for maintaining two environments.

- More complex setup due to separate environments.

Summary

Types of Production Tests

We still apply almost all of the testing types used in other environments. However, in production testing we can focus on certain testing types with specific tools.

The figure below shows the goals of various types of production tests:

Recommended Tools

Next, let’s look at recommended tools for production testing.

Load Test

Although high user traffic can be mimicked in a lower environment, volume testing in a production environment yields the most accurate findings. It provides data on network load, browser responsiveness, and server performance when serving client requests.

Testing Methods/Tools:

- Apache JMeter: It focuses mainly on web services (database protocols are supported via JDBC). It is a long-established, if somewhat cumbersome, Java tool.

- BlazeMeter: It is a proprietary tool. It runs JMeter, Gatling, Locust, Selenium (and more) open-source scripts in the cloud to enable simulation of more users from more locations.
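To show what such tools measure, here is a very small load generator built only on the Python standard library. It is a sketch, not a substitute for JMeter or BlazeMeter: `fake_request` is a stand-in that merely sleeps, and `run_load` is a hypothetical helper. Replace the stand-in with a real client call, and take care about how much synthetic load you send to a live system.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def fake_request() -> float:
    """Stand-in for one HTTP call; returns the observed latency in seconds."""
    start = time.perf_counter()
    time.sleep(0.01)  # replace with a real client call against your endpoint
    return time.perf_counter() - start


def run_load(request_fn, total_requests: int, concurrency: int) -> dict:
    """Fire total_requests calls using `concurrency` worker threads (needs >= 2 requests)."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(lambda _: request_fn(), range(total_requests)))
    cuts = statistics.quantiles(latencies, n=100)  # 99 percentile cut points
    return {
        "count": len(latencies),
        "p50": cuts[49],
        "p95": cuts[94],
        "max": max(latencies),
    }


if __name__ == "__main__":
    print(run_load(fake_request, total_requests=200, concurrency=20))
```

Percentiles such as p95 are usually more informative than the average, because a handful of slow requests can hide behind a healthy mean.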

Debug and Monitor

Testing in production provides us with real product data that we can then use for debugging. Therefore, we need to monitor and debug the test results in production.

Testing Methods/Tools:

- New Relic: A very strong APM tool, which offers log management, AI ops, monitoring and more.

- Lightrun: It is a powerful production debugger. It enables adding logs, performance metrics, and traces to production and staging in real time, on demand. Lightrun enables developers to securely add instrumentation without having to redeploy or restart.
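The kind of signal these tools watch can be illustrated with a sliding-window error-rate check. This is a simplified sketch, not how New Relic or Lightrun work internally; the `ErrorRateMonitor` class, window size, and threshold are assumptions chosen for the example.

```python
from collections import deque


class ErrorRateMonitor:
    """Track the failure rate over the most recent `window` requests."""

    def __init__(self, window: int = 100, threshold: float = 0.05) -> None:
        self.outcomes = deque(maxlen=window)  # True means the request failed
        self.threshold = threshold

    def record(self, failed: bool) -> None:
        self.outcomes.append(failed)

    @property
    def error_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def should_alert(self) -> bool:
        return self.error_rate > self.threshold


if __name__ == "__main__":
    monitor = ErrorRateMonitor(window=10, threshold=0.2)
    for failed in [False] * 8 + [True] * 3:
        monitor.record(failed)
    print(monitor.error_rate, monitor.should_alert())
```

A check like this is also a natural trigger for the rollback and kill-switch mechanisms described earlier.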

Chaos Engineering

Chaos engineering is the discipline of experimenting on a system in order to build confidence in the system’s capability to withstand turbulent conditions in production. Below are some recommended tools for this part.

- Chaos Monkey: A tool developed by Netflix and released as open source. Its purpose is to randomly terminate instances within your production system. This deliberate disruption is designed to test and ensure the resilience of your application to unexpected failures.

- Gremlin: A tool for chaos engineering, enabling DevOps to simulate scenarios like resource unavailability, state changes, and network failures to assess application reactions.
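The core of a chaos experiment, remove something at random and check that the system still serves requests, can be sketched in a few lines. This toy model uses a plain list of instance names and a round-robin `serve` function; both are hypothetical and do not reflect how Chaos Monkey or Gremlin are implemented.

```python
import random


def terminate_random_instance(instances: list, rng: random.Random) -> str:
    """Remove one instance at random and return its name."""
    victim = rng.choice(instances)
    instances.remove(victim)
    return victim


def serve(instances: list, request_id: int) -> str:
    """Round-robin a request over the instances that are still healthy."""
    if not instances:
        raise RuntimeError("no healthy instances left")
    return instances[request_id % len(instances)]


if __name__ == "__main__":
    pool = ["web-1", "web-2", "web-3"]
    victim = terminate_random_instance(pool, random.Random(7))
    print("terminated:", victim)
    # The experiment passes if every request is still served by a survivor.
    handlers = {serve(pool, i) for i in range(100)}
    print("still serving:", sorted(handlers))
```

In a real experiment the hypothesis ("users see no errors when one instance dies") is stated up front, and the blast radius is kept small.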

Deploy in Production

- AWS Elastic Beanstalk: AWS’s PaaS offering, which supports Blue/Green deployment.

- LaunchDarkly: A feature flagging solution for canary releases that enables gradual capacity testing of new features and safe rollback if issues are found.

- Convert: Convert is a high-powered testing and personalization tool best suited for CRO experts and agencies. It is one of the best A/B testing platforms, which provides a flicker-free experience to the visitors during the experiment.

Best Practices

- Create Test Data: When testing in production, the information used should represent the software’s actual condition. Hence, we may need to create sample data or use a suitable replacement for the test cases.

- Name Test Data Realistically: Good test data names can improve organization and communication in production testing.

- Avoid Using Real User Data: Real user data reflects the production environment most faithfully, but using it in tests poses risks such as security breaches and violations of data privacy laws, including the General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA).

- Have a rollback plan: Always have a rollback plan in case something goes wrong during production testing. This will help us quickly revert to a previous version of the software if necessary.

- Consider checking data/integration with third parties/external systems: When dealing with systems or applications that integrate with other systems or third parties, it is essential to perform comprehensive checks to ensure that there are no adverse impacts on them.

- Share the test plan and schedule with clients/stakeholders: Before initiating production testing, it is important to share the test plan and schedule with clients and stakeholders. This allows us to effectively manage risks and mitigate potential impacts.

- Use real devices and browsers: Virtual devices such as emulators and simulators can’t replicate real user conditions, so it is hard to assess the software’s functionality without putting it in a real-world situation.

- Automate testing: There are some automated tests that we can perform in a production environment – API, Smoke testing, Regression testing, Integration testing, Performance testing, Security testing, and Acceptance testing.
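The advice on test data can be put into practice with synthetic records that are clearly marked as such. The sketch below assumes a naming convention with the prefix `qa-prodtest`; the prefix, field names, and helper functions are hypothetical. The reserved `example.com` domain is used so that no real mailbox ever receives test email.

```python
import uuid

TEST_PREFIX = "qa-prodtest"  # hypothetical naming convention for synthetic data


def make_test_user(scenario: str) -> dict:
    """Create a synthetic user whose name states what it is for."""
    token = uuid.uuid4().hex[:8]  # keeps parallel test runs from colliding
    return {
        "username": f"{TEST_PREFIX}-{scenario}-{token}",
        "email": f"{TEST_PREFIX}+{scenario}-{token}@example.com",
    }


def is_test_record(record: dict) -> bool:
    """Identify synthetic records so they can be filtered out or cleaned up."""
    return record.get("username", "").startswith(TEST_PREFIX + "-")


if __name__ == "__main__":
    user = make_test_user("checkout")
    print(user["username"], is_test_record(user))
    print(is_test_record({"username": "alice"}))
```

A recognizable prefix makes it easy to exclude test records from analytics and to delete them once the test run is finished.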

Overall, production testing is an essential part of the delivery process that can help ensure quality, reduce costs, and improve customer satisfaction.
