Artificial Intelligence is shifting from simple predictive models toward more autonomous systems, often referred to as Agentic AI, capable of reasoning and acting independently. At the same time, serverless computing has become a cornerstone of cloud-native development due to its scalability and efficiency. Bringing these two paradigms together creates powerful opportunities for developers. In particular, building Serverless Agentic AI with Node.js on Azure Functions provides a highly effective way to deliver scalable, event-driven, and production-ready AI applications.

This article explores the foundations of Serverless Agentic AI with Node.js, focusing on practical deployment strategies using Azure Functions.

Understanding Agentic AI in a Serverless Context

Agentic AI represents a new class of artificial intelligence systems capable of reasoning, planning, and taking autonomous actions without explicit step-by-step instructions. Unlike traditional machine learning models that simply return predictions, Agentic AI agents can trigger workflows, interact with APIs, or manage state transitions.

When placed within a serverless architecture, these agents gain:

- Elastic scalability: Functions scale automatically based on workload.

- Event-driven responsiveness: Agents can react to triggers like HTTP requests, message queues, or IoT events.

- Operational efficiency: No servers to manage and, on consumption-based plans, no charges for idle capacity.

Example use cases include intelligent chatbots, AI-driven monitoring systems, and real-time decision engines for IoT.

Why Node.js for Serverless Agentic AI

Node.js is particularly well-suited for serverless workloads due to its lightweight, event-driven runtime. Some advantages include:

- Fast startup times: A lightweight runtime helps keep cold-start latency low.

- Event-driven design: Naturally aligns with serverless triggers and bindings.

- Rich ecosystem: The npm registry offers thousands of libraries for building AI agents, calling APIs, and integrating services.

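Node.js handles I/O with a non-blocking event loop, so a single process can keep many network calls in flight without managing threads. Python (with asyncio) and Java can reach similar concurrency, but in Node.js this is the default programming model, which suits AI agents that spend most of their time waiting on model APIs, tools, or databases.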
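To make the I/O-concurrency point concrete, here is a minimal, dependency-free sketch. The `fakeToolCall` helper is hypothetical and simulates network latency with `setTimeout`; in a real agent it would be an HTTP request, a model call, or a database query. The sequential run should take roughly the sum of the latencies, the concurrent run roughly the slowest one (exact timings vary).

```javascript
// Hypothetical stand-in for an I/O-bound tool call (HTTP request, model call, DB query).
function fakeToolCall(name, latencyMs) {
  return new Promise((resolve) => setTimeout(() => resolve(`${name}: done`), latencyMs));
}

// Sequential: each call waits for the previous one, so latencies add up.
async function runSequential(calls) {
  const results = [];
  for (const [name, latencyMs] of calls) {
    results.push(await fakeToolCall(name, latencyMs));
  }
  return results;
}

// Concurrent: all calls are in flight at once on the single event loop;
// Promise.all resolves when the slowest finishes and keeps the input order.
function runConcurrent(calls) {
  return Promise.all(calls.map(([name, latencyMs]) => fakeToolCall(name, latencyMs)));
}

async function main() {
  const calls = [["search", 300], ["weather", 200], ["database", 100]];

  let start = Date.now();
  await runSequential(calls);
  console.log(`sequential: ${Date.now() - start} ms`);

  start = Date.now();
  console.log(await runConcurrent(calls));
  console.log(`concurrent: ${Date.now() - start} ms`);
}

main();
```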

Popular libraries supporting AI development in Node.js include:

- OpenAI Node.js SDK (for large language models).

- LangChain.js (for orchestrating agent workflows).

- Azure SDK for JavaScript (for cloud services integration).

Deploying Serverless Agentic AI on Azure Functions

Azure Functions Overview for AI Workloads

Azure Functions is Microsoft’s event-driven serverless compute platform. It supports Node.js and integrates seamlessly with other Azure services, such as:

- Azure OpenAI Service for language models.

- Azure AI services (formerly Azure Cognitive Services) for speech, vision, and language tasks.

- Azure Event Hubs and Service Bus for real-time data ingestion and messaging.

This combination allows developers to build intelligent, scalable AI pipelines without provisioning dedicated servers.

Setting Up a Node.js Agent on Azure Functions

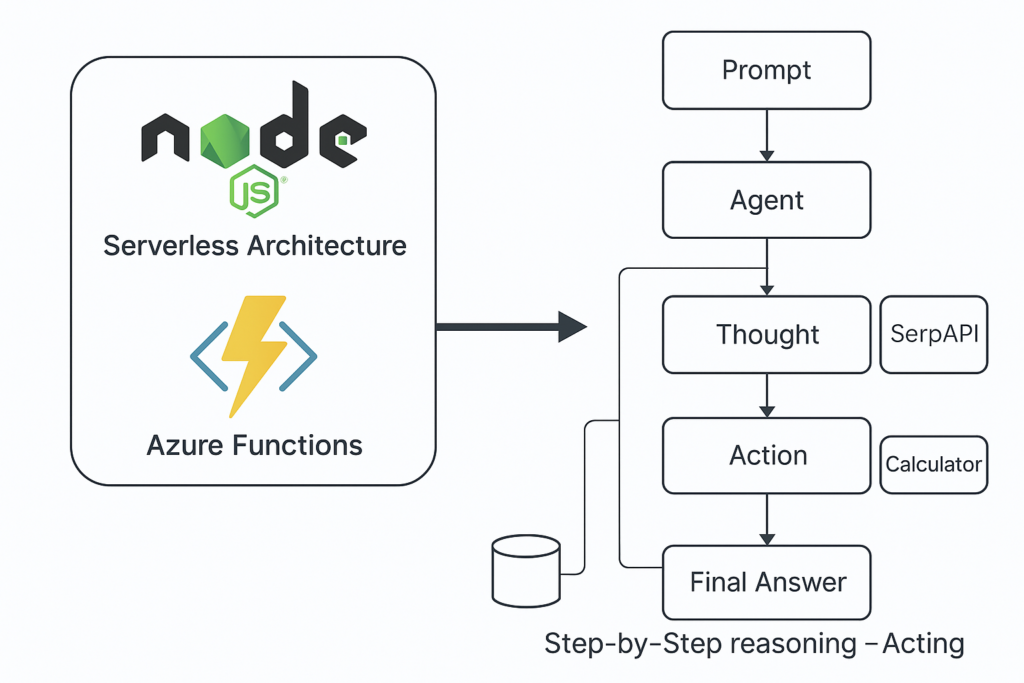

Unlike simple LLM queries, Agentic AI requires agents that can plan, reason, and act through tools. Using LangChain.js, we can deploy an AI agent on Azure Functions that automatically decides whether to search the web or perform calculations to complete a task.

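Below is a demo of a ReAct (Reasoning + Acting) agent running on Azure Functions with Node.js, written for the Azure Functions v4 programming model. It uses SerpAPI for web search and a Calculator tool for arithmetic, and calls the OpenAI API through ChatOpenAI (for Azure OpenAI Service, @langchain/openai also provides AzureChatOpenAI). It expects OPENAI_API_KEY and SERPAPI_API_KEY to be set as application settings: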

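```javascript
// index.mjs (ES module: uses import statements and top-level await)
import { app } from "@azure/functions";
import { ChatOpenAI } from "@langchain/openai";
import { AgentExecutor, createReactAgent } from "langchain/agents";
import { pull } from "langchain/hub";
import { SerpAPI } from "@langchain/community/tools/serpapi";
import { Calculator } from "@langchain/community/tools/calculator";

// Module-scope setup runs once per worker instance and is reused by warm invocations.
const model = new ChatOpenAI({
  model: "gpt-4o-mini",
  temperature: 0, // deterministic tool selection
  apiKey: process.env.OPENAI_API_KEY
});

const tools = [
  new SerpAPI(), // reads SERPAPI_API_KEY from the environment
  new Calculator()
];

// createReactAgent requires a ReAct-style prompt; this fetches the standard
// "hwchase17/react" prompt from LangChain Hub at startup (needs network access).
const prompt = await pull("hwchase17/react");
const agent = await createReactAgent({ llm: model, tools, prompt });

const executor = AgentExecutor.fromAgentAndTools({
  agent,
  tools,
  returnIntermediateSteps: true, // keep the Thought/Action/Observation trace
  verbose: true
});

app.http("agentic-ai", {
  methods: ["POST"],
  authLevel: "function", // callers must supply a function key
  route: "agentic-ai",
  handler: async (request, context) => {
    // Fall back to an empty object when the body is missing or not valid JSON.
    const body = (await request.json().catch(() => ({}))) || {};
    const input = body.prompt || "Find the current population of France and add 1,000,000.";

    try {
      const result = await executor.invoke({ input });

      // Turn each intermediate step into a readable record of the agent's reasoning.
      const flow = (result.intermediateSteps || []).map((s, i) => ({
        step: i + 1,
        thought: s.action?.log ?? "(hidden by model)",
        action: s.action?.tool ?? "(none)",
        input: s.action?.toolInput,
        observation:
          typeof s.observation === "string"
            ? s.observation
            : JSON.stringify(s.observation)
      }));

      return { status: 200, jsonBody: { input, output: result.output, flow } };
    } catch (err) {
      // Log the failure (visible in Application Insights when enabled) and return a structured error.
      context.error("Agent execution failed:", err);
      return { status: 500, jsonBody: { error: "Agent execution failed." } };
    }
  }
});
```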

Example Step-by-Step Flow

When invoked with the prompt:

{ "prompt": "Find the current population of France and add 1,000,000." }

The agent may produce a reasoning trace like this:

- Thought: I need the latest population of France; I should search the web.
  Action: search
  Input: "current population of France"
  Observation: Top results show ~67.8M.

- Thought: Now I must add 1,000,000 to that value.
  Action: calculator
  Input: 67800000 + 1000000
  Observation: 68800000

- Thought: I can now finalize the answer.
  Final Answer: ≈ 68.8 million (based on current estimates plus 1,000,000).

Why This Matters

This approach goes beyond static AI responses. The agent reasons step by step, selects an appropriate tool at each step, and feeds each observation back into its next reasoning step before returning an answer. As a result, developers can build autonomous, event-driven AI agents that fit naturally within a serverless architecture.
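The loop that an agent executor runs can be illustrated without any framework. The sketch below is a simplified illustration of the ReAct cycle (reason, act, observe), not LangChain's actual implementation: `scriptedModel` is a hypothetical stand-in that replays the decisions from the example trace instead of calling an LLM, and the `search` and `calculator` tools are stubs. The `maxSteps` guard keeps a confused model from looping forever.

```javascript
// Hypothetical stub tools: each takes a string input and resolves to a string observation.
const tools = {
  // Stub search: returns a fixed, illustrative snippet instead of calling a search API.
  search: async (_query) => "Top results show ~67.8M.",
  // Minimal calculator that only understands "a + b" with plain integers.
  calculator: async (expr) => {
    const [a, b] = expr.split("+").map((part) => Number(part.trim()));
    return String(a + b);
  }
};

// Hypothetical scripted "model": replays fixed decisions based on how many steps
// have run. A real agent would send the input and trace to an LLM and parse its reply.
function scriptedModel(_input, steps) {
  if (steps.length === 0) {
    return { thought: "I need the population of France.", tool: "search", toolInput: "current population of France" };
  }
  if (steps.length === 1) {
    return { thought: "Now add 1,000,000.", tool: "calculator", toolInput: "67800000 + 1000000" };
  }
  const total = Number(steps[1].observation);
  return { thought: "I can finalize the answer.", finalAnswer: `≈ ${total / 1e6} million` };
}

// The ReAct loop: reason (ask the model), act (run the chosen tool), observe
// (append the result to the trace), and repeat until a final answer or the step limit.
async function runAgent(input, model, toolset, maxSteps = 5) {
  const steps = [];
  for (let i = 0; i < maxSteps; i++) {
    const decision = model(input, steps);
    if (decision.finalAnswer !== undefined) {
      return { output: decision.finalAnswer, steps };
    }
    const tool = toolset[decision.tool];
    const observation = tool
      ? await tool(decision.toolInput)
      : `Unknown tool: ${decision.tool}`; // let the model recover from a bad tool name
    steps.push({ ...decision, observation });
  }
  return { output: "Stopped: step limit reached.", steps };
}

runAgent("Find the current population of France and add 1,000,000.", scriptedModel, tools)
  .then((result) => console.log(result.output)); // prints "≈ 68.8 million"
```

Replacing `scriptedModel` with a real LLM call is exactly where a framework such as LangChain.js adds value: prompt formatting, output parsing, and error recovery.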

Steps to Deploy:

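First, install Azure Functions Core Tools, then initialize a Node.js project and add an HTTP-triggered function:

```bash
func init agentic-ai-func --worker-runtime node --language javascript
cd agentic-ai-func
func new --name AgenticAI --template "HTTP trigger"
```

Second, install the packages the agent code imports (the Calculator tool also depends on expr-eval):

```bash
npm install @azure/functions langchain @langchain/core @langchain/openai @langchain/community expr-eval
```

Save the agent code as an ES module (for example, index.mjs, since it uses import statements and top-level await) and point the "main" field in package.json at that file so the Functions host loads it. For local testing with func start, add OPENAI_API_KEY and SERPAPI_API_KEY to the Values section of local.settings.json.

Finally, deploy to Azure, and set the same two keys as application settings on the function app:

```bash
func azure functionapp publish <your-function-app-name>
```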
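Once deployed, the function is reachable over HTTPS. The sketch below shows one way a Node.js client could call it; `buildAgentRequest` and `askAgent` are hypothetical helpers. It assumes the default `api` route prefix and the classic `<app>.azurewebsites.net` hostname (check the Azure portal for your app's actual URL), and it sends the function key that `authLevel: "function"` requires in the `x-functions-key` header.

```javascript
// Hypothetical helper: build the fetch() arguments for the deployed "agentic-ai" function.
// Assumes the default "api" route prefix and the classic <app>.azurewebsites.net hostname.
function buildAgentRequest(appName, functionKey, prompt) {
  const url = `https://${appName}.azurewebsites.net/api/agentic-ai`;
  const init = {
    method: "POST",
    headers: {
      "Content-Type": "application/json",
      "x-functions-key": functionKey // required because authLevel is "function"
    },
    body: JSON.stringify({ prompt })
  };
  return { url, init };
}

// Hypothetical client: send a prompt and return the parsed { input, output, flow } body.
// Uses the global fetch available in Node.js 18 and later.
async function askAgent(appName, functionKey, prompt) {
  const { url, init } = buildAgentRequest(appName, functionKey, prompt);
  const response = await fetch(url, init);
  if (!response.ok) {
    throw new Error(`Agent call failed with HTTP ${response.status}`);
  }
  return response.json();
}
```

Keeping request construction separate from the network call makes the URL and headers easy to unit-test without a deployed app.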

Managing Scalability and Costs

When running Serverless Agentic AI with Node.js, scalability and cost management are key considerations. Azure Functions provides automatic scaling and several hosting plans, which makes it easier to balance performance against cost.

- On the Consumption Plan, functions scale out automatically under heavy load and scale in to zero instances when idle.

- The Consumption Plan bills per execution and for the memory and time each execution uses, while the Premium Plan keeps pre-warmed instances ready, avoiding cold starts at the cost of paying for always-ready capacity.

- Caching, batching, and queue-based processing can lower costs and improve performance.

- Durable Functions checkpoint the state of long-running, multi-step agent workflows, which also helps work around the 230-second response limit for HTTP-triggered functions.
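As an illustration of the caching point, the sketch below keeps results in module scope so warm invocations on the same instance can reuse them instead of repeating a paid model or search call. `cachedCall` is a hypothetical helper; the cache disappears on cold start and is not shared across scaled-out instances, so a shared store such as Azure Cache for Redis would be needed for that. The injectable `now` clock exists only to make expiry testable.

```javascript
// Module-scope cache: survives between invocations on the same warm instance,
// but is lost on cold start and is not shared between scaled-out instances.
const cache = new Map();

// Hypothetical helper: return a fresh cached value for `key`, or call `loader`
// and store its result. `now` is injectable so expiry can be tested.
async function cachedCall(key, loader, ttlMs = 60_000, now = Date.now) {
  const hit = cache.get(key);
  if (hit && now() - hit.storedAt < ttlMs) {
    return hit.value; // cache hit: skip the expensive model or tool call
  }
  const value = await loader();
  cache.set(key, { value, storedAt: now() });
  return value;
}

// Example: identical search queries within 60 s reuse the first result.
async function demo() {
  let apiCalls = 0;
  const search = async (q) => {
    apiCalls += 1; // stands in for a paid external API request
    return `results for ${q}`;
  };
  await cachedCall("search:france", () => search("france"));
  await cachedCall("search:france", () => search("france"));
  console.log(`external calls made: ${apiCalls}`); // prints "external calls made: 1"
}

demo();
```

Two concurrent misses for the same key both call `loader`; caching the pending promise instead of the resolved value would deduplicate them.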

Best Practices for Serverless Agentic AI with Node.js

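Building reliable AI agents requires more than working code. The following practices help keep applications secure, efficient, and maintainable over time.

- Match triggers to the workload: HTTP triggers for interactive requests, queue or Service Bus triggers for background tasks, and timer triggers for scheduled jobs.

- Apply retry policies and circuit breakers for resilient API interactions.

- Store API keys such as OPENAI_API_KEY in Azure Key Vault and expose them to the function app through Key Vault references in application settings.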

- Enable Application Insights to monitor and log the behavior of the AI agent.

- Keep code modular and implement structured error handling to ensure a robust system.
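The retry and circuit-breaker practices can be sketched in plain JavaScript. `withRetry` and `CircuitBreaker` are hypothetical helpers, not part of the Azure Functions or LangChain APIs. The sketch shows the mechanics: exponential backoff with full jitter, and a breaker that fails fast for a cooldown period after repeated failures, then lets a single trial call through.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Retry an async operation with exponential backoff and full jitter.
async function withRetry(operation, { retries = 3, baseDelayMs = 200, maxDelayMs = 5_000 } = {}) {
  for (let attempt = 0; ; attempt++) {
    try {
      return await operation();
    } catch (err) {
      if (attempt >= retries) throw err; // give up after the last retry
      const cap = Math.min(maxDelayMs, baseDelayMs * 2 ** attempt);
      await sleep(Math.random() * cap); // jitter spreads out retries from many instances
    }
  }
}

// Minimal circuit breaker: after `threshold` consecutive failures, reject calls
// immediately for `cooldownMs`, then allow a trial call (half-open state).
class CircuitBreaker {
  constructor({ threshold = 5, cooldownMs = 30_000, now = Date.now } = {}) {
    this.threshold = threshold;
    this.cooldownMs = cooldownMs;
    this.now = now;
    this.failures = 0;
    this.openedAt = null;
  }

  async call(operation) {
    if (this.openedAt !== null && this.now() - this.openedAt < this.cooldownMs) {
      throw new Error("Circuit open: skipping call");
    }
    try {
      const result = await operation();
      this.failures = 0;
      this.openedAt = null; // success closes the circuit
      return result;
    } catch (err) {
      this.failures += 1;
      if (this.failures >= this.threshold) this.openedAt = this.now(); // open or re-open
      throw err;
    }
  }
}
```

Production code would usually retry only transient failures (timeouts, HTTP 429 or 5xx) and wrap the retried call inside the breaker, for example `breaker.call(() => withRetry(callModel))` with a hypothetical `callModel`.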

Real-World Use Cases

Serverless Agentic AI with Node.js is not just theoretical; the pattern maps onto several practical scenarios:

- Chatbots that scale instantly with customer requests.

- IoT automation agents that process sensor data and trigger alerts.

- Real-time analytics in finance or operations that detect anomalies.

- Data pipelines that enrich information dynamically with AI reasoning.

Challenges and Future of Serverless Agentic AI

Despite its advantages, this approach has limitations. Ongoing research and platform improvements are addressing some of them.

- Cold starts can slow down first-time executions.

- Latency increases when agents rely on external APIs.

- Functions are stateless between invocations, so agent memory and conversation history must live in external storage such as a database, or be managed with Durable Functions.

- Future trends point toward hybrid solutions that combine edge and cloud capabilities.
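For cold starts, one common mitigation is to do expensive setup (building clients, pulling prompts, constructing the agent) once per instance and reuse it across warm invocations. The hypothetical `lazyOnce` helper below does that lazily and shares one in-flight promise among concurrent first requests; if setup fails, the failure is not cached and the next invocation retries. It does not remove the cold start itself, which the Premium Plan's pre-warmed instances address.

```javascript
// Hypothetical helper: run an async initializer at most once per worker instance
// (as long as it succeeds) and share the same promise with every caller.
function lazyOnce(init) {
  let promise = null;
  return function get() {
    if (promise === null) {
      promise = init().catch((err) => {
        promise = null; // don't cache failures: the next invocation retries setup
        throw err;
      });
    }
    return promise;
  };
}

// Usage sketch: setup runs on the first request to a new instance,
// and later (warm) requests reuse the same object.
const getClient = lazyOnce(async () => {
  console.log("initializing client (once per instance)");
  return { createdAt: Date.now() }; // stands in for a real SDK client or agent executor
});

async function handleRequest() {
  const client = await getClient();
  return client.createdAt;
}

Promise.all([handleRequest(), handleRequest()]).then(([a, b]) => console.log(a === b)); // prints true
```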

Conclusion

The combination of Serverless Agentic AI with Node.js on Azure Functions represents a significant step forward in building cloud-native intelligence. It bridges the gap between scalable infrastructure and autonomous AI systems, allowing developers to design applications that react to events as they happen.

Node.js offers a lightweight, event-driven runtime that aligns naturally with serverless triggers, so agents benefit from both fast execution and a large ecosystem of open-source tools. The result is solutions that are cost-efficient and adapt to fluctuating workloads.

However, challenges such as cold starts, latency, and state management remain. Careful architectural choices, combined with features such as Durable Functions and caching, are crucial for reliable outcomes.

Ultimately, the synergy between serverless computing, Node.js, and Agentic AI paves the way for intelligent systems that scale with demand while remaining efficient. This evolution points to a future where autonomous agents are deployed across industries, demonstrating the potential of serverless intelligence.
