Understanding RUST Async Primitives Through Real-World Patterns

Most async Rust tutorials show you isolated examples: "Here's how mpsc works," or "This is what broadcast does." But understanding when and why to choose each primitive is much harder when you only see them in isolation.

The real insight comes from seeing how these primitives behave under different conditions—what happens when channels fill up? How do you coordinate shutdown across multiple tasks? When should you use broadcast vs watch vs mpsc?

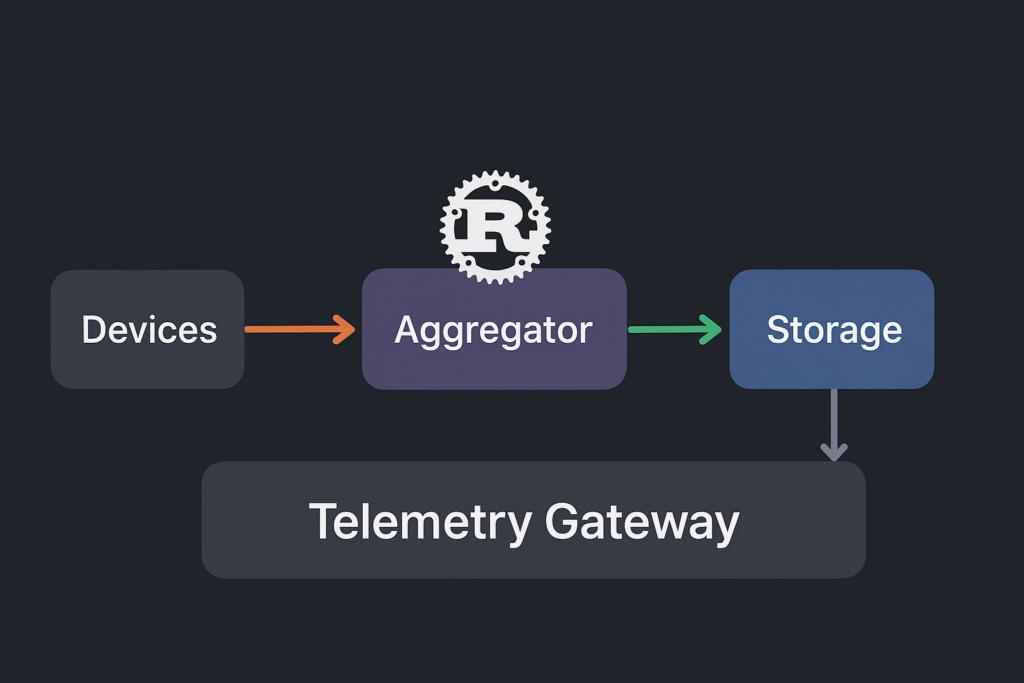

To explore these questions, I've built a "Telemetry Gateway" that demonstrates eight different async primitives working together. This isn't a production system you should copy—it's a learning tool designed to show you the behavioral differences between primitives in realistic scenarios.

Learning Through a Telemetry Gateway

To understand how async primitives behave in realistic scenarios, I've created a telemetry gateway that processes sensor data:

[Devices] --mpsc--> [Aggregator] --broadcast--> [Clients]

| |

| +--mpsc--> [Storage]

|

+--Semaphore (bandwidth limits)

|

+--oneshot--> [Health Checker] (request-response)

This setup lets us explore:

- 7 devices → How does

mpschandle multiple producers? - 1 aggregator → What happens when the consumer can't keep up?

- 1 storage writer → How do bounded channels create backpressure?

- 4 clients → When do you need

broadcastvswatch? - Health checks → How does

oneshothandle request-response patterns?

Each component demonstrates different primitive behaviors under load, helping you understand not just the API, but the underlying mechanics and trade-offs.

Async Primitives in Action

Each primitive in the telemetry gateway addresses specific distributed systems challenges. The selection criteria extend beyond basic functionality to encompass performance characteristics, failure modes, and operational complexity:

| Primitive | Communication Pattern | Message Ordering | System Role | Architectural Rationale |

|---|---|---|---|---|

mpsc |

Many producers → Single consumer | FIFO per producer | Device telemetry aggregation | Zero-copy message passing with bounded memory guarantees |

mpsc |

Single producer → Single consumer | Strict FIFO | Batch storage pipeline | Controlled buffering enables efficient bulk operations |

broadcast |

Single producer → Multiple consumers | All messages, FIFO | Real-time client updates | Independent consumption rates without head-of-line blocking |

watch |

Shared mutable state | Latest value only | System snapshot distribution | Single-value semantics eliminate replay complexity |

oneshot |

Single producer → Single consumer | Single message only | Device health checks | Request-response pattern with automatic cleanup |

Semaphore |

Resource pool management | No message ordering | Concurrency limiting | Models finite external resource constraints |

Notify |

Event coordination | Signal only, no data | Shutdown signaling | Lightweight broadcast notification without data payload |

JoinSet |

Task lifecycle tracking | Completion order varies | Structured concurrency | Deterministic cleanup with failure isolation |

The mpsc Backbone — Controlled Flow, Predictable Pressure

Bounded mpsc channels form the backbone of the telemetry pipeline. Each device task pushes readings into a queue with fixed capacity—a deliberate choice to enforce backpressure at the producer boundary.

When the aggregator momentarily lags (during JSON parsing or network delay), the channel buffer fills. Rather than letting unbounded queues grow and silently exhaust memory, tokio::sync::mpsc naturally pushes pressure upstream: device tasks must await space, slowing their emission rate.

This feedback loop turns a potentially unstable, memory-amplifying system into one that self-throttles under stress. The trade-off is predictable latency for bounded memory—an essential design principle in high-throughput async Rust services.

// Devices → Aggregator: enforces flow control across producers

let (telemetry_tx, telemetry_rx) = mpsc::channel::<TelemetryData>(50);

// Aggregator → Storage: smaller buffer, amplifies batching discipline

let (storage_tx, storage_rx) = mpsc::channel::<TelemetryBatch>(10);

How the 50-Capacity Telemetry Channel Behaves

With 7 devices sending at ~1Hz each, the channel typically holds 7-14 messages under normal load. When the aggregator processes faster than devices produce, the buffer stays nearly empty. But during aggregator delays (JSON parsing, metric calculations), the buffer fills progressively:

- Messages 1-50:

send()completes immediately, devices continue at full rate - Message 51: First device blocks on

send().await, automatically throttling - Cascading slowdown: As buffer stays full, all devices reduce their effective rate

This creates natural load balancing—the system automatically matches input rate to processing capacity.

How the 10-Capacity Storage Channel Behaves

The storage channel's tight capacity forces aggressive batching. The aggregator accumulates telemetry for ~2 seconds, then flushes a batch:

- Batches 1-10: Storage processes normally, channel stays clear

- Batch 11: Aggregator blocks on

try_send(), must wait for storage to catch up - Backpressure cascade: Storage delay → aggregator delay → device throttling

The small buffer prevents the aggregator from building massive batches that would consume memory and increase data-at-risk during failures.

Message Ordering Guarantees

mpsc channels provide FIFO ordering per producer. Device 1's readings always arrive in temporal sequence, but Device 1's message might arrive before or after Device 2's concurrent message. This per-producer ordering preserves individual device trends while allowing natural interleaving.

Sender and Receiver Lifecycle

mpsc channels have specific drop semantics that affect system behavior:

- Sender lifecycle: Each device holds a

Sender<TelemetryData>. When a device task completes (normal shutdown or panic), its sender is automatically dropped - Multiple senders: The aggregator's receiver stays open as long as ANY sender exists. With 7 device senders, the channel closes only when all 7 devices shut down

- Receiver lifecycle: When the aggregator drops its receiver, all device

send()operations immediately returnErr(SendError), causing devices to detect the closed channel and exit - Graceful vs abrupt closure:

- Normal shutdown: Devices receive shutdown signal → drop senders → receiver detects closure via

recv() = None - Aggregator crash: Receiver drops → all device sends fail → devices detect failure and exit

- Normal shutdown: Devices receive shutdown signal → drop senders → receiver detects closure via

This automatic cleanup prevents zombie tasks and ensures consistent shutdown behavior regardless of failure scenarios.

Broadcast Distribution for Client Fan-Out

broadcast channels address the fundamental challenge of one-to-many distribution with heterogeneous consumer performance:

// High-capacity buffer accommodates varying client consumption rates

let (broadcast_tx, _) = broadcast::channel::<SystemSnapshot>(100);

// Each subscription creates independent consumption state

let client_rx = broadcast_tx.subscribe();

How the 100-Message Broadcast Buffer Works

The circular buffer maintains 100 slots for system snapshots. Each client subscription tracks its own read position within this shared buffer:

- Normal operation: 4 clients consume at similar rates, all stay within 5-10 messages of the head

- Slow client: Client 3 falls behind due to network issues, its read position lags by 20 messages

- Buffer wraparound: After 100 new messages, the slowest client's position gets overwritten

- Lag notification: Client 3 receives

RecvError::Lagged(20)on nextrecv()attempt

The large buffer (100 vs 50 for telemetry) accommodates client performance variance while preventing one slow client from blocking the entire distribution.

Independent Consumption Isolation

Each subscribe() call creates a logically independent receiver with its own read position within the shared circular buffer. This architecture prevents the "convoy effect" where slow consumers block fast ones—a critical requirement for real-time telemetry distribution.

When clients fall behind due to network latency, processing delays, or temporary disconnections, they receive RecvError::Lagged(n) notifications indicating missed messages. This explicit lag detection enables clients to implement appropriate catch-up strategies:

match broadcast_rx.recv().await {

Ok(snapshot) => process_update(snapshot),

Err(RecvError::Lagged(missed)) => {

// Explicit lag handling - fetch current state from watch channel

let current = watch_rx.borrow().clone();

resync_from_snapshot(current);

}

}

Operational Resilience

Subscriber lifecycle management becomes transparent to the publisher. Client disconnections automatically clean up receiver state without publisher coordination. New clients can subscribe at any time without disrupting existing subscribers or requiring publisher-side registration logic.

The 100-message buffer provides substantial headroom for client performance variance while maintaining bounded memory usage. Under normal conditions with 4 clients consuming at ~1 update/sec, the buffer accommodates significant client-side processing delays without data loss.

Alternative Analysis

Compared to manual fan-out using multiple mpsc channels, broadcast eliminates the publisher-side complexity of managing dynamic subscriber sets. The shared buffer approach also reduces memory overhead compared to per-client buffering, particularly important when subscriber counts scale beyond single digits.

Sender and Receiver Lifecycle

broadcast channels have unique lifecycle semantics that enable dynamic subscription management:

- Sender lifecycle: The aggregator holds the primary

Sender<SystemSnapshot>. Unlikempsc, the broadcast channel stays open as long as the sender exists, regardless of receiver count - Receiver lifecycle: Each client creates a receiver via

sender.subscribe(). Receivers are independent—dropping one doesn't affect others or the sender - Dynamic subscription:

- New clients can

subscribe()at any time, even after the sender has sent thousands of messages - Clients can drop their receivers without coordination—no "unsubscribe" call needed

- The sender doesn't track subscriber count or state

- New clients can

- Channel closure behavior:

- When sender drops: All receivers immediately get

RecvError::Closedon nextrecv() - When all receivers drop: Sender continues working normally, messages are simply discarded

- No "last subscriber" special case—the channel remains functional until sender drops

- When sender drops: All receivers immediately get

This design enables resilient pub-sub patterns where subscriber churn doesn't affect the publisher or other subscribers.

Request-Response with oneshot Channels

oneshot channels solve the classic request-response problem in async systems. Unlike mpsc channels that can send many messages, oneshot is designed for exactly one message exchange—perfect for scenarios where you need to ask a question and get back a single answer.

// Health check: Create single-use channel for request-response

let (response_tx, response_rx) = oneshot::channel::<DeviceStatus>();

// Send the response sender to the device

health_tx.send(response_tx).await?;

// Wait for the single response

let status = response_rx.await?;

How Device Health Checks Work

Every 3 seconds, the health check service randomly selects 2-3 devices to query. For each device:

- Create oneshot pair:

oneshot::channel()creates a sender/receiver pair - Send request: The sender half goes to the device via

mpsc - Device responds: Device sends its status back via the

oneshotsender - Automatic cleanup: Both halves are consumed and dropped after one use

This pattern eliminates the complexity of correlating requests with responses that you'd face with mpsc channels.

Timeout and Error Handling

The health check demonstrates realistic error handling patterns:

match tokio::time::timeout(Duration::from_millis(500), response_rx).await {

Ok(Ok(status)) => {

// Success: got device status within timeout

info!("Device {} health: uptime={}s, battery={}%",

status.device_id, status.uptime_seconds, status.battery_level);

}

Ok(Err(_)) => {

// Device dropped the sender without responding

info!("Device health check failed - sender dropped");

}

Err(_) => {

// Timeout: device didn't respond within 500ms

info!("Device health check timeout");

}

}

Why Not Use mpsc for Request-Response?

You could implement request-response with mpsc by including a correlation ID in each message, but this creates several problems:

- Correlation complexity: You need to match responses to requests

- Memory leaks: Unmatched requests accumulate in hash maps

- Ordering issues: Responses might arrive out of order

- Cleanup burden: You must manually clean up expired requests

oneshot eliminates all these issues by design—each channel pair handles exactly one exchange and automatically cleans up.

Performance Characteristics

oneshot channels are optimized for single-use scenarios:

- Zero allocation for the common case (sender and receiver in same task)

- Automatic cleanup prevents memory leaks

- Type safety ensures exactly one message per channel

- Cancellation support via dropping either half

Sender and Receiver Lifecycle

oneshot channels have the most restrictive lifecycle of all primitives, designed for exactly one message exchange:

- Creation:

oneshot::channel()creates a linked sender/receiver pair - Single use consumption:

sender.send(value)consumes the sender, making it unusable afterwardreceiver.awaitconsumes the receiver, returning the sent value- Both operations can only happen once per channel pair

- Cancellation semantics:

- If sender drops before sending:

receiver.awaitreturnsErr(RecvError) - If receiver drops before receiving:

sender.send()returnsErr(SendError(value)) - Either half can detect cancellation by the other half

- If sender drops before sending:

- Timeout handling: The receiver can be wrapped in

tokio::time::timeout()to detect unresponsive senders - Memory efficiency: After message exchange, both halves are consumed and all memory is freed automatically

This lifecycle makes oneshot perfect for request-response patterns where you need guaranteed cleanup and cancellation detection.

State Synchronization via watch Channels

watch channels solve the "current state" distribution problem by maintaining single-value semantics with efficient multi-reader access:

// Initialize with baseline system state

let (snapshot_tx, snapshot_rx) = watch::channel(SystemSnapshot::default());

// Non-blocking access to current state

let current_state = snapshot_rx.borrow().clone();

How Watch Channel State Replacement Works

Unlike queuing channels, watch maintains exactly one value slot:

- Initial state:

SystemSnapshot { active_devices: 0, total_messages: 0, ... } - First update:

send()atomically replaces with{ active_devices: 3, total_messages: 15, ... } - Rapid updates: 10 updates/second → only the latest value is retained, intermediate states are lost

- Reader access:

borrow()always returns the most recent state, never stale data

This "latest wins" behavior is perfect for reconnecting clients who need current system status, not historical updates.

Single-Value State Semantics

Unlike message-oriented channels that accumulate historical data, watch channels maintain only the most recent value. Each send() operation atomically replaces the previous state, ensuring readers always access current information without processing update deltas.

This design eliminates the "state reconstruction" problem common in event-sourced systems. Reconnecting clients don't need to replay missed events—they immediately access the current system snapshot, reducing both complexity and latency.

Concurrent Reader Efficiency

watch channels optimize for the common pattern of multiple readers accessing shared state. The borrow() operation provides zero-copy access to the current value through Ref<T>, enabling concurrent reads without coordination overhead:

// Multiple tasks can read concurrently without blocking

let metrics = snapshot_rx.borrow();

let device_count = metrics.active_devices;

let avg_temp = metrics.device_metrics.values()

.map(|m| m.avg_temperature)

.sum::<f64>() / metrics.active_devices as f64;

Memory and Performance Characteristics

The single-value approach provides constant memory usage regardless of update frequency or reader count. State updates trigger notifications to waiting readers via changed(), but don't accumulate in buffers. This makes watch channels ideal for high-frequency state updates where only the latest value matters.

Complementary Role with broadcast

The system uses watch and broadcast channels for different access patterns:

broadcast: Delivers every state transition for real-time monitoringwatch: Provides current state for reconnection and periodic polling

This dual-channel approach optimizes for both real-time updates and state synchronization without forcing a single communication pattern.

Message Ordering: All vs Latest

broadcast: Delivers every message in FIFO order—clients see all state transitionswatch: Provides only the latest value—clients skip intermediate states

For telemetry monitoring, broadcast ensures clients observe every system state change, while watch enables efficient reconnection without processing stale updates.

Sender and Receiver Lifecycle

watch channels have unique lifecycle semantics optimized for shared state distribution:

- Sender lifecycle: Only one sender exists per channel. When the aggregator (sender holder) drops, the channel closes permanently

- Receiver lifecycle: Multiple receivers can exist via

receiver.clone(). Each receiver maintains independent notification state but shares the same underlying value - Value persistence: The current value persists in the channel even if all receivers are dropped. New receivers created via

sender.subscribe()immediately see the latest value - Closure behavior:

- When sender drops: All receivers'

changed().awaitcalls returnErr(RecvError), andborrow()continues returning the last sent value - When all receivers drop: Sender continues working normally, updates still replace the stored value

- The channel maintains the last value until the sender drops

- When sender drops: All receivers'

- Clone semantics:

receiver.clone()creates independent notification tracking but shares the same value storage. Each clone canawait changed()independently

This lifecycle enables efficient state synchronization where the latest value is always available, regardless of receiver lifecycle timing.

Resource Pool Management with Semaphore

Semaphore primitives model finite resource constraints that exist in real distributed systems:

// Network bandwidth simulation: 5 concurrent device operations

let device_semaphore = Arc::new(Semaphore::new(5));

// Database connection pool: 2 concurrent storage operations

let db_pool = Arc::new(Semaphore::new(2));

How the 5-Permit Device Semaphore Behaves

With 7 devices competing for 5 bandwidth slots, contention is guaranteed:

- Permits 1-5: First 5 devices acquire immediately, begin telemetry transmission

- Devices 6-7: Block on

try_acquire(), must wait for permit release - Permit release: Device completes transmission, automatically returns permit via

Drop - Queue progression: Waiting devices acquire permits in FIFO order

This creates natural rate limiting—the system never exceeds 5 concurrent network operations, preventing bandwidth saturation and API rate limit violations.

How the 2-Connection Database Pool Behaves

The storage writer uses only 2 database connections, forcing serialization during high-throughput periods:

- Connections 1-2: First 2 storage operations proceed immediately

- Operation 3: Blocks on

acquire().await, waits for connection availability - Connection release: Completed write returns connection, next operation proceeds

- Backpressure cascade: DB bottleneck → storage delay → aggregator blocking → device throttling

The small pool size (2 vs 5 for devices) creates an intentional bottleneck that prevents database connection exhaustion while maintaining predictable write latency.

Resource Contention Patterns

Semaphores transform resource exhaustion from crash scenarios into controlled waiting:

- Without semaphores: 100 concurrent DB connections → pool exhaustion → timeout failures

- With semaphores: 2 concurrent connections → queued operations → predictable latency

This approach converts resource contention from a failure mode into a flow control mechanism.

Permit Lifecycle and Resource Management

Semaphore permits have automatic lifecycle management that prevents resource leaks:

- Permit acquisition:

semaphore.acquire().awaitreturns aSemaphorePermitguard - Automatic release: When the permit guard drops (via

drop(), scope exit, or panic), the permit is automatically returned to the semaphore - RAII guarantees: Even if a task panics while holding permits, the

Dropimplementation ensures permits are returned - Permit counting: The semaphore maintains an internal counter that decreases on acquisition and increases on release

- Fairness: Waiting tasks acquire permits in FIFO order, preventing starvation

- Semaphore lifecycle: The semaphore itself can be dropped, causing all waiting

acquire()calls to returnErr(AcquireError)

This design ensures that resource limits are enforced consistently, even in the presence of task failures or early returns.

Coordination Primitives: Notify and JoinSet

Lightweight Event Signaling with Notify

Notify provides efficient broadcast signaling for coordination events without data payloads:

// Single notification source for all tasks

let shutdown = Arc::new(Notify::new());

// Broadcast shutdown signal to all waiting tasks

shutdown.notify_waiters();

How Notify Signal Propagation Works

The shutdown sequence demonstrates Notify's broadcast behavior:

- Initial state: 12 tasks (7 devices + 1 aggregator + 1 storage + 4 clients) all blocked on

shutdown.notified() - Signal trigger: Main task calls

notify_waiters()after 10-second timer - Instant wakeup: All 12 tasks wake simultaneously, no message queuing or ordering

- Cleanup cascade: Each task begins its shutdown sequence (flush buffers, close connections)

- Completion: Tasks exit their main loops and return from spawned functions

Notify provides zero-overhead signaling—no memory allocation, no message serialization, just a simple atomic flag that wakes all waiters instantly.

Notification Lifecycle and Wakeup Semantics

Notify has stateless lifecycle semantics optimized for coordination events:

- Persistent object:

Notifyinstances don't have senders/receivers—they're persistent coordination points - Waiter registration:

notify.notified()returns a future that completes when signaled. Multiple tasks can wait simultaneously - Signal delivery:

notify_one(): Wakes exactly one waiting task (FIFO order)notify_waiters(): Wakes ALL currently waiting tasks simultaneously- Signals are not queued—if no tasks are waiting, the signal is lost

- No message persistence: Unlike channels,

Notifydoesn't store signals. Tasks must be waiting before the signal is sent - Reusable: After signaling, the same

Notifycan be used for future coordination events - Drop behavior: When

Notifydrops, all waitingnotified()futures are cancelled

This design makes Notify perfect for one-time coordination events like shutdown, where you need to wake multiple tasks simultaneously without message overhead.

Structured Concurrency with JoinSet

JoinSet provides deterministic task lifecycle management, ensuring no spawned tasks are abandoned:

let mut join_set = JoinSet::new();

// Track all spawned tasks

join_set.spawn(device_task(id, tx, semaphore, shutdown.clone()));

join_set.spawn(storage_task(rx, db_pool, shutdown.clone()));

// Ensure all tasks complete before exit

while let Some(result) = join_set.join_next().await {

match result {

Ok(_) => info!("Task completed successfully"),

Err(e) => error!("Task failed: {:?}", e),

}

}

How JoinSet Task Tracking Works

The telemetry gateway spawns 12 tasks total, all tracked by a single JoinSet:

- Task spawning: Each

spawn()call returns a handle stored internally - Completion detection:

join_next().awaitblocks until any task completes - Result handling: Returns

Ok(())for clean shutdown,Err(JoinError)for panics - Completion order: Tasks finish in arbitrary order based on their cleanup complexity

- Final guarantee: Loop continues until all 12 tasks have completed

Failure Isolation Behavior

If Device 3 panics during telemetry generation:

- Panic isolation: Device 3 task terminates, returns

Err(JoinError)tojoin_next() - System continuity: Remaining 11 tasks continue operating normally

- Error logging: Main task logs the failure but doesn't propagate panic

- Graceful degradation: System operates with 6 devices instead of 7

This prevents the "one task kills all" problem common in naive async systems.

Deterministic Shutdown Sequence

- Signal broadcast:

notify_waiters()wakes all 12 tasks simultaneously - Parallel cleanup: Tasks begin shutdown in parallel (flush buffers, close connections)

- Completion tracking:

JoinSetcollects completion results as tasks finish - Final barrier: Main task blocks until all tasks complete cleanup

- Process exit: Only after all resources are released

This eliminates race conditions between task cleanup and process termination.

Task Handle Lifecycle and Completion Tracking

JoinSet provides structured task lifecycle management with automatic cleanup:

- Task registration:

join_set.spawn(future)returns()but internally stores aJoinHandle<T> - Completion detection:

join_next().awaitblocks until ANY tracked task completes, returning its result - Handle consumption: Each task completion consumes its handle—completed tasks are automatically removed from tracking

- Failure isolation: If a task panics,

join_next()returnsErr(JoinError)but doesn't affect other tasks - Abort semantics:

join_set.abort_all()cancels all remaining tasks, causing them to returnErr(JoinError::Cancelled) - Drop behavior: When

JoinSetdrops, all tracked tasks are automatically aborted - Empty detection:

join_next()returnsNonewhen no tasks remain, indicating all work is complete

This lifecycle ensures no spawned tasks are abandoned and provides deterministic completion detection for graceful shutdown sequences.

Backpressure and Resource Modeling

Bounded channels and semaphores create cascading backpressure that mirrors real system constraints. Observable behavior includes:

📱 Device 3 waiting for bandwidth slot

💾 All DB connections busy, waiting...

📦 Storage queue full - applying backpressure

These logs represent the system's self-protection mechanisms in action. Semaphore primitives model finite resource pools with realistic contention:

let device_semaphore = Arc::new(Semaphore::new(5)); // Network bandwidth slots

let db_pool = Arc::new(Semaphore::new(2)); // Database connection limit

Resource exhaustion triggers cooperative waiting rather than resource thrashing. Device tasks pause when network slots are exhausted, preventing connection storms. Storage operations queue when database connections are saturated, avoiding connection pool exhaustion that would cascade into timeout failures.

This approach transforms resource contention from a failure mode into a flow control mechanism, maintaining system stability under varying load conditions.

Deterministic Shutdown Coordination

Shutdown coordination across distributed async tasks requires explicit lifecycle management. The system combines Notify for signaling with JoinSet for completion tracking:

let shutdown = Arc::new(Notify::new());

let mut join_set = JoinSet::new();

// Broadcast shutdown signal to all tasks

shutdown.notify_waiters();

// Block until all tasks complete cleanup

while let Some(result) = join_set.join_next().await {

// Handle task completion or failure

}

Each task integrates shutdown signaling into its event loop using tokio::select!:

tokio::select! {

Some(data) = rx.recv() => {

// Process normal workload

}

_ = shutdown.notified() => {

// Flush buffers, close connections, cleanup state

break;

}

}

JoinSet provides structured concurrency guarantees—the main task cannot exit until all spawned tasks complete their cleanup sequences. This prevents the "zombie task" problem where background work continues after main() exits, potentially corrupting shared resources or leaving connections open.

The pattern ensures deterministic resource cleanup and prevents the race conditions that occur when tasks are abandoned mid-operation.

Lessons Learned

Choose Primitives by Communication Pattern

Don't default to mpsc for everything. Each primitive optimizes for different patterns:

- Use

mpscfor pipelines and work distribution - Use

broadcastwhen multiple consumers need the same data - Use

watchfor shared state that changes over time - Use

Semaphoreto model resource constraints

Bounded Channels Are Your Friend

Unbounded channels are memory leaks waiting to happen. Bounded channels force you to think about backpressure and create self-regulating systems.

Async Design Is About Flow Control

The real skill in async programming isn't spawning tasks—it's designing how they communicate. Think about:

- Who produces data and who consumes it?

- What happens when consumers are slower than producers?

- How do you coordinate lifecycle events?

- Where are your resource bottlenecks?

Structured Concurrency Prevents Chaos

JoinSet and proper shutdown coordination aren't optional in production systems. They're the difference between a system that degrades gracefully and one that leaves zombie tasks and corrupted state.

Conclusion

Async primitives in Rust aren't just about concurrency—they're about building robust, self-regulating systems that handle real-world constraints. The telemetry gateway demonstrates how thoughtful primitive selection creates emergent properties like backpressure, graceful degradation, and clean shutdown.

The next time you're designing an async system, don't think about individual primitives. Think about communication patterns, flow control, and failure modes. The primitives are just tools to implement your design.

The complete code for this telemetry gateway serves as both a learning resource and a reference for production async patterns. It's the kind of system that handles the messy realities of production workloads—because that's where async Rust truly shines.