Weka (Waikato Environment for Knowledge Analysis) remains one of the most accessible yet powerful machine learning tools available for Java developers today. Written in Java and developed at the University of Waikato, New Zealand, Weka bridges the gap between academic research and practical machine learning development. While many developers have shifted toward Python-based frameworks, Weka’s unique strengths in Java-native environments, comprehensive algorithm support, and intuitive graphical interface make it an underrated choice for building robust machine learning pipelines in enterprise Java applications.

This comprehensive guide explores what makes Weka machine learning distinctive for Java developers, when to use it, and how to implement practical machine learning solutions using Weka’s powerful Java API and GUI environment. Whether you’re building a Salesforce-integrated ML system, a microservice with embedded predictions, or an educational platform, this guide provides the knowledge you need to master Weka.

Weka Machine Learning’s Core Architecture

Weka machine learning represents a fundamentally different approach to ML compared to Python-dominated frameworks. Rather than relegating machine learning to data scientists who spend time converting code between scripting languages and production systems, Weka integrates directly into the Java ecosystem, which powers enterprise applications worldwide. The framework is built on the principle of accessibility without sacrificing functionality—it provides both a visual interface for rapid prototyping and a comprehensive Java API for production deployments.

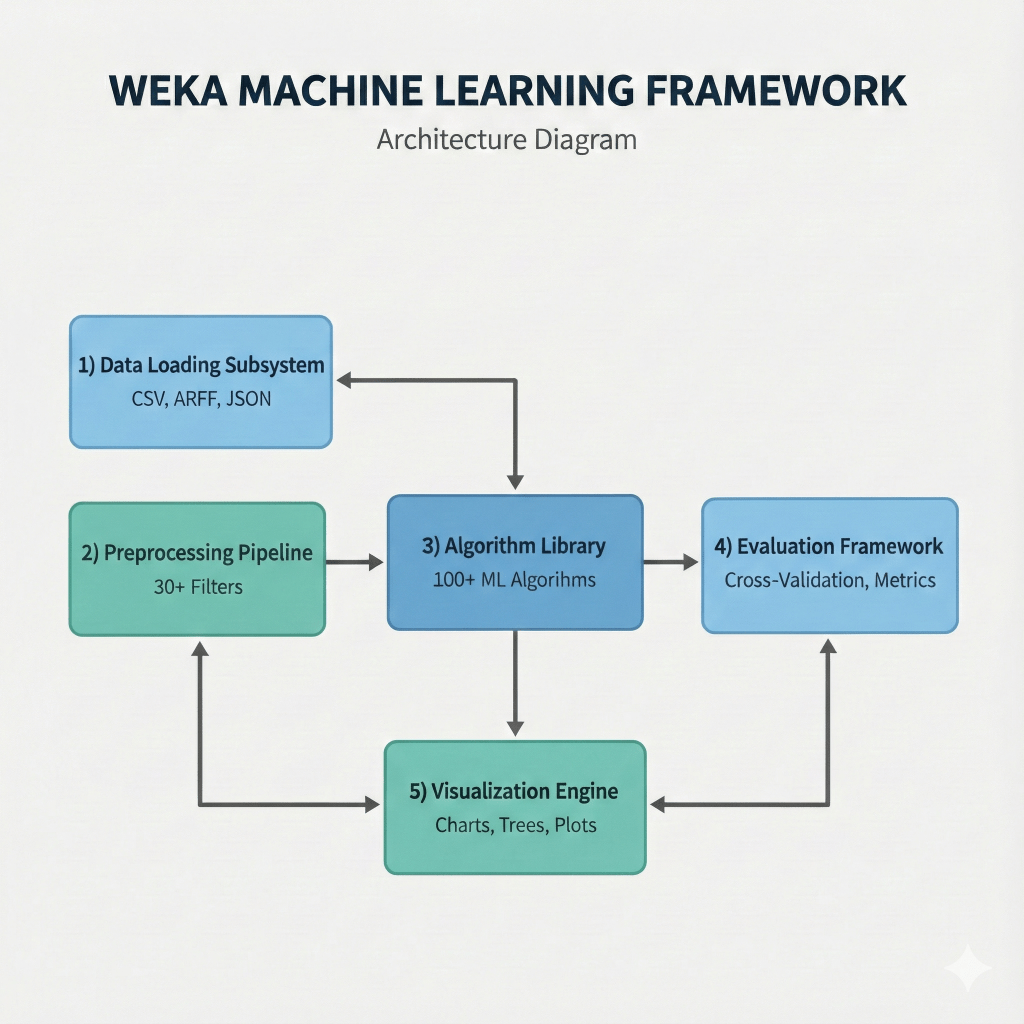

The architecture of Weka consists of five primary components that work in concert to deliver end-to-end machine learning capabilities:

- Data Loading Subsystem – Supports multiple formats including CSV, ARFF (Attribute-Relation File Format), JSON, and direct database connections

- Preprocessing Pipeline – Includes 30+ filters for data transformation, normalization, and feature engineering

- Algorithm Library – Encompasses 100+ machine learning algorithms spanning supervised learning (classification, regression), unsupervised learning (clustering, association rules), and ensemble methods

- Evaluation Framework – Provides statistical validation through cross-validation, holdout testing, and performance metrics

- Visualization Engine – Renders decision trees, scatter plots, and cluster diagrams in real-time

What distinguishes Weka machine learning from newer frameworks is its maturity and stability. Developed over two decades with contributions from hundreds of researchers, the codebase has been battle-tested in academic publications, enterprise systems, and educational institutions worldwide. Many of its algorithm classes cite the publication they implement, so their behavior can be checked against the original research rather than taken on trust.

For developers working within Java-based systems—whether Spring Boot microservices, legacy enterprise applications, or financial services platforms—Weka eliminates the friction of context-switching between languages. Your machine learning models live in the same codebase, run with the same reliability guarantees, and integrate seamlessly with existing Java infrastructure.

When to Use Weka Machine Learning?

The choice of machine learning tools should be driven by specific technical constraints and project requirements rather than general trends. Weka excels in scenarios where organizations have already invested heavily in Java infrastructure, where model reproducibility matters more than cutting-edge neural network architectures, or where business users need to understand and modify algorithms without needing a dedicated data science team.

Ideal Use Cases for Weka Machine Learning

Enterprise Java Environments with ML Integration: A financial services company running Salesforce Financial Services Cloud (FSC) alongside Java microservices can embed Weka directly into their transaction processing pipelines. Banks processing loan applications can train decision tree models using Weka’s J48 algorithm, then integrate that trained model directly into a Java application using a few lines of code—no Python subprocess calls or REST API wrappers required.

Research and Academic Applications: When publishing findings in peer-reviewed journals, researchers benefit from Weka machine learning’s direct implementation of classic algorithms, the ability to track exact parameters used, and reproducible results across different operating systems. Educational institutions use Weka to teach machine learning fundamentals because students can see the decision trees being built, understand feature importance visually, and experiment with hyperparameter changes in milliseconds.

Healthcare and Regulatory Environments: In biomedical applications where FDA validation is required, Weka’s transparent, well-documented algorithms are easier to certify than black-box deep learning models. A hospital predicting patient readmission risk can build a logistic regression model in Weka, export the exact coefficients, and have those coefficients validated by medical compliance teams.

When NOT to Use Weka Machine Learning

However, Weka has significant limitations for specific use cases:

- Deep Learning Applications: Projects that require convolutional neural networks for computer vision or recurrent networks for sequence modeling are better served by TensorFlow or PyTorch

- Massive-Scale Distributed Computing: Datasets that exceed a single machine's RAM are better handled by Apache Spark MLlib or other distributed frameworks

- Real-Time Streaming Applications: High-velocity data streams call for stream processors such as Apache Flink fed by Apache Kafka, because Weka's standard workflow loads the full dataset into memory and trains in batch

Setting Up Weka Machine Learning: Installation and Environment Configuration

Getting started with Weka requires minimal setup, which is one of its primary advantages. Since Weka is pure Java, installation is straightforward: download the installer for your platform, or the ZIP archive that contains weka.jar, from Weka's official download page on SourceForge, make sure Java 8 or higher is available, and launch with java -jar weka.jar.

For Maven-based projects, Weka is published to Maven Central, so adding it takes a single dependency entry in your pom.xml:

```xml
<dependency>
    <groupId>nz.ac.waikato.cms.weka</groupId>
    <artifactId>weka-stable</artifactId>
    <version>3.8.6</version>
</dependency>
```

The Explorer, opened from the GUI Chooser that appears at launch, organizes its work into six tabs:

- Preprocess – Data loading and transformation

- Classify – Supervised learning algorithms

- Cluster – Unsupervised grouping

- Associate – Relationship mining

- Select attributes – Feature selection

- Visualize – Exploring data distributions and model results

For developers preferring programmatic approaches, the Java API provides direct access to these components without touching the GUI, making Weka machine learning ideal for Spring Boot applications and microservices.

Example: Binary Classification with Weka Using Naive Bayes on Medical Data

Let’s implement a practical diabetes prediction model using Weka’s Naive Bayes classifier on the classic Pima Indians Diabetes dataset. This dataset contains 768 medical records with features like glucose level, blood pressure, and BMI, where the target is a binary outcome: diabetes onset within five years or not.
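With default settings, Weka's NaiveBayes models each numeric attribute within each class as a normal distribution and combines the per-attribute likelihoods with the class prior, assuming attributes are independent given the class. The plain-Java sketch below shows that core computation under those assumptions; the toy data are hypothetical, and Weka's own implementation adds refinements that this sketch leaves out.

```java
/**
 * Simplified Gaussian Naive Bayes: per class, each numeric feature is modeled as an
 * independent normal distribution, combined with the class prior via Bayes' rule.
 * Illustrative sketch only; this is not Weka's NaiveBayes implementation.
 */
final class GaussianNaiveBayesSketch {

    private final double[] priors;      // P(class)
    private final double[][] means;     // [class][feature]
    private final double[][] variances; // [class][feature]

    GaussianNaiveBayesSketch(double[][] x, int[] y, int numClasses) {
        int numFeatures = x[0].length;
        priors = new double[numClasses];
        means = new double[numClasses][numFeatures];
        variances = new double[numClasses][numFeatures];
        int[] counts = new int[numClasses];

        // Class counts and per-class feature sums
        for (int i = 0; i < x.length; i++) {
            counts[y[i]]++;
            for (int j = 0; j < numFeatures; j++) means[y[i]][j] += x[i][j];
        }
        for (int c = 0; c < numClasses; c++) {
            priors[c] = (double) counts[c] / x.length;
            for (int j = 0; j < numFeatures; j++) means[c][j] /= Math.max(counts[c], 1);
        }
        // Per-class variances; a tiny floor avoids division by zero for constant features
        for (int i = 0; i < x.length; i++) {
            for (int j = 0; j < numFeatures; j++) {
                double d = x[i][j] - means[y[i]][j];
                variances[y[i]][j] += d * d;
            }
        }
        for (int c = 0; c < numClasses; c++) {
            for (int j = 0; j < numFeatures; j++) {
                variances[c][j] = variances[c][j] / Math.max(counts[c], 1) + 1e-9;
            }
        }
    }

    /** Class probabilities for one instance, normalized to sum to 1. */
    double[] distribution(double[] instance) {
        int k = priors.length;
        double[] logPost = new double[k];
        double max = Double.NEGATIVE_INFINITY;
        for (int c = 0; c < k; c++) {
            double lp = Math.log(priors[c]);
            for (int j = 0; j < instance.length; j++) {
                double v = variances[c][j];
                double d = instance[j] - means[c][j];
                lp += -0.5 * Math.log(2 * Math.PI * v) - d * d / (2 * v); // log normal density
            }
            logPost[c] = lp;
            max = Math.max(max, lp);
        }
        // Subtract the largest log-posterior before exponentiating to avoid underflow
        double sum = 0;
        double[] p = new double[k];
        for (int c = 0; c < k; c++) {
            p[c] = Math.exp(logPost[c] - max);
            sum += p[c];
        }
        for (int c = 0; c < k; c++) p[c] /= sum;
        return p;
    }

    public static void main(String[] args) {
        // Hypothetical one-feature data: class 0 has low readings, class 1 high readings
        double[][] x = {{1}, {2}, {3}, {7}, {8}, {9}};
        int[] y = {0, 0, 0, 1, 1, 1};
        GaussianNaiveBayesSketch nb = new GaussianNaiveBayesSketch(x, y, 2);
        double[] p = nb.distribution(new double[] {2.5});
        System.out.printf("P(class 0) = %.4f, P(class 1) = %.4f%n", p[0], p[1]);
    }
}
```

The Weka implementation of the full diabetes workflow follows.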

```java
import weka.classifiers.bayes.NaiveBayes;
import weka.classifiers.evaluation.Evaluation;
import weka.core.Instances;
import weka.core.converters.ConverterUtils.DataSource;

import java.util.Random;

public class DiabetesPredictionModel {

    public static void main(String[] args) throws Exception {
        // Step 1: Load the diabetes dataset
        DataSource source = new DataSource("data/diabetes.arff");
        Instances dataset = source.getDataSet();

        // Step 2: Set the class index (last attribute is the target)
        if (dataset.classIndex() == -1) {
            dataset.setClassIndex(dataset.numAttributes() - 1);
        }

        // Step 3: Train the Naive Bayes classifier on the full dataset (the final model)
        NaiveBayes classifier = new NaiveBayes();
        classifier.buildClassifier(dataset);

        // Step 4: Estimate performance with 10-fold cross-validation;
        // crossValidateModel trains a fresh copy per fold, leaving the model above untouched
        Evaluation evaluation = new Evaluation(dataset);
        evaluation.crossValidateModel(classifier, dataset, 10, new Random(1));

        // Step 5: Display results (pctCorrect() already returns a percentage)
        System.out.println("=== Diabetes Prediction Results ===");
        System.out.println(evaluation.toSummaryString());
        System.out.printf("Accuracy: %.2f%%%n", evaluation.pctCorrect());
        // Class index 1 is the positive class ("tested_positive") in diabetes.arff
        System.out.printf("Precision: %.3f%n", evaluation.precision(1));
        System.out.printf("Recall: %.3f%n", evaluation.recall(1));

        System.out.println("\nConfusion Matrix:");
        printConfusionMatrix(evaluation);
    }

    private static void printConfusionMatrix(Evaluation eval) {
        // Rows are actual classes, columns are predicted classes
        double[][] matrix = eval.confusionMatrix();
        System.out.println("            Predicted No  Predicted Yes");
        System.out.printf("Actual No:  %12d  %13d%n", (int) matrix[0][0], (int) matrix[0][1]);
        System.out.printf("Actual Yes: %12d  %13d%n", (int) matrix[1][0], (int) matrix[1][1]);
    }
}
```

Expected output shows approximately 75% accuracy on this dataset. The confusion matrix reveals the trade-off between false positives and false negatives—in medical diagnosis, this trade-off has real consequences, so understanding it is critical.
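The precision and recall printed above are per-class ratios over the confusion matrix. A minimal plain-Java sketch of those ratios follows, assuming the same layout as printConfusionMatrix (rows are actual classes, columns are predicted) and class index 1 as the positive class. The counts in main are illustrative, not real Weka output, and returning 0 for an empty denominator is a choice made here.

```java
/**
 * Metrics from a confusion matrix laid out like Weka's Evaluation.confusionMatrix():
 * rows are actual classes, columns are predicted classes.
 */
final class ConfusionMetrics {

    /** Fraction of all instances on the diagonal (correctly classified). */
    static double accuracy(double[][] m) {
        double correct = 0, total = 0;
        for (int i = 0; i < m.length; i++) {
            for (int j = 0; j < m[i].length; j++) {
                total += m[i][j];
                if (i == j) correct += m[i][j];
            }
        }
        return total == 0 ? 0 : correct / total;
    }

    /** Of the instances predicted as class c, the fraction that really are class c. */
    static double precision(double[][] m, int c) {
        double predictedC = 0;
        for (double[] row : m) predictedC += row[c];
        return predictedC == 0 ? 0 : m[c][c] / predictedC;
    }

    /** Of the instances that really are class c, the fraction predicted as class c. */
    static double recall(double[][] m, int c) {
        double actualC = 0;
        for (double v : m[c]) actualC += v;
        return actualC == 0 ? 0 : m[c][c] / actualC;
    }

    public static void main(String[] args) {
        // Illustrative counts only: 100 actual negatives, 50 actual positives
        double[][] m = {
            {80, 20}, // actual No: 80 correct, 20 false positives
            {10, 40}  // actual Yes: 10 false negatives, 40 correct
        };
        System.out.printf("Accuracy:  %.3f%n", accuracy(m));
        System.out.printf("Precision: %.3f%n", precision(m, 1));
        System.out.printf("Recall:    %.3f%n", recall(m, 1));
    }
}
```

Lowering false negatives (raising recall for the positive class) usually costs some precision, which is the trade-off a medical team has to decide on explicitly.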

Two preprocessing filters are often applied before training a model like this one:

- Normalize Filter – Rescales numerical features to a 0-1 range, preventing algorithms sensitive to feature magnitude from giving excessive weight to large-scale features

- Discretize Filter – Converts continuous variables into categorical ranges; this can help Naive Bayes when numeric features are far from the normal distribution it assumes by default, and it makes the resulting model easier to interpret
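What the two filters compute can be sketched in plain Java: min-max scaling to [0, 1] (the Normalize filter's default behavior) and equal-width binning (the default strategy of the unsupervised Discretize filter). In Weka itself you would apply weka.filters.unsupervised.attribute.Normalize or Discretize through Filter.useFilter, or wrap the filter and classifier in a FilteredClassifier so the filter is fitted only on each training fold during cross-validation. This sketch is illustrative, not Weka's implementation: the glucose values are made up, and the constant-column and last-bin handling are choices made here.

```java
import java.util.Arrays;

/** Plain-Java illustrations of min-max scaling and equal-width binning. */
final class FeatureTransforms {

    /** Rescales values to [0, 1]; a constant column maps to all zeros (a choice made here). */
    static double[] minMaxScale(double[] x) {
        double min = Arrays.stream(x).min().orElse(0);
        double max = Arrays.stream(x).max().orElse(0);
        double range = max - min;
        double[] out = new double[x.length];
        for (int i = 0; i < x.length; i++) {
            out[i] = range == 0 ? 0 : (x[i] - min) / range;
        }
        return out;
    }

    /** Assigns each value to one of `bins` equal-width intervals spanning [min, max]. */
    static int[] equalWidthBins(double[] x, int bins) {
        double min = Arrays.stream(x).min().orElse(0);
        double max = Arrays.stream(x).max().orElse(0);
        double width = (max - min) / bins;
        int[] out = new int[x.length];
        for (int i = 0; i < x.length; i++) {
            int b = width == 0 ? 0 : (int) ((x[i] - min) / width);
            out[i] = Math.min(b, bins - 1); // the maximum value belongs to the last bin
        }
        return out;
    }

    public static void main(String[] args) {
        double[] glucose = {89, 137, 78, 197, 110}; // illustrative values
        System.out.println("Scaled: " + Arrays.toString(minMaxScale(glucose)));
        System.out.println("Bins:   " + Arrays.toString(equalWidthBins(glucose, 3)));
    }
}
```

Equal-width bins are sensitive to outliers, since one extreme value stretches every interval; equal-frequency binning is the usual alternative when that matters.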

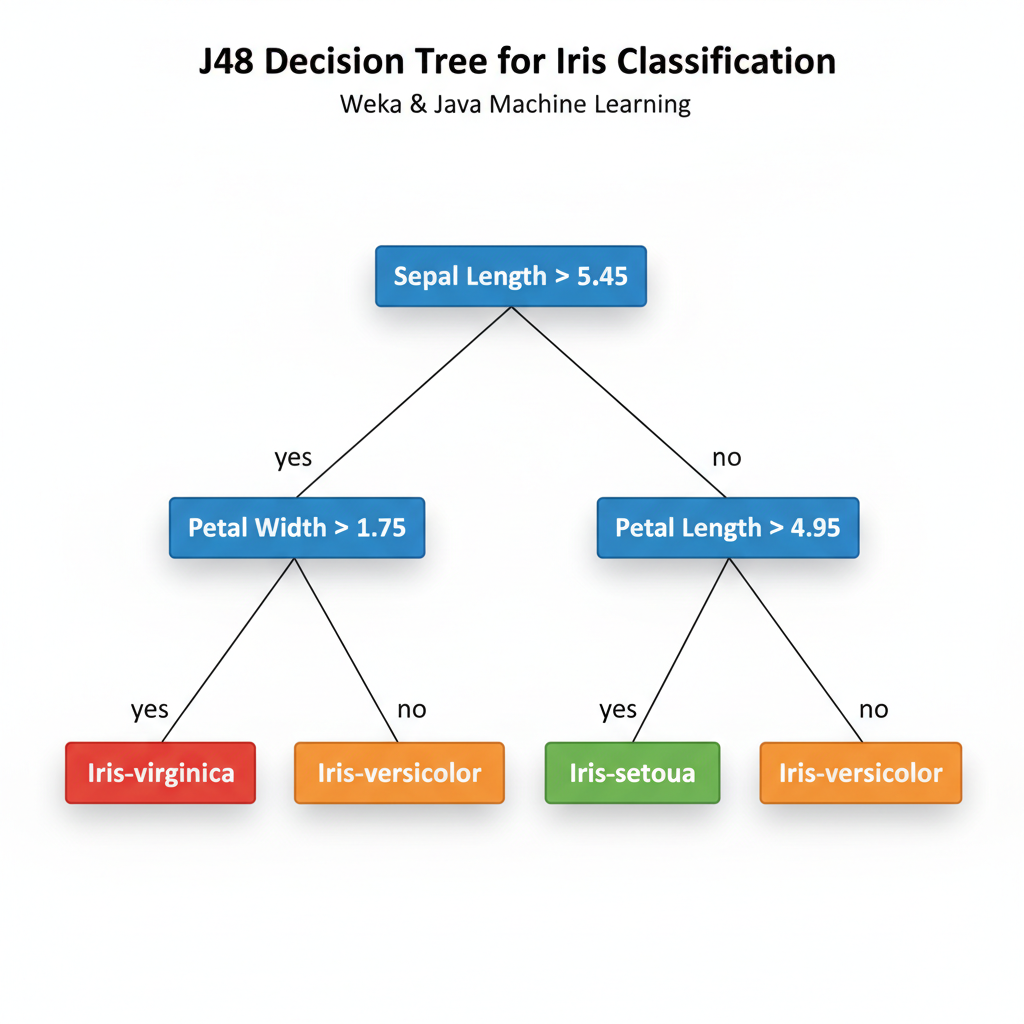

Weka Multi-Class Classification: Iris Flower Species Recognition Using Decision Trees

While binary classification handles yes/no decisions, many real problems involve multiple categories. The Iris dataset, distributed with Weka, contains 150 flower measurements across three iris species. This requires a multi-class approach using Weka’s powerful decision tree implementation.

```java
import weka.classifiers.evaluation.Evaluation;
import weka.classifiers.trees.J48;
import weka.core.Instances;
import weka.core.converters.ConverterUtils.DataSource;

import java.util.Random;

public class IrisClassification {

    public static void main(String[] args) throws Exception {
        // Load iris dataset (the species is the last attribute)
        DataSource source = new DataSource("data/iris.arff");
        Instances dataset = source.getDataSet();
        dataset.setClassIndex(dataset.numAttributes() - 1);

        // Create J48 decision tree (Weka's implementation of C4.5)
        J48 tree = new J48();
        tree.setConfidenceFactor(0.25f); // pruning confidence (Weka's default)
        tree.setMinNumObj(2);            // minimum instances per leaf (Weka's default)

        // Train the model and display the decision tree structure
        tree.buildClassifier(dataset);
        System.out.println("=== Decision Tree for Iris Classification ===");
        System.out.println(tree);

        // Evaluate with 10-fold cross-validation
        Evaluation eval = new Evaluation(dataset);
        eval.crossValidateModel(tree, dataset, 10, new Random(1));
        System.out.println("\n=== Evaluation Results ===");
        System.out.println(eval.toSummaryString());
        // pctCorrect() already returns a percentage
        System.out.printf("Accuracy: %.2f%%%n", eval.pctCorrect());

        // Show per-class metrics
        System.out.println("\nPer-Class Metrics:");
        for (int i = 0; i < dataset.numClasses(); i++) {
            System.out.printf("Class %s: Precision=%.3f, Recall=%.3f%n",
                    dataset.classAttribute().value(i), eval.precision(i), eval.recall(i));
        }
    }
}
```

The J48 decision tree generates an interpretable model that shows exact decision rules. This explainability is invaluable in regulated industries where models must justify their predictions to auditors or customers. Unlike black-box machine learning approaches, Weka’s decision trees are fully transparent.
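Those rules come from repeatedly choosing splits by gain ratio, the criterion C4.5 (and therefore J48) uses: information gain divided by the split's own entropy, which penalizes splits into many small branches. The plain-Java sketch below computes gain ratio from per-branch class counts; the split in main is hypothetical, and C4.5's other machinery (numeric thresholds, restricting candidates to those with at least average gain, pruning) is omitted.

```java
/** Gain ratio for a candidate split, given class counts in each branch. */
final class GainRatio {

    static double log2(double x) {
        return Math.log(x) / Math.log(2);
    }

    /** Entropy in bits of a class-count vector. */
    static double entropy(int[] counts) {
        int total = 0;
        for (int c : counts) total += c;
        double h = 0;
        for (int c : counts) {
            if (c > 0) {
                double p = (double) c / total;
                h -= p * log2(p);
            }
        }
        return h;
    }

    /** branches[b][k] = number of instances of class k that fall into branch b. */
    static double gainRatio(int[][] branches) {
        int numClasses = branches[0].length;
        int[] parent = new int[numClasses];
        int total = 0;
        for (int[] branch : branches) {
            for (int k = 0; k < numClasses; k++) {
                parent[k] += branch[k];
                total += branch[k];
            }
        }
        double remainder = 0; // weighted entropy after the split
        double splitInfo = 0; // entropy of the branch sizes themselves
        for (int[] branch : branches) {
            int size = 0;
            for (int c : branch) size += c;
            if (size == 0) continue;
            double w = (double) size / total;
            remainder += w * entropy(branch);
            splitInfo -= w * log2(w);
        }
        double gain = entropy(parent) - remainder;
        return splitInfo == 0 ? 0 : gain / splitInfo;
    }

    public static void main(String[] args) {
        // Hypothetical split of 150 instances (setosa, versicolor, virginica)
        int[][] split = {
            {50, 0, 0}, // left branch: only setosa
            {0, 50, 50} // right branch: the other two species
        };
        System.out.printf("Gain ratio: %.3f%n", gainRatio(split));
    }
}
```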

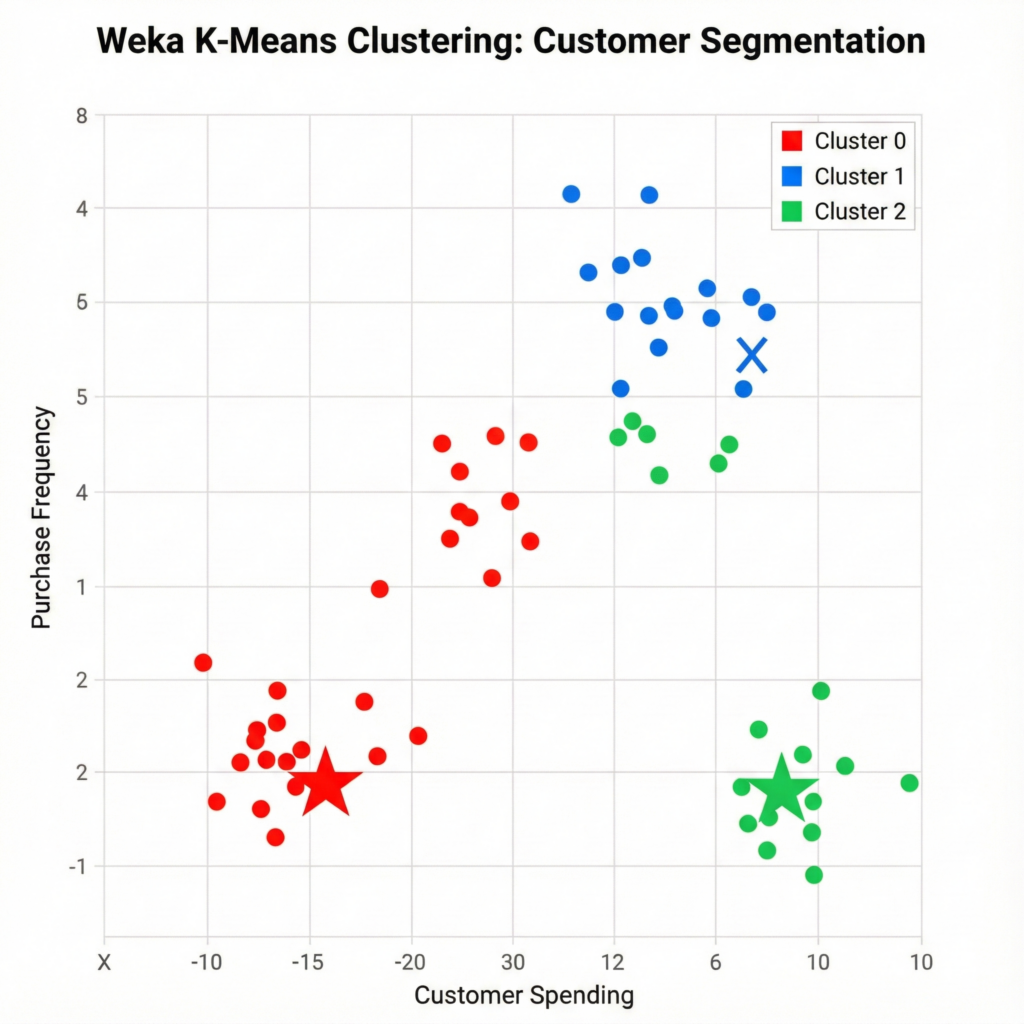

Weka Clustering: Unsupervised Pattern Discovery in Customer Data with K-Means

Not all machine learning problems have labeled outcomes. Customer segmentation, anomaly detection, and exploratory data analysis use unsupervised learning where algorithms discover natural groupings without predefined categories. Weka’s K-means implementation makes this straightforward.

```java
import weka.clusterers.SimpleKMeans;
import weka.core.Instances;
import weka.core.converters.ConverterUtils.DataSource;

public class CustomerSegmentation {

    public static void main(String[] args) throws Exception {
        // Load unlabeled customer data (no class index is set)
        DataSource source = new DataSource("data/customer-features.arff");
        Instances dataset = source.getDataSet();

        // K-means clustering with 3 segments
        SimpleKMeans kMeans = new SimpleKMeans();
        kMeans.setNumClusters(3);
        kMeans.setMaxIterations(100);
        kMeans.setSeed(10); // random seed used to pick the initial centroids
        kMeans.buildClusterer(dataset);

        System.out.println("=== K-Means Customer Segmentation ===");
        System.out.println("Number of clusters: " + kMeans.getNumClusters());
        System.out.println("Within-cluster sum of squared errors: " + kMeans.getSquaredError());

        // Show cluster centroids
        System.out.println("\nCluster Centroids:");
        Instances centroids = kMeans.getClusterCentroids();
        for (int i = 0; i < centroids.numInstances(); i++) {
            System.out.println("Cluster " + i + ": " + centroids.instance(i));
        }

        // Assign every customer to a cluster, print the first 10, and count cluster sizes
        int[] clusterSizes = new int[kMeans.getNumClusters()];
        System.out.println("\nCustomer-to-Cluster Assignment (first 10):");
        for (int i = 0; i < dataset.numInstances(); i++) {
            int cluster = kMeans.clusterInstance(dataset.instance(i));
            clusterSizes[cluster]++;
            if (i < 10) {
                System.out.println("Customer " + i + " → Cluster " + cluster);
            }
        }

        System.out.println("\nCluster Sizes:");
        for (int i = 0; i < clusterSizes.length; i++) {
            System.out.printf("Cluster %d: %d customers (%.1f%%)%n",
                    i, clusterSizes[i], 100.0 * clusterSizes[i] / dataset.numInstances());
        }
    }
}
```

K-means clustering partitions customers into groups based on feature similarity. Companies then apply targeted marketing to each segment. A retail company might discover three distinct clusters: price-sensitive bulk buyers, premium customers seeking quality, and occasional shoppers.
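Under the hood, k-means alternates two steps until assignments stop changing: assign each point to its nearest centroid, then move each centroid to the mean of its points (Lloyd's algorithm). The plain-Java sketch below shows that loop; the customer features are hypothetical, the starting centroids are fixed rather than random so the result is reproducible, and Weka's SimpleKMeans adds details this sketch omits, such as seeded random initialization and handling of nominal attributes.

```java
import java.util.Arrays;

/** Minimal Lloyd's-algorithm k-means with caller-supplied starting centroids. */
final class KMeansSketch {

    static double sqDist(double[] a, double[] b) {
        double s = 0;
        for (int d = 0; d < a.length; d++) s += (a[d] - b[d]) * (a[d] - b[d]);
        return s;
    }

    /** Returns each point's cluster index; `centroids` is updated in place. */
    static int[] cluster(double[][] points, double[][] centroids, int maxIterations) {
        int[] assignment = new int[points.length];
        Arrays.fill(assignment, -1);
        for (int iter = 0; iter < maxIterations; iter++) {
            // Assignment step: nearest centroid by squared Euclidean distance
            boolean changed = false;
            for (int i = 0; i < points.length; i++) {
                int best = 0;
                for (int k = 1; k < centroids.length; k++) {
                    if (sqDist(points[i], centroids[k]) < sqDist(points[i], centroids[best])) best = k;
                }
                if (best != assignment[i]) {
                    assignment[i] = best;
                    changed = true;
                }
            }
            if (!changed) break; // converged: no point switched clusters
            // Update step: move each centroid to the mean of its members
            for (int k = 0; k < centroids.length; k++) {
                double[] sum = new double[centroids[k].length];
                int n = 0;
                for (int i = 0; i < points.length; i++) {
                    if (assignment[i] != k) continue;
                    for (int d = 0; d < sum.length; d++) sum[d] += points[i][d];
                    n++;
                }
                if (n == 0) continue; // an empty cluster keeps its previous centroid
                for (int d = 0; d < sum.length; d++) centroids[k][d] = sum[d] / n;
            }
        }
        return assignment;
    }

    public static void main(String[] args) {
        // Hypothetical customers: {orders per month, average basket value in $100s}
        double[][] customers = {{1, 1}, {1.5, 2}, {8, 8}, {9, 9}, {1, 0.5}, {8.5, 9.5}};
        double[][] centroids = {{0, 0}, {10, 10}};
        int[] assignment = cluster(customers, centroids, 100);
        System.out.println("Assignments: " + Arrays.toString(assignment));
        System.out.println("Centroids:   " + Arrays.deepToString(centroids));
    }
}
```

Because the result depends on the starting centroids, Weka exposes a seed; rerunning with several seeds and keeping the lowest within-cluster error is a common safeguard.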

Comparing Weka Algorithms: Making Informed Machine Learning Selection Decisions

Machine learning rarely involves finding the single “best” algorithm. Instead, practitioners evaluate multiple algorithms on their specific dataset and select based on accuracy, interpretability, training time, and deployment constraints. Weka facilitates this comparison through its Experimenter tool or programmatically through code.

```java
import weka.classifiers.Classifier;
import weka.classifiers.bayes.NaiveBayes;
import weka.classifiers.evaluation.Evaluation;
import weka.classifiers.functions.Logistic;
import weka.classifiers.lazy.IBk;
import weka.classifiers.trees.J48;
import weka.core.Instances;
import weka.core.converters.ConverterUtils.DataSource;

import java.util.Random;

public class AlgorithmComparison {

    public static void main(String[] args) throws Exception {
        DataSource source = new DataSource("data/diabetes.arff");
        Instances dataset = source.getDataSet();
        dataset.setClassIndex(dataset.numAttributes() - 1);

        // Candidate classifiers. LinearRegression is not included: it requires a
        // numeric class, and the diabetes class attribute is nominal.
        Classifier[] classifiers = {
            new NaiveBayes(),
            new J48(),
            new Logistic(),
            new IBk(5) // k-nearest neighbors with k = 5
        };
        String[] names = {"Naive Bayes", "J48 Tree", "Logistic Regression", "5-Nearest Neighbors"};

        System.out.println("Algorithm Comparison on Diabetes Dataset");
        System.out.println("==========================================");
        System.out.printf("%-25s %-10s %-12s%n", "Algorithm", "Accuracy", "CV time (ms)");
        System.out.println("------------------------------------------");

        for (int i = 0; i < classifiers.length; i++) {
            long startTime = System.currentTimeMillis();

            // 10-fold cross-validation: trains and tests a fresh copy on each fold
            Evaluation eval = new Evaluation(dataset);
            eval.crossValidateModel(classifiers[i], dataset, 10, new Random(1));

            long elapsed = System.currentTimeMillis() - startTime;
            // pctCorrect() already returns a percentage
            System.out.printf("%-25s %-9.2f%% %-12d%n", names[i], eval.pctCorrect(), elapsed);
        }
    }
}
```

This comparison reveals practical trade-offs:

- Naive Bayes trains in milliseconds but may sacrifice accuracy

- J48 trees provide interpretable rules but might overfit

- K-Nearest Neighbors requires storing all training data

The "best" choice depends on your specific constraints and priorities.
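The storage cost of nearest-neighbor methods follows from how they work: "training" only keeps the data, and every prediction compares the query against all stored instances before voting. The plain-Java sketch below shows k-nearest-neighbor classification by majority vote; the data are hypothetical, ties go to the lower class index (a choice made here), and Weka's IBk adds details such as attribute normalization, pluggable neighbor-search structures, and optional distance weighting.

```java
import java.util.Arrays;
import java.util.Comparator;
import java.util.stream.IntStream;

/** k-nearest-neighbor classification by unweighted majority vote. */
final class KnnSketch {

    private final double[][] trainX;
    private final int[] trainY;
    private final int k;
    private final int numClasses;

    KnnSketch(double[][] trainX, int[] trainY, int k, int numClasses) {
        // "Training" just keeps every instance, so memory grows with the dataset
        this.trainX = trainX;
        this.trainY = trainY;
        this.k = k;
        this.numClasses = numClasses;
    }

    private static double sqDist(double[] a, double[] b) {
        double s = 0;
        for (int d = 0; d < a.length; d++) s += (a[d] - b[d]) * (a[d] - b[d]);
        return s;
    }

    int predict(double[] query) {
        // Rank all training instances by distance to the query: work grows with the dataset
        Integer[] order = IntStream.range(0, trainX.length).boxed().toArray(Integer[]::new);
        Arrays.sort(order, Comparator.comparingDouble(i -> sqDist(trainX[i], query)));

        // Majority vote among the k nearest neighbors
        int[] votes = new int[numClasses];
        for (int j = 0; j < Math.min(k, order.length); j++) votes[trainY[order[j]]]++;
        int best = 0;
        for (int c = 1; c < numClasses; c++) {
            if (votes[c] > votes[best]) best = c;
        }
        return best;
    }

    public static void main(String[] args) {
        // Hypothetical two-feature data with two well-separated classes
        double[][] x = {{0, 0}, {0, 1}, {1, 0}, {5, 5}, {5, 6}, {6, 5}};
        int[] y = {0, 0, 0, 1, 1, 1};
        KnnSketch knn = new KnnSketch(x, y, 3, 2);
        System.out.println("Prediction for (0.5, 0.5): class " + knn.predict(new double[] {0.5, 0.5}));
        System.out.println("Prediction for (5.5, 5.5): class " + knn.predict(new double[] {5.5, 5.5}));
    }
}
```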

Understanding Weka Machine Learning’s Limitations and When to Use Alternatives

Weka’s accessibility shouldn’t obscure its limitations. While excellent for many applications, certain scenarios demand different tools:

| Scenario | Challenge | Better Alternative |
|---|---|---|
| Datasets larger than RAM | Memory constraints with billion-row datasets | Apache Spark MLlib |
| Deep Learning | Limited deep learning capabilities | TensorFlow, PyTorch |
| Real-Time Streaming | Batch processing architecture | Kafka + Flink |
| Unstructured Data | Limited image/text/audio support | Specialized frameworks |

Weka remains optimal for:

- Interpretability-critical applications

- Enterprise Java environments

- Rapid prototyping and research

- Educational settings where understanding algorithm mechanics matters

The trade-offs are clear: choose Weka for transparency and Java integration, TensorFlow or PyTorch for deep learning, Spark MLlib for distributed scale, and Python with scikit-learn for flexibility and ecosystem breadth.

Production Deployment: Integrating Weka Machine Learning into Java Applications

The real value of Weka emerges when trained models are deployed into production systems. Unlike Python scripts that require separate infrastructure, Weka models can be serialized and loaded directly into existing Java applications. This is particularly valuable for Spring Boot microservices, Salesforce integrations, and enterprise systems.

```java
import weka.classifiers.Classifier;
import weka.classifiers.bayes.NaiveBayes;
import weka.core.DenseInstance;
import weka.core.Instance;
import weka.core.Instances;
import weka.core.Utils;
import weka.core.converters.ConverterUtils.DataSource;

import java.io.FileInputStream;
import java.io.FileOutputStream;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;

public class ModelPersistence {

    private static final String MODEL_FILE = "diabetes_model.model";

    // Step 1: Train the model and save it together with the dataset header
    public static void trainAndSaveModel() throws Exception {
        DataSource source = new DataSource("data/diabetes.arff");
        Instances dataset = source.getDataSet();
        dataset.setClassIndex(dataset.numAttributes() - 1);

        NaiveBayes classifier = new NaiveBayes();
        classifier.buildClassifier(dataset);

        // new Instances(dataset, 0) copies the header (attributes, class index) with no rows
        try (ObjectOutputStream oos = new ObjectOutputStream(new FileOutputStream(MODEL_FILE))) {
            oos.writeObject(classifier);
            oos.writeObject(new Instances(dataset, 0));
        }
        System.out.println("Model saved to " + MODEL_FILE);
    }

    // Step 2: Load the model and header, then classify a new record
    public static void loadAndPredict() throws Exception {
        Classifier classifier;
        Instances structure;
        try (ObjectInputStream ois = new ObjectInputStream(new FileInputStream(MODEL_FILE))) {
            classifier = (Classifier) ois.readObject();
            structure = (Instances) ois.readObject();
        }

        // Eight feature values in diabetes.arff order
        // (preg, plas, pres, skin, insu, mass, pedi, age); the class value is unknown
        double[] features = {1.0, 89.0, 66.0, 23.0, 94.0, 28.1, 0.167, 21.0};
        double[] values = new double[structure.numAttributes()];
        System.arraycopy(features, 0, values, 0, features.length);
        values[structure.classIndex()] = Utils.missingValue();

        Instance newInstance = new DenseInstance(1.0, values);
        newInstance.setDataset(structure);

        double prediction = classifier.classifyInstance(newInstance);
        double[] probabilities = classifier.distributionForInstance(newInstance);

        System.out.println("Prediction: " + structure.classAttribute().value((int) prediction));
        System.out.printf("Confidence: %.2f%% probability%n", probabilities[(int) prediction] * 100);
    }

    public static void main(String[] args) throws Exception {
        trainAndSaveModel();
        loadAndPredict();
    }
}
```

This deployment pattern allows a financial institution to train models offline, test them rigorously, serialize the trained model, and then load it into its production transaction system. The entire prediction pipeline runs inside the JVM with no external service calls, which matters for systems handling millions of transactions daily. Salesforce is a different case: Apex cannot load Java libraries, so Salesforce integrations typically host the Weka model in a Java service and call it from Apex through an HTTP callout.

Conclusion: Weka Machine Learning’s Enduring Value in the Modern ML Landscape

Decades after its creation, Weka remains relevant not because it’s cutting-edge but because it solves specific problems exceptionally well. In an ecosystem dominated by Python frameworks optimized for academic researchers and startups, Weka fills a critical niche: it’s the machine learning tool for Java developers, data scientists in regulated industries, and organizations where enterprise requirements matter more than chasing the latest research papers.

The practical combination of a user-friendly GUI for exploration and a robust Java API for production deployment makes Weka uniquely positioned for professionals who need both rapid experimentation and stable, auditable implementations. For building diabetes prediction systems in healthcare settings, customer segmentation in financial services, pattern detection in bioinformatics, or teaching machine learning fundamentals in computer science programs, Weka delivers value that more modern frameworks struggle to match.

Start with Weka if you’re working in Java environments, need explainable models, or want to understand algorithm implementations without fighting framework abstractions. Transition to specialized tools when specific requirements, such as massive scale, deep learning, or streaming, demand them.
