The AWS Well-Architected Framework provides best practices and guidelines so organizations can prioritize efforts based on business needs. In this blog, we will first explore the framework’s importance and then examine the best practices across its six pillars.

1. The Need for AWS Well-Architected

Many great ideas fail not because they lack vision but because they lack a mechanism to execute them effectively. As Jeff Bezos once said, “Good intentions never work, you need a good mechanism to make things happen.” This principle is especially relevant in business, technology, and leadership, where structured mechanisms ensure consistent execution and scalable success.

Without a well-defined framework, building applications in the cloud can result in poor performance, security vulnerabilities, high costs, and unreliable systems. The AWS Well-Architected Framework provides guidance that helps organizations avoid these pitfalls and keep their cloud workloads aligned with business and technical requirements.

2. AWS Well-Architected Overview

AWS prefers to distribute capabilities across teams rather than concentrating them in a single centralized team. This model requires that every team can create architectures that meet common standards and understands the strengths, weaknesses, and trade-offs involved in design. To support it, several actions are necessary:

- Ensure that best practices are both visible and easily accessible to all teams.

- Foster a community in which principal engineers regularly review and refine each other's designs, promoting collaboration.

- Continuously collect best practices drawn from real-world experience managing thousands of systems.

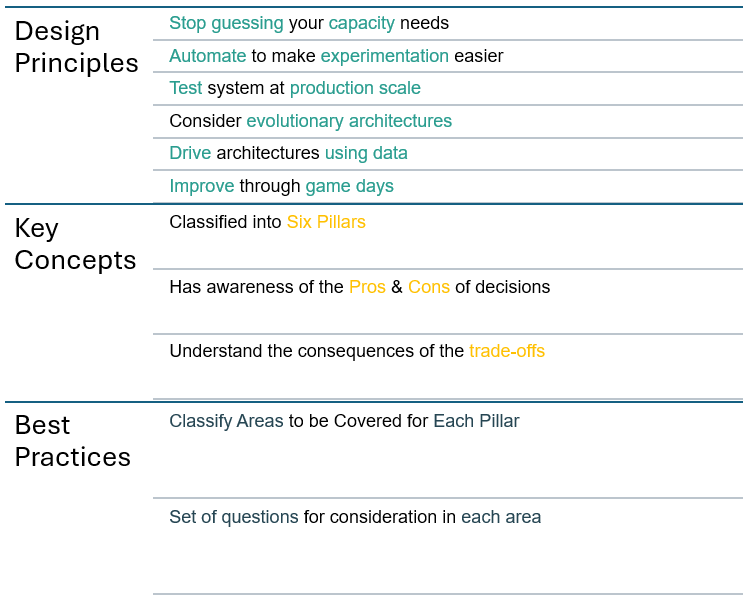

When discussing AWS Well-Architected, we should consider General Design Principles, Key Concepts, and Best Practices.

2.1 General Design Principles

The AWS Well-Architected Framework outlines key General Design Principles to help organizations build secure, high-performing, resilient, and cost-efficient cloud workloads. For example, it encourages eliminating capacity guesswork, testing at production scale, and automating processes to improve efficiency. It also promotes data-driven decision-making and evolutionary architectures that adapt over time. By following these principles, businesses can optimize their cloud strategies and ensure long-term success.

- Stop guessing your capacity needs: Instead of manually estimating resource needs, use cloud elasticity to automatically adjust capacity based on demand. This prevents both over-provisioning, which increases costs, and under-provisioning, which hurts performance.

- Automate to make experimentation easier: By implementing automation, you can rapidly test and deploy changes with minimal effort and reduced risk. Automation enables quick iterations, facilitates innovation, and allows for controlled rollbacks when needed.

- Test systems at production scale: Rather than relying solely on limited test environments, create production-scale replicas in the cloud. This approach helps validate performance, security, and reliability under real-world conditions, reducing deployment risks.

- Consider evolutionary architectures: Traditional architectures remain static, but cloud environments enable continuous improvements. By designing for flexibility, systems can evolve over time, integrating new technologies and adapting to changing business needs without significant disruptions.

- Drive architectures using data: Instead of making assumptions, collect and analyze real-time data on workload performance. This enables fact-based improvements and optimizations, ensuring that architectural decisions align with system behavior and business goals.

- Improve through game days: To ensure system resilience, conduct simulations that test responses to failures, security incidents, or traffic spikes. These controlled exercises help teams identify weaknesses, refine incident response strategies, and build confidence in system reliability.

By following these principles, organizations can create scalable, efficient, and resilient cloud architectures that align with business and technical requirements.
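To make “stop guessing your capacity needs” concrete, the Python sketch below computes a desired fleet size with a simple proportional rule: scale the current capacity by the ratio of the observed metric to its target, round up, and clamp to the group's minimum and maximum. The function name and formula are illustrative assumptions; EC2 Auto Scaling target tracking policies compute their own adjustments and also account for factors such as instance warm-up, so real behavior will differ.

```python
import math


def desired_capacity(current: int, metric_value: float, target_value: float,
                     min_size: int, max_size: int) -> int:
    """Proportional target-tracking estimate (a simplification, not AWS's exact algorithm)."""
    if current <= 0 or target_value <= 0:
        raise ValueError("current and target_value must be positive")
    # Scale the fleet so the per-instance metric moves toward the target.
    raw = current * metric_value / target_value
    # Round up so the fleet is not undersized, then clamp to the group limits.
    return max(min_size, min(max_size, math.ceil(raw)))


# 4 instances at 80% average CPU with a 50% target: ceil(6.4) = 7 instances.
print(desired_capacity(4, 80.0, 50.0, min_size=2, max_size=10))  # 7
# 4 instances at 20% average CPU: ceil(1.6) = 2 instances (also the minimum).
print(desired_capacity(4, 20.0, 50.0, min_size=2, max_size=10))  # 2
```

Rounding up deliberately trades a little extra cost for headroom, which is usually the safer error when demand is rising.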

2.2 Key Concepts

The Key Concepts of the AWS Well-Architected Framework enable organizations to make informed architectural decisions. By organizing best practices into six pillars, the framework helps teams assess the pros and cons of each choice and understand the trade-offs involved. This approach keeps cloud architectures secure, efficient, and scalable while aligning them with business objectives.

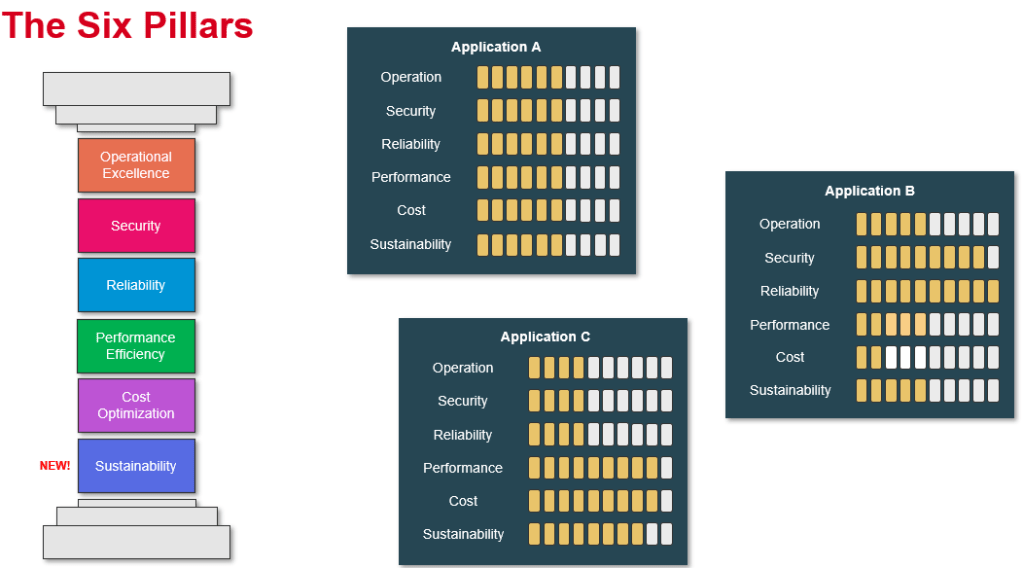

- Classified into Six Pillars: The AWS Well-Architected Framework is structured around six key pillars: Operational Excellence, Security, Reliability, Performance Efficiency, Cost Optimization, and Sustainability. Each pillar addresses critical aspects of cloud architecture, ensuring that workloads are secure, high-performing, cost-efficient, and sustainable. By following these structured principles, organizations can systematically improve their cloud infrastructure.

- Be aware of the pros and cons of decisions: Every architectural decision has trade-offs. For example, prioritizing performance efficiency might increase cloud costs, while focusing on cost optimization could impact latency. Awareness of these pros and cons helps organizations make informed decisions that align with their business priorities while balancing performance, security, and expenses.

- Understand the consequences of the trade-offs: Making one choice often means compromising in another area. For example, adding high availability through multi-region deployment improves resilience but increases operational complexity and costs. Understanding these trade-offs allows teams to make strategic, data-driven decisions that best serve their use cases. By considering long-term impacts, organizations can design systems that evolve efficiently without unnecessary risks or expenses.

By applying these key concepts, businesses can build well-architected cloud solutions that balance security, performance, cost, and operational efficiency.
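The multi-region trade-off above can be put into rough numbers. The Python sketch below assumes each redundant copy of a workload is independently available 99% of the time and that cost grows linearly with the number of copies. Both are simplifying assumptions (real failures are often correlated, and multi-Region designs add replication and data transfer costs), so treat the output as a back-of-the-envelope illustration, not an SLA calculation.

```python
def composite_availability(single: float, replicas: int) -> float:
    """Availability of N redundant copies, assuming their failures are independent."""
    # The workload is down only when every copy is down at the same time.
    return 1 - (1 - single) ** replicas


def downtime_hours_per_year(availability: float) -> float:
    return (1 - availability) * 365 * 24


# Hypothetical 99% availability per copy; cost assumed to grow linearly with copies.
for copies in (1, 2, 3):
    a = composite_availability(0.99, copies)
    print(f"replicas={copies}: availability {a:.6f}, "
          f"~{downtime_hours_per_year(a):.2f} h downtime/year, ~{copies}x cost")
```

Going from one to two copies removes most of the expected downtime; the third copy buys far less while adding the same increment of cost, which is exactly the kind of trade-off the framework asks teams to weigh.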

2.3 Best Practices

The Best Practices of the AWS Well-Architected Framework provide actionable guidelines to build secure, high-performing, resilient, and cost-efficient cloud workloads. By following these practices, organizations can optimize their infrastructure, automate operations, and make data-driven decisions to ensure long-term scalability and reliability.

- Classify Areas to be Covered for Each Pillar: Each Well-Architected pillar focuses on specific architectural concerns, such as security, reliability, cost optimization, and performance efficiency. Organizations can systematically evaluate their cloud workloads by classifying these areas and ensuring alignment with best practices.

- Set of questions for consideration in each area: Organizations should use structured guiding questions to assess adherence to AWS Well-Architected principles. These questions help identify potential risks, trade-offs, and areas for improvement. For example, in the Security pillar, teams may ask, “How do we manage access control and encryption?” This approach ensures a comprehensive evaluation of cloud architectures.

3. The Six Pillars

The Six Pillars of the AWS Well-Architected Framework provide a structured approach to building secure, scalable, and efficient cloud workloads. By following these principles, organizations can optimize performance, enhance security, reduce costs, and ensure long-term resilience in the cloud.

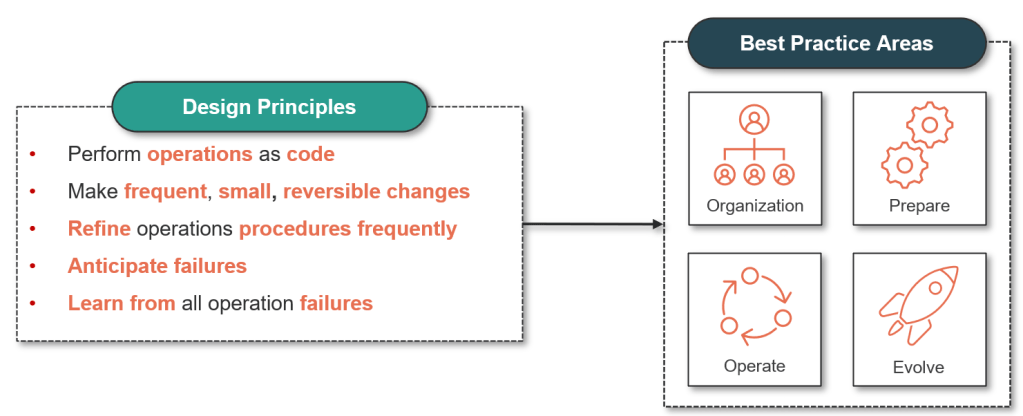

3.1 Pillar: Operational Excellence (OPS)

The Operational Excellence Pillar of the AWS Well-Architected Framework focuses on continuous improvement, automation, and monitoring to ensure workloads operate efficiently and reliably in the cloud. Its goal is to help organizations optimize operations, enhance agility, and respond to changes effectively while minimizing risks.

3.1.1 Design Principles

To achieve operational excellence, AWS recommends the following key design principles:

- Perform Operations as Code: Use Infrastructure as Code (IaC) tools like AWS CloudFormation and Terraform to automate deployments and avoid manual configurations.

- Make Frequent, Small, and Reversible Changes: Adopt CI/CD pipelines with AWS CodePipeline and AWS CodeBuild to deploy updates incrementally, reducing risk.

- Refine Operations Frequently: Optimize operations using automated testing, performance reviews, and monitoring dashboards.

- Anticipate Failure: Implement proactive monitoring with Amazon CloudWatch and AWS X-Ray to detect and respond to issues before they impact users.

- Learn from Operational Failures: Conduct post-mortems, feed the lessons back into procedures, and use AWS Fault Injection Simulator (FIS) to verify that improvements hold up under failure.
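As an illustration of performing operations as code, the Python sketch below builds a minimal AWS CloudFormation template (an encrypted, versioned S3 bucket) as a data structure and serializes it to JSON, so the infrastructure definition can be reviewed, versioned, and rolled back like any other code. The logical ID `AppDataBucket` and the tag values are hypothetical; in a real project the template would more likely be written directly in YAML or JSON, or generated with AWS CDK.

```python
import json

# A minimal CloudFormation template kept in version control ("operations as code").
# The logical ID and tag values are hypothetical placeholders.
template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Description": "Encrypted, versioned S3 bucket managed as code",
    "Resources": {
        "AppDataBucket": {
            "Type": "AWS::S3::Bucket",
            "Properties": {
                "VersioningConfiguration": {"Status": "Enabled"},
                "BucketEncryption": {
                    "ServerSideEncryptionConfiguration": [
                        {"ServerSideEncryptionByDefault": {"SSEAlgorithm": "aws:kms"}}
                    ]
                },
                "Tags": [{"Key": "team", "Value": "platform"}],
            },
        }
    },
}

# Serialize deterministically so reviews show small, readable diffs.
rendered = json.dumps(template, indent=2, sort_keys=True)
print(rendered)
```

The rendered file could then be deployed with a command such as `aws cloudformation deploy --template-file template.json --stack-name app-data`, where the file and stack names are placeholders.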

3.1.2 Best Practices

The Operational Excellence Pillar is structured into four key best practice areas:

- Organization: Establishing Strong Operational Foundations

  - Define roles and responsibilities for operational excellence.

  - Use AWS Organizations to manage accounts and governance.

  - Implement AWS Identity and Access Management (IAM) for secure and controlled access.

- Prepare: Readiness for Efficient Operations

  - Standardize infrastructure deployment with AWS CloudFormation and AWS CDK.

  - Use AWS Systems Manager for centralized visibility and automation of operations.

  - Develop incident response playbooks and automate remediation workflows.

- Operate: Running Workloads Effectively

  - Monitor health and performance using Amazon CloudWatch and AWS X-Ray.

  - Use AWS Auto Scaling and Amazon EventBridge to automate responses to workload changes.

  - Implement AWS Config to track configuration changes and ensure compliance.

- Evolve: Continuous Improvement and Innovation

  - Conduct AWS Well-Architected Reviews regularly to identify operational gaps.

  - Simulate real-world failure scenarios with AWS Fault Injection Simulator (FIS).

  - Use AWS Trusted Advisor to optimize security, cost, and performance.
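Automated responses with Amazon EventBridge hinge on event patterns: a rule forwards an event to its targets (for example, a Lambda function or a Systems Manager Automation runbook) only when the event matches the rule's pattern. The Python sketch below mimics only the exact-match subset of that matching so patterns can be reasoned about locally. Real EventBridge patterns also support prefix, numeric, anything-but, and exists filters, and EventBridge's TestEventPattern API is the authoritative way to check a pattern. The function name `matches` is hypothetical; the sample event mirrors the shape of an EC2 instance state-change notification.

```python
from typing import Any


def matches(pattern: dict[str, Any], event: dict[str, Any]) -> bool:
    """Exact-value subset of EventBridge pattern matching (no prefix, numeric, or anything-but)."""
    for key, expected in pattern.items():
        if key not in event:
            return False  # Every field named in the pattern must be present.
        actual = event[key]
        if isinstance(expected, dict):
            # Nested pattern: the event field must be an object that matches recursively.
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        elif actual not in expected:
            return False  # Leaf: the value must equal one of the listed alternatives.
    return True


# Pattern for EC2 instances that stop or terminate, e.g. to trigger a remediation runbook.
pattern = {
    "source": ["aws.ec2"],
    "detail-type": ["EC2 Instance State-change Notification"],
    "detail": {"state": ["stopped", "terminated"]},
}

event = {
    "source": "aws.ec2",
    "detail-type": "EC2 Instance State-change Notification",
    "detail": {"instance-id": "i-0123456789abcdef0", "state": "stopped"},
}

print(matches(pattern, event))  # True
```

Fields in the event that the pattern does not mention (such as `instance-id`) are ignored, which is why patterns stay short while events can be large.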

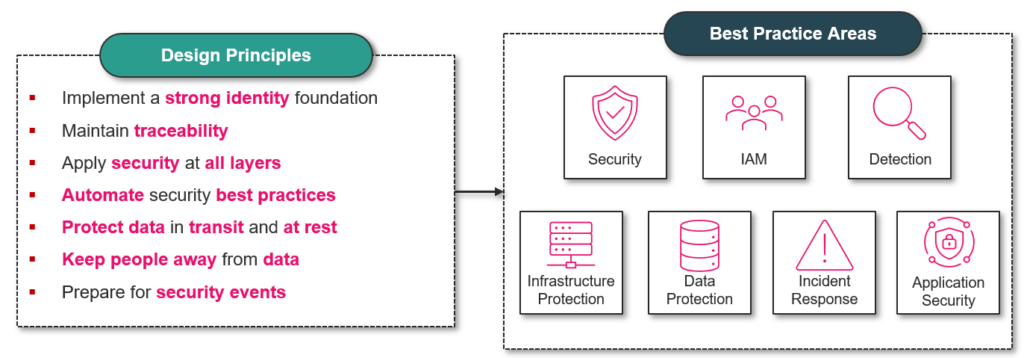

3.2 Pillar: Security (SEC)

The Security Pillar of the AWS Well-Architected Framework focuses on protecting data, systems, and assets by implementing security best practices and risk management strategies. As a result, organizations can ensure confidentiality, integrity, and availability while maintaining secure cloud operations.

Furthermore, adopting a strong security foundation helps businesses comply with regulations, mitigate threats, and establish resilient protection mechanisms. By following these principles, organizations can enhance security without compromising performance or agility.

3.2.1 Design Principles

AWS defines seven key design principles to enhance security, minimize risks, and build a resilient cloud environment:

- Implement a Strong Identity Foundation

  - Enforce least-privilege access using AWS IAM roles, policies, and permissions.

  - Enable Multi-Factor Authentication (MFA) for all privileged users.

  - Use AWS IAM Identity Center (successor to AWS SSO) to centralize identity management.

- Maintain Traceability

  - Continuously monitor security events using AWS CloudTrail, AWS Config, and Amazon CloudWatch Logs.

  - Detect anomalies and potential threats with Amazon GuardDuty, and aggregate findings in AWS Security Hub.

  - Centralize security logs for auditing and compliance purposes.

- Apply Security at All Layers

  - Secure networks with AWS WAF, AWS Shield, and AWS Network Firewall.

  - Isolate workloads with Amazon VPC, security groups, and network ACLs.

  - Protect compute resources against malware with Amazon GuardDuty Malware Protection.

- Automate Security Best Practices

  - Enforce compliance using AWS Config, AWS Security Hub, and AWS Lambda.

  - Automate patch management with AWS Systems Manager Patch Manager.

  - Scan for vulnerabilities using Amazon Inspector.

- Protect Data in Transit and at Rest

  - Encrypt sensitive data at rest with AWS Key Management Service (KMS).

  - Secure data in transit with TLS, and manage SSL/TLS certificates with AWS Certificate Manager (ACM).

  - Use Amazon Macie to discover, classify, and monitor sensitive data.

- Keep People Away from Data

  - Minimize direct human access using AWS Systems Manager Session Manager.

  - Implement automated key rotation and restricted access policies with AWS KMS and IAM roles.

  - Store and manage credentials securely using AWS Secrets Manager.

- Prepare for Security Events

  - Develop incident response playbooks to handle security incidents efficiently.

  - Automate security response workflows with AWS Lambda, Amazon EventBridge, and AWS Systems Manager.

  - Conduct security drills and simulations, for example with AWS Fault Injection Simulator (FIS), to test incident response readiness.
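Least-privilege access starts with narrowly scoped IAM policy documents. The Python sketch below shows such a policy (read-only access to one S3 prefix; the bucket name is hypothetical) and a small, hypothetical lint function that flags `Allow` statements granting wildcard actions or resources. It is a teaching aid under simplified assumptions: it ignores `NotAction`, conditions, and partial wildcards such as `s3:Get*`, and it is not a replacement for IAM Access Analyzer policy validation.

```python
from typing import Any

# Least-privilege example: read-only access to one prefix of one (hypothetical) bucket.
policy: dict[str, Any] = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-reports-bucket/reports/*",
        }
    ],
}


def _as_list(value: Any) -> list[Any]:
    # IAM accepts a single value or a list for Statement, Action, and Resource.
    return value if isinstance(value, list) else [value]


def overly_broad(doc: dict[str, Any]) -> list[str]:
    """Flag Allow statements that grant all actions ("*" or "service:*") or all resources."""
    findings = []
    for i, stmt in enumerate(_as_list(doc.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue
        actions = _as_list(stmt.get("Action", []))
        resources = _as_list(stmt.get("Resource", []))
        if any(a == "*" or a.endswith(":*") for a in actions):
            findings.append(f"statement {i}: wildcard action")
        if "*" in resources:
            findings.append(f"statement {i}: wildcard resource")
    return findings


print(overly_broad(policy))  # []
print(overly_broad({"Version": "2012-10-17",
                    "Statement": {"Effect": "Allow", "Action": "s3:*", "Resource": "*"}}))
# ['statement 0: wildcard action', 'statement 0: wildcard resource']
```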

3.2.2 Best Practices

The Security Pillar of the AWS Well-Architected Framework is divided into seven key best practice areas. By implementing these practices, organizations can significantly enhance cloud security, effectively automate threat detection, and ensure compliance with industry standards.

- Security Foundation

  - Define security policies and compliance requirements.

  - Use AWS Organizations and AWS Control Tower for governance.

- Identity and Access Management (IAM)

  - Enforce least-privilege access with IAM roles and policies to reduce risk.

  - Require MFA for all privileged accounts.

- Detection and Monitoring

  - Enable AWS CloudTrail to track API activity and improve visibility.

  - Use Amazon GuardDuty for continuous threat detection.

- Infrastructure Protection

  - Use AWS WAF, AWS Shield, and AWS Network Firewall to protect against unauthorized access and attacks.

  - Isolate workloads with Amazon VPC and security groups.

- Data Protection

  - Encrypt data at rest with AWS KMS and in transit with TLS.

  - Store credentials in AWS Secrets Manager.

- Incident Response

  - Develop playbooks and automate response workflows to strengthen readiness.

  - Conduct security drills, for example with AWS Fault Injection Simulator (FIS).

- Application Security

  - Integrate secure coding practices into development to minimize vulnerabilities.

  - Use Amazon CodeGuru to detect code-level risks and AWS WAF to filter malicious web requests.
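Detection often starts with questions asked of CloudTrail data, such as “is anyone using the root user?”. The Python sketch below parses an abbreviated CloudTrail log file (real records contain many more fields) and reports calls made directly with root credentials, skipping calls an AWS service made on the root user's behalf. The function name is hypothetical, and in production this check would more typically be a CloudWatch Logs metric filter with an alarm, or left to Amazon GuardDuty and AWS Security Hub findings.

```python
import json

# Two abbreviated CloudTrail records (real records carry many more fields).
log_file = json.dumps({
    "Records": [
        {"eventSource": "signin.amazonaws.com", "eventName": "ConsoleLogin",
         "userIdentity": {"type": "Root"}, "sourceIPAddress": "203.0.113.10"},
        {"eventSource": "s3.amazonaws.com", "eventName": "GetObject",
         "userIdentity": {"type": "AssumedRole"}, "sourceIPAddress": "198.51.100.7"},
    ]
})


def root_activity(raw_log: str) -> list[tuple[str, str]]:
    """Return (eventName, sourceIPAddress) for each call made directly with root credentials."""
    findings = []
    for record in json.loads(raw_log).get("Records", []):
        identity = record.get("userIdentity", {})
        # Skip calls an AWS service made on the root user's behalf ("invokedBy").
        if identity.get("type") == "Root" and "invokedBy" not in identity:
            findings.append((record.get("eventName", "?"),
                             record.get("sourceIPAddress", "?")))
    return findings


print(root_activity(log_file))  # [('ConsoleLogin', '203.0.113.10')]
```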

3.3 Pillar: Reliability (REL)

The Reliability Pillar of the AWS Well-Architected Framework focuses on ensuring workloads perform consistently despite failures, disruptions, or demand spikes. Organizations can increase system resilience while minimizing downtime by implementing best practices in architecture, automation, and fault tolerance.

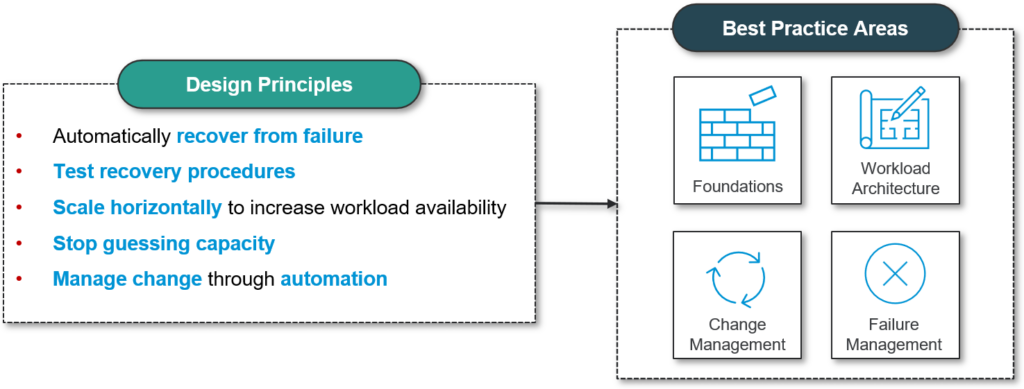

3.3.1 Design Principles

AWS defines five key design principles to enhance reliability:

- Automatically Recover from Failure

  - Detect failures and trigger automated recovery actions to minimize downtime.

  - For example, AWS Auto Scaling, Amazon Route 53 health checks, and AWS Lambda can be used to handle disruptions.

- Test Recovery Procedures

  - Instead of waiting for real failures, simulate outages with AWS Fault Injection Simulator (FIS).

  - Automate recovery testing to validate system resilience.

- Scale Horizontally to Increase Workload Availability

  - Rather than relying on a single instance, distribute workloads across multiple smaller resources.

  - For instance, use Amazon EC2 Auto Scaling, Elastic Load Balancing, and Multi-AZ deployments.

- Stop Guessing Capacity

  - Monitor demand and scale accordingly to prevent over-provisioning or under-provisioning.

  - Use AWS Auto Scaling, Amazon CloudWatch, and AWS Compute Optimizer to do this.

- Manage Change Through Automation

  - Automate deployments using AWS CloudFormation or AWS CDK to reduce human error.

  - Implement CI/CD pipelines with AWS CodePipeline and AWS CodeDeploy.
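“Automatically recover from failure” applies inside application code too. A common technique for transient errors such as throttling is to retry with capped exponential backoff and jitter. The Python sketch below implements the “full jitter” variant; `TransientError` and `retry_with_backoff` are hypothetical names, and the delays are illustrative defaults. AWS SDKs already retry many throttling and transient errors on their own, so a loop like this mainly suits calls to your own services or other dependencies.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for retryable failures such as throttling or timeouts."""


def retry_with_backoff(call: Callable[[], T], max_attempts: int = 5,
                       base_delay: float = 0.1, max_delay: float = 5.0,
                       sleep: Callable[[float], None] = time.sleep,
                       rng: Optional[random.Random] = None) -> T:
    """Retry `call` on TransientError using capped exponential backoff with full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    if rng is None:
        rng = random.Random()
    for attempt in range(max_attempts):
        try:
            return call()
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # Out of attempts: let the caller (or an alarm) see the failure.
            # Full jitter: wait a random time between 0 and an exponentially growing cap,
            # so many clients retrying at once do not hit the service in lockstep.
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(rng.uniform(0, cap))
    raise AssertionError("unreachable")


# Usage: a dependency that is throttled twice, then succeeds.
calls = {"count": 0}


def flaky_dependency() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TransientError("throttled")
    return "ok"


recorded_delays: list[float] = []
result = retry_with_backoff(flaky_dependency, sleep=recorded_delays.append,
                            rng=random.Random(42))
print(result, calls["count"], len(recorded_delays))  # ok 3 2
```

Injecting `sleep` and `rng` keeps the function deterministic in tests, and capping the number of attempts makes a persistent failure surface quickly instead of being retried indefinitely.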

3.3.2 Best Practices

The Reliability Pillar is structured into four key best practice areas:

- Foundations

  - Understand and manage service quotas and constraints before they limit the workload.

  - Use AWS Trusted Advisor and Service Quotas to monitor usage against limits.

- Workload Architecture

  - Design workloads with Multi-AZ and, where required, Multi-Region deployments for redundancy.

  - Distribute traffic using Elastic Load Balancing (ELB).

- Change Management

  - Automate infrastructure updates with AWS CloudFormation to improve reliability.

  - Use AWS CodeDeploy to minimize downtime during deployments.

- Failure Management

  - Prepare proactively by implementing backup and disaster recovery plans.

  - Test failure scenarios using AWS Fault Injection Simulator (FIS).
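Monitoring service limits comes down to comparing current usage with each quota and warning before the limit is reached. The Python sketch below performs that comparison for a list of hypothetical usage records; the 80% threshold, the `QuotaUsage` type, and the quota names and values are assumptions for illustration. In practice, the limits could come from the Service Quotas service and the usage from CloudWatch metrics or Trusted Advisor checks.

```python
from dataclasses import dataclass


@dataclass
class QuotaUsage:
    service: str
    quota: str
    used: float
    limit: float


def near_limit(usages: list[QuotaUsage], threshold: float = 0.8) -> list[str]:
    """Return a warning for each quota whose utilization is at or above `threshold`."""
    warnings = []
    for u in usages:
        # Treat a zero limit as fully used so it is always reported.
        ratio = u.used / u.limit if u.limit else 1.0
        if ratio >= threshold:
            warnings.append(f"{u.service}/{u.quota}: {ratio:.0%} used")
    return warnings


# Hypothetical usage snapshot.
usages = [
    QuotaUsage("ec2", "on-demand-standard-vcpus", used=920, limit=1024),
    QuotaUsage("lambda", "concurrent-executions", used=300, limit=1000),
]
print(near_limit(usages))  # ['ec2/on-demand-standard-vcpus: 90% used']
```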

3.4 Pillar: Performance Efficiency (PERF)

The Performance Efficiency pillar of the AWS Well-Architected Framework focuses on using computing resources efficiently to meet system requirements, even as demand fluctuates over time. By optimizing performance, organizations can maintain high responsiveness while minimizing costs.

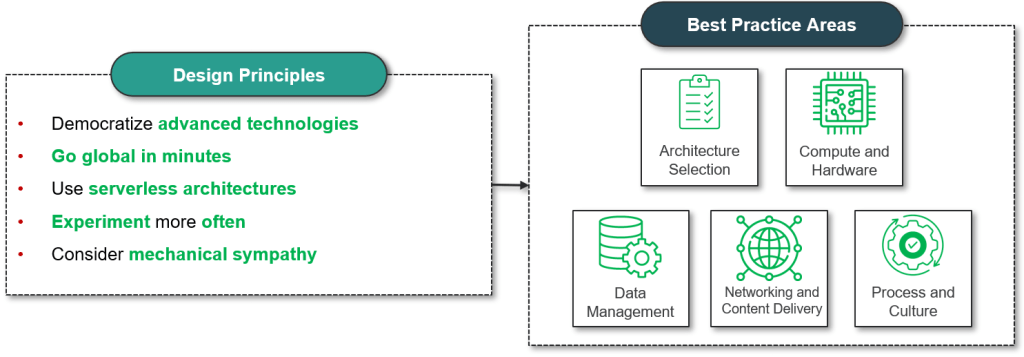

3.4.1 Design Principles

AWS outlines several key design principles to enhance performance efficiency:

- Democratize Advanced Technologies: AWS enables easy access to cutting-edge technologies such as AI/ML, Big Data analytics, and serverless computing. This allows organizations to focus on innovation rather than managing complex infrastructure.

- Go Global in Minutes: With AWS’s global infrastructure, applications can be deployed across multiple Regions and Availability Zones in minutes, reducing latency and improving user experience worldwide.

- Use Serverless Architectures: AWS services such as Lambda, Fargate, and DynamoDB remove the need to manage servers. They also scale automatically with workload demand, improving efficiency and reducing costs.

- Experiment More Often: AWS supports rapid experimentation with cost-effective solutions such as Auto Scaling, Spot Instances, and containerized services, enabling continuous optimization and innovation.

- Consider Mechanical Sympathy: Choosing the right technology for specific workloads ensures maximum efficiency. For example:

  - Lambda for event-driven microservices.

  - EC2 instances with GPUs for Machine Learning applications.

  - Aurora or DynamoDB for high-performance databases.
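To illustrate “Lambda for event-driven microservices”, here is a minimal Python handler for a function triggered by an Amazon SQS queue. It processes each message independently and returns the IDs of failed messages in the `batchItemFailures` format, so only those messages are retried; this partial-batch behavior assumes the event source mapping has `ReportBatchItemFailures` enabled. The order-processing logic and message contents are hypothetical.

```python
import json
from typing import Any


def process_order(order: dict[str, Any]) -> None:
    """Hypothetical business logic: reject orders without an ID."""
    if "order_id" not in order:
        raise ValueError("missing order_id")


def handler(event: dict[str, Any], context: Any) -> dict[str, Any]:
    """Lambda entry point for an SQS event source mapping."""
    failures = []
    for record in event.get("Records", []):
        try:
            process_order(json.loads(record["body"]))
        except (ValueError, KeyError):
            # Report only this message as failed so just it returns to the queue for retry.
            failures.append({"itemIdentifier": record["messageId"]})
    return {"batchItemFailures": failures}


# Local invocation with a hand-built event; `context` is unused here.
sample_event = {
    "Records": [
        {"messageId": "m-1", "body": json.dumps({"order_id": 42})},
        {"messageId": "m-2", "body": json.dumps({"sku": "abc"})},
    ]
}
print(handler(sample_event, None))  # {'batchItemFailures': [{'itemIdentifier': 'm-2'}]}
```

Because the handler is a plain function, it can be exercised locally with a hand-built event, as in the last lines, before it is wired to a real queue.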

3.4.2 Best Practices

AWS recommends five key areas to optimize performance efficiency:

- Architecture Selection

  - Use well-architected design patterns to improve scalability and resilience.

  - Leverage microservices and event-driven architectures for flexibility.

  - Choose databases based on workload requirements, such as relational (Aurora, RDS) vs. NoSQL (DynamoDB, ElastiCache).

- Compute and Hardware Optimization

  - Choose the right compute option and instance type for each workload (EC2, Lambda, ECS, EKS).

  - Use AWS Compute Optimizer for right-sizing recommendations.

  - Consider AWS Graviton instances for better price performance.

- Data Management

  - Optimize storage by using S3 Intelligent-Tiering to automatically move data to the most cost-effective access tier.

  - Improve database efficiency with Aurora, DynamoDB, and ElastiCache.

  - Use partitioning and indexing strategies for optimized data retrieval.

- Networking and Content Delivery

  - Implement Amazon CloudFront to accelerate content delivery and reduce latency.

  - Use AWS Global Accelerator for faster global connectivity.

  - Use VPC endpoints to keep traffic to AWS services such as S3 or DynamoDB on the AWS network, reducing data transfer costs and improving performance.

- Process and Culture Optimization

  - Regularly conduct load testing using tools such as Apache JMeter or the Distributed Load Testing on AWS solution.

  - Enable AWS X-Ray to analyze and improve application performance.

  - Foster a culture of continuous monitoring and automation through Amazon CloudWatch, AWS Auto Scaling, and AWS Trusted Advisor.
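Load tests are most useful when their results are read as percentiles rather than averages, because a few slow requests can dominate user experience. The Python sketch below computes nearest-rank percentiles over a hypothetical set of response times; the data and the function name are illustrative. Amazon CloudWatch can also report percentile statistics such as p99 for metrics that support them.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of values at or below it."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need samples and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p / 100 * len(ordered))  # 1-based rank into the sorted samples
    return ordered[rank - 1]


# Hypothetical response times (ms) from one load-test run.
latencies_ms = [112, 98, 105, 430, 101, 99, 120, 97, 1020, 103]
for p in (50, 95, 99):
    print(f"p{p}: {percentile(latencies_ms, p)} ms")
```

Here the median is 103 ms, but p95 and p99 are both 1020 ms: the tail is driven by a single outlier that the average of 228.5 ms would blur.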

3.5 Pillar: Cost Optimization (COST)

The Cost Optimization pillar in the AWS Well-Architected Framework focuses on running workloads in the most cost-efficient manner while still meeting business and performance requirements. By applying the right strategies, organizations can reduce waste, maximize return on investment, and align cloud spending with business goals.

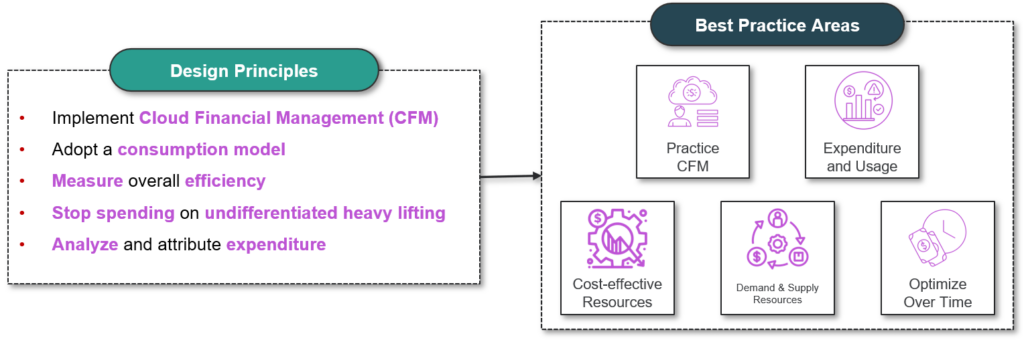

3.5.1 Design Principles

AWS defines several key design principles to help businesses optimize costs effectively:

- Implement Cloud Financial Management: A strong cloud financial management (CFM) strategy ensures that organizations treat cost as a business metric rather than an afterthought. This involves budgeting, forecasting, and monitoring cloud spending to align with business objectives.

- Adopt a Consumption-Based Model: AWS enables a pay-as-you-go approach, allowing companies to pay only for what they use. By leveraging Auto Scaling and On-Demand resources, businesses can avoid over-provisioning and unnecessary costs while maintaining performance.

- Measure Overall Efficiency: By continuously tracking the cost-performance ratio, organizations can determine whether they are getting the most value from their AWS investments. AWS provides cost allocation tags and reports to gain insights into expenditure trends.

- Optimize Resources to Align with Business Needs: Choosing the right AWS services and pricing models is crucial. Whether it is Spot Instances for interruptible, short-term tasks or Reserved Instances for steady workloads, businesses should align their infrastructure with usage patterns.

- Automate Cost Optimization: Automation helps eliminate manual errors and keeps spending in check. For example, AWS Auto Scaling, AWS Budgets, and AWS Cost Anomaly Detection enable proactive cost management, so businesses can optimize spending while maintaining operational effectiveness.
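Choosing between On-Demand pricing and a commitment such as Reserved Instances or a Savings Plan comes down to utilization: a commitment is billed every hour whether or not the capacity is used. The Python sketch below computes the break-even utilization and compares monthly costs at two usage levels. Both hourly rates are hypothetical placeholders, not AWS prices, and the model ignores details such as upfront payment options and the fact that Savings Plans apply to an hourly spend commitment rather than one specific instance.

```python
HOURS_PER_MONTH = 730  # Common approximation: 24 * 365 / 12.

# Hypothetical rates for the same capacity; real prices vary by Region, type, and term.
ON_DEMAND_RATE = 0.10  # $ per hour, billed only for hours used
COMMITTED_RATE = 0.06  # $ per hour, billed for every hour of the term


def breakeven_utilization(on_demand_rate: float, committed_rate: float) -> float:
    """Fraction of hours the capacity must run before the commitment is cheaper."""
    return committed_rate / on_demand_rate


def monthly_costs(hours_used: float) -> tuple[float, float]:
    """Return (on-demand cost, committed cost) for one month."""
    on_demand = ON_DEMAND_RATE * hours_used
    committed = COMMITTED_RATE * HOURS_PER_MONTH  # Paid whether used or not.
    return on_demand, committed


print(f"break-even utilization: {breakeven_utilization(ON_DEMAND_RATE, COMMITTED_RATE):.0%}")
for hours in (200, 730):
    od, committed = monthly_costs(hours)
    print(f"{hours} h used: on-demand ${od:.2f} vs committed ${committed:.2f}")
```

With these placeholder rates, the commitment only pays off if the capacity runs more than 60% of the time, which is why commitments suit steady workloads and On-Demand or Spot capacity suits variable ones.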

3.5.2 Best Practices

AWS recommends focusing on five key areas to ensure cost-effective cloud operations:

- Practice Cloud Financial Management (CFM)

  - Establish a FinOps (financial operations) culture to integrate cost awareness across teams.

  - Use AWS Cost and Usage Reports (CUR) for granular insights into cloud expenditure.

  - Set up AWS Budgets to track spending and alert before costs overrun.

- Improve Expenditure and Usage Awareness

  - Implement cost allocation tags to attribute spending and identify high-cost services.

  - Use AWS Cost Explorer to analyze historical data and forecast future expenses.

  - Use AWS Trusted Advisor to receive cost-saving recommendations.

- Choose Cost-Effective Resources

  - Select the right instance types based on workload requirements (e.g., Graviton instances for lower-cost compute).

  - Leverage AWS Compute Optimizer to right-size EC2 instances and avoid paying for underutilized capacity.

  - Optimize storage costs by using S3 Intelligent-Tiering and cleaning up unneeded EBS volume snapshots.

- Manage Demand and Supply of Resources

  - Implement Auto Scaling to adjust compute resources based on traffic fluctuations.

  - Use Spot Instances for fault-tolerant, interruption-tolerant workloads to reduce compute costs significantly.

  - Use Savings Plans and Reserved Instances for predictable workloads to lower long-term expenses.

- Optimize Costs Over Time

  - Regularly conduct cost reviews and audits to identify new savings opportunities.

  - Continuously monitor spending with AWS Cost Anomaly Detection to catch unexpected spikes.

  - Adapt cost optimization strategies as business needs evolve.
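Cost allocation tags only pay off if spend is actually grouped by them. The Python sketch below aggregates simplified, hypothetical billing line items by a tag key and keeps untagged spend in its own bucket, so gaps in tagging stay visible. The record layout is an assumption and does not match the Cost and Usage Report column names; also note that user-defined cost allocation tags must be activated in the Billing console before they appear in AWS cost reports.

```python
from collections import defaultdict
from typing import Any

# Hypothetical, simplified line items (not the real Cost and Usage Report columns).
line_items: list[dict[str, Any]] = [
    {"service": "AmazonEC2", "cost": 120.0, "tags": {"team": "checkout"}},
    {"service": "AmazonS3", "cost": 15.5, "tags": {"team": "search"}},
    {"service": "AmazonEC2", "cost": 80.0, "tags": {}},
    {"service": "AmazonRDS", "cost": 64.5, "tags": {"team": "checkout"}},
]


def cost_by_tag(items: list[dict[str, Any]], tag_key: str) -> dict[str, float]:
    """Sum cost per value of `tag_key`, grouping untagged spend explicitly."""
    totals = defaultdict(float)
    for item in items:
        # Untagged spend gets its own bucket instead of silently disappearing.
        owner = item["tags"].get(tag_key, "(untagged)")
        totals[owner] += item["cost"]
    return dict(totals)


print(cost_by_tag(line_items, "team"))
# {'checkout': 184.5, 'search': 15.5, '(untagged)': 80.0}
```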

3.6 Pillar: Sustainability (SUS)

The Sustainability pillar in the AWS Well-Architected Framework emphasizes reducing the environmental impact of cloud workloads. By optimizing resource usage, adopting energy-efficient architectures, and leveraging AWS’s sustainability-focused services, organizations can minimize their carbon footprint while maintaining high performance.

3.6.1 Design Principles

AWS provides several key design principles to help organizations build sustainable and environmentally responsible cloud architectures.

- Understand Your Impact: Organizations first need to measure and analyze the environmental impact of their workloads. Monitoring energy consumption, carbon emissions, and resource utilization provides the foundation for data-driven sustainability improvements.

- Establish Sustainability Goals: Clear sustainability goals align cloud strategies with long-term environmental objectives. Defining measurable KPIs, tracking carbon footprints, and optimizing workloads against sustainability benchmarks drive continuous improvement.

- Maximize Utilization: Increasing resource efficiency reduces waste. Right-sizing instances, using Auto Scaling, and consolidating workloads prevent over-provisioning, thereby lowering energy consumption.

- Anticipate and Adopt More Efficient Hardware and Software: AWS frequently introduces more energy-efficient hardware and software. Adopting Arm-based AWS Graviton instances and optimized machine learning frameworks helps reduce energy use while maintaining performance.

- Use Managed Services: AWS managed services are designed for high efficiency and optimized resource allocation. Using AWS Lambda, Amazon DynamoDB, and AWS Fargate removes the need to run underutilized infrastructure, resulting in a lower environmental footprint.

- Reduce the Downstream Impact of Cloud Workloads: Designing workloads to minimize the resources they require on end-user devices and networks improves overall sustainability. Optimizing data transfer, reducing client-side processing requirements, and using edge computing help cloud applications remain efficient while consuming less energy.
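Consolidating workloads to maximize utilization is essentially a bin-packing problem. The Python sketch below applies first-fit decreasing, a classic greedy heuristic (not an AWS feature), to pack hypothetical per-service CPU loads onto as few hosts as possible. The loads and host size are made up, and real placement would also consider memory, availability requirements, and headroom for spikes.

```python
def first_fit_decreasing(loads: list[float], capacity: float) -> list[list[float]]:
    """Pack loads (e.g., average vCPUs) onto as few hosts as possible (greedy heuristic)."""
    hosts: list[list[float]] = []
    for load in sorted(loads, reverse=True):
        if load > capacity:
            raise ValueError(f"load {load} exceeds host capacity {capacity}")
        for host in hosts:
            if sum(host) + load <= capacity:
                host.append(load)  # Reuse the first existing host with enough room.
                break
        else:
            hosts.append([load])  # No host fits: start a new one.
    return hosts


# Hypothetical: eight mostly idle services, each currently on its own 4-vCPU host.
loads = [1.5, 0.5, 2.0, 0.75, 1.0, 0.25, 1.25, 0.5]
packed = first_fit_decreasing(loads, capacity=4.0)
print(f"{len(loads)} hosts -> {len(packed)} hosts: {packed}")
```

Here eight hosts collapse into two. Passing a `capacity` below the physical host size (for example, 80% of it) is a simple way to keep headroom.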

3.6.2 Best Practices

AWS recommends focusing on six key areas to ensure sustainable cloud operations:

- Region Selection:

  - Choose AWS Regions near Amazon renewable energy projects, or where the grid has a lower carbon intensity, to reduce carbon emissions.

  - Use published sustainability and grid carbon-intensity data to compare Regions and select lower-impact locations.

  - Use edge computing and content delivery networks (CDNs) to minimize energy-intensive data transfers.

- Align Resources to Demand:

  - Implement Auto Scaling and Spot Instances to adjust compute resources dynamically.

  - Use serverless architectures such as AWS Lambda to avoid maintaining idle infrastructure.

  - Optimize database performance by selecting the appropriate storage class and indexing strategies.

- Optimize Software and Architecture:

  - Design event-driven architectures to avoid unnecessary processing and improve efficiency.

  - Use lazy loading, caching, and efficient algorithms to minimize compute usage.

  - Optimize serverless applications by reducing execution time and memory usage.

- Improve Data Management Efficiency:

  - Use Amazon S3 Intelligent-Tiering to reduce storage waste by automatically optimizing storage costs and energy usage.

  - Compress, deduplicate, and archive data to minimize processing power and storage footprint.

  - Implement data retention policies to ensure that only necessary data is stored long-term.

- Use Energy-Efficient Hardware and Services:

  - Leverage AWS Graviton processors, which use less energy than comparable x86-based instances for the same performance.

  - Choose managed services such as DynamoDB, RDS, and Aurora, which are optimized for efficient resource use.

  - Use AWS Nitro System-based instances to improve security and sustainability.

- Promote a Sustainability-Focused Process and Culture:

  - Establish company-wide sustainability objectives to ensure long-term environmental responsibility.

  - Use Amazon CloudWatch and the AWS Customer Carbon Footprint Tool to track resource usage and carbon footprint.

  - Educate teams on green software development practices, such as optimizing queries and reducing redundant computations.
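A data retention policy can be expressed as an Amazon S3 Lifecycle configuration. The Python sketch below builds one as a dictionary in the shape accepted by boto3's `put_bucket_lifecycle_configuration`, archiving objects under a `logs/` prefix after 30 days and deleting them after a year; the prefix, rule ID, and day counts are hypothetical retention choices. A small helper summarizes the retention period per prefix so the policy can be checked in tests before it is applied.

```python
# Lifecycle configuration in the shape expected by boto3's
# put_bucket_lifecycle_configuration(LifecycleConfiguration=...).
# The prefix, rule ID, and day counts are hypothetical retention choices.
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "archive-then-expire-logs",
            "Filter": {"Prefix": "logs/"},
            "Status": "Enabled",
            # Move rarely read logs to an archival storage class after 30 days...
            "Transitions": [{"Days": 30, "StorageClass": "GLACIER"}],
            # ...and delete them once the 365-day retention period ends.
            "Expiration": {"Days": 365},
        }
    ]
}


def retention_days(config: dict) -> dict[str, int]:
    """Map each enabled rule's prefix to the number of days before objects expire."""
    return {
        rule["Filter"].get("Prefix", ""): rule["Expiration"]["Days"]
        for rule in config["Rules"]
        if rule["Status"] == "Enabled" and "Expiration" in rule
    }


print(retention_days(lifecycle_configuration))  # {'logs/': 365}
```

Applying it would look like `boto3.client("s3").put_bucket_lifecycle_configuration(Bucket="example-bucket", LifecycleConfiguration=lifecycle_configuration)`, with the bucket name as a placeholder. The `GLACIER` storage class corresponds to S3 Glacier Flexible Retrieval.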

Conclusion

The AWS Well-Architected Framework provides a structured approach to designing, building, and operating secure, high-performing, resilient, and efficient cloud workloads. By following its six key pillars—Operational Excellence, Security, Reliability, Performance Efficiency, Cost Optimization, and Sustainability—organizations can develop architectures that align with best practices while continuously improving over time.

In essence, this framework enables businesses to identify risks, optimize resources, enhance security, and ensure long-term sustainability in the cloud. By integrating these principles into their cloud strategy, organizations can achieve greater scalability, agility, and cost-effectiveness while delivering high-value solutions.

Ultimately, the AWS Well-Architected Framework is not just a set of guidelines but a continuous process that empowers organizations to innovate, adapt, and thrive in the cloud era.
