Introduction: Terraform, an infrastructure-as-code (IaC) tool developed by HashiCorp, lets you define and provision infrastructure declaratively. A critical part of working with Terraform is managing its state, the record that maps your configuration to the real resources it has created. As projects grow in complexity and more team members work on them, effective state management becomes essential. In this blog post, we will explore strategies for managing Terraform state, including collaboration and scaling techniques, along with an example Terraform code snippet.

Understanding Terraform State: Terraform state records what Terraform believes currently exists. It maps each resource in your configuration to a real-world object and stores metadata such as resource IDs, attribute values, and dependencies. By default it is written to a local file named terraform.tfstate. Terraform compares this state with your configuration to plan changes, so that repeated applies are idempotent and only the differences are acted on.

Collaboration Strategies for Terraform State: When working on a Terraform project with multiple team members, collaborating on the state becomes crucial. Here are two common strategies for collaborative state management:

Shared State with a Remote Backend: In this approach, the state lives in a remote backend, such as Amazon S3, Azure Blob Storage, or HashiCorp Consul, rather than on each team member's machine. The remote backend serves as a centralized location for storing and sharing the state. Team members do not copy the state around by hand: every plan or apply reads the latest state from the backend and writes the updated state back when it finishes. This strategy allows for collaboration while ensuring a single source of truth for the state.

Remote State with Locking Mechanism: With this strategy, the Terraform state is stored remotely and protected by a lock. Before any operation that could write state, Terraform acquires the lock automatically, and other runs against the same state are blocked until it is released. This ensures that only one run can modify the state at a given time, preventing conflicting writes. Locking is a feature of the backend rather than a separate tool: for example, the Consul backend supports it directly, and the S3 backend has traditionally paired the bucket with an Amazon DynamoDB table. Not every backend supports locking, so check the documentation for the one you choose.
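The locking setup described above could look roughly like the following sketch for the S3 backend. The bucket and table names are hypothetical placeholders, and the lock table is assumed to be created ahead of time (typically from a small, separate bootstrap configuration, since the backend must exist before it can be used). Newer Terraform releases also offer S3-native locking, so check the backend documentation for your version.

```hcl
# Backend configuration: state in S3, lock held in a DynamoDB table.
# All names below are placeholders (assumptions), not real resources.
terraform {
  backend "s3" {
    bucket         = "example-terraform-state"   # hypothetical bucket name
    key            = "network/terraform.tfstate" # path of the state object
    region         = "us-east-1"
    dynamodb_table = "example-terraform-locks"   # hypothetical lock table
    encrypt        = true                        # server-side encryption of the state object
  }
}

# Lock table, usually managed in a separate bootstrap configuration.
# The S3 backend expects a string partition key named "LockID".
resource "aws_dynamodb_table" "terraform_locks" {
  name         = "example-terraform-locks"
  billing_mode = "PAY_PER_REQUEST"
  hash_key     = "LockID"

  attribute {
    name = "LockID"
    type = "S"
  }
}
```

With this in place, a second terraform apply started while another is running reports that the state is locked instead of overwriting it.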

Scaling Strategies for Terraform State: As your infrastructure grows, managing the state efficiently becomes even more critical. Here are two strategies to scale your Terraform state management:

Workspace Separation: Workspaces allow you to manage multiple instances of the same infrastructure in isolation. You can create separate workspaces for different environments, such as development, staging, and production. Each workspace maintains its own state, allowing for independent changes and deployments. This approach helps in scaling by separating the state and reducing the risk of conflicts when working with different environments.
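As a minimal sketch of workspace-aware configuration, the snippet below uses the built-in terraform.workspace value to vary settings per environment. The workspace names, instance sizes, and the ami_id variable are illustrative assumptions.

```hcl
# Hypothetical input: the AMI to launch, supplied by the caller.
variable "ami_id" {
  type = string
}

locals {
  # Illustrative per-workspace sizing; adjust names and values to your setup.
  instance_type_by_workspace = {
    default    = "t3.micro"
    staging    = "t3.small"
    production = "t3.large"
  }

  # Fall back to the smallest size for any workspace not listed above.
  instance_type = lookup(local.instance_type_by_workspace, terraform.workspace, "t3.micro")
}

resource "aws_instance" "app" {
  ami           = var.ami_id
  instance_type = local.instance_type

  tags = {
    Name        = "app-${terraform.workspace}"
    Environment = terraform.workspace
  }
}
```

A workspace is created with terraform workspace new staging and selected with terraform workspace select staging; each one then keeps its own state in the configured backend.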

Modularization and Backend Selection: When managing a large-scale infrastructure, modularization plays a vital role. Splitting your Terraform configuration into smaller modules improves organization and reuse. Note that modules called from one root configuration still share a single state; to get smaller state files, split the infrastructure into separate root configurations (for example, networking and application), each with its own state. Additionally, choosing an appropriate backend matters as the team and the infrastructure grow. Remote backends like Amazon S3 or HashiCorp Terraform Cloud offer shared access, durability, and locking that local state files cannot.
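When the state is split across configurations, one configuration often needs values from another. A common way to do this is the terraform_remote_state data source, sketched below. The bucket, key, the private_subnet_id output, and the ami_id variable are all hypothetical; the sketch assumes a separate networking configuration that stores its state at that key and declares that output.

```hcl
# Hypothetical input: the AMI to launch, supplied by the caller.
variable "ami_id" {
  type = string
}

# Read the outputs of a separately managed "network" configuration.
# Bucket, key, and region are placeholders and must match that
# configuration's backend settings.
data "terraform_remote_state" "network" {
  backend = "s3"

  config = {
    bucket = "example-terraform-state"
    key    = "network/terraform.tfstate"
    region = "us-east-1"
  }
}

resource "aws_instance" "app" {
  ami           = var.ami_id
  instance_type = "t3.micro"

  # Assumes the network configuration declares an output named
  # "private_subnet_id".
  subnet_id = data.terraform_remote_state.network.outputs.private_subnet_id
}
```

Only root-level outputs of the other configuration are visible this way, which keeps the coupling between the two states explicit.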

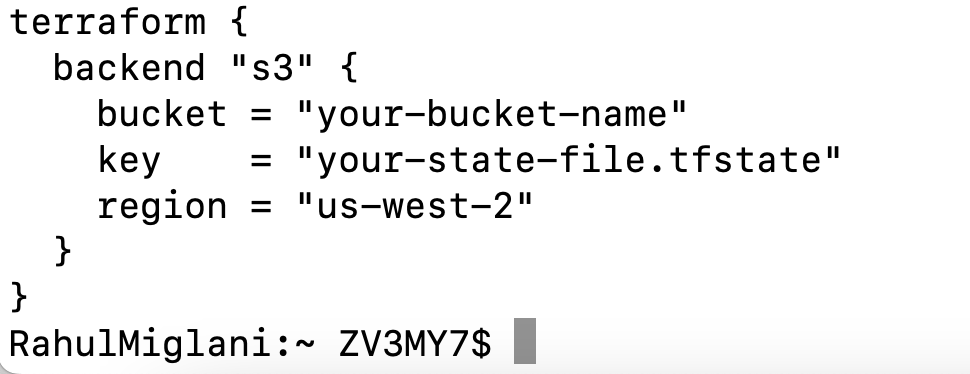

Example: Remote State with Amazon S3 Backend: Here’s an example Terraform code snippet demonstrating the configuration for using the Amazon S3 backend to store the state:
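A minimal sketch of such a configuration is shown here; the bucket name, key, and region are placeholder values to replace with your own, and the bucket is assumed to exist already.

```hcl
terraform {
  backend "s3" {
    bucket = "example-terraform-state" # hypothetical bucket name
    key    = "terraform.tfstate"       # state file name (object key) in the bucket
    region = "us-east-1"               # AWS region of the bucket
  }
}
```

After adding or changing a backend block, run terraform init so Terraform can configure the backend and offer to migrate any existing local state.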

In the code snippet above, we configure Terraform to use the Amazon S3 backend by specifying the bucket, key (state file name), and the AWS region. This setup allows for remote state storage and collaboration among team members.

Conclusion: Effective management of Terraform state is crucial for collaboration and scaling of infrastructure-as-code projects. By implementing collaboration strategies, such as using a remote backend or a locking mechanism, teams can work together seamlessly on Terraform projects, ensuring consistent state management and avoiding conflicts.

Scaling strategies, such as workspace separation and modularization, help address the challenges of managing larger and more complex infrastructures. By leveraging multiple workspaces and breaking down configurations into smaller modules, you can achieve better organization and scalability. Additionally, selecting an appropriate backend, like Amazon S3 or Terraform Cloud, gives you shared, durable state that keeps working as your team and infrastructure grow.

Remember, when managing Terraform state, it’s essential to follow best practices for security and access control. State files can contain sensitive values, such as passwords, in plain text. Restricting access to the state files, enabling encryption, and applying identity and access management (IAM) policies are crucial to maintaining the integrity and confidentiality of your infrastructure.
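For an S3-based setup, these practices might translate into something like the sketch below. It assumes AWS provider version 4 or later (where these settings are separate resources) and a hypothetical bucket name. Versioning is an extra not mentioned above, included because it makes it possible to recover an earlier state file.

```hcl
# Bucket that holds the state; the name is a placeholder.
resource "aws_s3_bucket" "terraform_state" {
  bucket = "example-terraform-state"
}

# Keep previous versions of the state object for recovery.
resource "aws_s3_bucket_versioning" "terraform_state" {
  bucket = aws_s3_bucket.terraform_state.id

  versioning_configuration {
    status = "Enabled"
  }
}

# Encrypt objects at rest by default.
resource "aws_s3_bucket_server_side_encryption_configuration" "terraform_state" {
  bucket = aws_s3_bucket.terraform_state.id

  rule {
    apply_server_side_encryption_by_default {
      sse_algorithm = "aws:kms"
    }
  }
}

# Block every form of public access to the bucket.
resource "aws_s3_bucket_public_access_block" "terraform_state" {
  bucket                  = aws_s3_bucket.terraform_state.id
  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}
```

IAM policies that limit which users and roles can read or write the bucket would sit alongside this and depend on how your accounts are organized.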

In summary, managing Terraform state is a critical aspect of infrastructure-as-code projects. Collaboration and scaling strategies reduce conflicts and keep larger, more complex infrastructures manageable, while best practices and appropriate tooling make state management reliable and efficient.

Implement these strategies in your Terraform projects, and you’ll be well on your way to successful collaboration and scaling in your infrastructure-as-code journey.

Happy state management with Terraform!