A microservices application is built as a collection of small, loosely coupled, independently deployable services called microservices. Each microservice is a self-contained component that can be developed, deployed, and managed independently of the others.

After more than three years of testing microservice projects, I would like to share what I have learned. As with any other kind of application, a microservices application also needs non-functional testing, such as performance and security testing.

In this post, I focus on functional testing only. The levels of the test pyramid for a microservices application are described below.

Unit Testing

In a monolithic architecture, a single application contains many features. A microservices architecture takes one feature, implements it as a service, and lets that service fulfil that one purpose. Because each microservice covers a single business function, it is relatively easy to unit test.

We focus on testing the functionality and behavior of individual microservices in isolation. Each microservice should have its own comprehensive unit tests to verify its functionality, handle edge cases, and validate inputs and outputs. Mocking frameworks can be used to simulate dependencies and interactions with other microservices.
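As a rough illustration of unit testing a service in isolation, the sketch below uses Python's standard `unittest` and `unittest.mock`. The `OrderService` class, its `pricing_client` dependency, and the `get_price` method are hypothetical names invented for this example; they stand in for whatever business logic and downstream client a real service has.

```python
import unittest
from unittest.mock import Mock


class OrderService:
    """Hypothetical service logic that depends on another microservice's client."""

    def __init__(self, pricing_client):
        self.pricing_client = pricing_client

    def total(self, sku, quantity):
        # Validate input before calling the dependency.
        if quantity <= 0:
            raise ValueError("quantity must be positive")
        return self.pricing_client.get_price(sku) * quantity


class OrderServiceTest(unittest.TestCase):
    def test_total_uses_price_from_dependency(self):
        # The pricing microservice is replaced by a mock.
        pricing = Mock()
        pricing.get_price.return_value = 5
        service = OrderService(pricing)

        self.assertEqual(service.total("sku-1", 3), 15)
        pricing.get_price.assert_called_once_with("sku-1")

    def test_rejects_non_positive_quantity(self):
        # Edge case: invalid input never reaches the dependency.
        pricing = Mock()
        service = OrderService(pricing)

        with self.assertRaises(ValueError):
            service.total("sku-1", 0)
        pricing.get_price.assert_not_called()
```

Saved to a file, the tests can be run with `python -m unittest`. The mock keeps the test fast and independent of whether the real pricing service is available.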

Component Testing

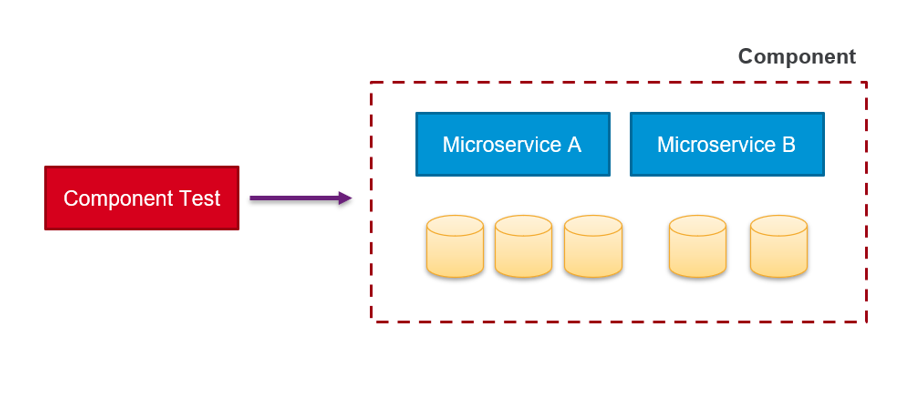

Component and end-to-end testing may look similar. The difference is that an end-to-end test exercises the complete system (all the microservices) in a production-like environment, whereas a component test exercises an isolated piece of that system. Both check the behavior of the system from the perspective of the user (or consumer), following the journeys a user would take.

A component is a microservice, or a set of microservices, that fulfils a role within the larger system. Component testing is a type of acceptance testing in which we examine the component's behavior in isolation, replacing the services outside its scope with mocks or simulated resources. Component tests are more thorough than integration tests because they cover both happy and unhappy paths, for instance how the component responds to simulated network outages or malformed requests. As in acceptance or end-to-end testing, we want to know whether the component meets the needs of its consumer. In effect, a component test is an end-to-end test applied to a single microservice or a small group of them.

A component test can run in the same process as the microservice. The test injects a mocked service into the adapter to simulate interactions with other components.
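A minimal sketch of an in-process component test might look like the following. The `CheckoutComponent`, its inventory adapter, the `is_in_stock` method, and the status codes are all assumptions made for illustration; the point is only that the adapter is injected, so the test can cover a happy path, a simulated outage, and a malformed request.

```python
from unittest.mock import Mock


class InventoryUnavailable(Exception):
    """Raised by the (hypothetical) adapter when the inventory service is unreachable."""


class CheckoutComponent:
    """Hypothetical component that talks to inventory through an injected adapter."""

    def __init__(self, inventory_adapter):
        self.inventory = inventory_adapter

    def handle(self, request):
        # Malformed requests are rejected before any downstream call is made.
        if not isinstance(request, dict) or "sku" not in request:
            return {"status": 400, "error": "malformed request"}
        try:
            in_stock = self.inventory.is_in_stock(request["sku"])
        except InventoryUnavailable:
            return {"status": 503, "error": "inventory unavailable"}
        if not in_stock:
            return {"status": 409, "error": "out of stock"}
        return {"status": 200, "result": "reserved"}


def test_happy_path():
    adapter = Mock()
    adapter.is_in_stock.return_value = True
    assert CheckoutComponent(adapter).handle({"sku": "sku-1"})["status"] == 200


def test_simulated_outage():
    # The mocked adapter raises, as a real adapter might during a network outage.
    adapter = Mock()
    adapter.is_in_stock.side_effect = InventoryUnavailable()
    assert CheckoutComponent(adapter).handle({"sku": "sku-1"})["status"] == 503


def test_malformed_request():
    adapter = Mock()
    response = CheckoutComponent(adapter).handle({"item": "sku-1"})
    assert response["status"] == 400
    adapter.is_in_stock.assert_not_called()
```

The functions follow the naming convention that test runners such as pytest pick up, but they are plain functions and can also be called directly.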

Contract Testing

Contract testing is a technique for testing an integration point by checking each application in isolation to ensure the messages it sends or receives conform to a shared understanding that is documented in a “contract”. In other words, contract tests verify that microservices adhere to, and remain compatible with, the contracts or APIs they have agreed on.

In a microservices architecture, different services interact with each other through APIs or contracts. Contract testing ensures that these interfaces remain consistent and compatible across services. By validating that the contracts are adhered to, it prevents integration issues and ensures smooth communication between microservices.
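To make the idea concrete, here is a deliberately simplified sketch that does not use any contract-testing tool. The contract is assumed to be a plain mapping of field names to expected types, and `USER_CONTRACT`, `contract_violations`, and `provider_response` are hypothetical names. Dedicated tools cover far more (HTTP status, headers, versioning), so treat this only as an illustration of the principle.

```python
# Hypothetical contract shared by consumer and provider: field name -> expected type.
USER_CONTRACT = {"id": int, "email": str, "roles": list}


def contract_violations(payload, contract):
    """Return a list of violations; an empty list means the payload conforms."""
    violations = []
    for field, expected_type in contract.items():
        if field not in payload:
            violations.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            actual = type(payload[field]).__name__
            violations.append(
                f"wrong type for {field}: expected {expected_type.__name__}, got {actual}"
            )
    return violations


def provider_response():
    """Stand-in for the output of the provider's real handler."""
    # Extra fields are allowed: the contract lists only what consumers rely on.
    return {"id": 7, "email": "a@example.com", "roles": ["admin"], "nickname": "al"}


def test_provider_honours_contract():
    assert contract_violations(provider_response(), USER_CONTRACT) == []
```

The provider runs this check against its own responses, and the consumer runs its tests against stubs built from the same contract, so each side is verified in isolation.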

Integration Testing

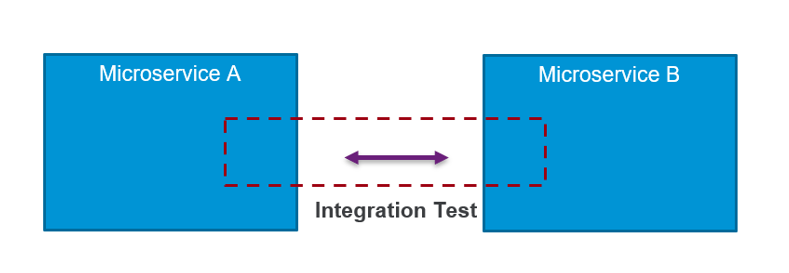

Integration tests on microservices work slightly differently than in other architectures. The goal is to identify interface defects by making microservices interact. Integration tests use real services.

Integration tests are not meant to evaluate the behavior or business logic of a service. Instead, we want to make sure that the microservices can communicate with one another and with their own databases. We are looking for problems such as missing HTTP headers and mismatched request/response pairs. As a result, integration tests are typically implemented at the interface level.

Integration testing exercises the interaction between modules: it checks that a microservice works with the other real microservices involved.

It helps find problems in how modules interact and confirms that modules and their outputs fit what the project needs.

The trade-off is that a more complete test environment is more complex, which pushes testing later and delays feedback to developers. Larger integration tests can also make it harder to find the root cause of a defect.

Use integration tests to check that microservices can communicate with other services, databases, and third-party endpoints.
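The sketch below shows an interface-level check made over real HTTP rather than through a mock. A tiny server from Python's standard library stands in for a real microservice; the `/health` route, the `HealthHandler` class, and the JSON body are assumptions for the example. In a real project the test would target an actual deployed service instead.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class HealthHandler(BaseHTTPRequestHandler):
    """Stand-in for a real microservice endpoint (hypothetical /health route)."""

    def do_GET(self):
        body = json.dumps({"status": "ok"}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep test output quiet


def call_health_endpoint():
    """Start the service on a free local port, call it over HTTP, return what came back."""
    server = HTTPServer(("127.0.0.1", 0), HealthHandler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        url = f"http://127.0.0.1:{server.server_port}/health"
        # Bypass any proxy configured in the environment for this local call.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        with opener.open(url, timeout=5) as response:
            content_type = response.headers.get("Content-Type")
            return response.status, content_type, json.loads(response.read())
    finally:
        server.shutdown()
        server.server_close()


def test_service_speaks_the_expected_interface():
    status, content_type, body = call_health_endpoint()
    assert status == 200
    assert content_type == "application/json"  # catches a missing or wrong header
    assert body == {"status": "ok"}
```

The assertions target the interface only (status code, headers, response shape), not the business logic behind it.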

End-to-end Testing

An end-to-end test verifies the functionality of the entire microservice system, including multiple microservices and their interactions, external dependencies, and user workflows. It ensures that the system works as expected and delivers the desired business value. We should test complete transactions and verify that all microservices work together. In my opinion, the application should run in an environment as close as possible to production. Ideally, the test environment would include all the third-party services that the application normally needs.

Continuous Testing

You will not find this kind of testing in the pyramid, but it is important when testing microservices. We start manual functional testing at the beginning of the project and add automated tests for features and services once they are stable enough. Automated tests can be applied at the different test levels and integrated into the continuous integration/continuous deployment (CI/CD) pipeline to ensure ongoing quality assurance.

Tips for Testing Microservice Applications

Each microservice operates independently and provides a specific functionality or business capability, and it may serve many applications. We should therefore treat each service as a small application and carry out both functional and non-functional testing on it.

We all know the importance of logs. They’re usually the first place to check when issues occur. Finding issues in a microservices architecture is a bit more complicated. Services work together to perform a specific function, but each service can be thought of as its own separate system. It is important to implement monitoring and observability mechanisms to gather metrics, logs, and traces from microservices. Monitor service health, performance, and logs in real-time to identify issues, detect anomalies, and troubleshoot problems effectively.
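One common way to make logs from several services easier to tie together is to emit them in a structured format and attach a shared request identifier. The sketch below uses only Python's standard `logging` module. The field names (`service`, `request_id`) and the helpers `build_logger` and `log_event` are assumptions for illustration, not a prescribed format.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as one JSON object per line."""

    def format(self, record):
        return json.dumps({
            "service": getattr(record, "service", "unknown"),
            "request_id": getattr(record, "request_id", None),
            "level": record.levelname,
            "message": record.getMessage(),
        })


def build_logger(service_name, stream):
    """Create a logger for one service that writes JSON lines to the given stream."""
    logger = logging.getLogger(f"sketch.{service_name}")
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


def log_event(logger, service, request_id, message):
    # The same request_id is passed along by every service handling the request.
    logger.info(message, extra={"service": service, "request_id": request_id})


# Usage: two services log the same request; filtering on request_id links them.
stream = io.StringIO()
log_event(build_logger("orders", stream), "orders", "req-42", "order created")
log_event(build_logger("billing", stream), "billing", "req-42", "invoice issued")
entries = [json.loads(line) for line in stream.getvalue().splitlines()]
```

With every entry carrying the same `request_id`, a log aggregator (or a simple filter) can reconstruct the path of one request across services.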

Each microservice should have its own isolated test data to avoid interference or dependencies between different services. This ensures that tests for one microservice do not impact the data or behavior of other microservices. Utilize tools or libraries like FactoryBoy, Faker, or custom data generation scripts to generate realistic and diverse test data.
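As an example of the custom data generation script option, the sketch below uses only the standard library so that it runs without extra dependencies; libraries such as Faker produce more realistic values. The customer schema and the `make_customers` function are hypothetical. Seeding the generator with the service name keeps each service's data set separate and reproducible.

```python
import random
import string


def make_customers(service_name, count, seed=0):
    """Generate deterministic, service-scoped fake customers (hypothetical schema)."""
    # A per-service seed gives each service its own repeatable data set.
    rng = random.Random(f"{service_name}:{seed}")
    customers = []
    for index in range(count):
        name = "".join(rng.choice(string.ascii_lowercase) for _ in range(8))
        customers.append({
            # IDs are prefixed with the service name so data sets never collide.
            "id": f"{service_name}-{index}",
            "name": name,
            "email": f"{name}@example.test",
            "age": rng.randint(18, 90),
        })
    return customers


orders_customers = make_customers("orders", 3)
billing_customers = make_customers("billing", 3)
```

Because the data is deterministic, a failing test can be reproduced with the same records, and changing the `seed` argument yields a fresh but still repeatable set.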

References

- https://semaphoreci.com/blog/test-microservices/

- https://www.loggly.com/use-cases/log-management-in-microservices-architecture/

- https://microsoft.github.io/code-with-engineering-playbook/automated-testing/cdc-testing/